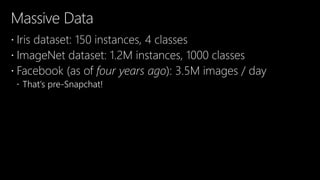

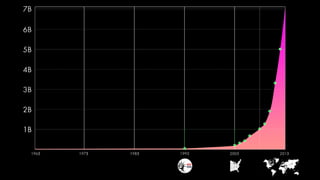

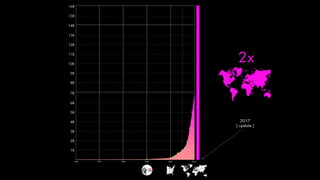

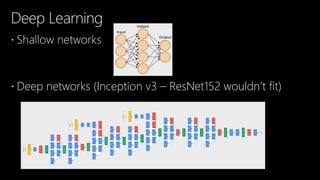

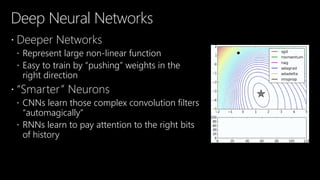

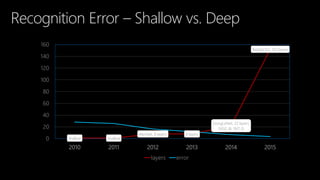

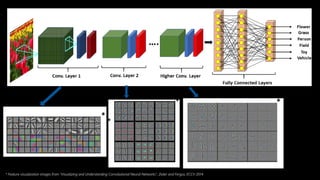

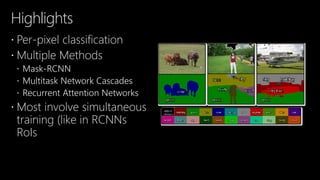

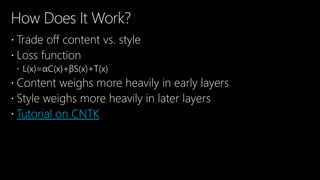

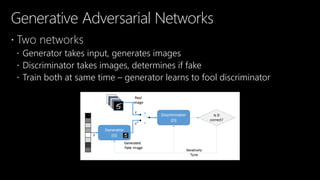

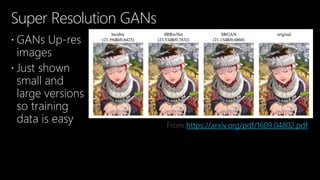

The document outlines the goals of a presentation on deep learning: to convey excitement about the subject and an understanding of it, rather than to teach programming techniques in detail. It covers significant advances in deep learning, including applications such as image recognition and image generation, and the evolution of deep neural networks. It also surveys methodologies and tools, and considers possible future directions for the technology.

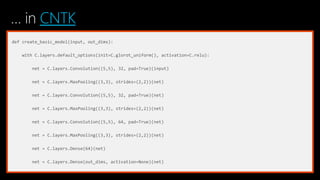

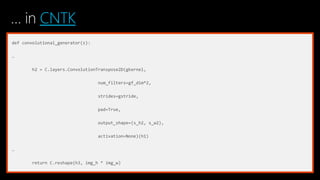

![…in CNTK](https://image.slidesharecdn.com/ai07-170602095346/85/AI07-Revolutionizing-Image-Processing-with-Cognitive-Toolkit-31-320.jpg)

    def create_model(model_uri, num_classes, input_features, feature_node_name, last_hidden_name, new_prediction_node_name='pr
        # Load the pretrained base model
        base_model = C.load_model(download_from_uri(model_uri))
        # Locate the input feature node and the last hidden node by name
        feature_node = C.logging.find_by_name(base_model, feature_node_name)
        last_node = C.logging.find_by_name(base_model, last_hidden_name)
        # Clone everything up to the last hidden node, replacing the original input with a placeholder
        cloned_layers = C.combine([last_node.owner]).clone(C.CloneMethod.clone,
                                                           {feature_node: C.placeholder(name='features')})
        # Shift the raw input by the constant 114 before it enters the cloned layers
        feat_norm = input_features - C.Constant(114)
        cloned_out = cloned_layers(feat_norm)
        # Attach a new dense prediction layer with one output per class
        return C.layers.Dense(num_classes, activation=None, name=new_prediction_node_name) (cloned_out)
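
The idea behind `create_model` above (reuse pretrained layers, attach a new prediction layer) can be sketched without CNTK. The following is a minimal pure-Python illustration, not the presenter's code: `pretrained_features`, `train_head`, and `predict` are hypothetical names, and the hand-written feature map merely stands in for the cloned network. It also assumes the frozen variant of transfer learning, in which only the new layer is trained. The slide itself passes `C.CloneMethod.clone`, which in CNTK copies the parameters as new learnable ones, so the cloned layers there would also be updated during training.

```python
import math

def pretrained_features(pixels):
    """Hypothetical stand-in for the cloned pretrained layers (never updated here)."""
    centred = [p - 114.0 for p in pixels]        # same constant shift as on the slide
    mean = sum(centred) / len(centred)
    spread = max(centred) - min(centred)
    return [mean / 128.0, spread / 255.0, 1.0]   # last entry acts as a bias input

def softmax(logits):
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def head_logits(weights, feats):
    return [sum(w * f for w, f in zip(row, feats)) for row in weights]

def train_head(samples, labels, num_classes, epochs=200, lr=0.5):
    """Train only the new dense layer with softmax cross-entropy and gradient descent."""
    feats = [pretrained_features(s) for s in samples]
    dim = len(feats[0])
    weights = [[0.0] * dim for _ in range(num_classes)]
    losses = []
    for _ in range(epochs):
        grads = [[0.0] * dim for _ in range(num_classes)]
        loss = 0.0
        for f, label in zip(feats, labels):
            probs = softmax(head_logits(weights, f))
            loss -= math.log(probs[label])
            for c in range(num_classes):
                err = probs[c] - (1.0 if c == label else 0.0)
                for j in range(dim):
                    grads[c][j] += err * f[j]
        for c in range(num_classes):
            for j in range(dim):
                weights[c][j] -= lr * grads[c][j] / len(feats)
        losses.append(loss / len(feats))
    return weights, losses

def predict(weights, pixels):
    logits = head_logits(weights, pretrained_features(pixels))
    return logits.index(max(logits))

# Toy data: class 0 = dark "images", class 1 = bright "images"
samples = [[10, 20, 15, 12], [30, 25, 20, 35], [200, 220, 210, 230], [180, 190, 240, 250]]
labels = [0, 0, 1, 1]
weights, losses = train_head(samples, labels, num_classes=2)
print(predict(weights, [5, 5, 5, 5]), predict(weights, [250, 250, 250, 250]))
```

Because the feature map is fixed, training reduces to fitting a small linear classifier, which is why this style of transfer learning can work with few labelled examples.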

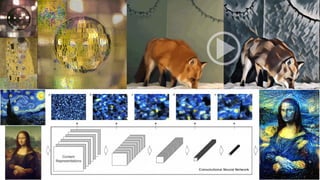

![…in CNTK](https://image.slidesharecdn.com/ai07-170602095346/85/AI07-Revolutionizing-Image-Processing-with-Cognitive-Toolkit-46-320.jpg)

    # The image being optimized; needs_gradient=True requests gradients with respect to the input
    y = C.input_variable((3, SIZE, SIZE), needs_gradient=True)
    # Network output plus the outputs of the selected intermediate layers
    z, intermediate_layers = model(y, layers)
    # Activations of the content image and of the style image
    content_activations = ordered_outputs(intermediate_layers, {y: [[content]]})
    style_activations = ordered_outputs(intermediate_layers, {y: [[style]]})
    style_output = np.squeeze(z.eval({y: [[style]]}))
    total = (1-decay**(n+1))/(1-decay) # makes sure that changing the decay does not affect the magnitude of content/style
    # Weighted content and style terms plus a total variation term
    loss = (1.0/total * content_weight * content_loss(y, content)
            + 1.0/total * style_weight * style_loss(z, style_output)
            + total_variation_loss(y))
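
The normalizer `total = (1-decay**(n+1))/(1-decay)` on the slide above is the closed form of the geometric series `1 + decay + ... + decay**n`. A small pure-Python check of that identity follows. It assumes, as the slide's comment suggests but the slide does not show, that further per-layer loss terms are weighted by successive powers of `decay`; the function names are illustrative only. Under that assumption, dividing each weight by `total` makes the weights sum to 1 for any `decay`, so changing `decay` shifts emphasis between layers without changing the overall scale of the content and style terms.

```python
def geometric_total(decay, n):
    """Closed form from the slide; valid for decay != 1."""
    return (1 - decay ** (n + 1)) / (1 - decay)

def explicit_total(decay, n):
    """The same quantity written out as a sum of n + 1 powers of decay."""
    return sum(decay ** i for i in range(n + 1))

def normalized_weights(decay, n):
    """Hypothetical per-term weights decay**i / total, for i = 0..n."""
    total = geometric_total(decay, n)
    return [decay ** i / total for i in range(n + 1)]

for decay in (0.5, 0.9):
    weights = normalized_weights(decay, 4)
    # The weights change shape with decay, but their sum stays at 1
    print(decay, [round(w, 3) for w in weights], round(sum(weights), 6))
```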

![[AI07] Revolutionizing Image Processing with Cognitive Toolkit](https://image.slidesharecdn.com/ai07-170602095346/85/AI07-Revolutionizing-Image-Processing-with-Cognitive-Toolkit-62-320.jpg)