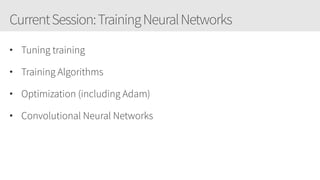

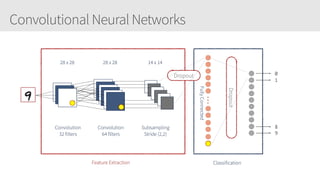

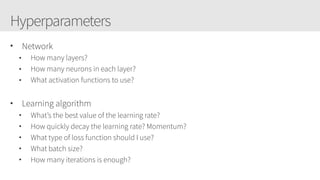

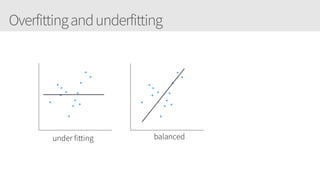

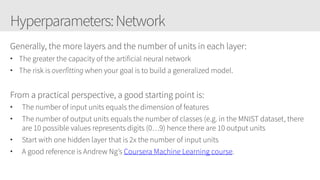

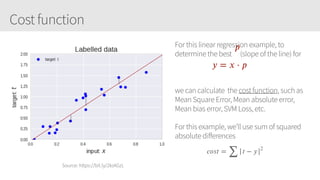

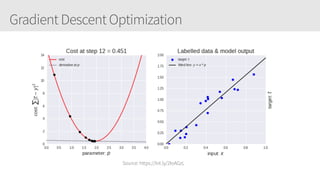

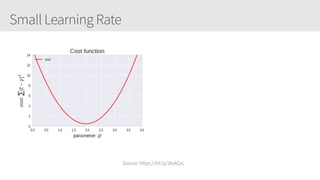

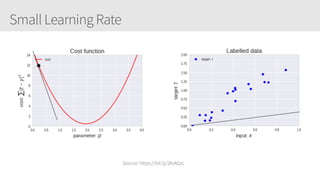

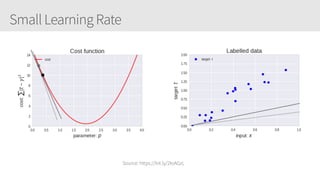

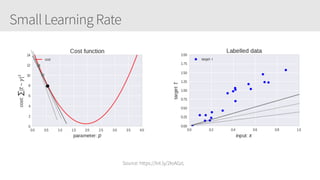

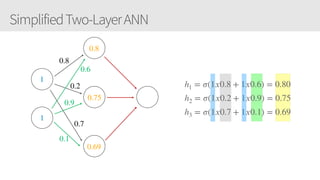

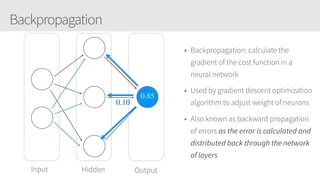

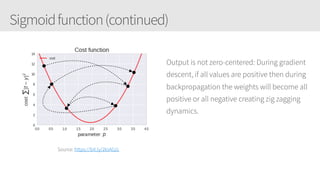

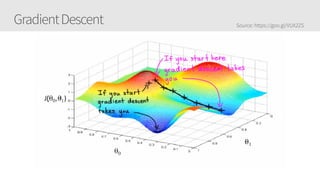

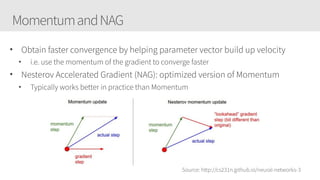

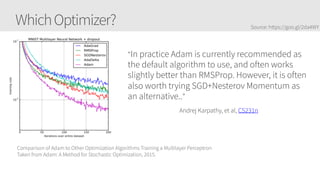

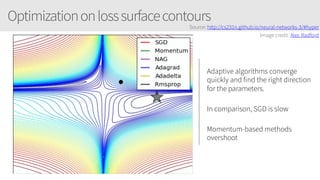

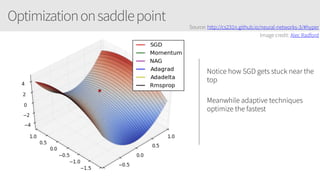

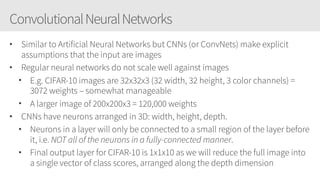

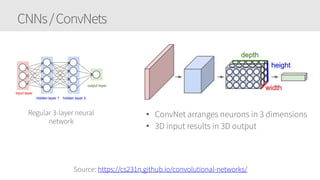

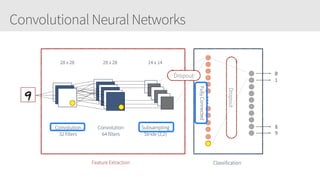

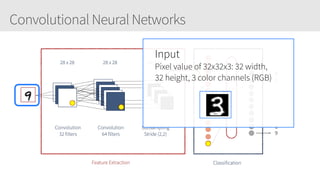

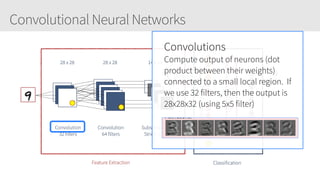

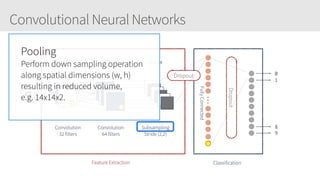

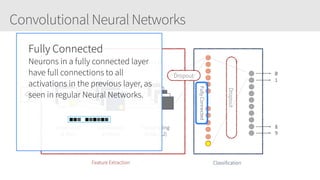

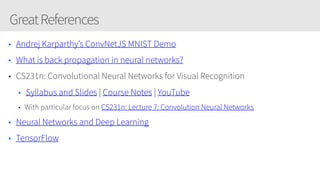

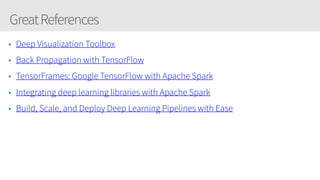

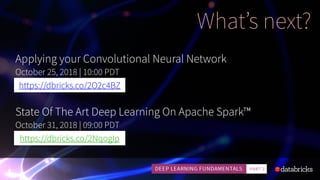

This document outlines a training series on neural networks, covering both core concepts and practical applications in Keras. It addresses tuning, optimization, and training algorithms, along with challenges such as overfitting and underfitting, and discusses the architecture and advantages of convolutional neural networks (CNNs). The content is aimed at readers who want to understand deep learning fundamentals and apply them in practice.