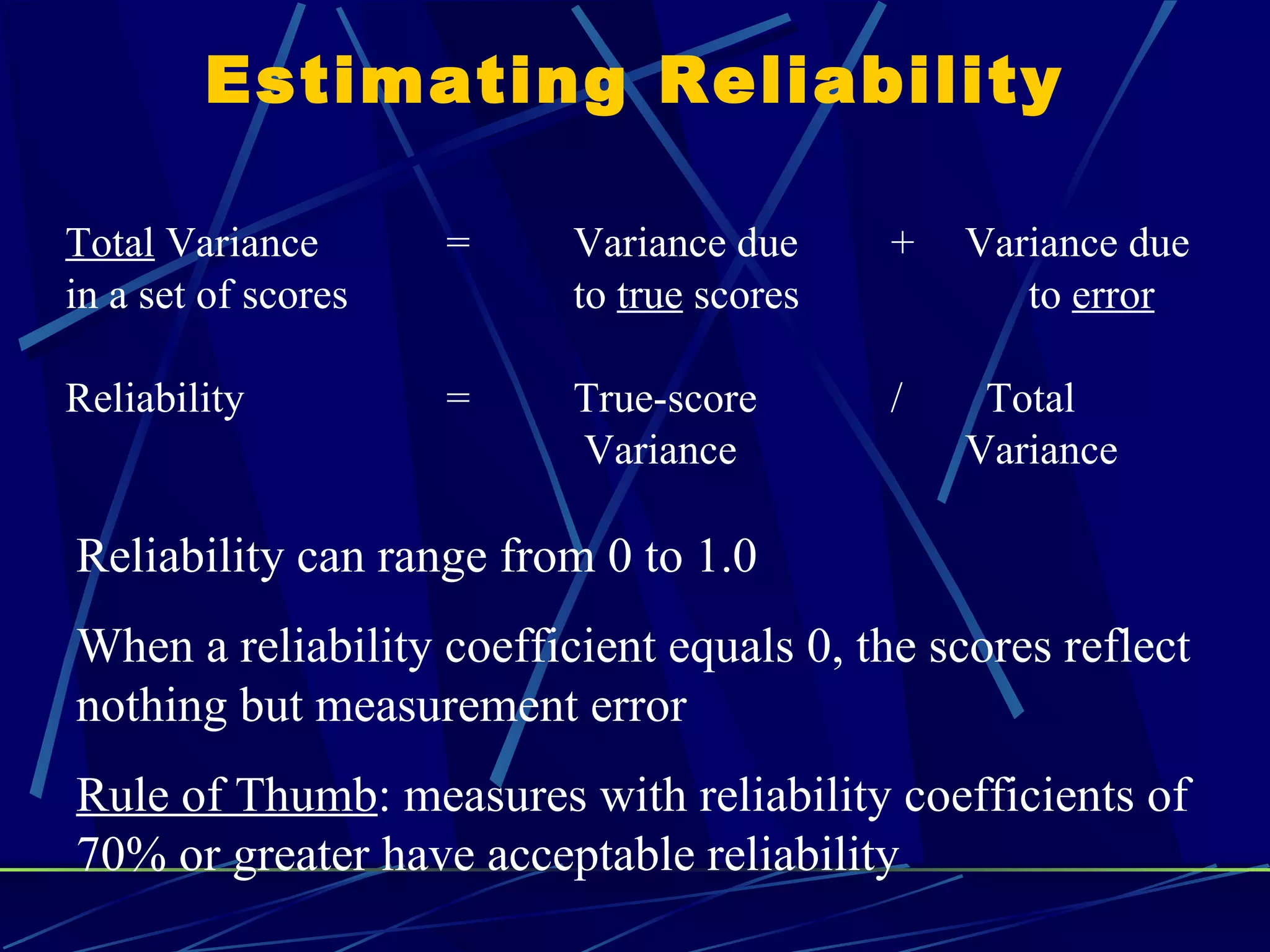

This document discusses the importance of reliability and validity in psychological measurement. Reliability refers to the consistency and repeatability of measurements. It is reduced by measurement error from factors such as a participant's mood or fatigue. Validity indicates how well a measure assesses the construct it is intended to assess. There are several types of validity, including face validity, construct validity, convergent validity, discriminant validity, and criterion-related validity. Reliability is necessary but not sufficient for validity, and it can be estimated with methods such as test-retest reliability, internal consistency reliability, and inter-rater reliability. Validity is evaluated by comparing a measure with measures of related and unrelated constructs to determine whether it measures what it is intended to measure.
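
Two of the reliability estimates named above can be sketched in a few lines. The following is a minimal illustration, not a method taken from the document: it assumes test-retest reliability is summarized as the Pearson correlation between two administrations of the same measure, and internal consistency as Cronbach's alpha. The function names and all scores are hypothetical and were invented for the example.

```python
from statistics import mean, variance


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / (ss_x * ss_y) ** 0.5


def cronbach_alpha(items):
    """Cronbach's alpha for a list of items.

    `items` holds one list per item; each inner list has one score
    per participant, in the same participant order.
    """
    k = len(items)
    sum_item_variances = sum(variance(item) for item in items)
    # Total score per participant: sum across items.
    totals = [sum(scores) for scores in zip(*items)]
    return (k / (k - 1)) * (1 - sum_item_variances / variance(totals))


# Hypothetical data: five participants measured on two occasions.
time1 = [10, 12, 14, 16, 18]
time2 = [11, 12, 15, 15, 19]
print("test-retest r:", round(pearson_r(time1, time2), 3))

# Hypothetical data: three questionnaire items, five participants.
items = [
    [3, 4, 2, 5, 4],
    [3, 5, 2, 4, 4],
    [2, 4, 3, 5, 5],
]
print("Cronbach's alpha:", round(cronbach_alpha(items), 3))
```

Values closer to 1 indicate more consistent measurement under either estimate. Inter-rater reliability would need a separate agreement statistic computed across raters, which this sketch does not cover.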