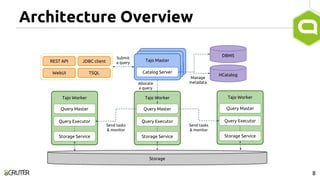

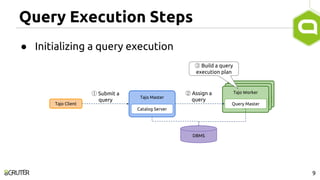

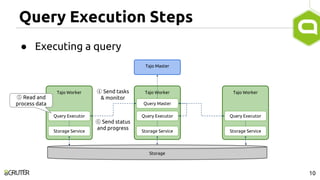

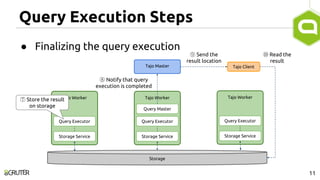

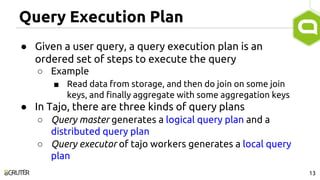

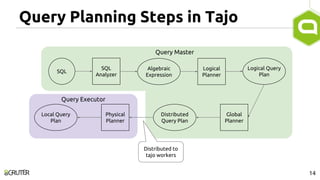

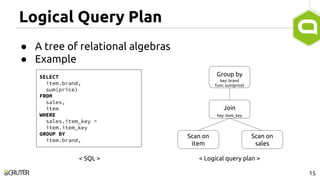

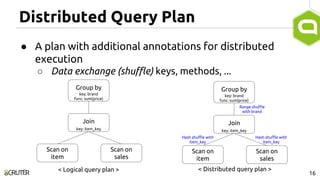

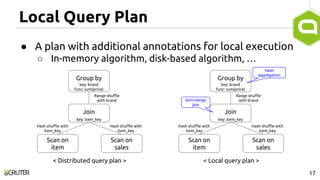

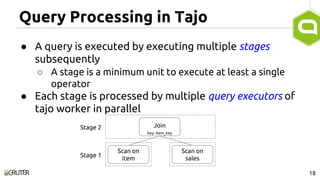

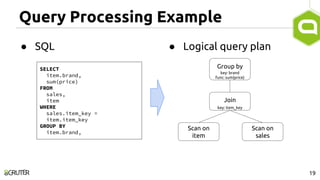

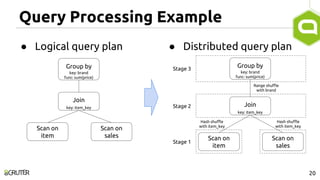

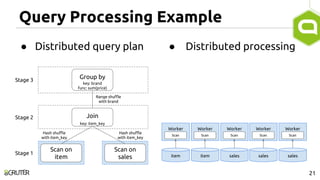

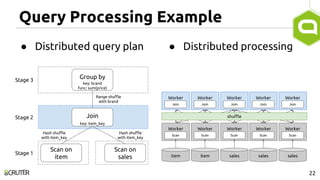

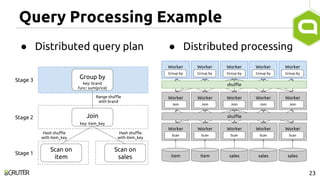

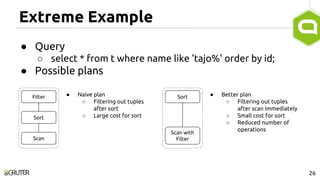

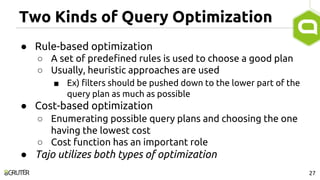

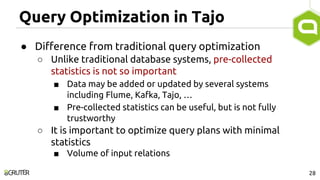

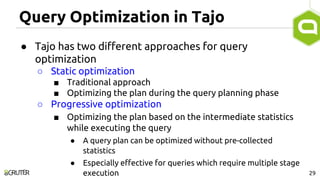

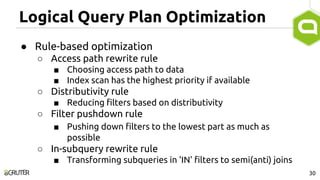

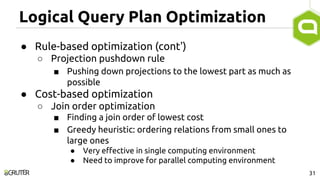

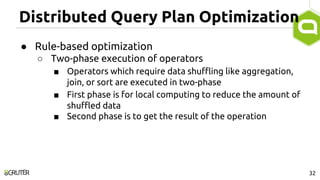

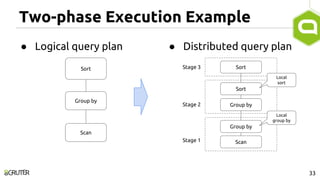

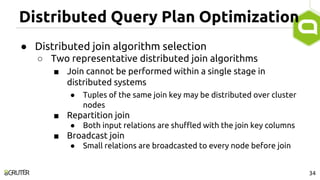

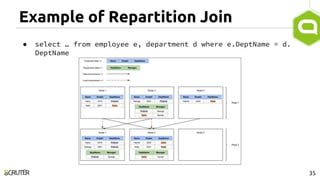

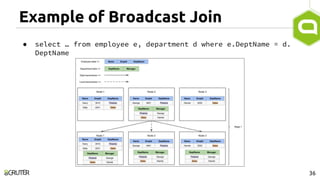

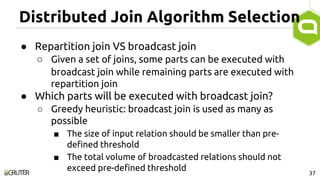

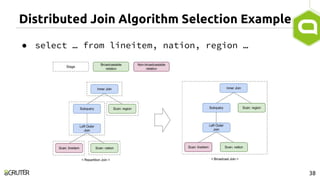

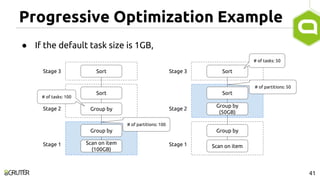

This document discusses query optimization in Apache Tajo. It describes how Tajo generates logical, distributed, and local query plans and optimizes them with rule-based and cost-based techniques. Key techniques include pushing filters down toward the scans, selecting join algorithms, and progressively adjusting data partitioning during query execution based on statistics gathered from intermediate results. The document also walks through examples of query planning and optimization in Tajo.
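
To make the filter push-down idea concrete, here is a minimal sketch of a rule-based rewrite on a toy logical plan. It is not Tajo's implementation or API: the `Scan`, `Filter`, and `Join` classes and the `push_down_filters` function are hypothetical names introduced only for illustration, and each filter is assumed to reference exactly one table.

```python
from dataclasses import dataclass
from typing import Union


# Hypothetical toy plan nodes; these are not Tajo classes.
@dataclass(frozen=True)
class Scan:
    table: str


@dataclass(frozen=True)
class Filter:
    table: str        # the single table the predicate refers to (assumption)
    predicate: str    # kept as opaque text in this sketch
    child: "Plan"


@dataclass(frozen=True)
class Join:
    left: "Plan"
    right: "Plan"


Plan = Union[Scan, Filter, Join]


def tables(plan: Plan) -> set:
    """Return the names of all tables scanned beneath a plan node."""
    if isinstance(plan, Scan):
        return {plan.table}
    if isinstance(plan, Filter):
        return tables(plan.child)
    return tables(plan.left) | tables(plan.right)


def push_down_filters(plan: Plan) -> Plan:
    """Move each single-table filter below any join it sits on top of."""
    if isinstance(plan, Scan):
        return plan
    if isinstance(plan, Join):
        return Join(push_down_filters(plan.left), push_down_filters(plan.right))

    # plan is a Filter: rewrite the subtree first, then try to sink the filter.
    child = push_down_filters(plan.child)
    if isinstance(child, Join):
        if plan.table in tables(child.left):
            pushed = Filter(plan.table, plan.predicate, child.left)
            return Join(push_down_filters(pushed), child.right)
        if plan.table in tables(child.right):
            pushed = Filter(plan.table, plan.predicate, child.right)
            return Join(child.left, push_down_filters(pushed))
    # Nothing to sink past; keep the filter where it is.
    return Filter(plan.table, plan.predicate, child)


# A filter on "orders" sitting above a join of "customers" and "orders".
original = Filter("orders", "orders.price > 100",
                  Join(Scan("customers"), Scan("orders")))
optimized = push_down_filters(original)
```

In this sketch the rewritten plan applies the predicate directly above the `orders` scan, so fewer rows reach the join. A real optimizer would also handle predicates spanning several tables, which this example deliberately leaves out.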