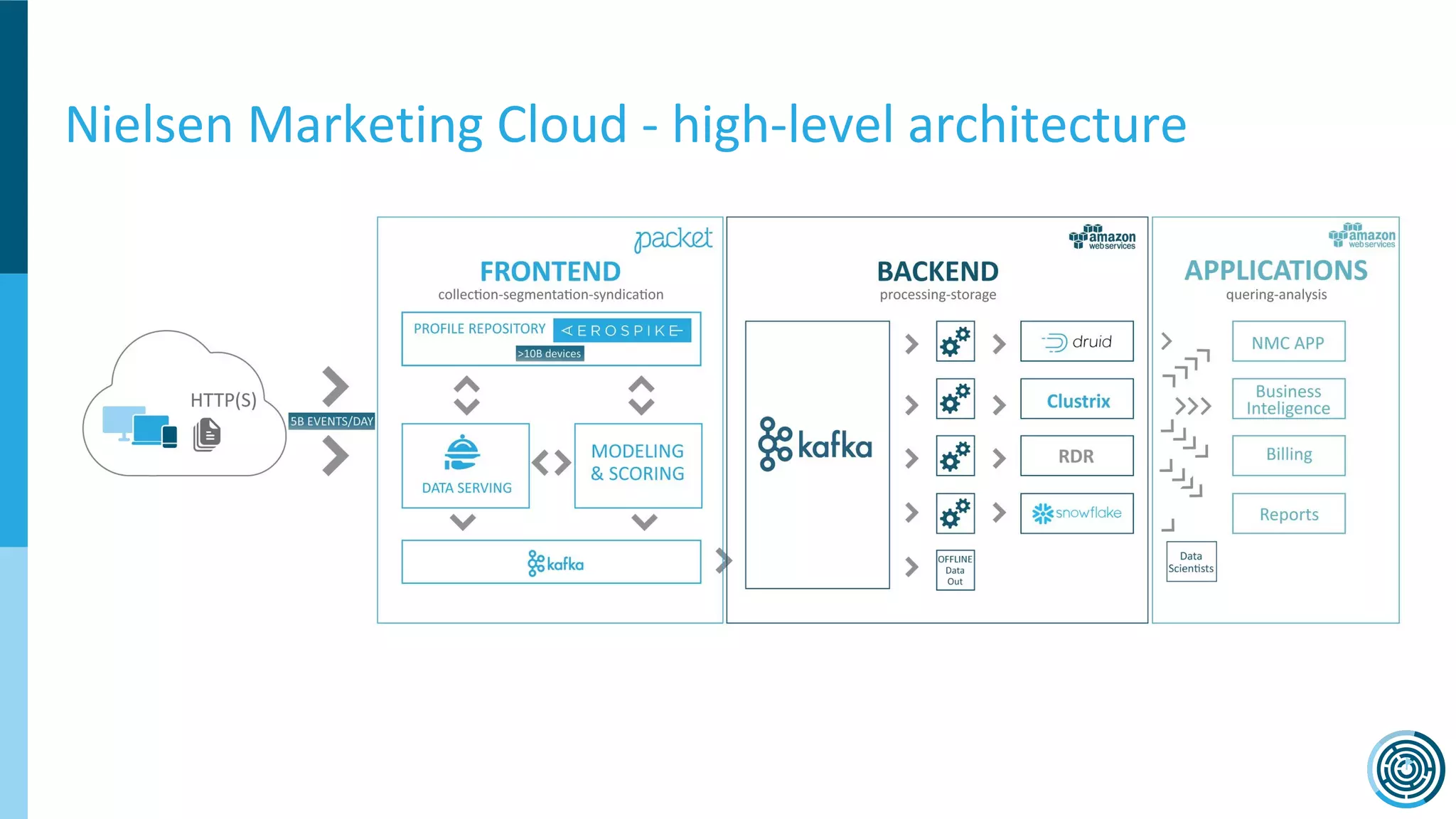

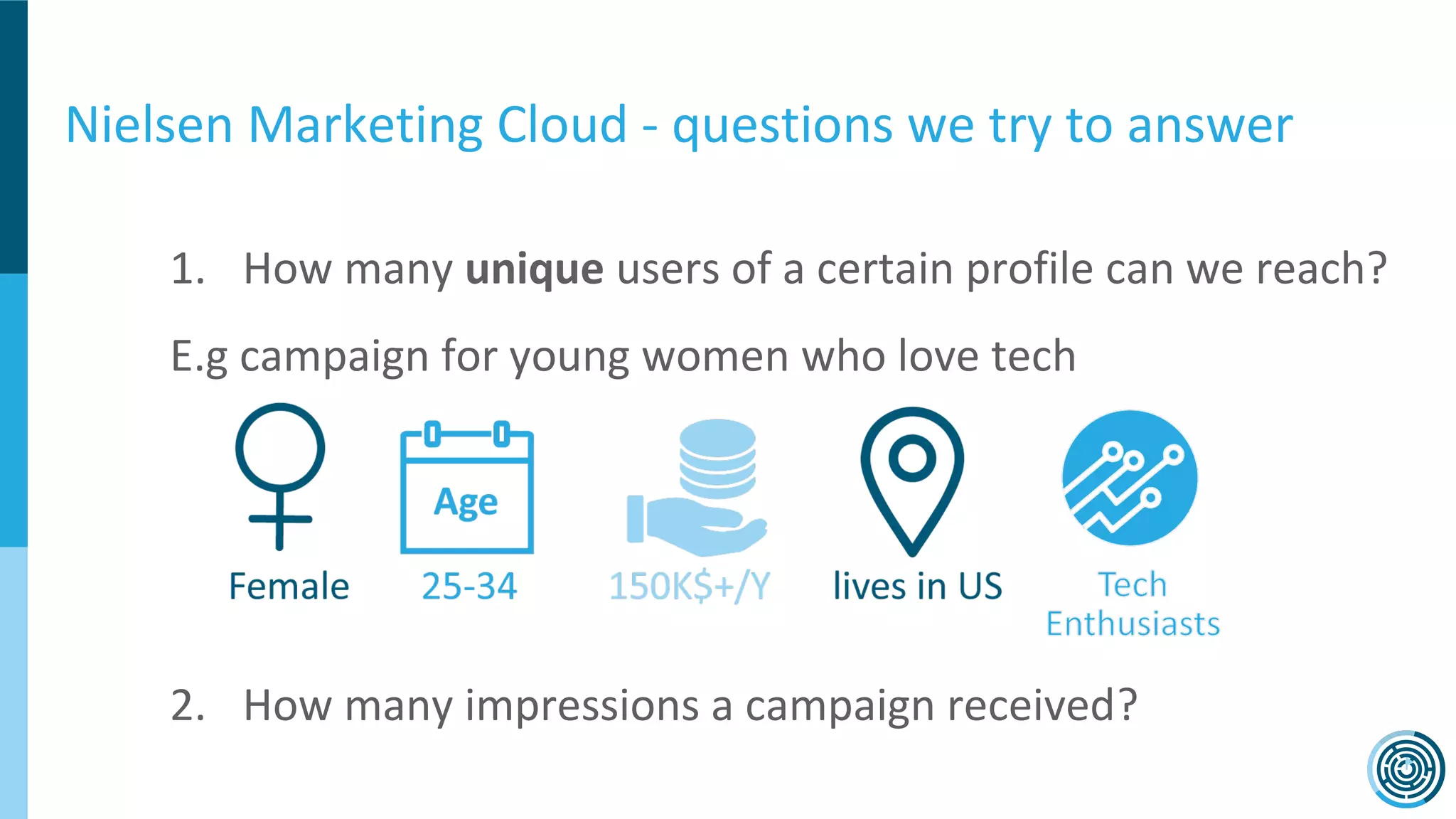

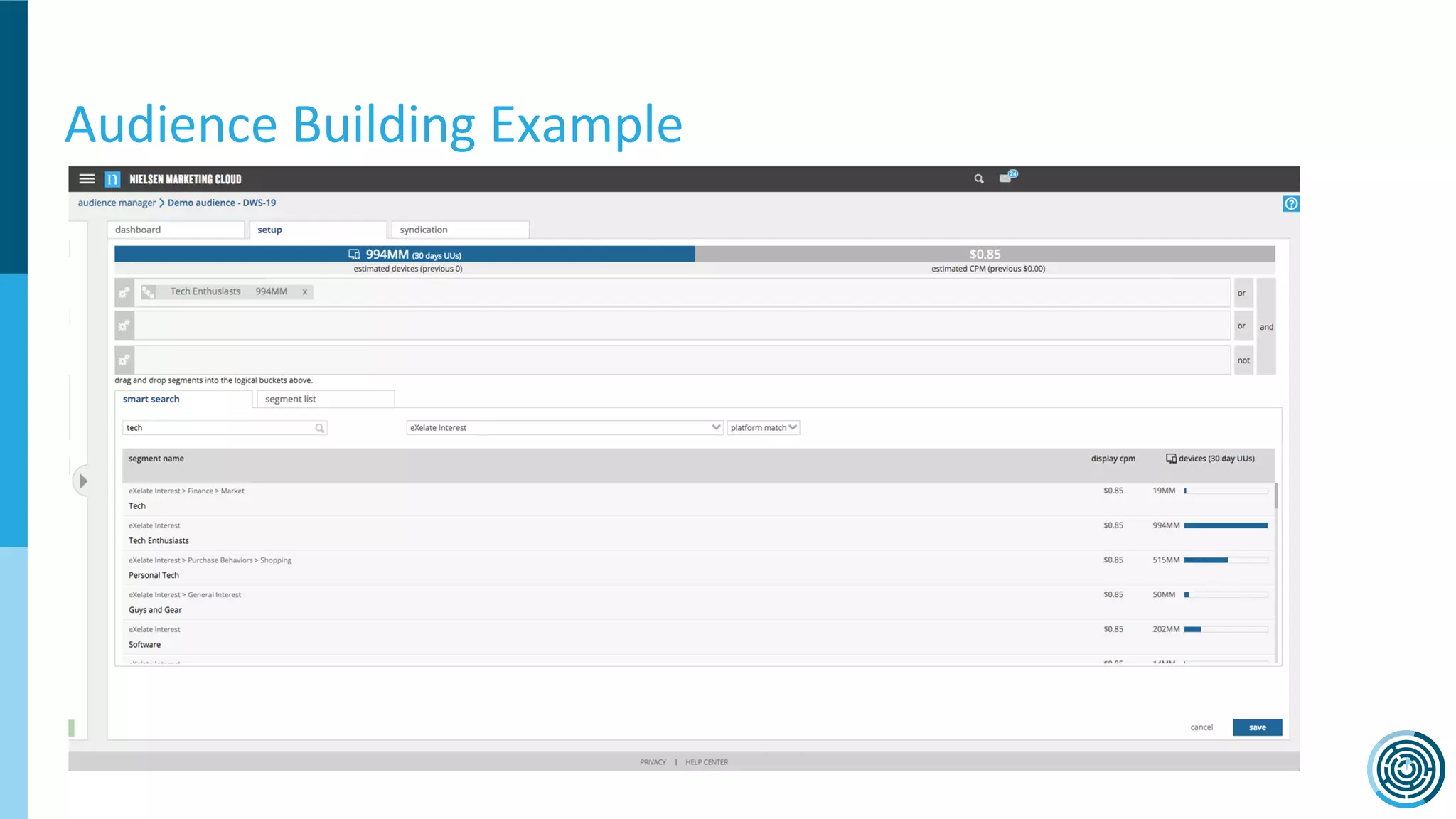

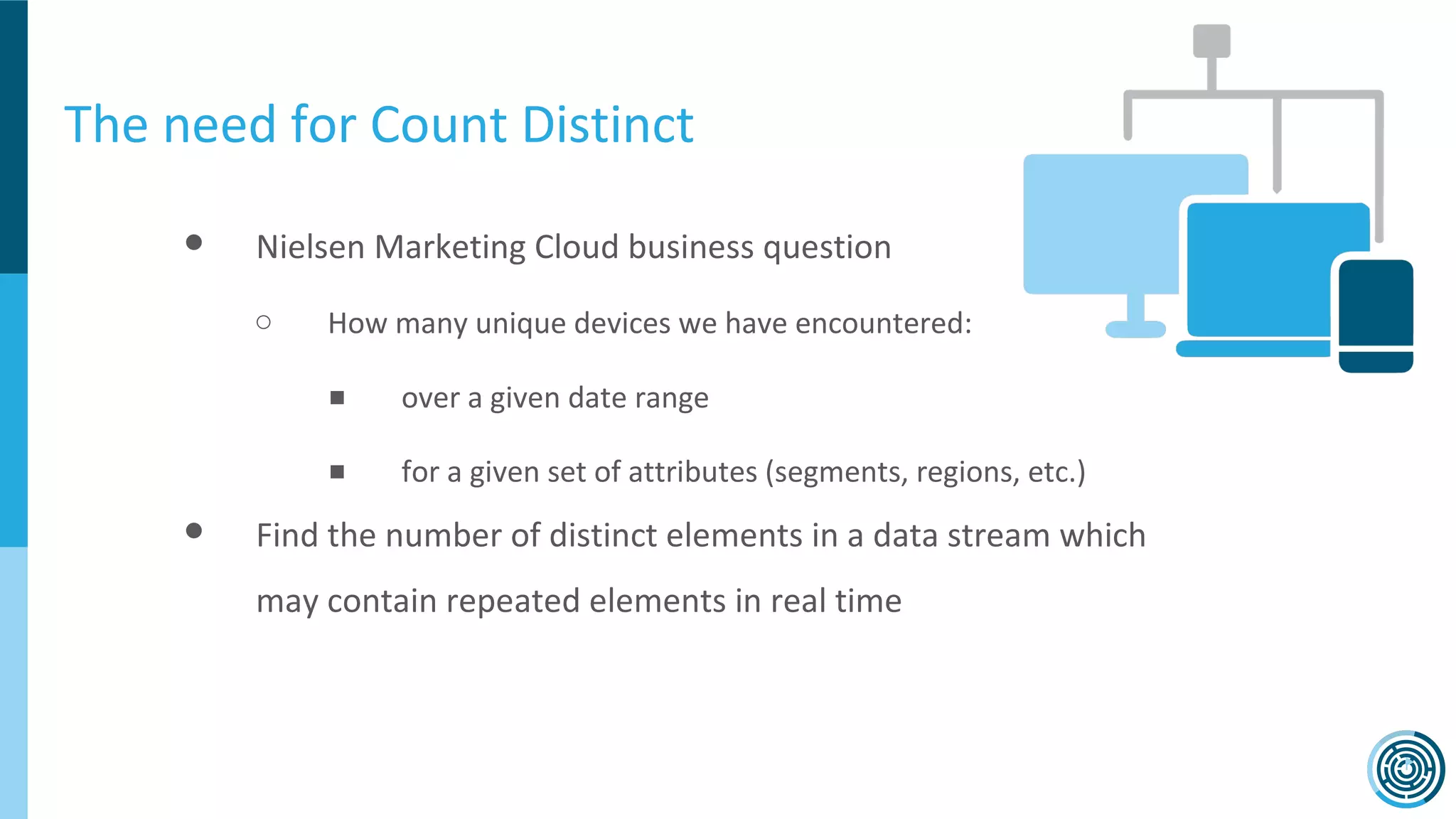

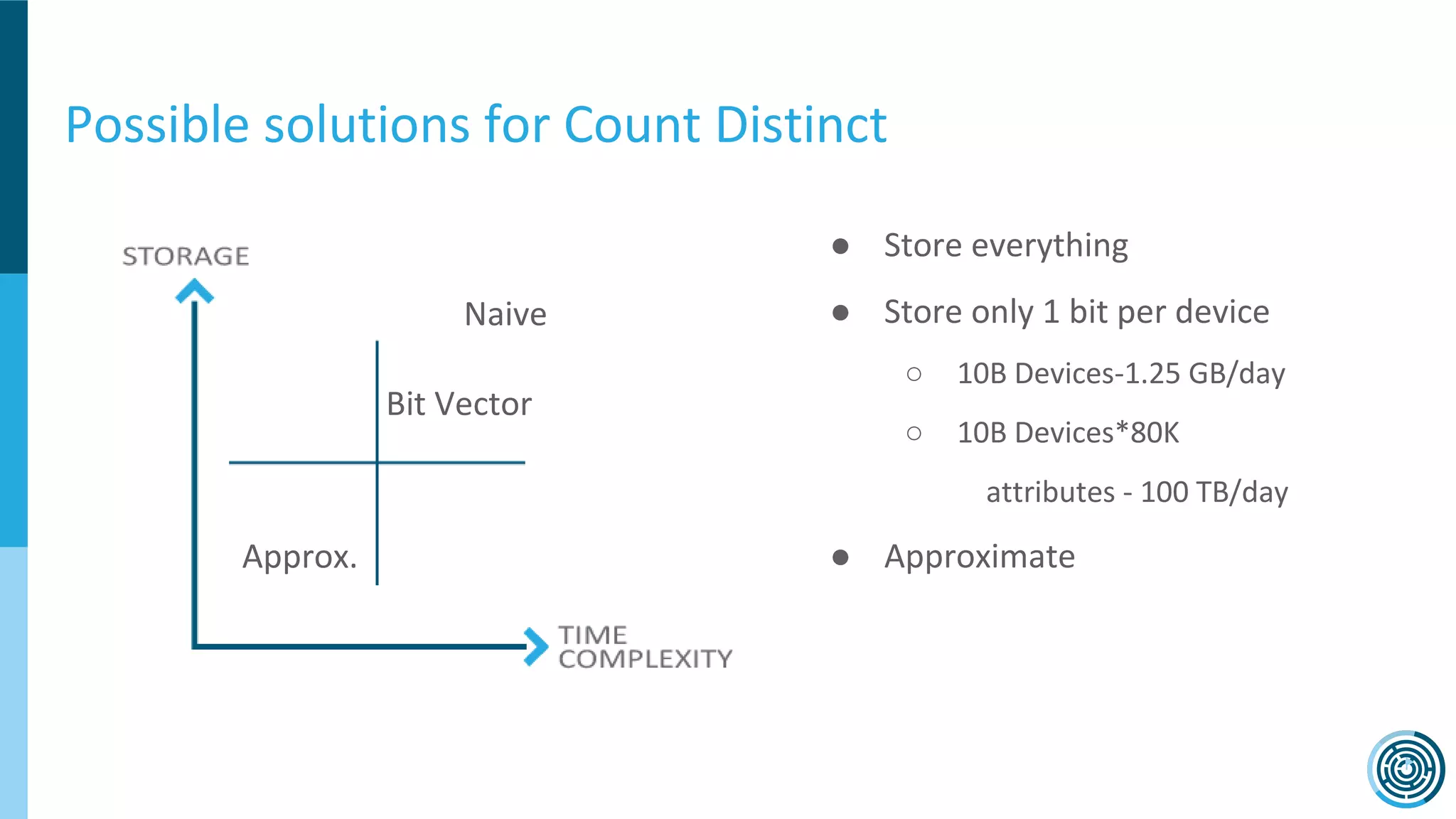

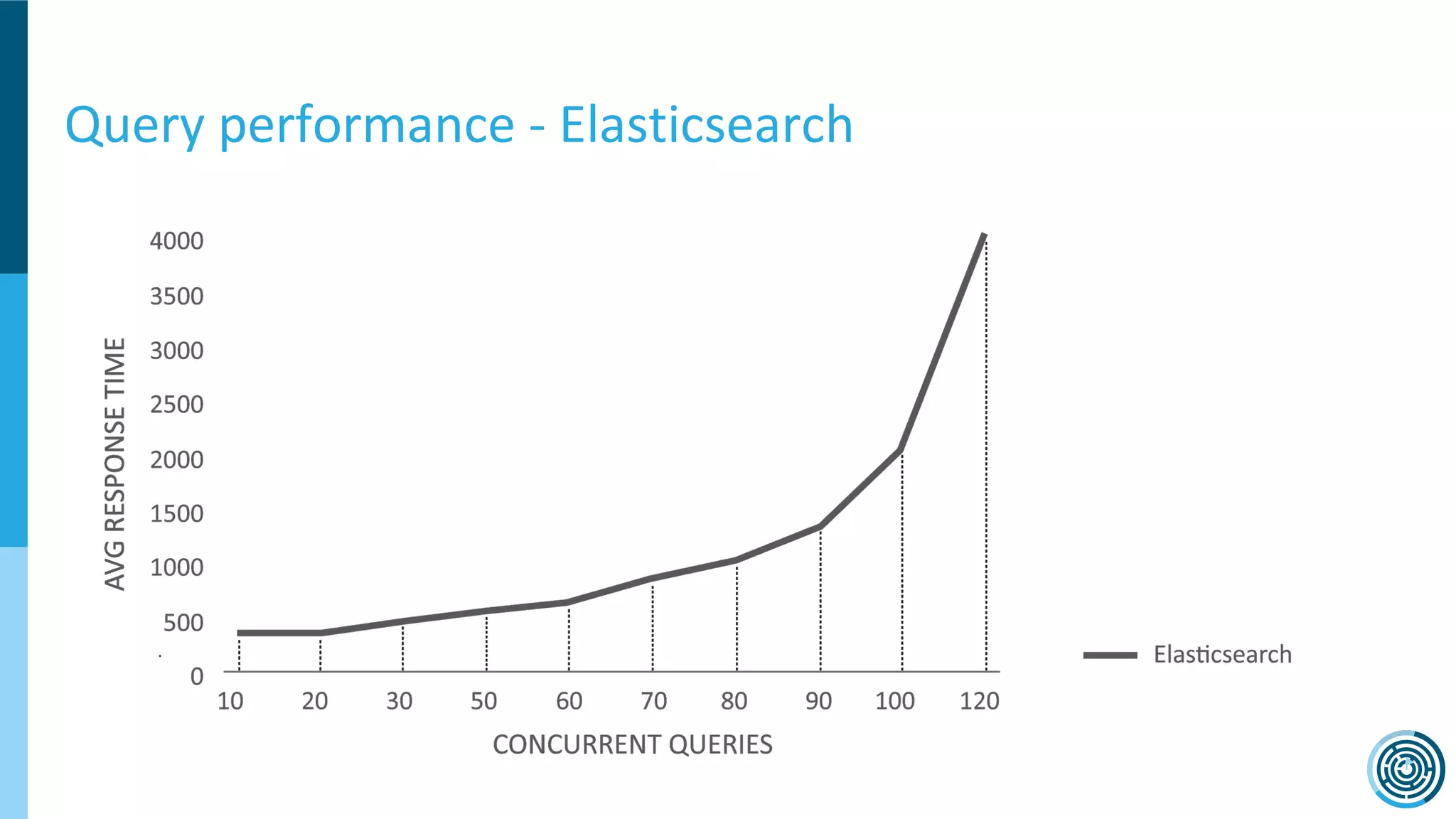

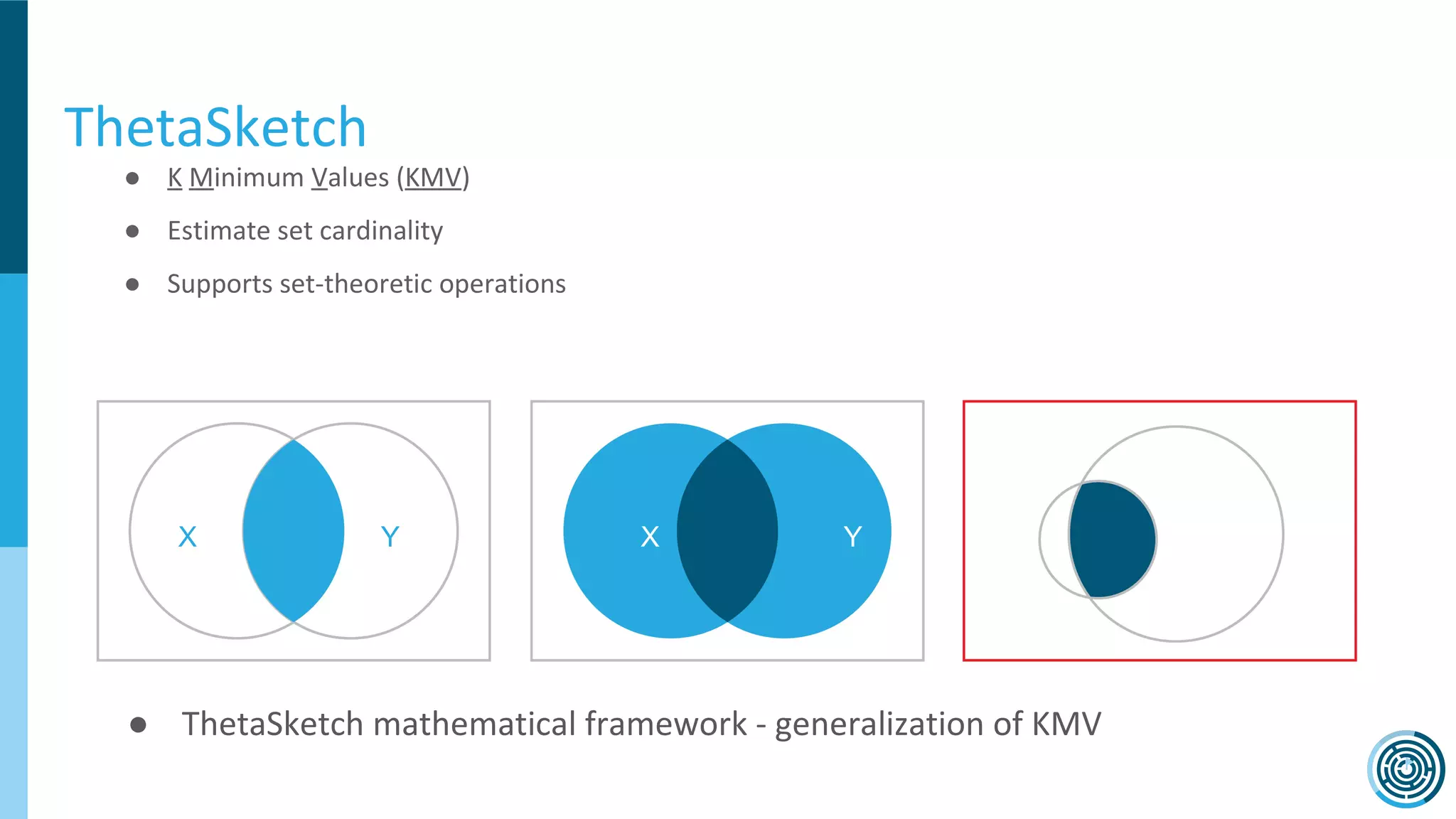

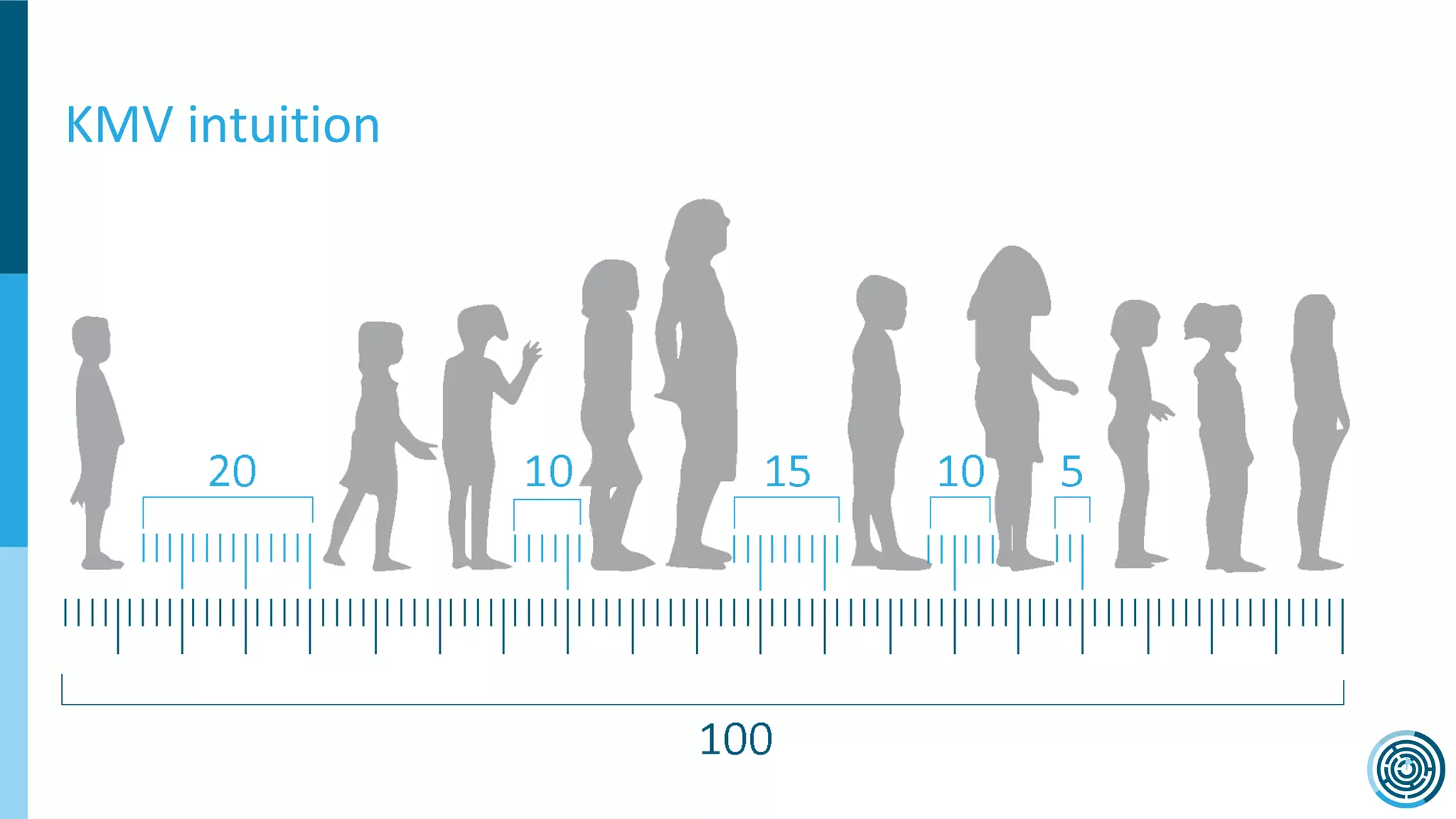

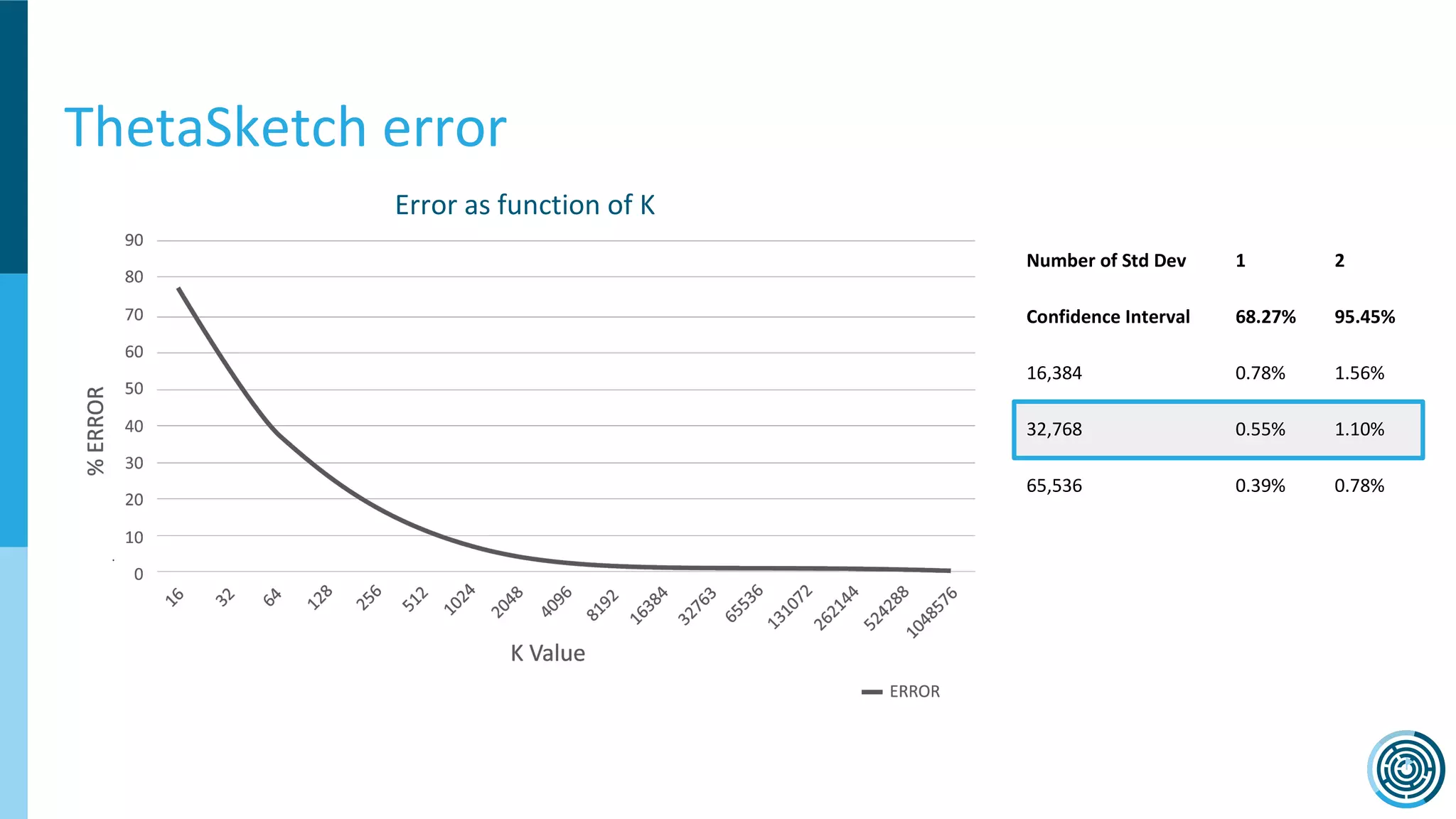

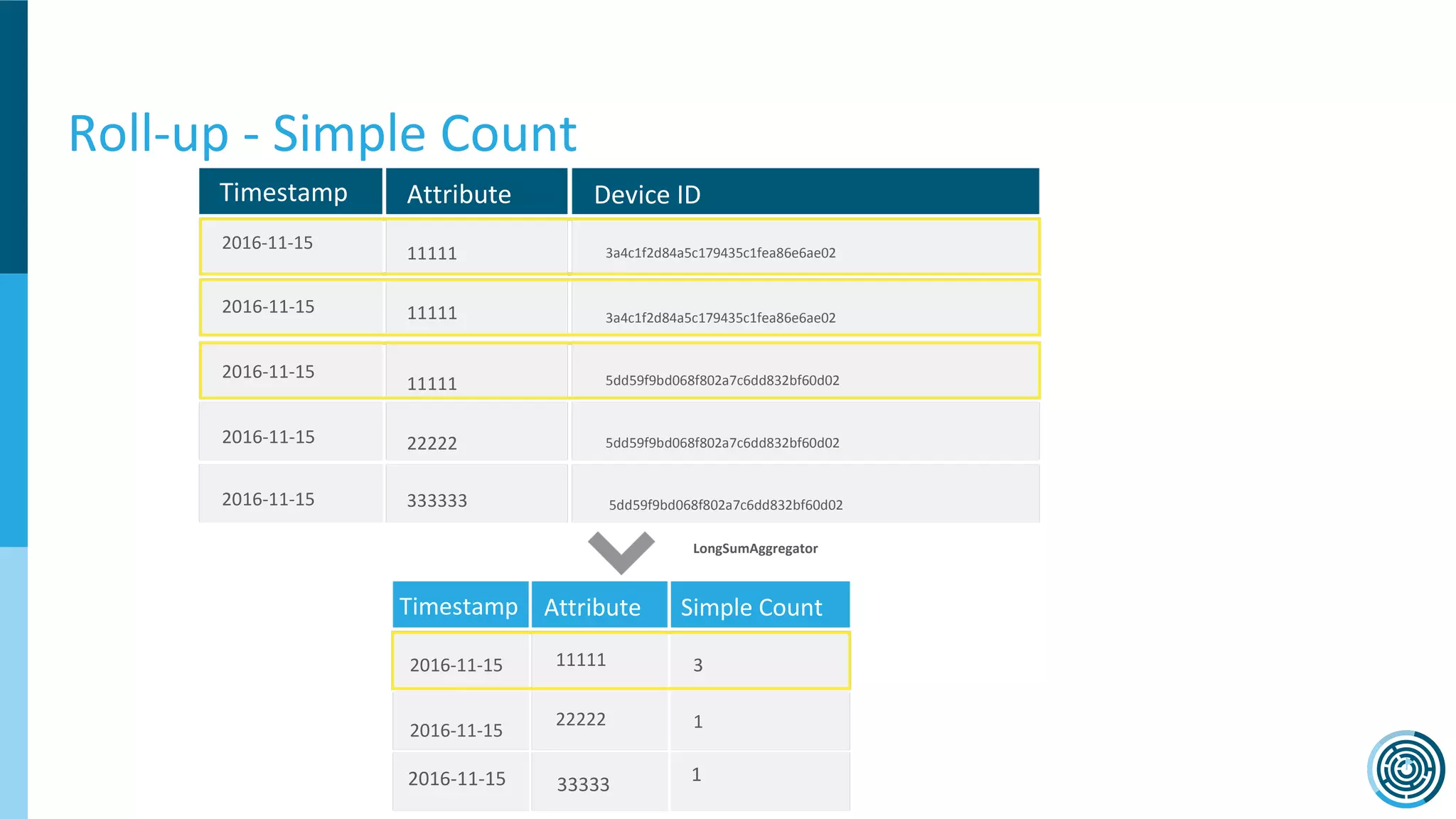

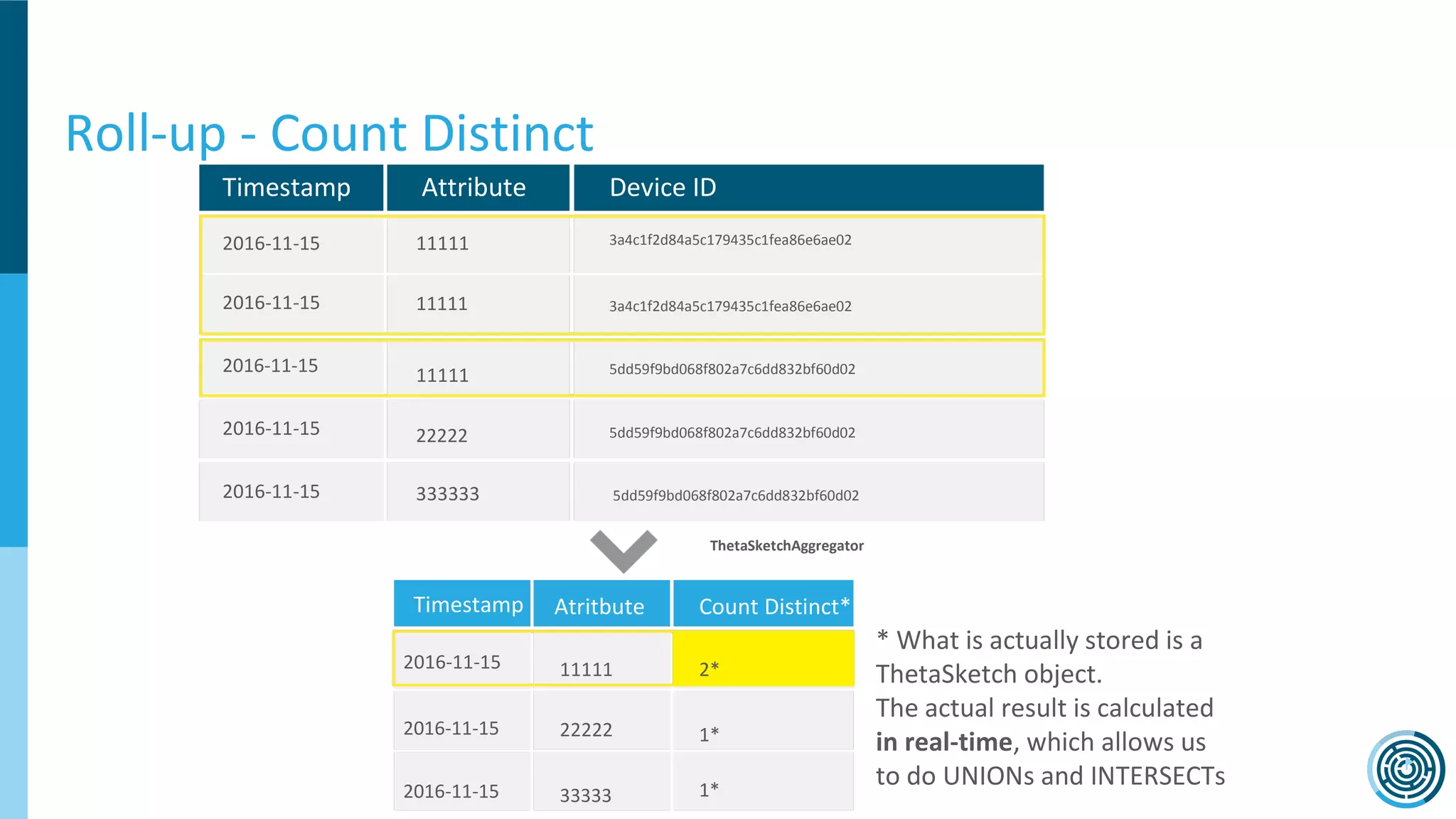

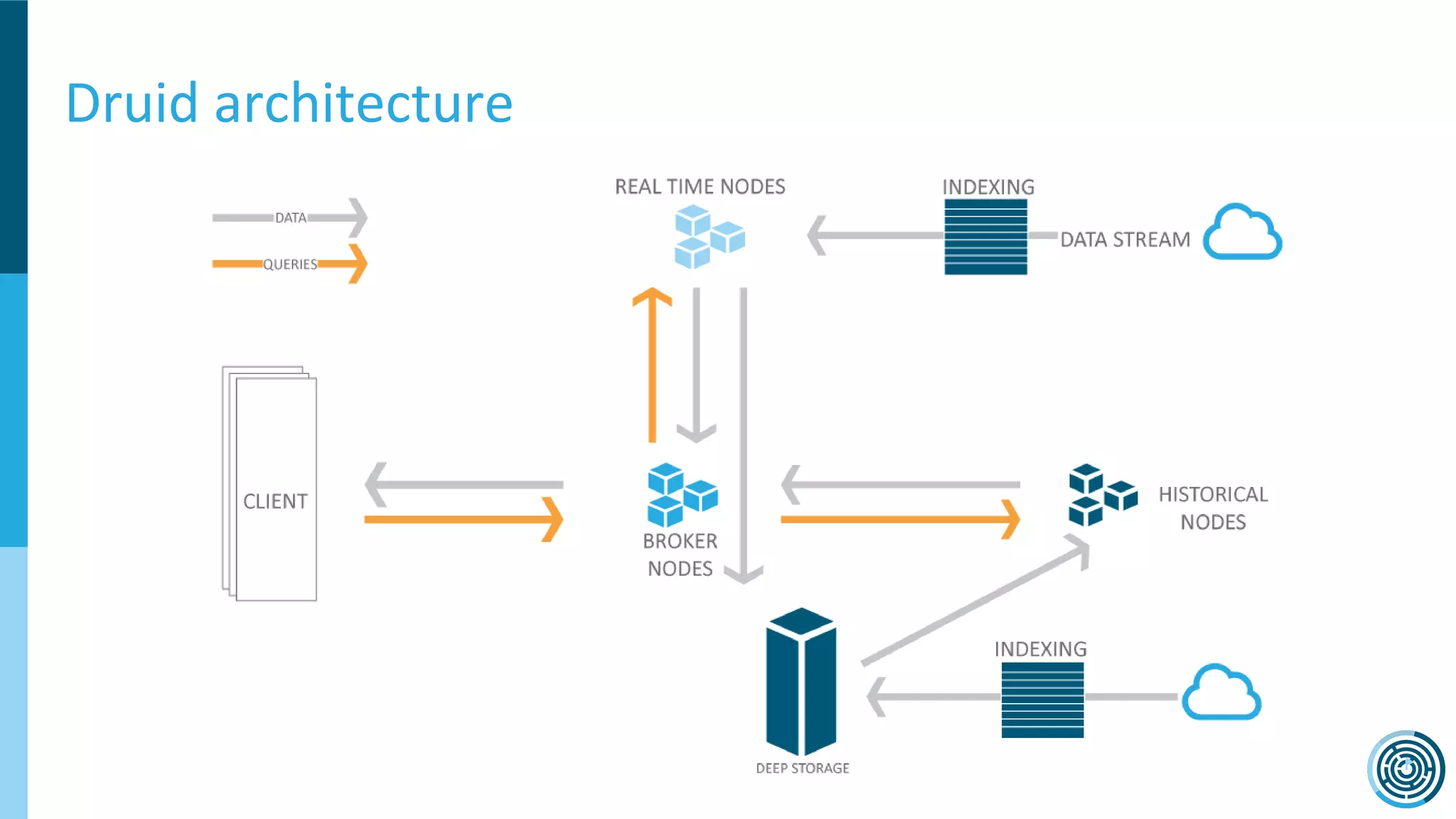

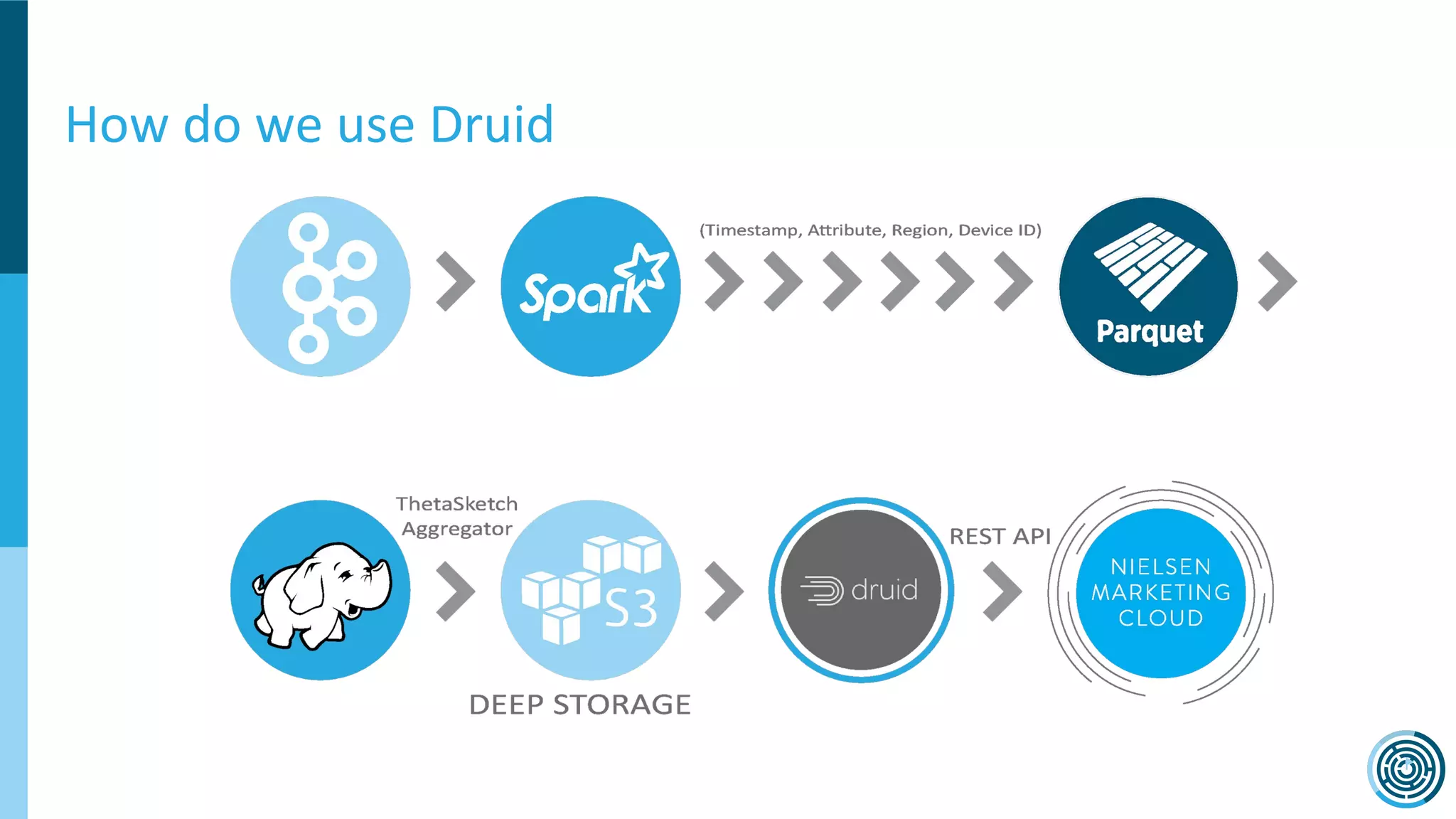

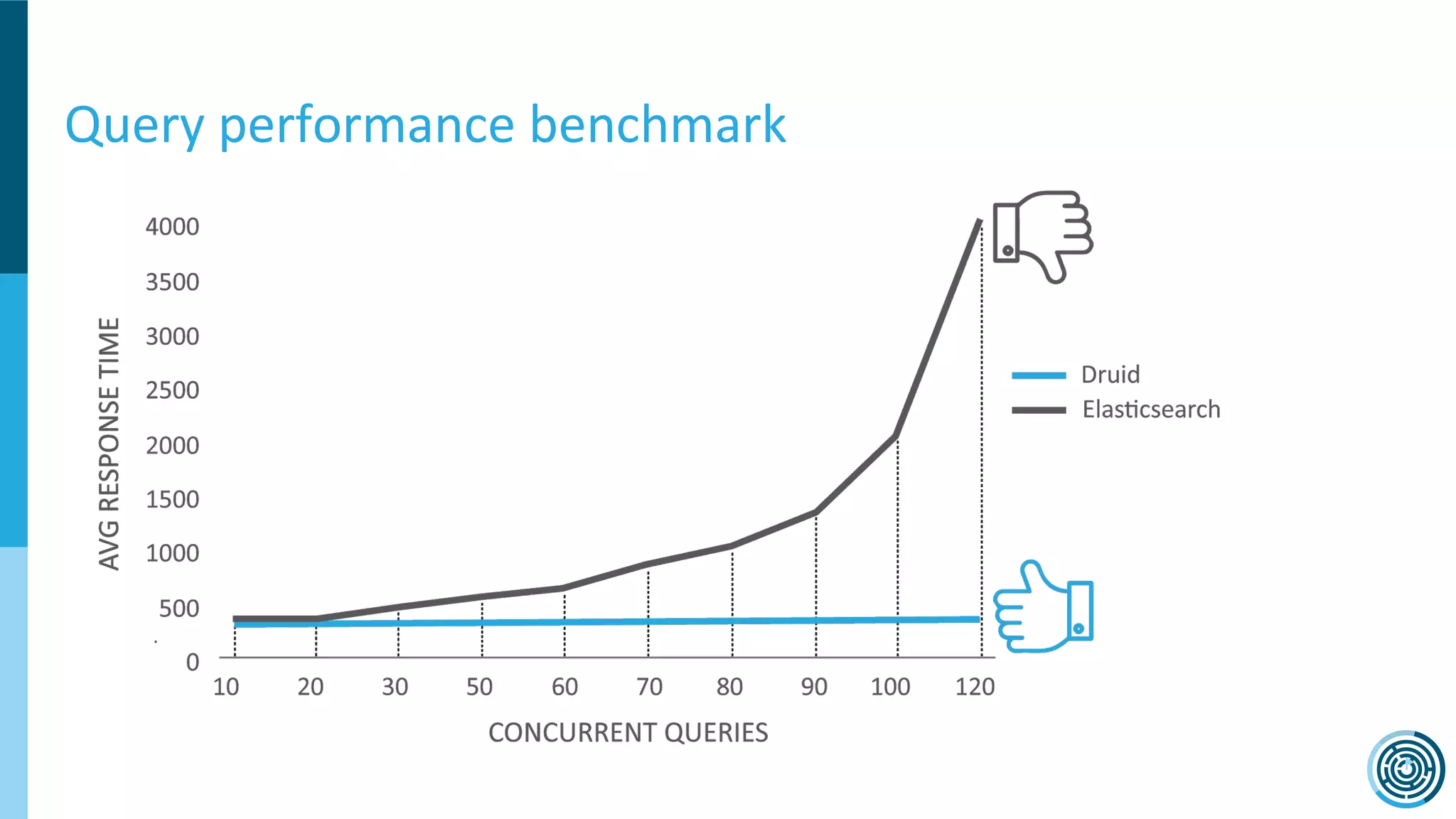

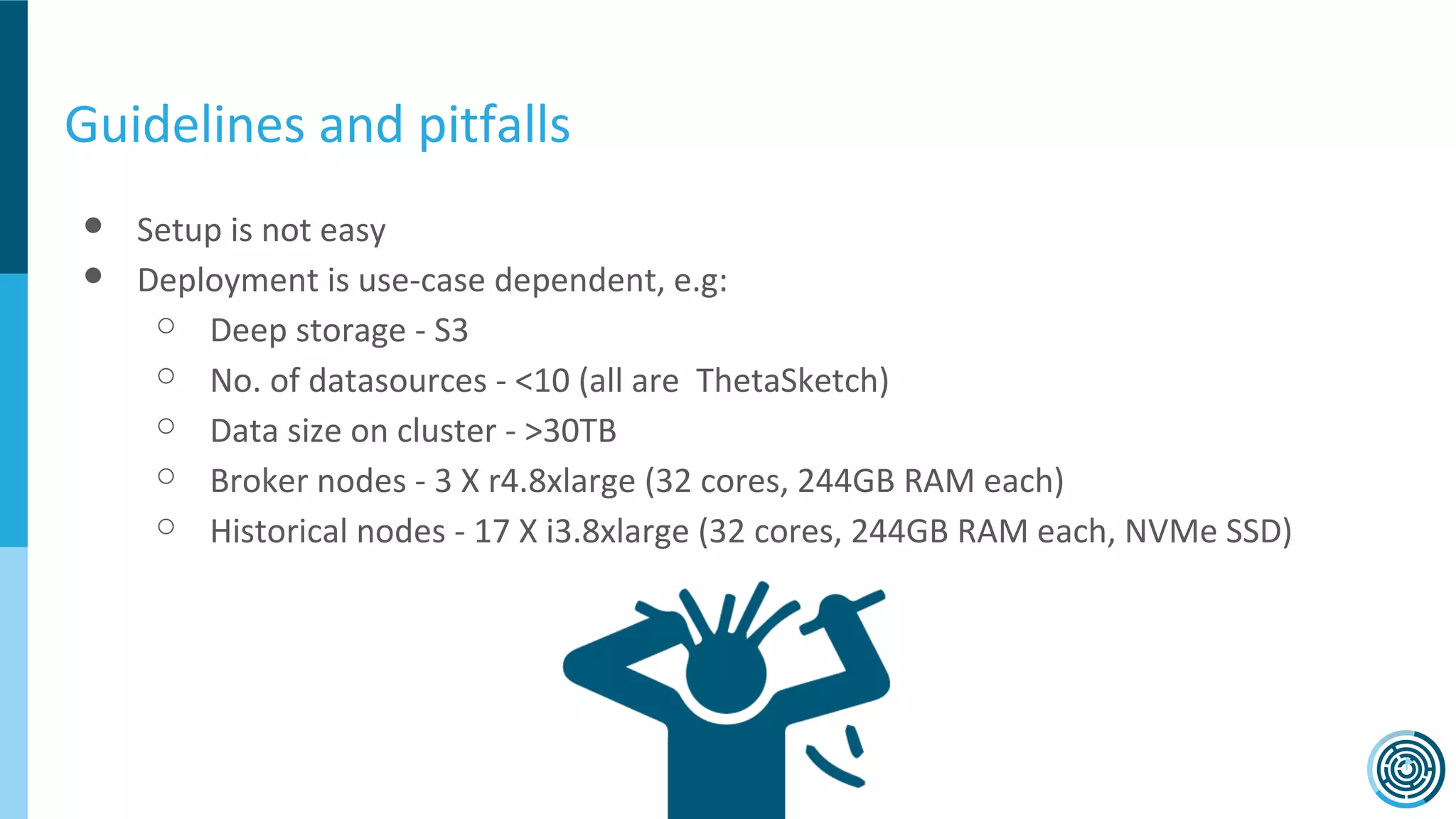

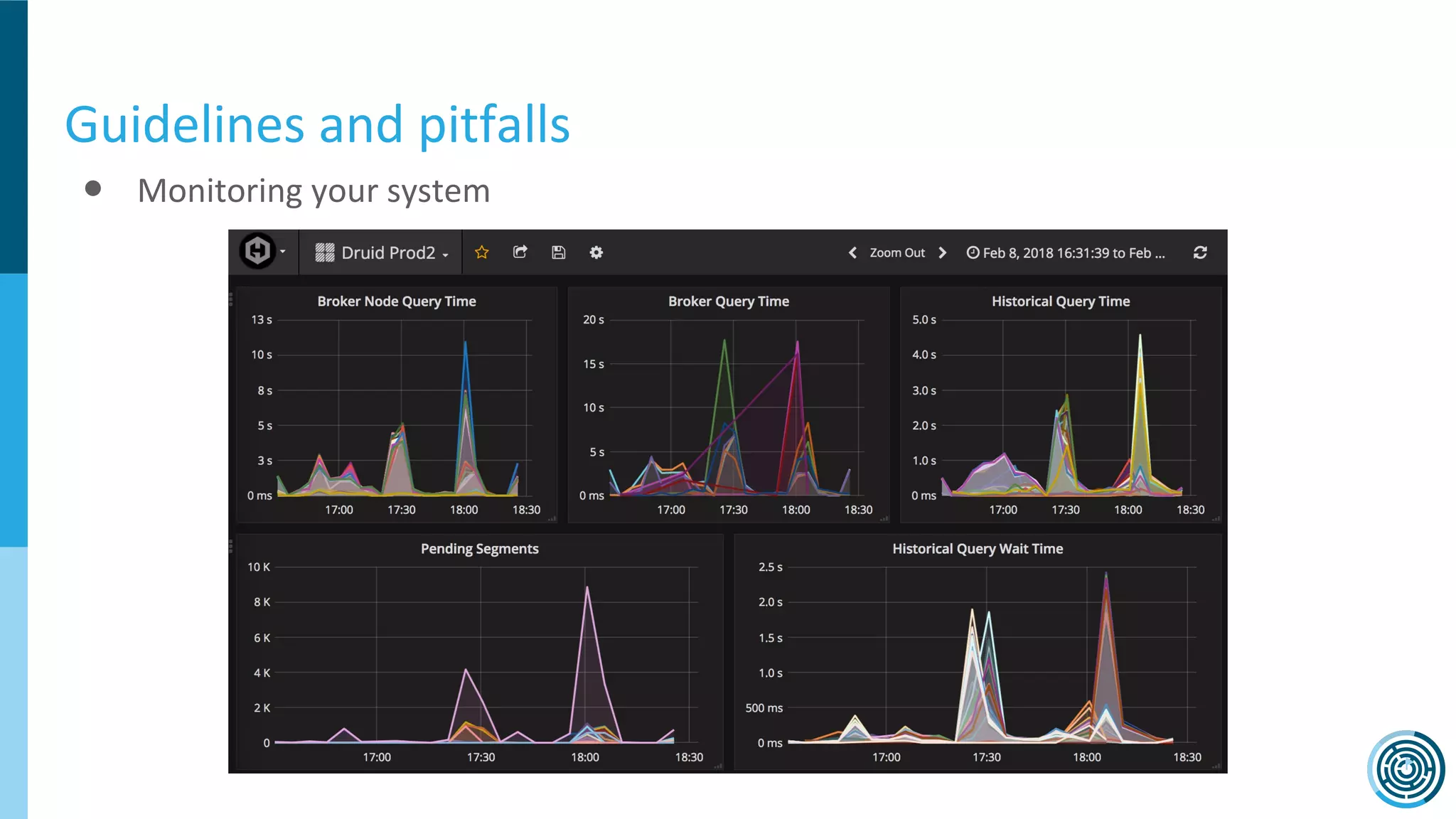

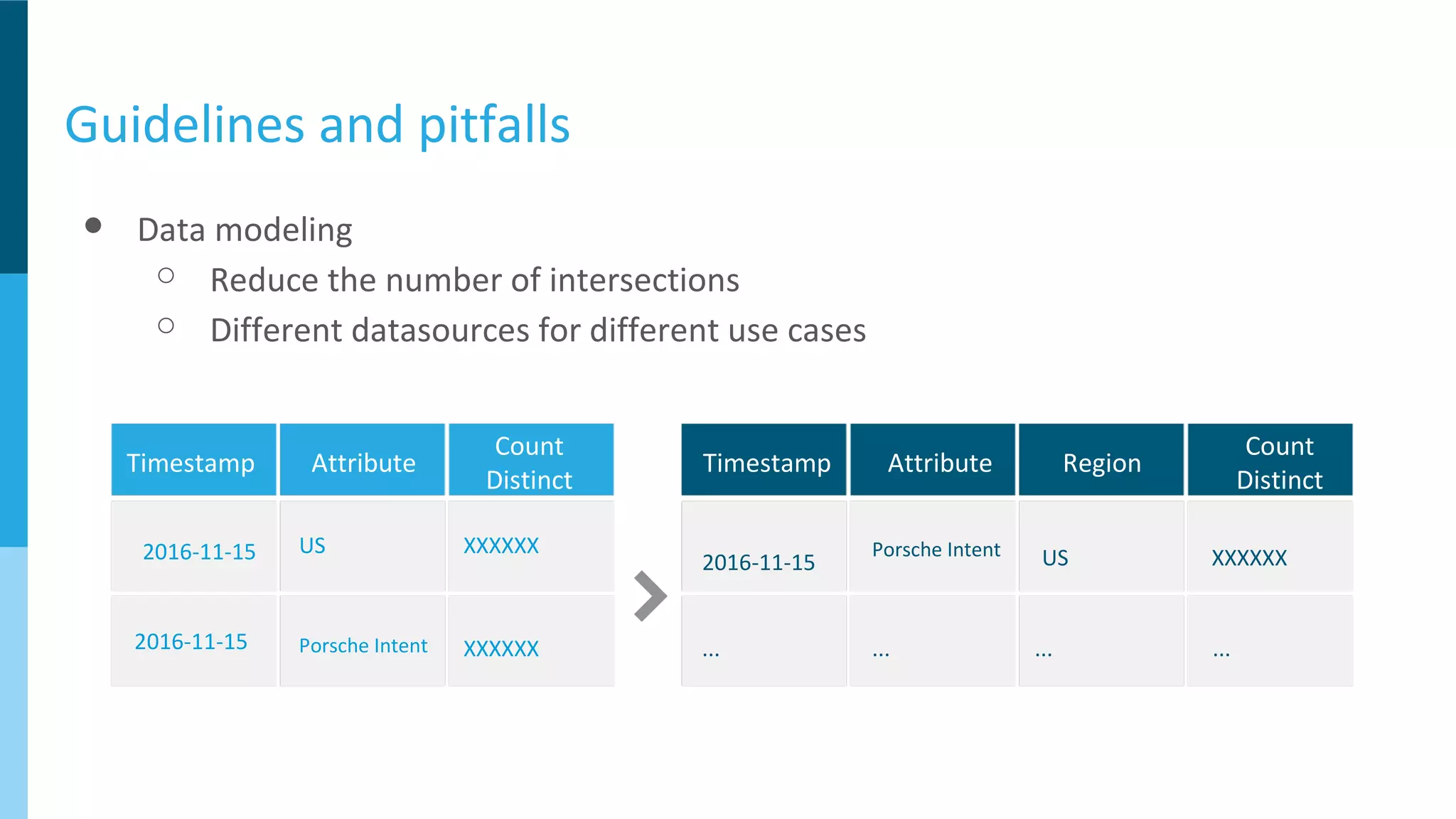

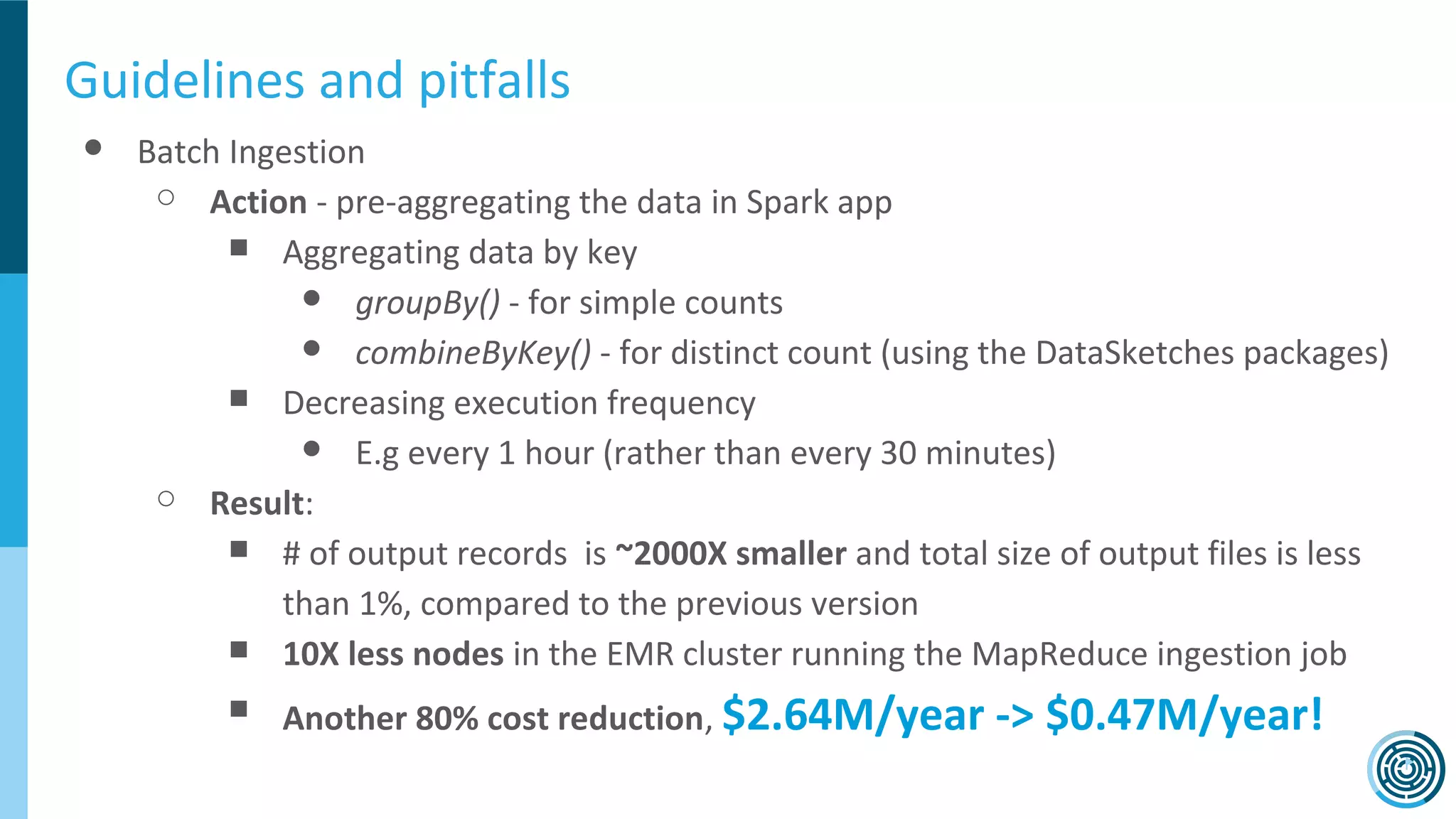

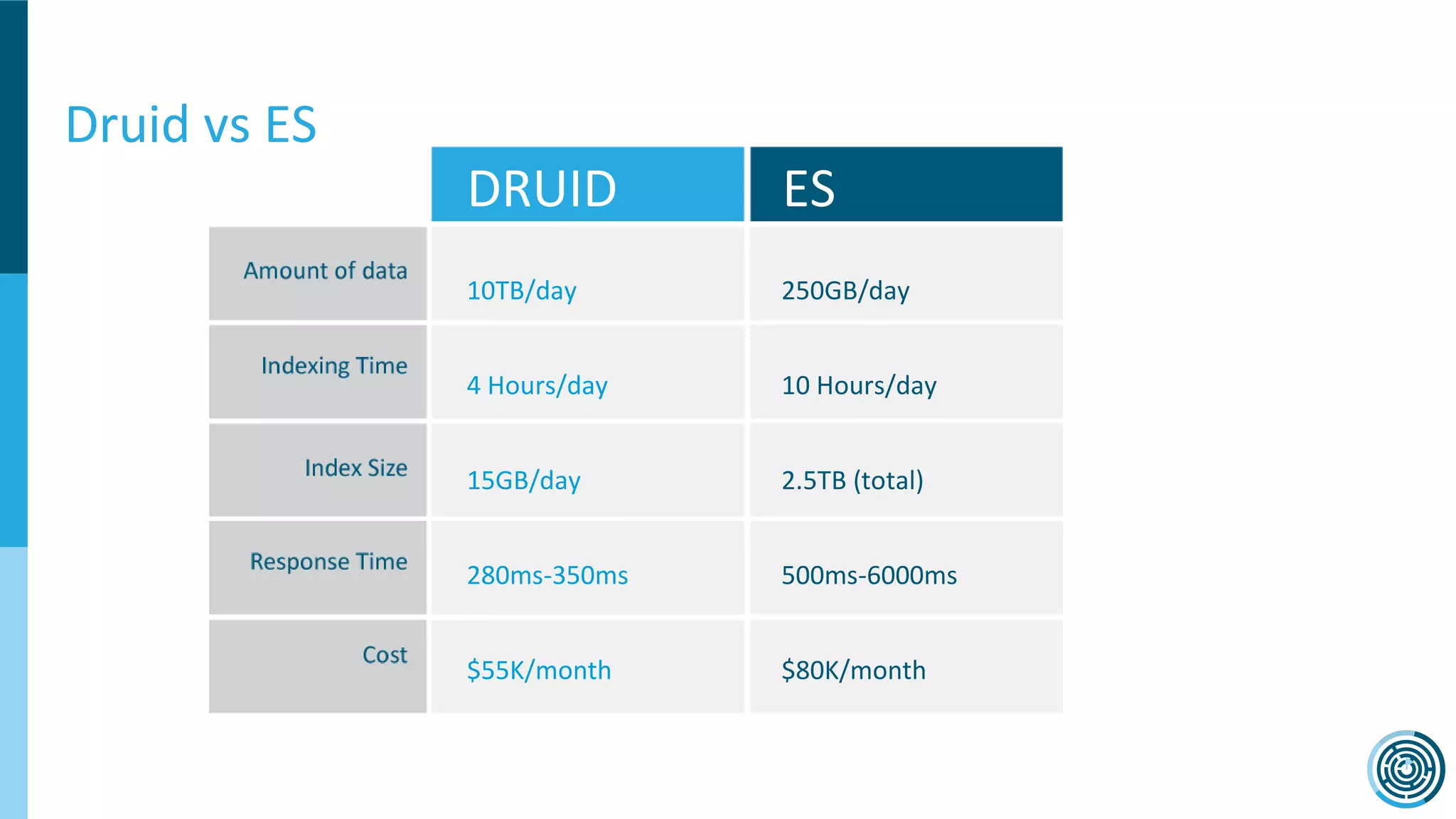

The document discusses the challenge of counting unique users in real time in the Nielsen Marketing Cloud, with a focus on big data processing and machine learning solutions. It describes several methods and frameworks, including Theta Sketch and Druid, for handling large datasets efficiently and answering distinct-count queries in real time. The authors emphasize the importance of query performance optimization, data modeling, and community resources for building effective big data applications.
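
To make the idea of an approximate distinct count concrete, here is a minimal sketch of a K-Minimum-Values (KMV) estimator, a simplified relative of the Theta Sketch family mentioned above. It is not the DataSketches library and not Druid's implementation; the class name `KMVSketch`, the parameter `k`, and the choice of SHA-256 as the hash are assumptions made for illustration only.

```python
import hashlib
import heapq


class KMVSketch:
    """Illustrative K-Minimum-Values sketch (hypothetical, not a library API)."""

    def __init__(self, k=1024):
        self.k = k
        self._heap = []        # negated hashes, so the largest retained hash is on top
        self._members = set()  # retained hashes, used to ignore duplicates

    @staticmethod
    def _hash(item):
        # Map an item to a pseudo-uniform float in [0, 1).
        digest = hashlib.sha256(str(item).encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") / 2**64

    def add(self, item):
        self._add_hash(self._hash(item))

    def _add_hash(self, h):
        if h in self._members:
            return  # already retained: repeated users do not change the sketch
        if len(self._heap) < self.k:
            heapq.heappush(self._heap, -h)
            self._members.add(h)
        elif h < -self._heap[0]:
            # New hash is smaller than the largest retained one: swap them.
            evicted = -heapq.heappushpop(self._heap, -h)
            self._members.discard(evicted)
            self._members.add(h)

    def estimate(self):
        if len(self._heap) < self.k:
            return float(len(self._heap))  # fewer than k distinct items: exact
        kth_smallest = -self._heap[0]
        return (self.k - 1) / kth_smallest

    def union(self, other):
        # Merging keeps the k smallest hashes seen by either sketch.
        merged = KMVSketch(min(self.k, other.k))
        for h in self._members | other._members:
            merged._add_hash(h)
        return merged


# Usage: two overlapping user populations, each summarized independently.
monday = KMVSketch()
tuesday = KMVSketch()
for user_id in range(0, 60_000):
    monday.add(f"user-{user_id}")
for user_id in range(40_000, 100_000):
    tuesday.add(f"user-{user_id}")

both_days = monday.union(tuesday)
print(round(both_days.estimate()))  # approximate count of distinct users across both days
```

The sketch stores at most `k` hash values regardless of how many users it sees, and two sketches can be merged without revisiting the raw data. That mergeability is what makes this family of structures attractive for pre-aggregated stores that answer distinct-count queries at read time.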