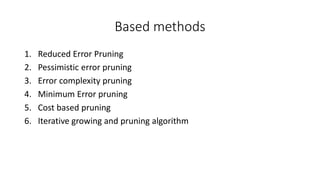

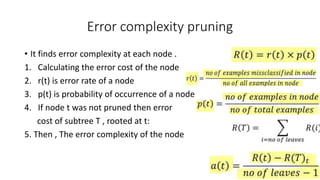

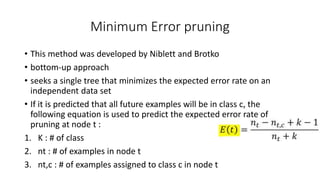

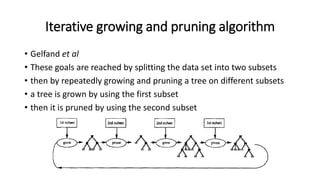

The document surveys post-pruning methods for decision trees: reduced error pruning, pessimistic error pruning, error complexity pruning, minimum error pruning, and cost-based pruning. Reduced error pruning splits the data into separate training, validation, and test sets, then replaces a subtree with a leaf whenever doing so does not increase the error on the validation set. Pessimistic error pruning avoids a separate validation set by using the training set for both growing and pruning the tree, and applies a correction to offset the optimistic bias of training-set error estimates. The remaining methods prune by trading error against tree complexity (error complexity pruning), by minimizing the expected error rate (minimum error pruning), or by weighing both error rate and costs (cost-based pruning).
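
To make the reduced error pruning rule concrete, here is a minimal sketch in Python. It is not taken from the document: the `Node` class, the helper names, the hand-built tree, and the five validation examples are all hypothetical, chosen only for illustration. The sketch assumes binary splits on numeric features, that each node stores the majority class seen during training, and that ties are resolved in favor of pruning.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Example = Tuple[List[float], int]  # (feature vector, class label)


@dataclass
class Node:
    majority: int                   # majority training class at this node
    feature: Optional[int] = None   # feature index tested; None marks a leaf
    threshold: float = 0.0
    left: Optional["Node"] = None   # branch taken when x[feature] <= threshold
    right: Optional["Node"] = None  # branch taken otherwise

    def is_leaf(self) -> bool:
        return self.feature is None


def predict(node: Node, x: List[float]) -> int:
    # Walk down the tree until a leaf is reached.
    while not node.is_leaf():
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.majority


def errors(node: Node, data: List[Example]) -> int:
    # Number of misclassified examples.
    return sum(predict(node, x) != y for x, y in data)


def reduced_error_prune(node: Node, val: List[Example]) -> Node:
    """Bottom-up pruning: replace a subtree with a leaf whenever the leaf
    makes no more errors than the subtree on the validation examples that
    reach this node. Modifies the tree in place and returns its new root."""
    if node.is_leaf():
        return node
    left_val = [(x, y) for x, y in val if x[node.feature] <= node.threshold]
    right_val = [(x, y) for x, y in val if x[node.feature] > node.threshold]
    node.left = reduced_error_prune(node.left, left_val)
    node.right = reduced_error_prune(node.right, right_val)

    subtree_err = errors(node, val)
    leaf_err = sum(y != node.majority for _, y in val)
    # Assumption: ties (including "no validation data reaches this node")
    # favor the smaller tree.
    if leaf_err <= subtree_err:
        return Node(majority=node.majority)
    return node


# Hypothetical tree: the right subtree has an extra split that fits noise.
tree = Node(
    majority=0, feature=0, threshold=0.5,
    left=Node(majority=0),
    right=Node(majority=1, feature=1, threshold=0.5,
               left=Node(majority=1), right=Node(majority=0)),
)

# Hypothetical validation set, held out from training.
val = [
    ([0.0, 0.0], 0), ([0.2, 1.0], 0),
    ([1.0, 0.0], 1), ([1.0, 1.0], 1), ([0.9, 0.9], 1),
]

before = errors(tree, val)
pruned = reduced_error_prune(tree, val)
after = errors(pruned, val)
print(before, after)
```

In this toy case the noisy split under the right branch is collapsed into a single leaf, while the root split is kept because removing it would increase the validation error. A real pipeline would still report final accuracy on the separate test set, since the validation set has been used to choose the tree.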