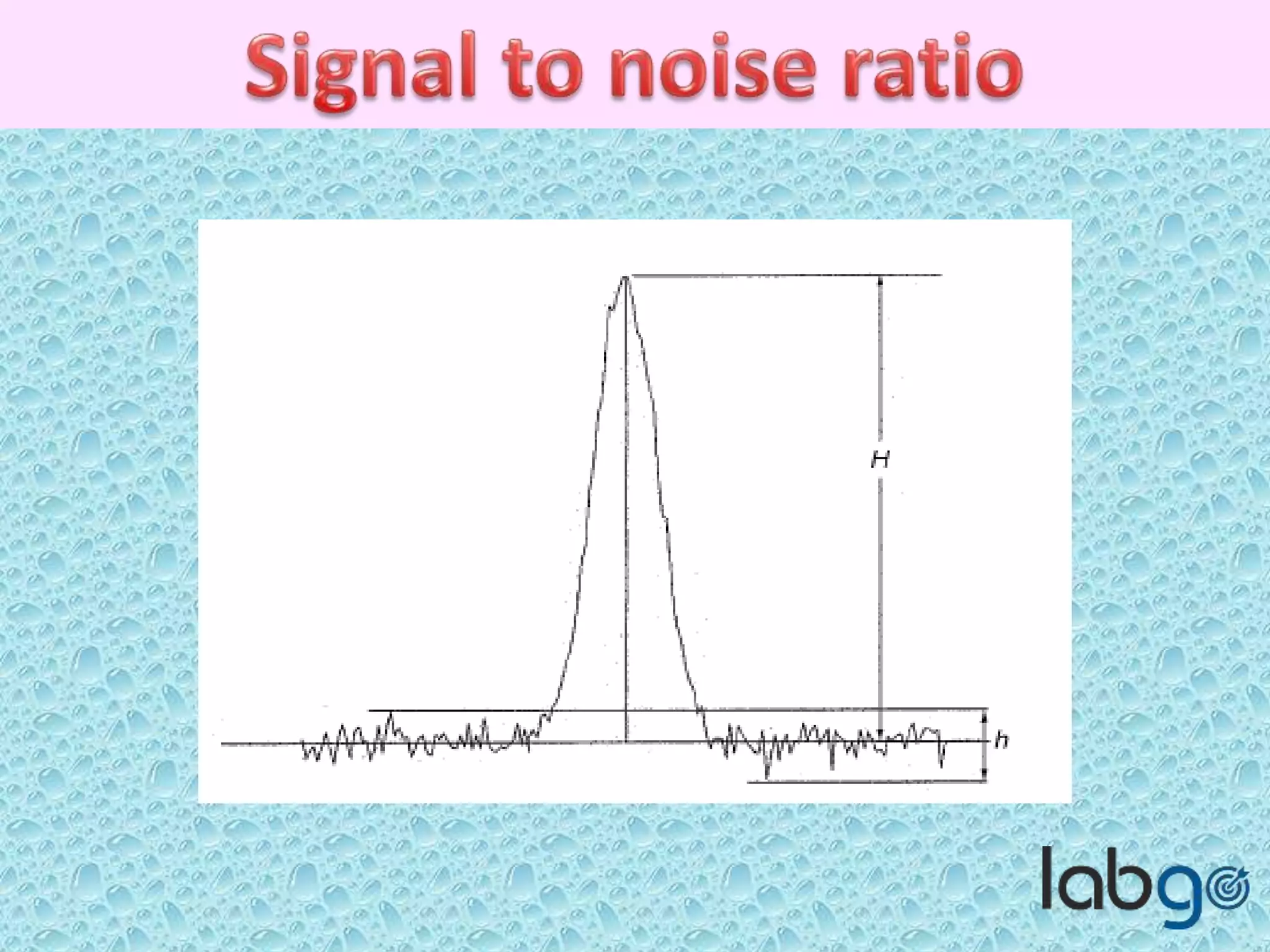

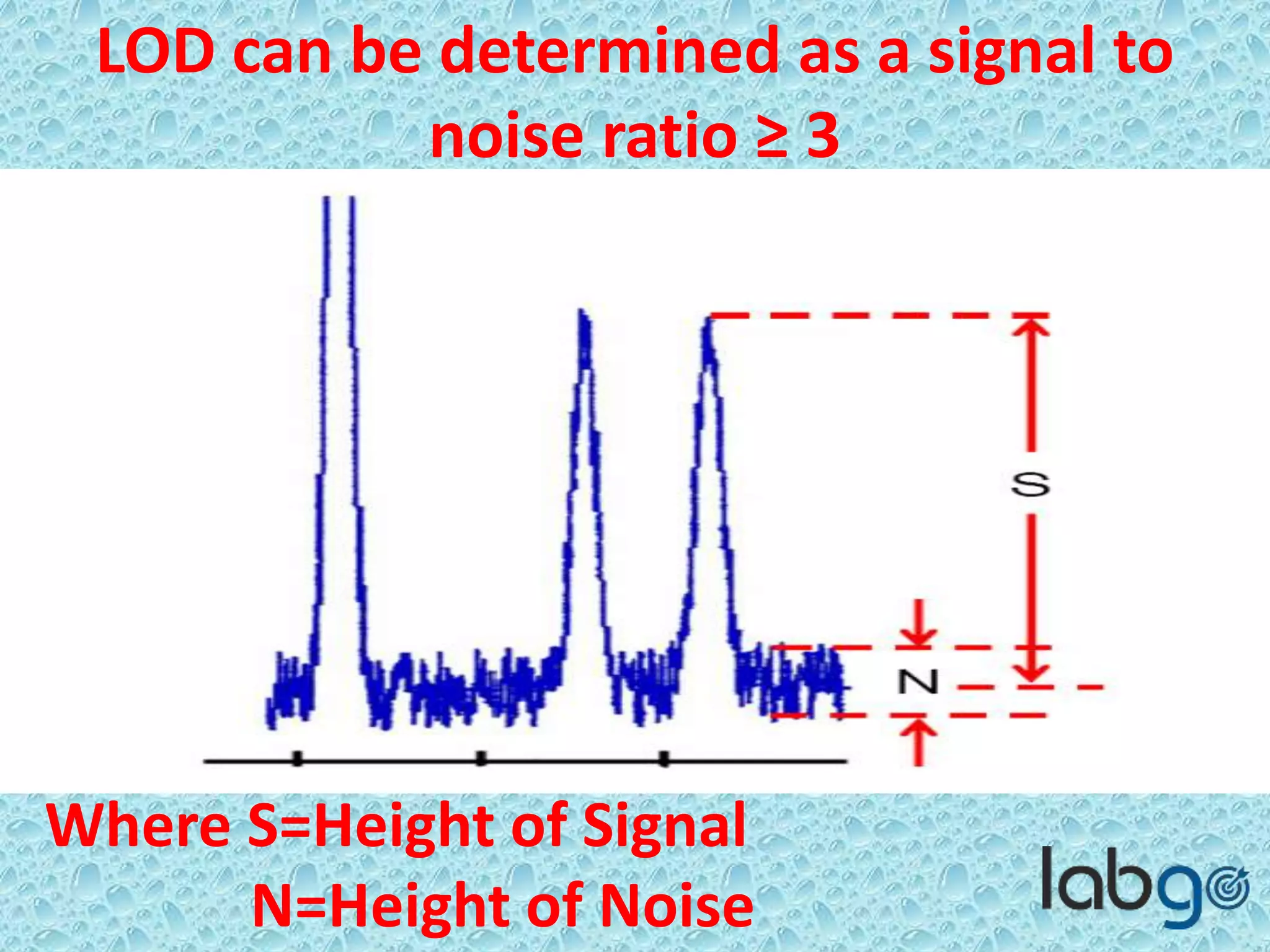

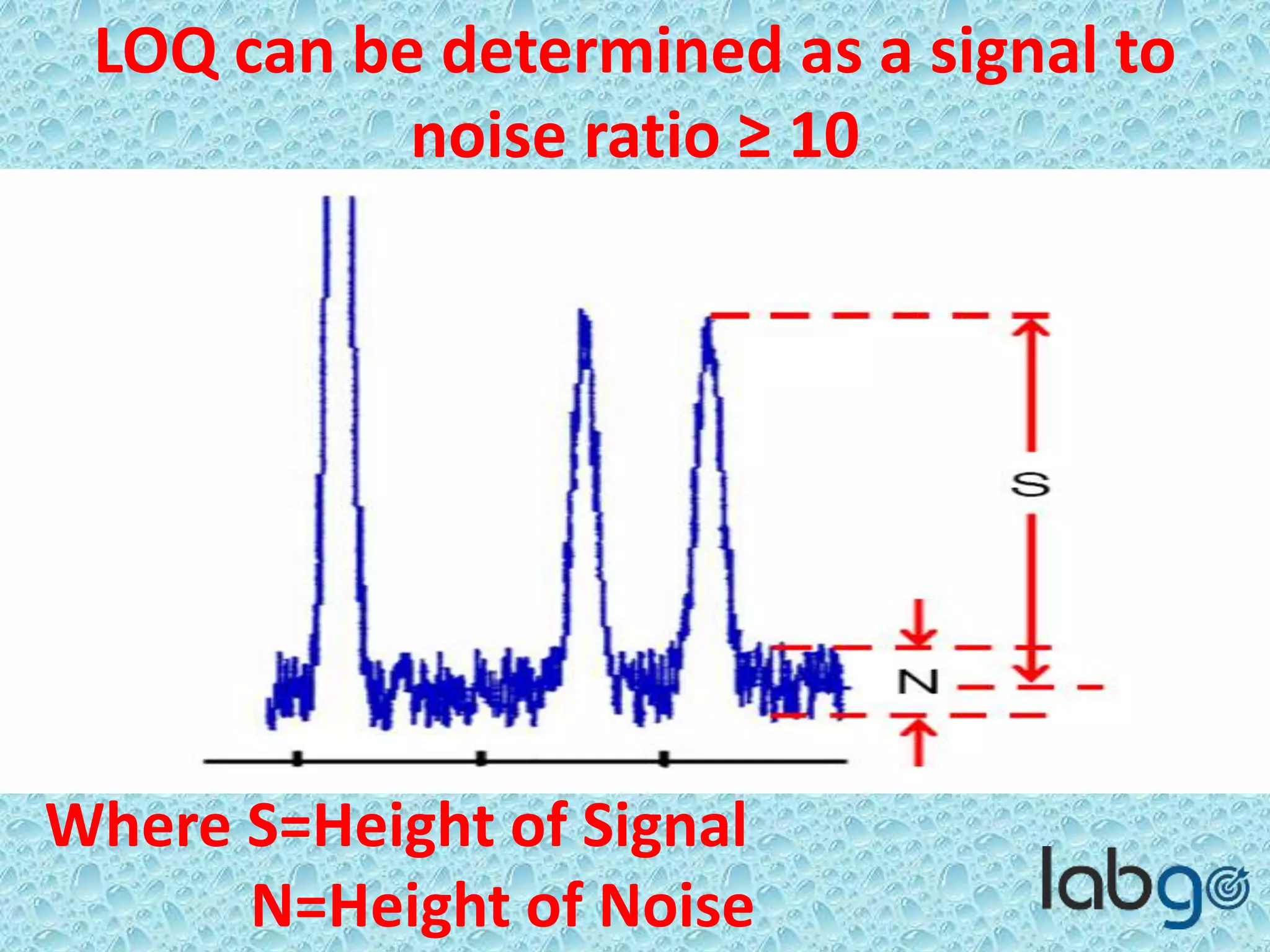

The document outlines methods for determining the limit of detection (LOD) and limit of quantitation (LOQ) of an analytical procedure, with emphasis on the signal-to-noise (S/N) ratio as the basis for reliable detection and quantitation levels. It gives the accepted S/N ratios of 3:1 for LOD and 10:1 for LOQ, and describes how to assess the robustness and ruggedness of an analytical method under variations in parameters such as flow rate, temperature, and pH. Evaluating robustness is highlighted as necessary to maintain the validity of the analytical procedure.
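The S/N criteria can be illustrated with a minimal Python sketch. It assumes the simplest definition of S/N, peak height divided by baseline noise amplitude, both in the same units. Pharmacopoeial definitions differ in detail, so that formula is an assumption here, and the function names `signal_to_noise` and `classify` are hypothetical. Only the 3:1 and 10:1 thresholds come from the text.

```python
# Thresholds taken from the text: 3:1 for LOD, 10:1 for LOQ.
LOD_SN = 3.0
LOQ_SN = 10.0


def signal_to_noise(peak_height, noise_amplitude):
    """Return S/N as peak height over baseline noise (assumed definition)."""
    if noise_amplitude <= 0:
        raise ValueError("noise_amplitude must be positive")
    return peak_height / noise_amplitude


def classify(peak_height, noise_amplitude):
    """Label a peak by comparing its S/N against the LOD and LOQ thresholds."""
    sn = signal_to_noise(peak_height, noise_amplitude)
    if sn >= LOQ_SN:
        return "quantifiable"   # at or above 10:1
    if sn >= LOD_SN:
        return "detectable"     # at or above 3:1 but below 10:1
    return "not detected"       # below 3:1


if __name__ == "__main__":
    # Illustrative numbers only, not measured data.
    for height in (50.0, 20.0, 8.0):
        print(height, classify(height, noise_amplitude=4.0))
```

In practice the concentration whose peak just reaches an S/N of 3:1 would be reported as the LOD, and the one reaching 10:1 as the LOQ.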