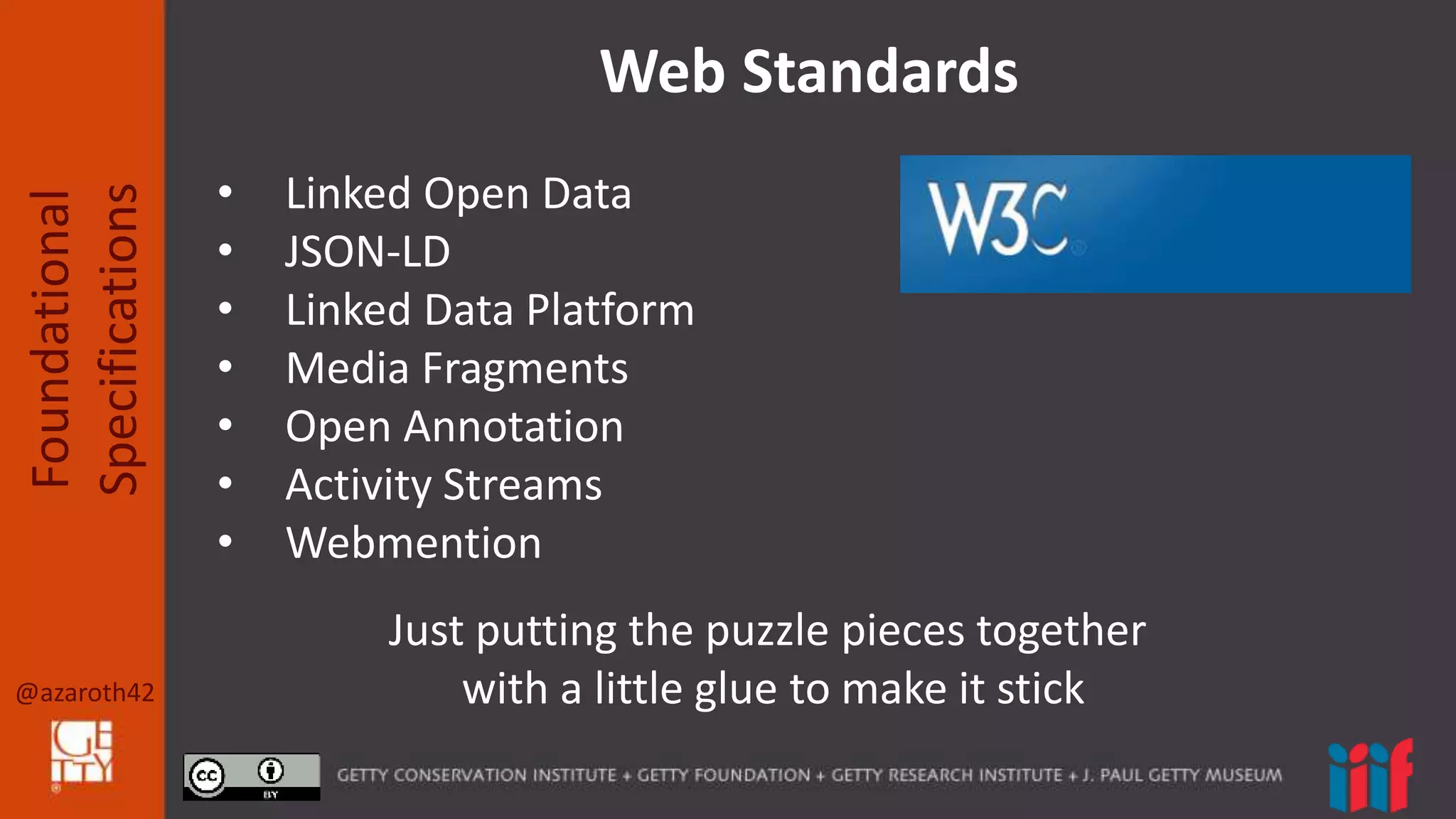

The document outlines the foundational specifications behind the International Image Interoperability Framework (IIIF), focusing on how its APIs are designed and implemented on top of web standards and practices such as JSON-LD and Linked Open Data. It covers Media Fragments, Open Annotation, Activity Streams, and Webmention, with examples of how Linked Data can be structured, and it references existing specifications and protocols relevant to these topics.

![Slide: IIIF Foundational Specifications, JSON-LD (@azaroth42)](https://image.slidesharecdn.com/nyamotherspecswide-160514203055/75/IIIF-Foundational-Specifications-6-2048.jpg)

@azaroth42

Foundational Specifications

JSON-LD: a developer-friendly way to express Linked Data

```json
{
  "@context": "http://iiif.io/api/presentation/2/context.json",
  "@id": "http://example.org/iiif/book1/manifest",
  "@type": "sc:Manifest",
  "label": "Book 1",
  "metadata": [
    {"label": "Author", "value": "A. Authorus"}
  ],
  "license": "http://example.org/rights/license.html",
  "sequences": [ … ]
}
```
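The point of the JSON-LD slide is that a Linked Data document like a IIIF manifest is still ordinary JSON, so developers can build and read it with everyday tools. The following is a minimal sketch in Python, assuming only the standard `json` module. It rebuilds the slide's example manifest as a plain dictionary, serializes it, and parses it back. The helper `make_manifest` is hypothetical and is not part of any IIIF library. The slide elides the `sequences` content, so the sketch leaves that list empty as a placeholder; a real manifest would fill it in.

```python
import json

# Context URL taken from the slide: the IIIF Presentation API 2 context.
PRESENTATION_2_CONTEXT = "http://iiif.io/api/presentation/2/context.json"


def make_manifest(manifest_id, label, metadata, license_url):
    """Build a manifest in the IIIF Presentation 2 style as a plain dict.

    Hypothetical helper for illustration only.
    """
    return {
        "@context": PRESENTATION_2_CONTEXT,  # maps the short keys to full URIs
        "@id": manifest_id,                  # the manifest's own URI
        "@type": "sc:Manifest",              # prefixed name expanded via the context
        "label": label,
        # metadata is a list of label/value pairs intended for display
        "metadata": [{"label": k, "value": v} for k, v in metadata],
        "license": license_url,
        # Elided on the slide; left empty here as a placeholder.
        "sequences": [],
    }


manifest = make_manifest(
    "http://example.org/iiif/book1/manifest",
    "Book 1",
    [("Author", "A. Authorus")],
    "http://example.org/rights/license.html",
)

# Because JSON-LD is ordinary JSON, the standard json module can
# serialize it and read it back without any Linked Data tooling.
text = json.dumps(manifest, indent=2)
parsed = json.loads(text)
print(parsed["@type"], parsed["label"])  # prints "sc:Manifest Book 1"
```

A client that does want full Linked Data semantics can hand the same document to a JSON-LD processor, which uses `@context` to expand the keys into URIs. A client that only needs the label or metadata can ignore the context entirely.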