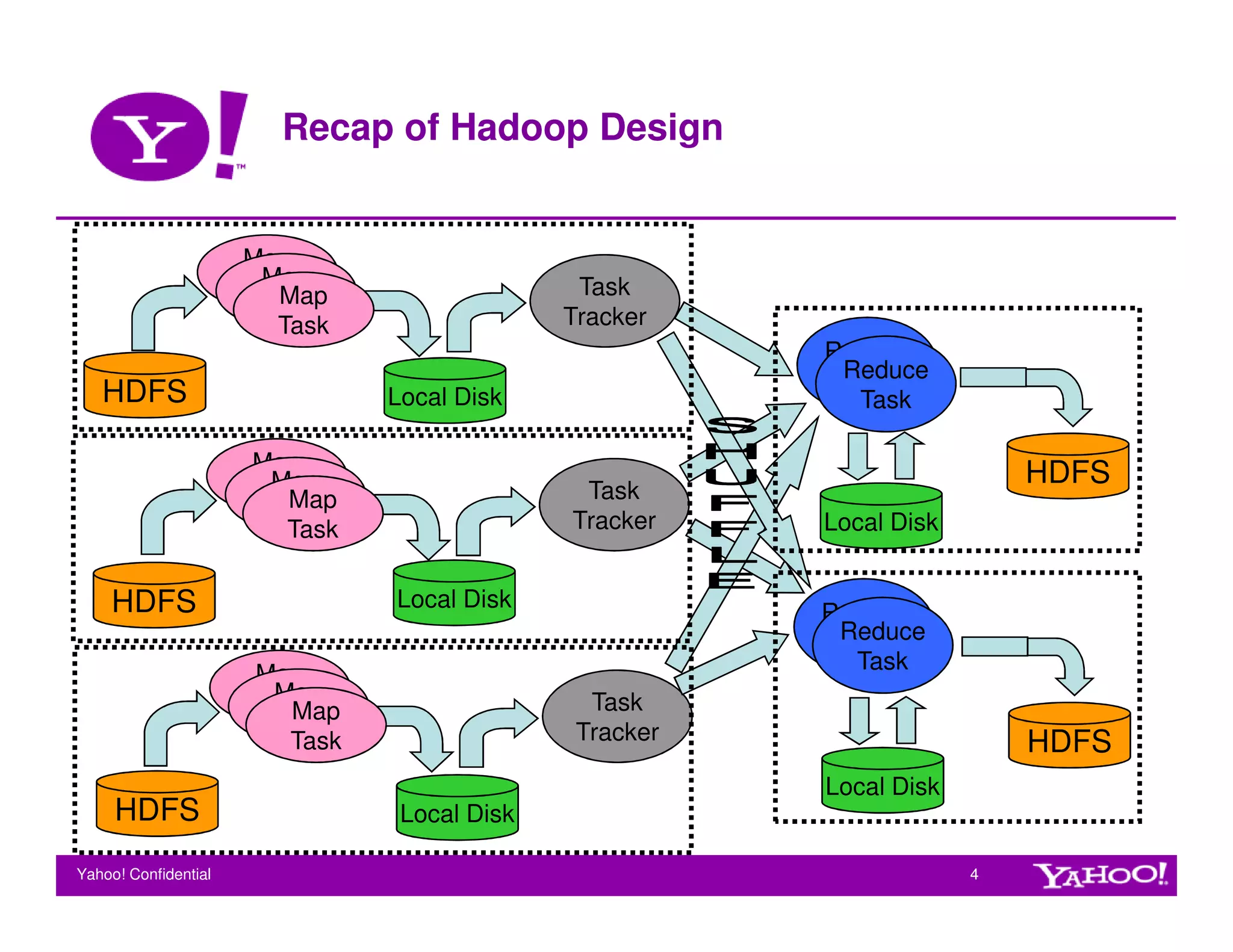

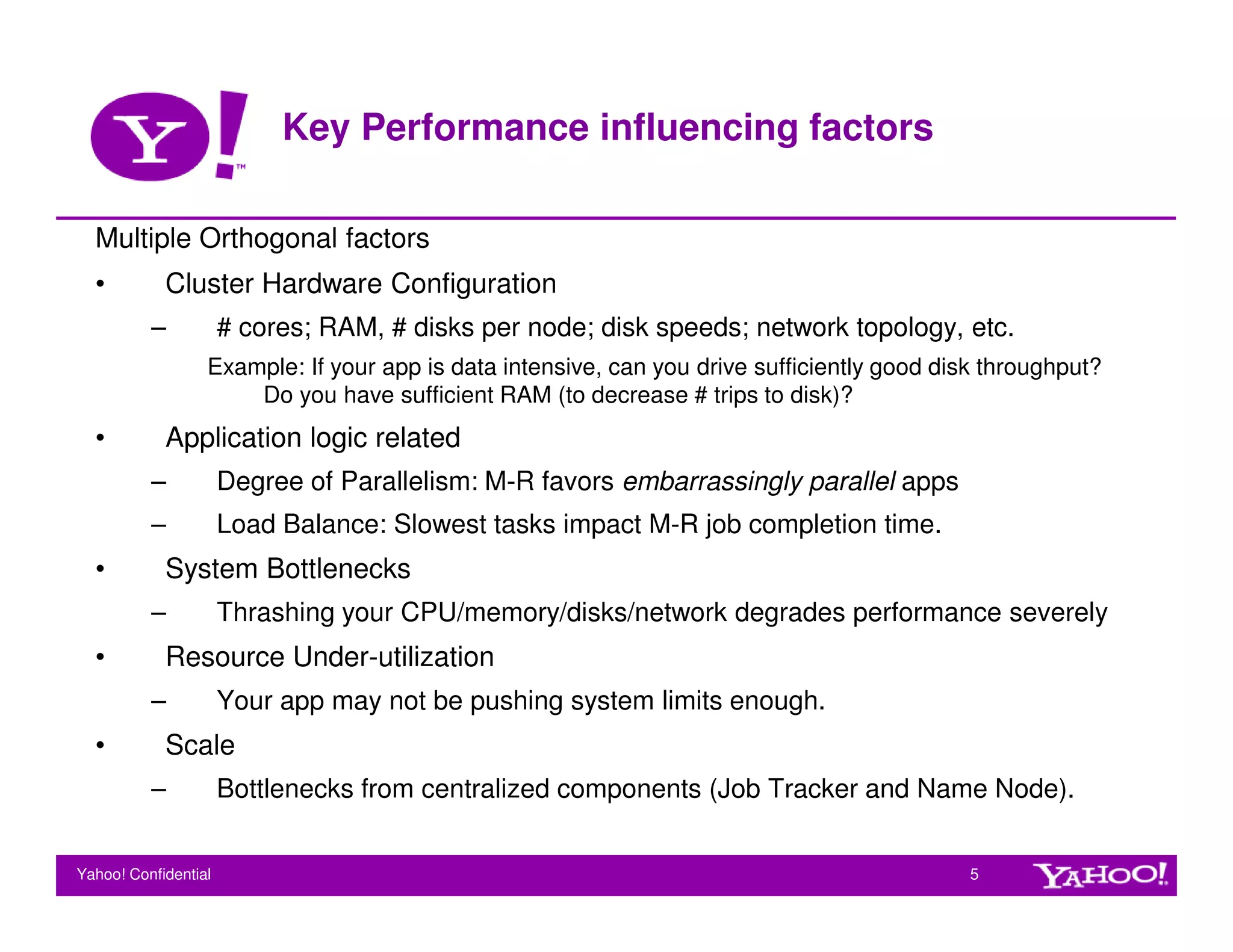

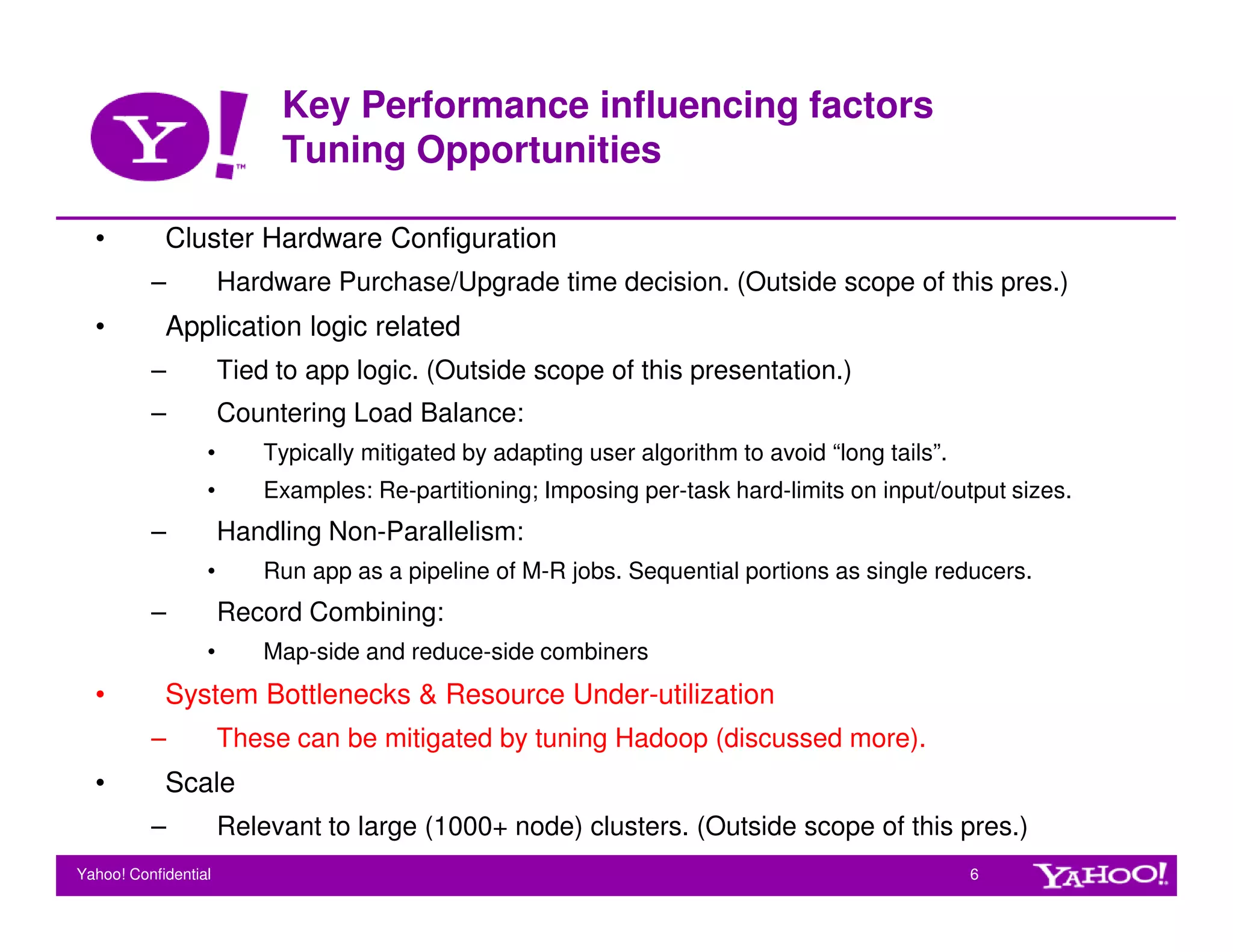

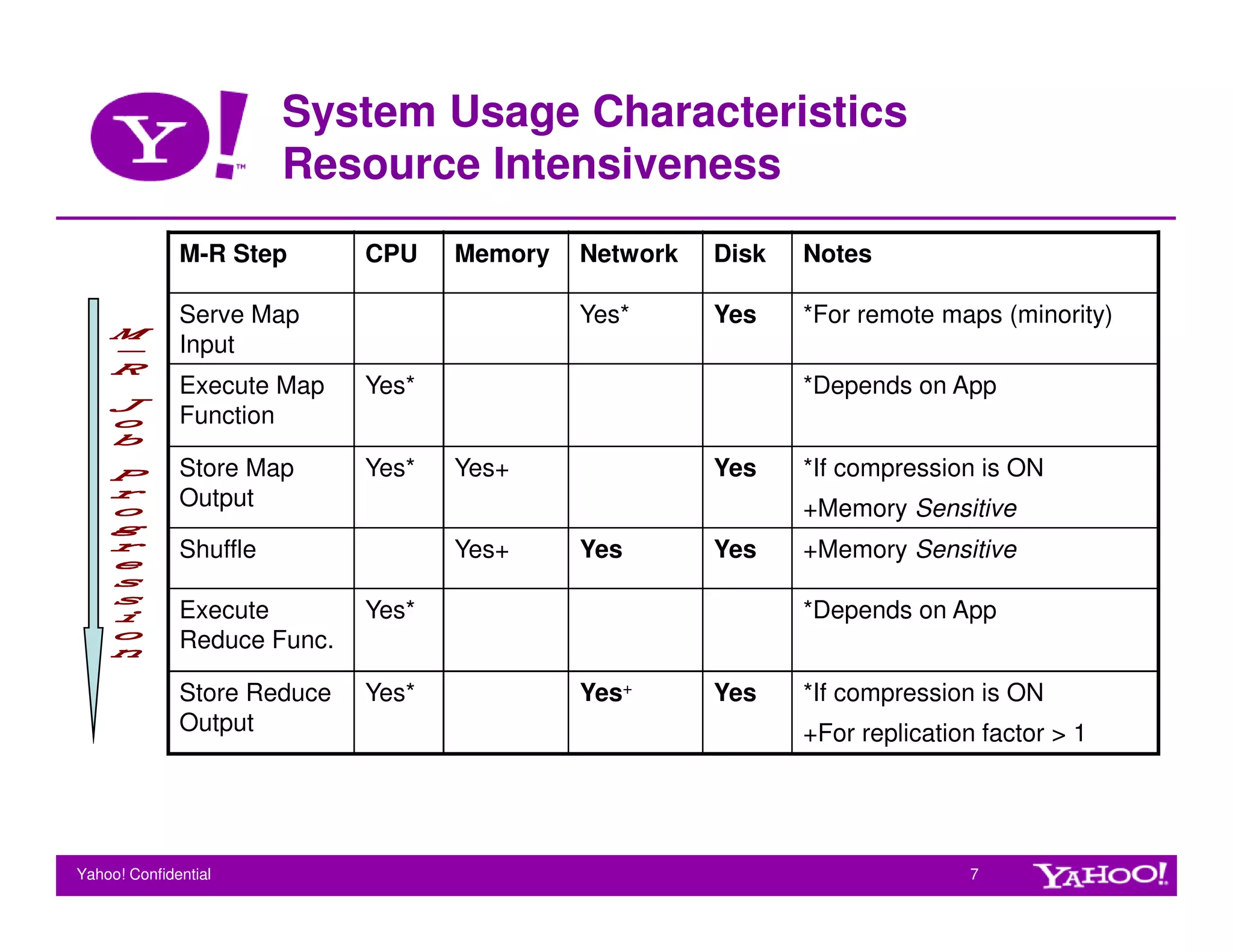

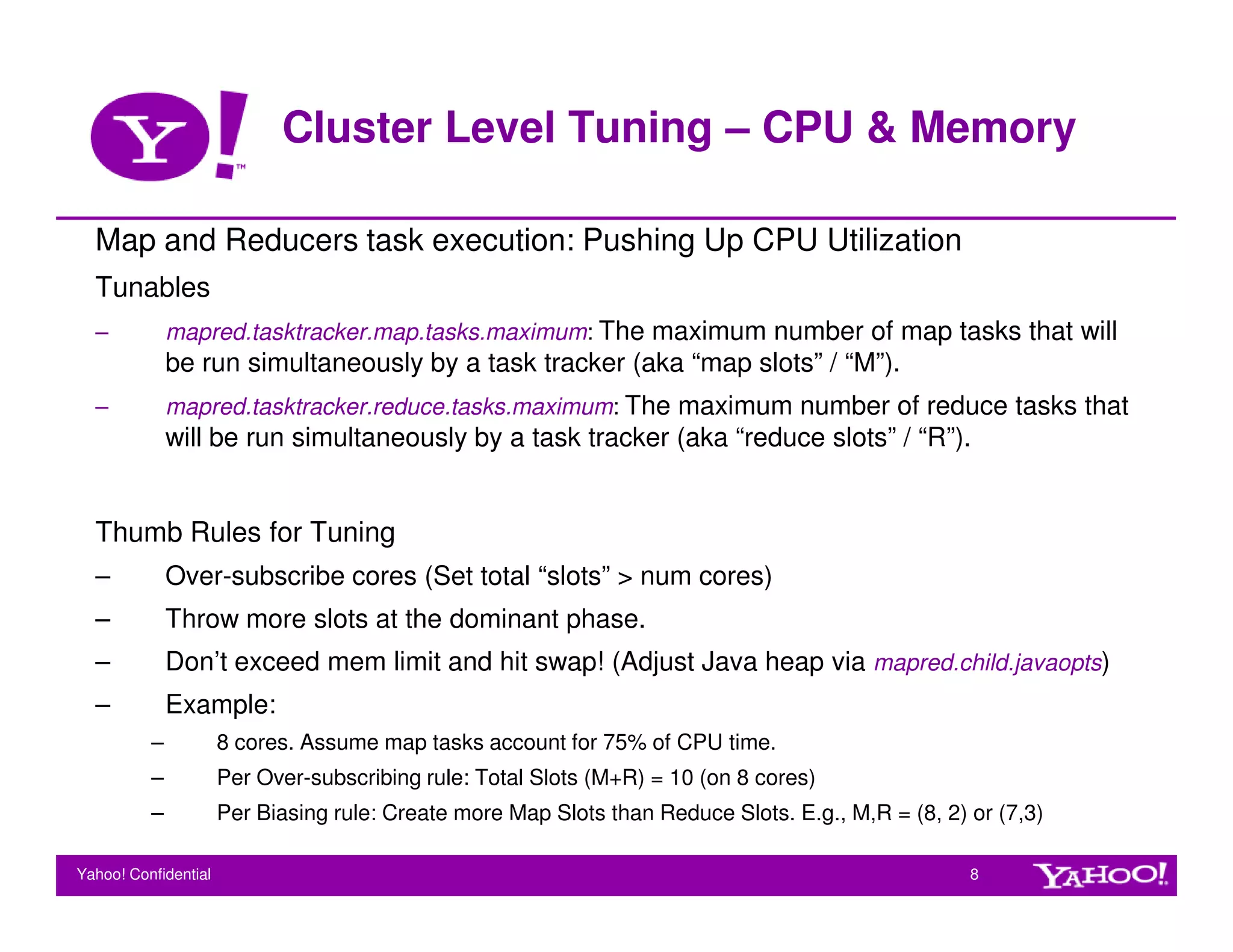

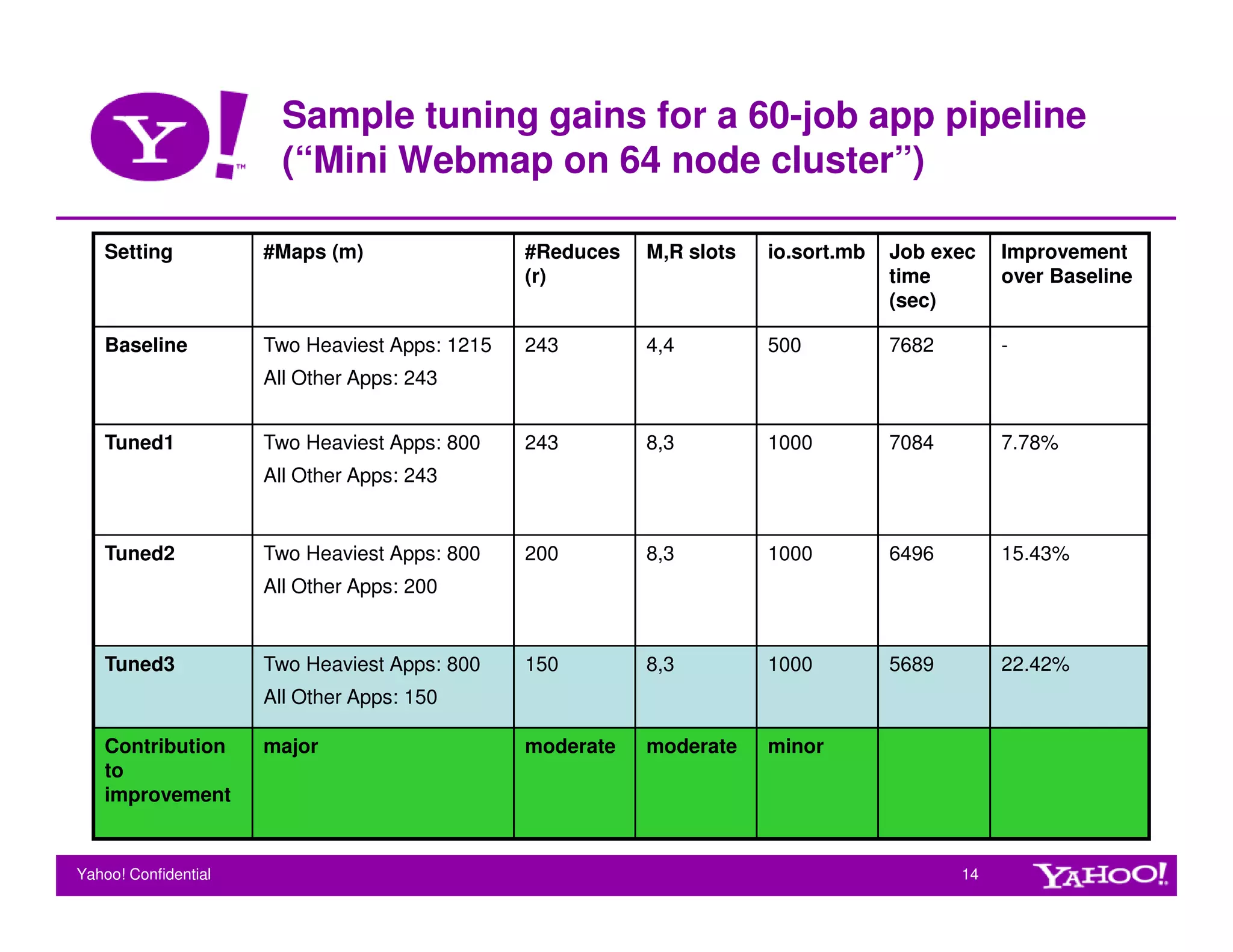

This document provides guidelines for tuning Hadoop performance. It discusses the key factors that influence Hadoop performance, such as hardware configuration, application logic, and system bottlenecks. It also outlines configuration parameters that can be tuned at the cluster and job level to optimize CPU, memory, disk throughput, and task granularity. Sample tuning gains are shown for a webmap application, where tuning multiple parameters together improved job execution time by up to 22%.