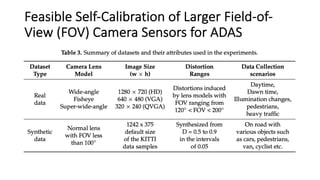

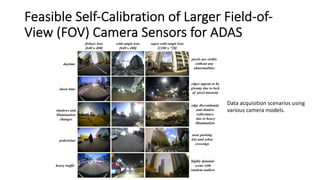

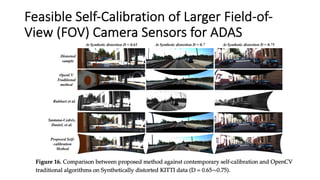

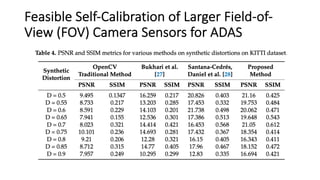

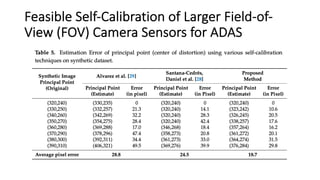

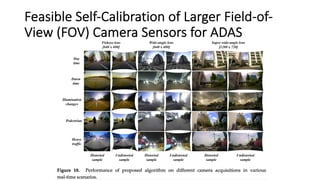

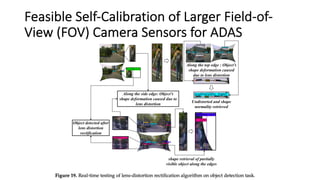

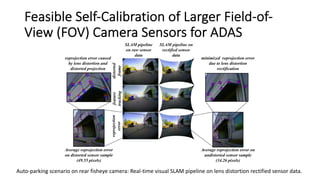

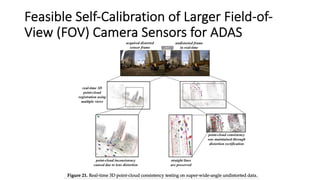

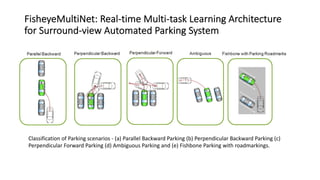

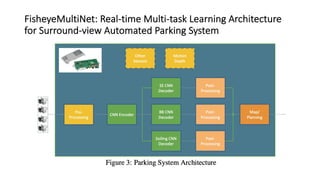

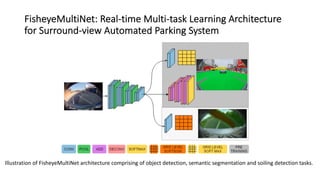

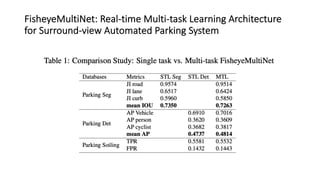

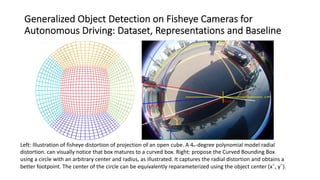

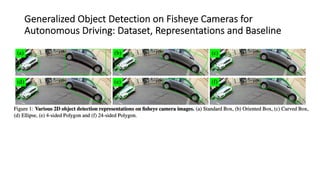

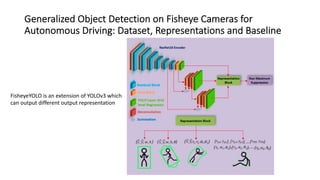

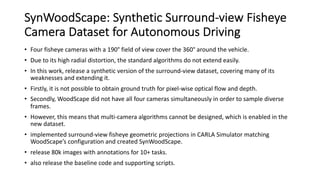

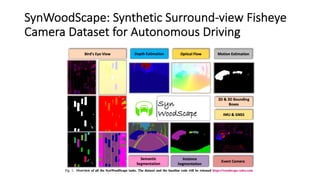

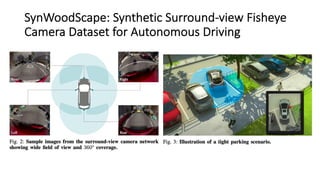

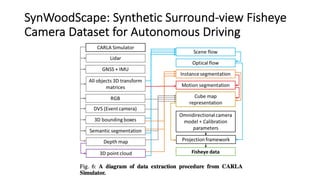

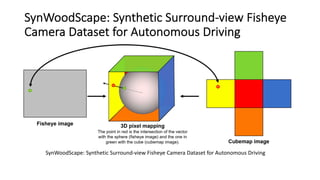

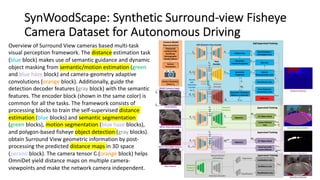

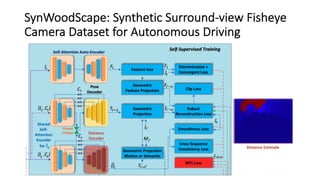

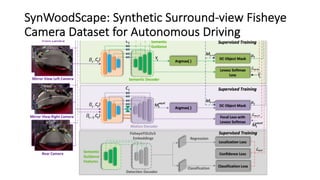

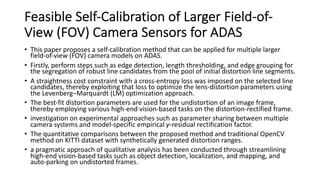

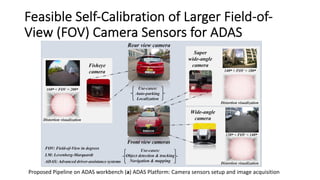

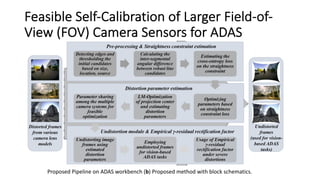

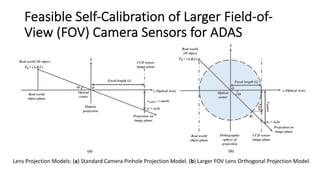

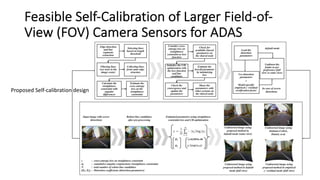

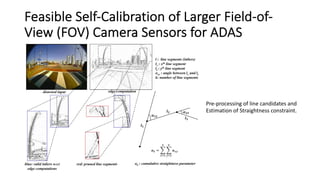

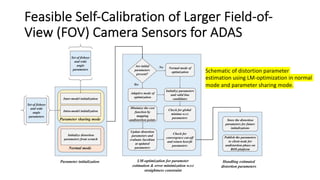

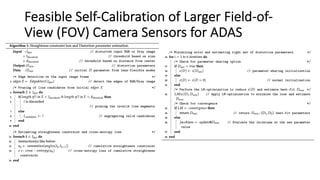

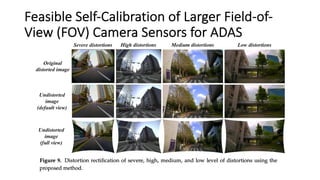

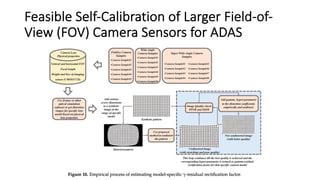

The document discusses the development of FisheyeMultiNet, a multi-task learning architecture for surround-view automated parking systems built on fisheye cameras, whose tasks include object detection and semantic segmentation. It also introduces novel object detection methods tailored to fisheye camera distortion, along with SynWoodScape, a new synthetic dataset of 80,000 annotated images intended to improve algorithm performance. Finally, it proposes a self-calibration method for larger field-of-view (FOV) camera sensors used in advanced driver-assistance systems (ADAS), aimed at making these cameras easier to apply in real-world scenarios.

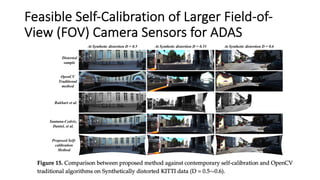

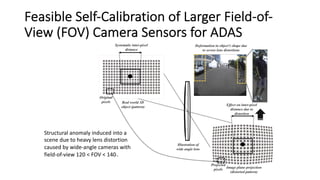

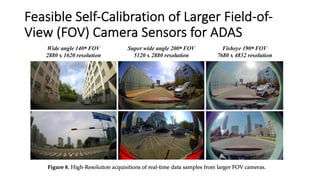

![Feasible Self-Calibration of Larger Field-of-View (FOV) Camera Sensors for ADAS. Severe distortion cases rectified using several approaches [28,29], proposed method with and without empirical γ-hyperparameter.](https://image.slidesharecdn.com/fisheyepanoram4-220517104647-fc177cb1/85/Fisheye-Omnidirectional-View-in-Autonomous-Driving-IV-37-320.jpg)