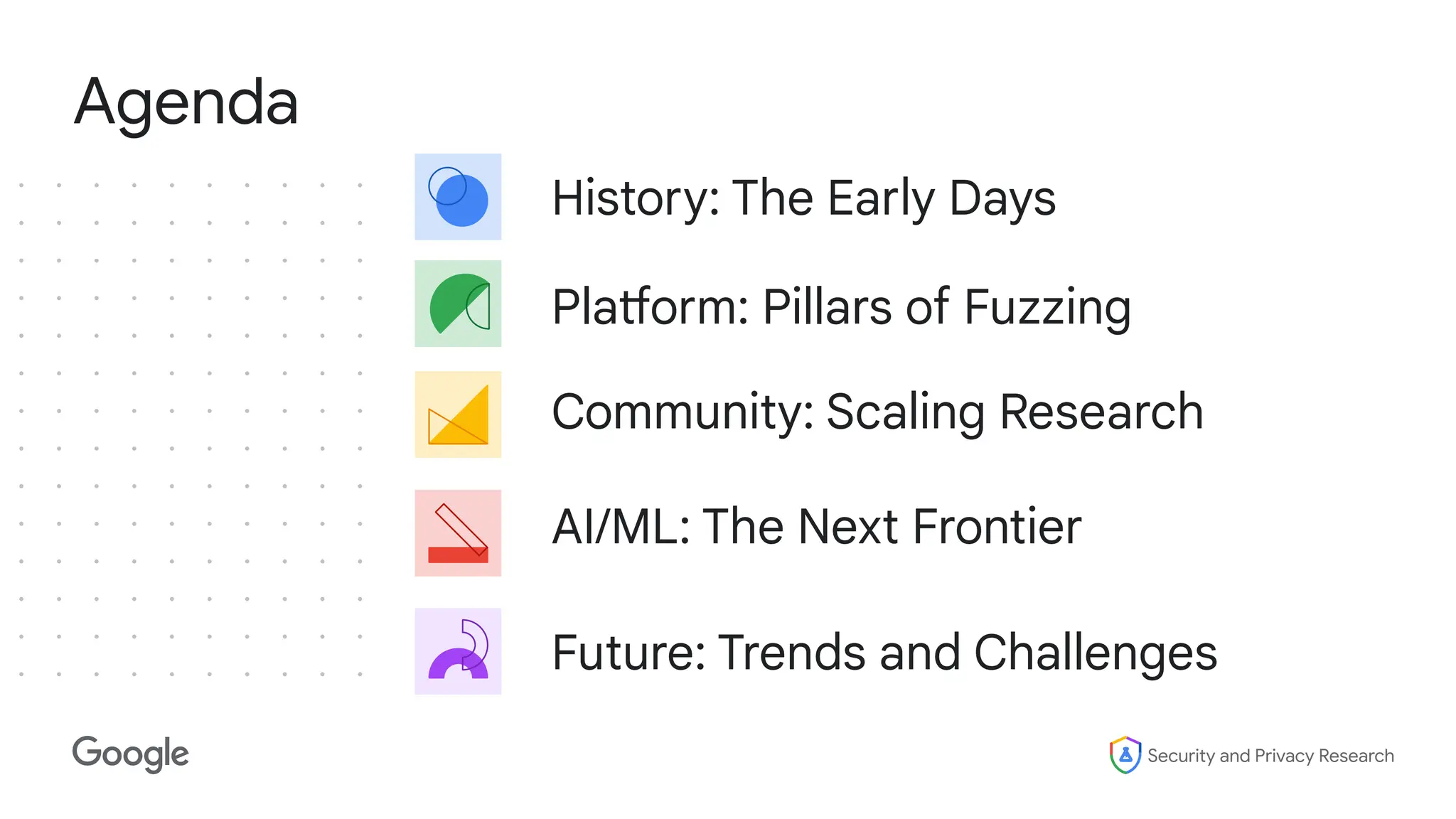

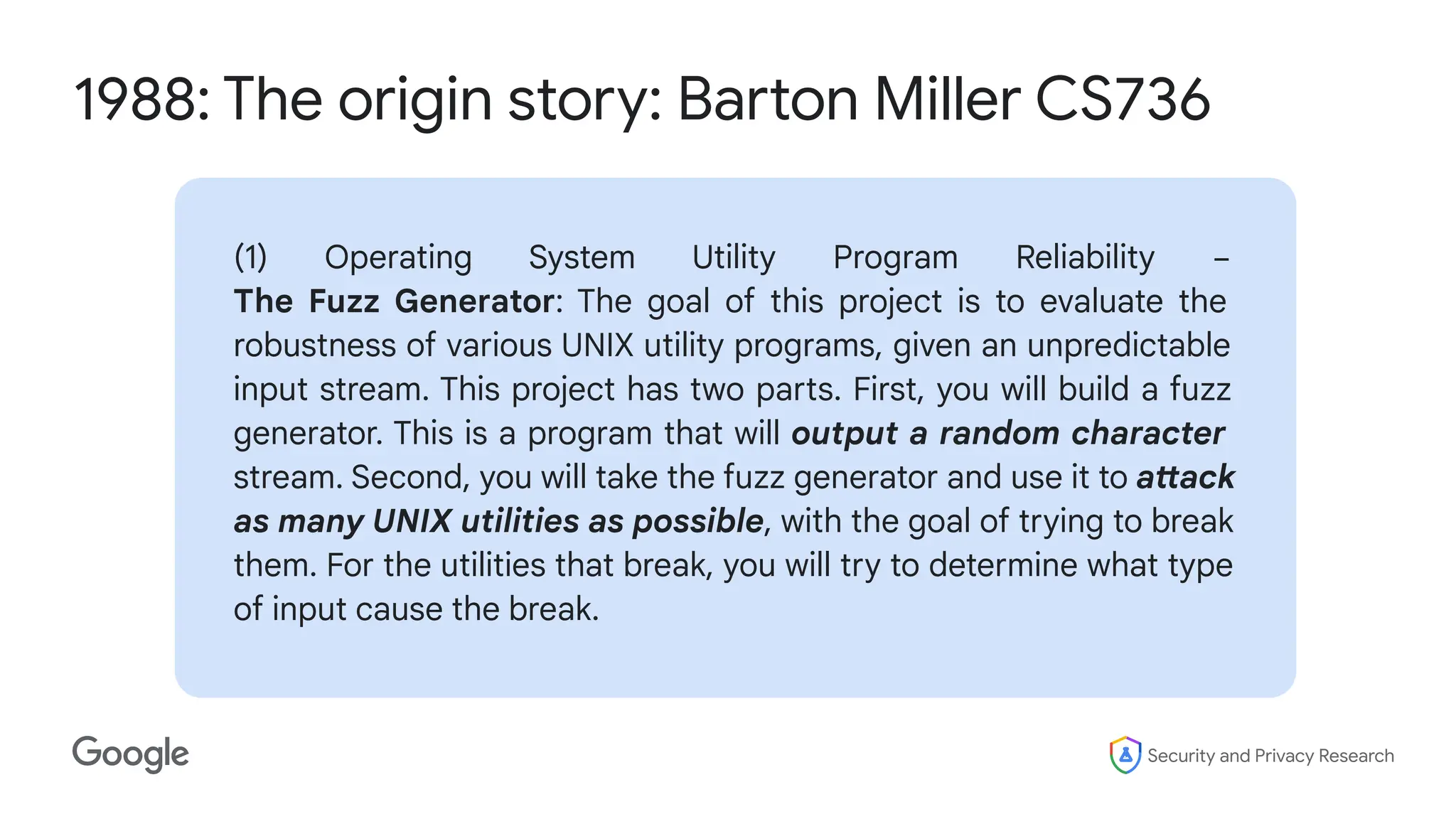

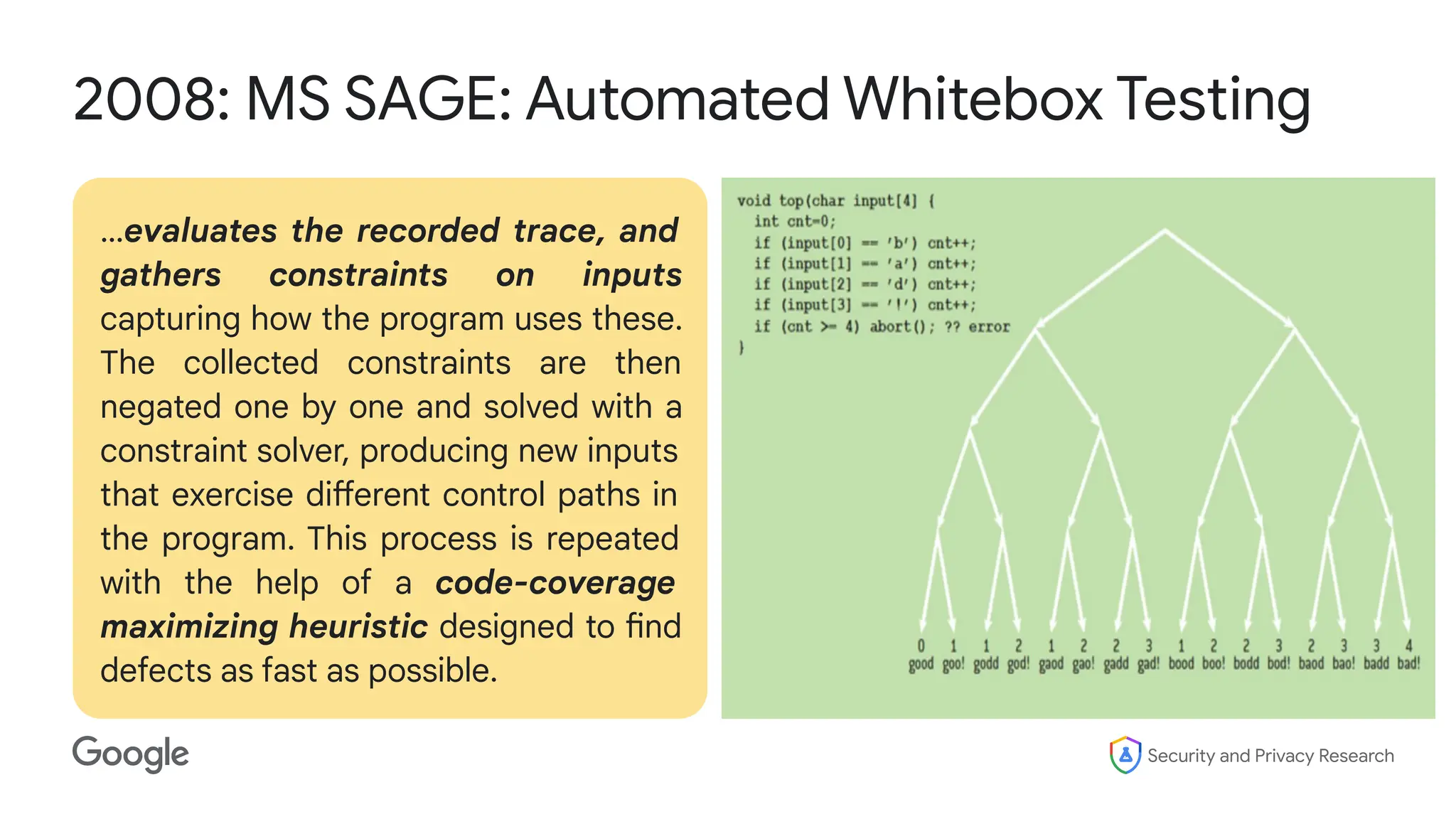

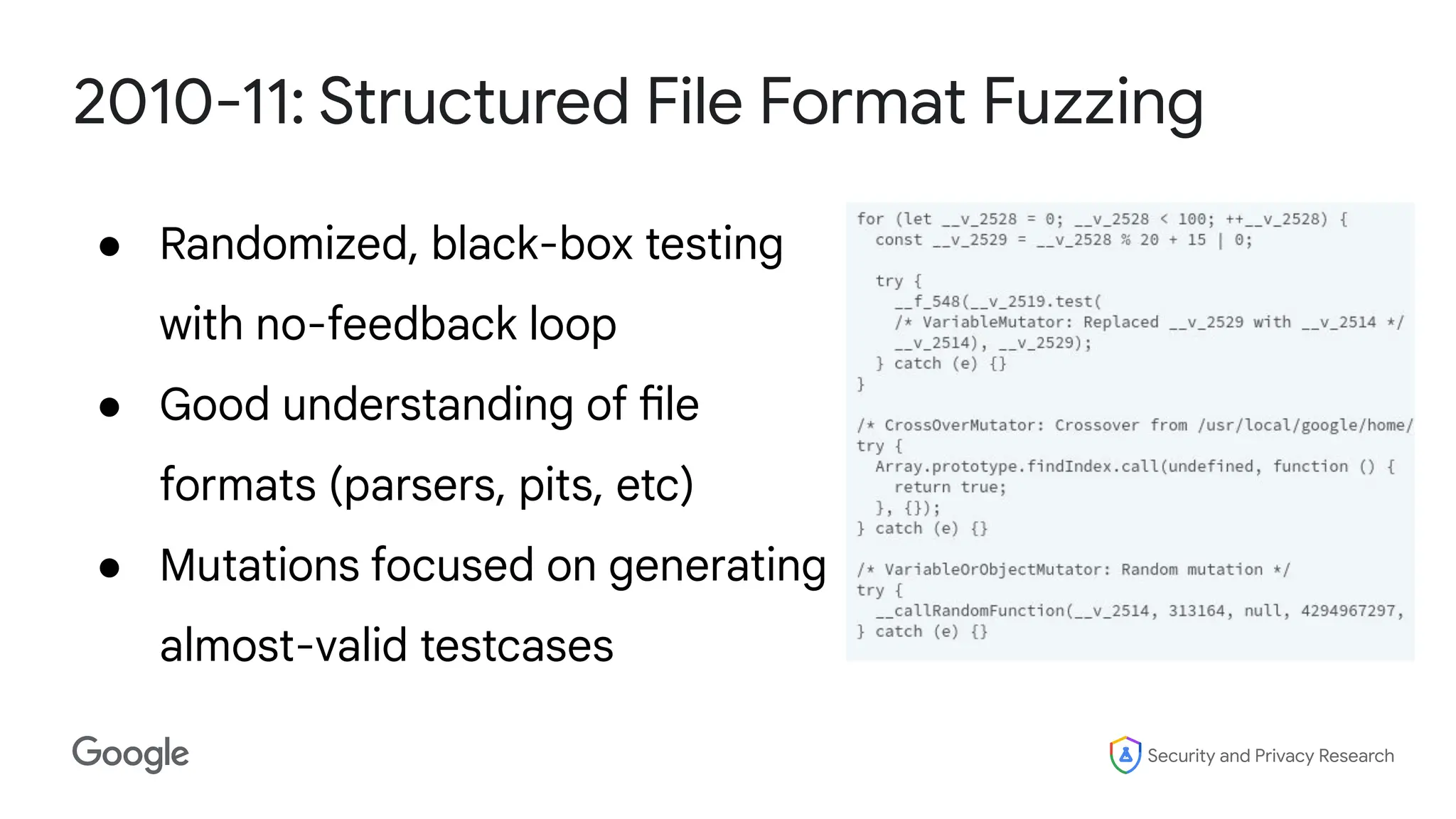

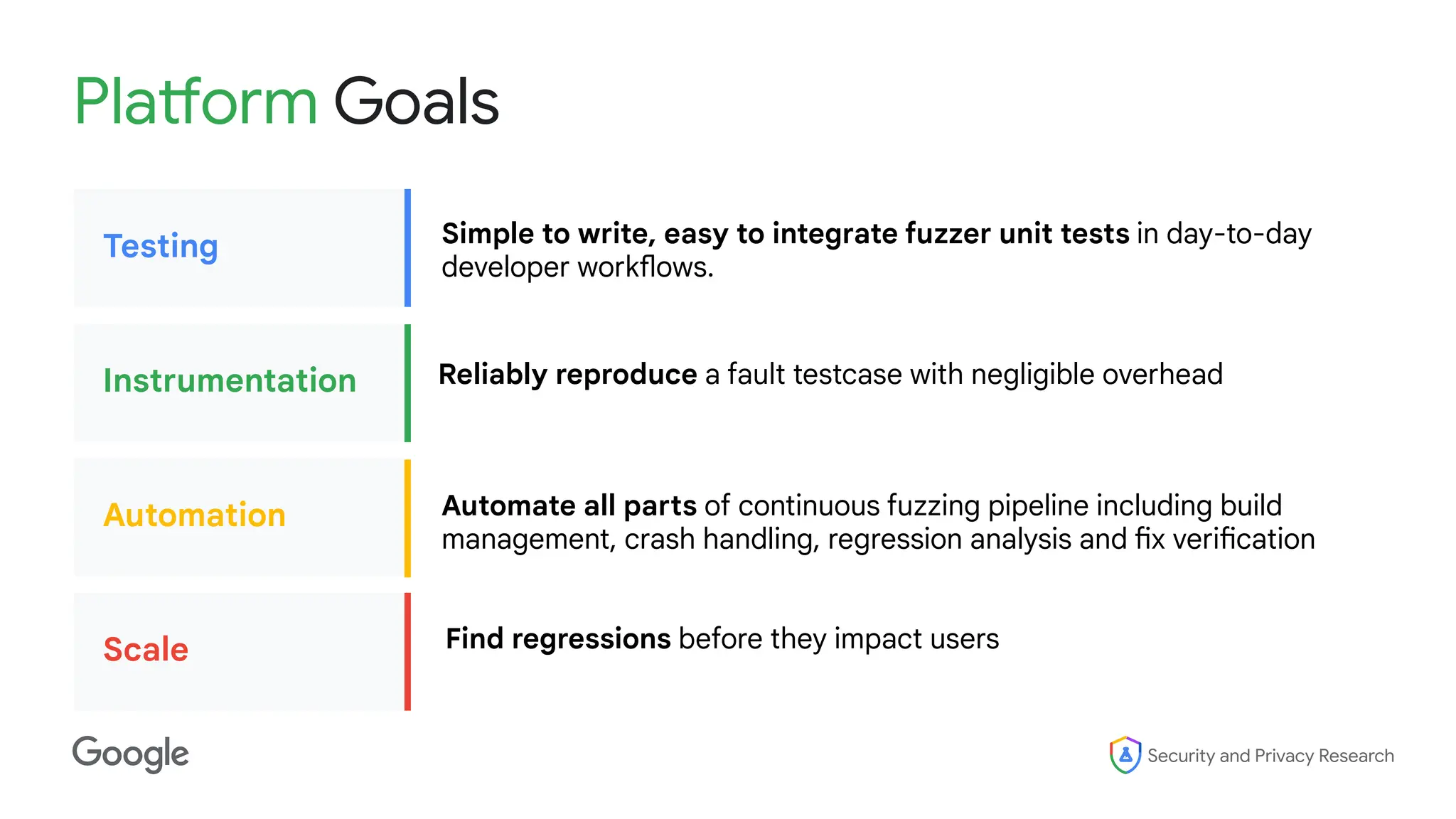

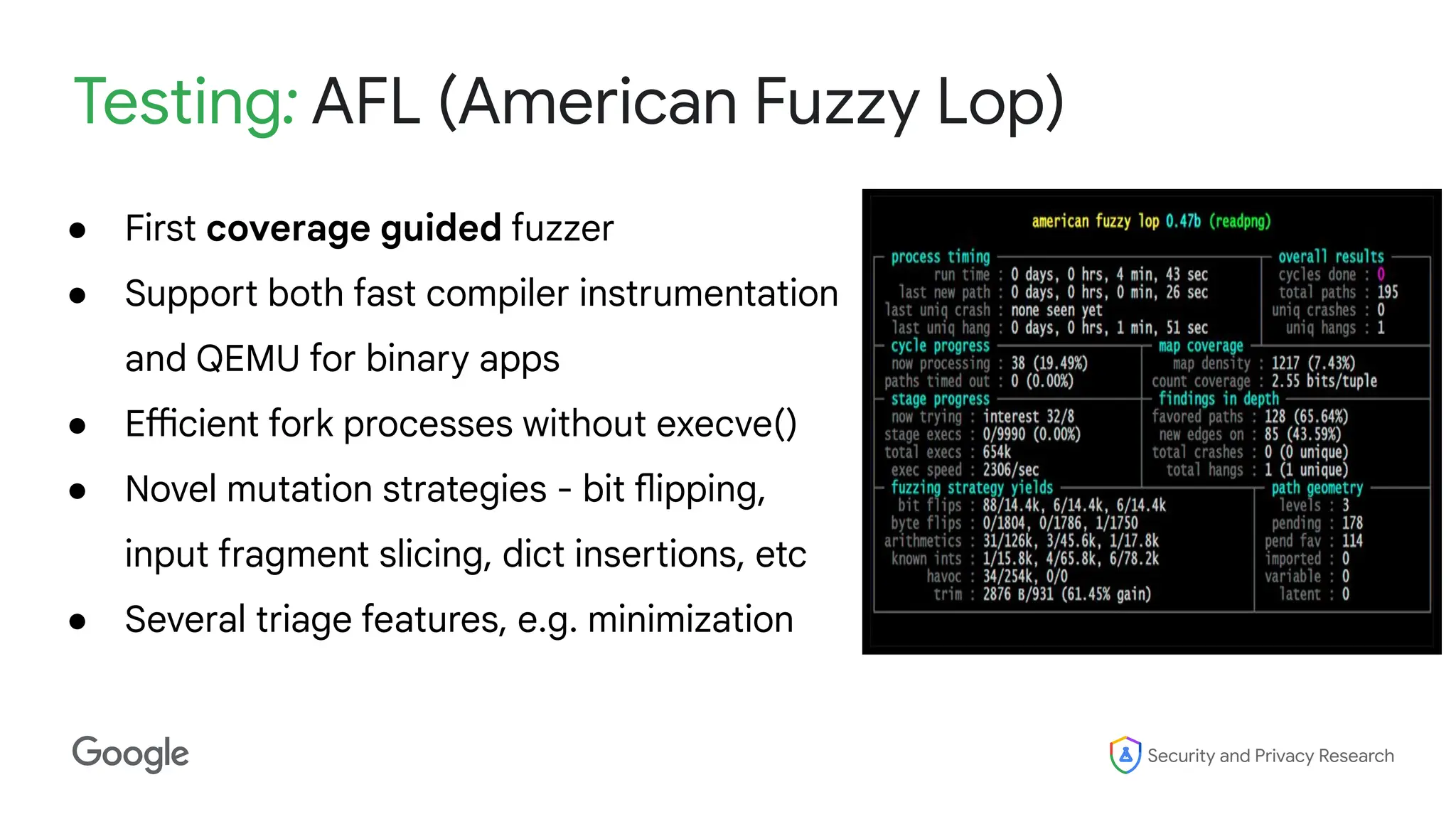

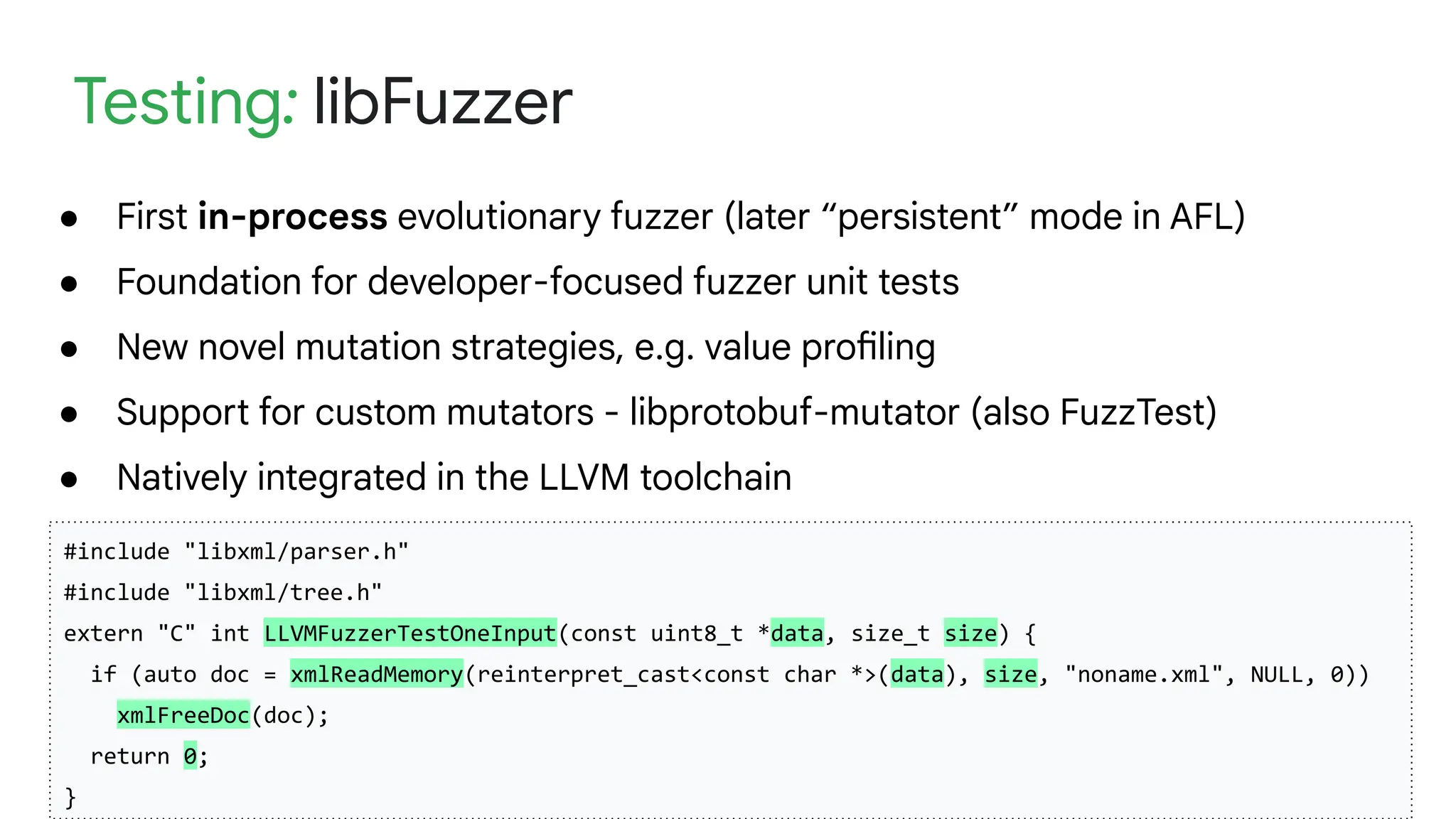

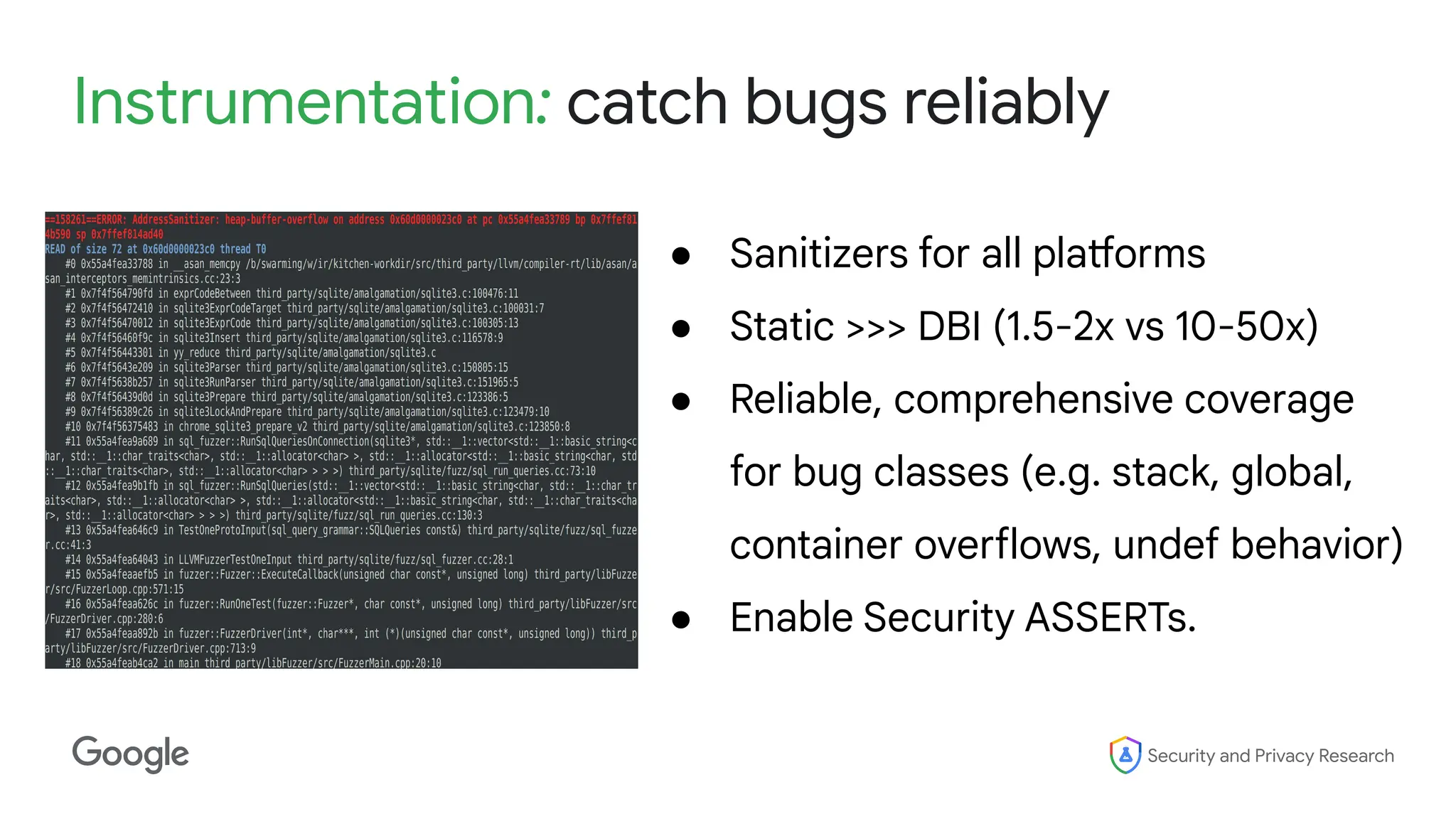

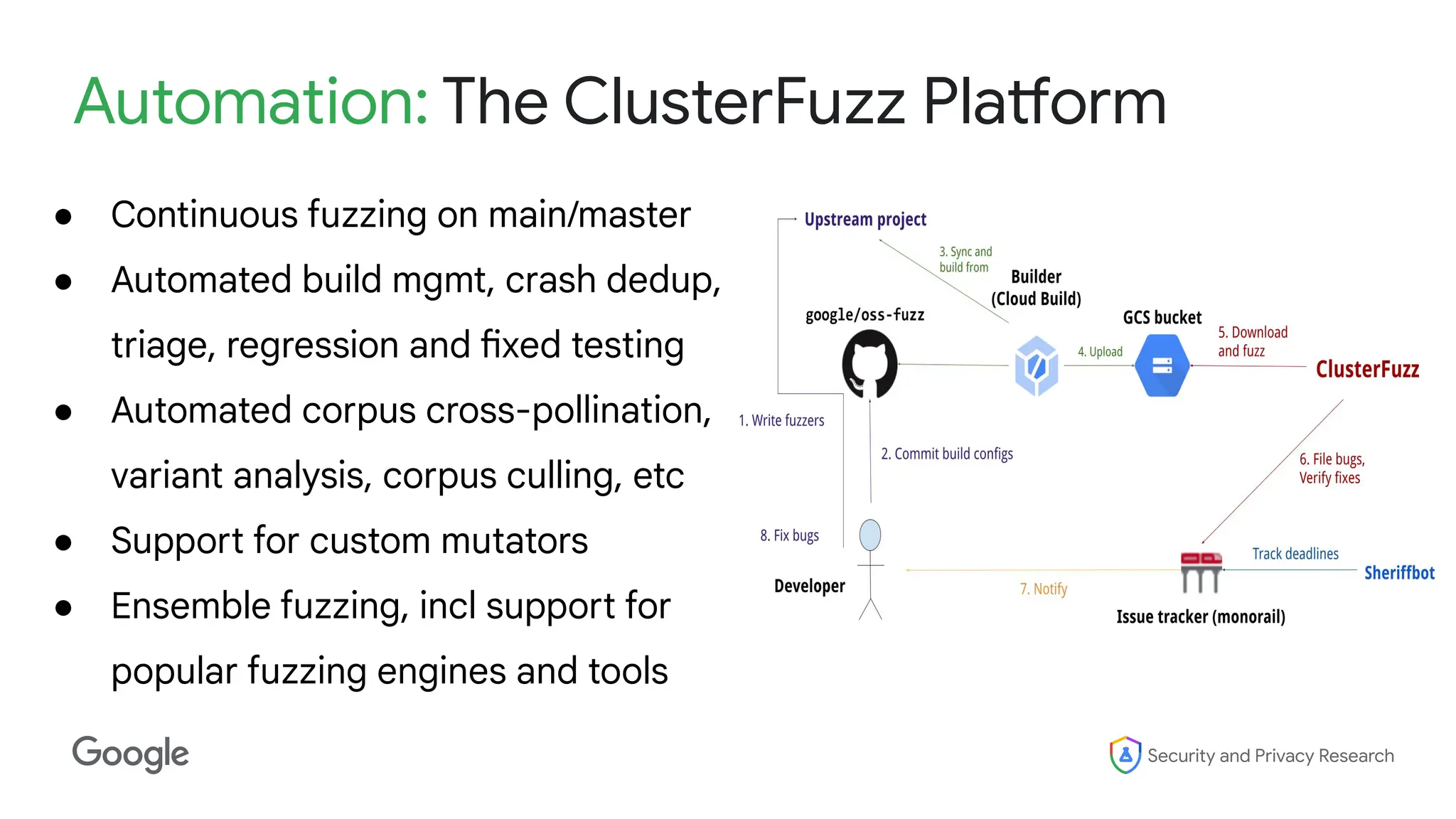

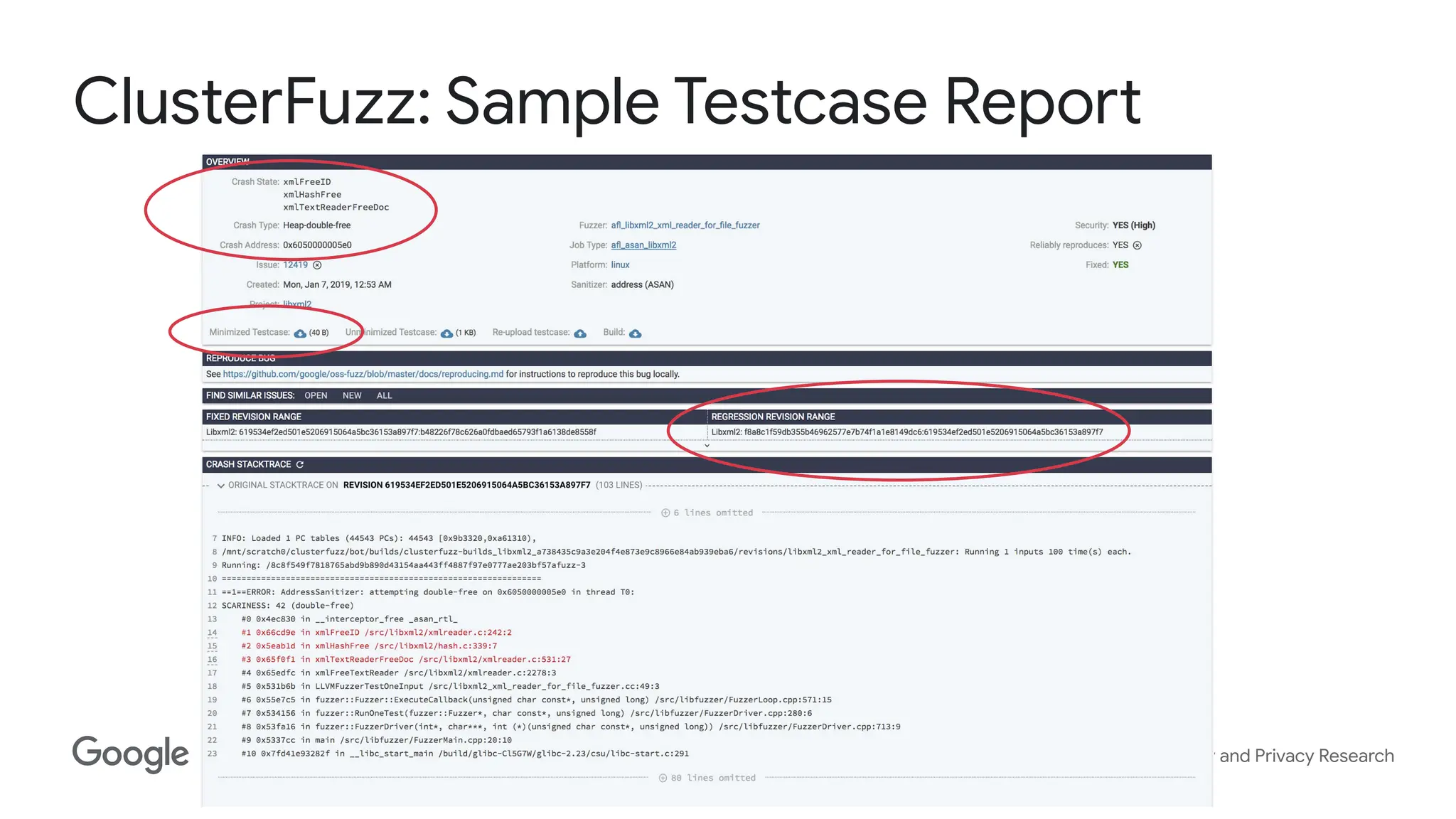

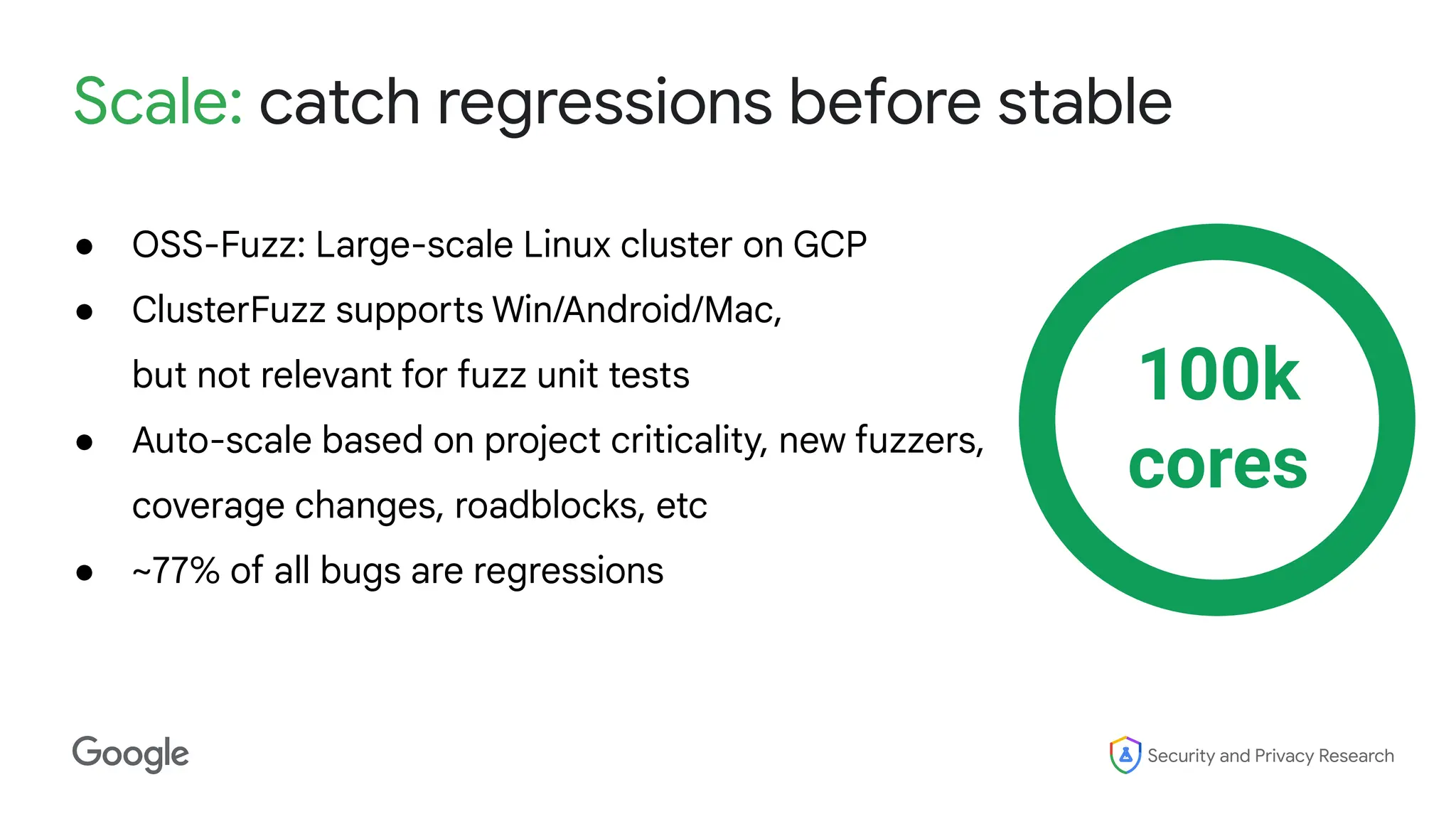

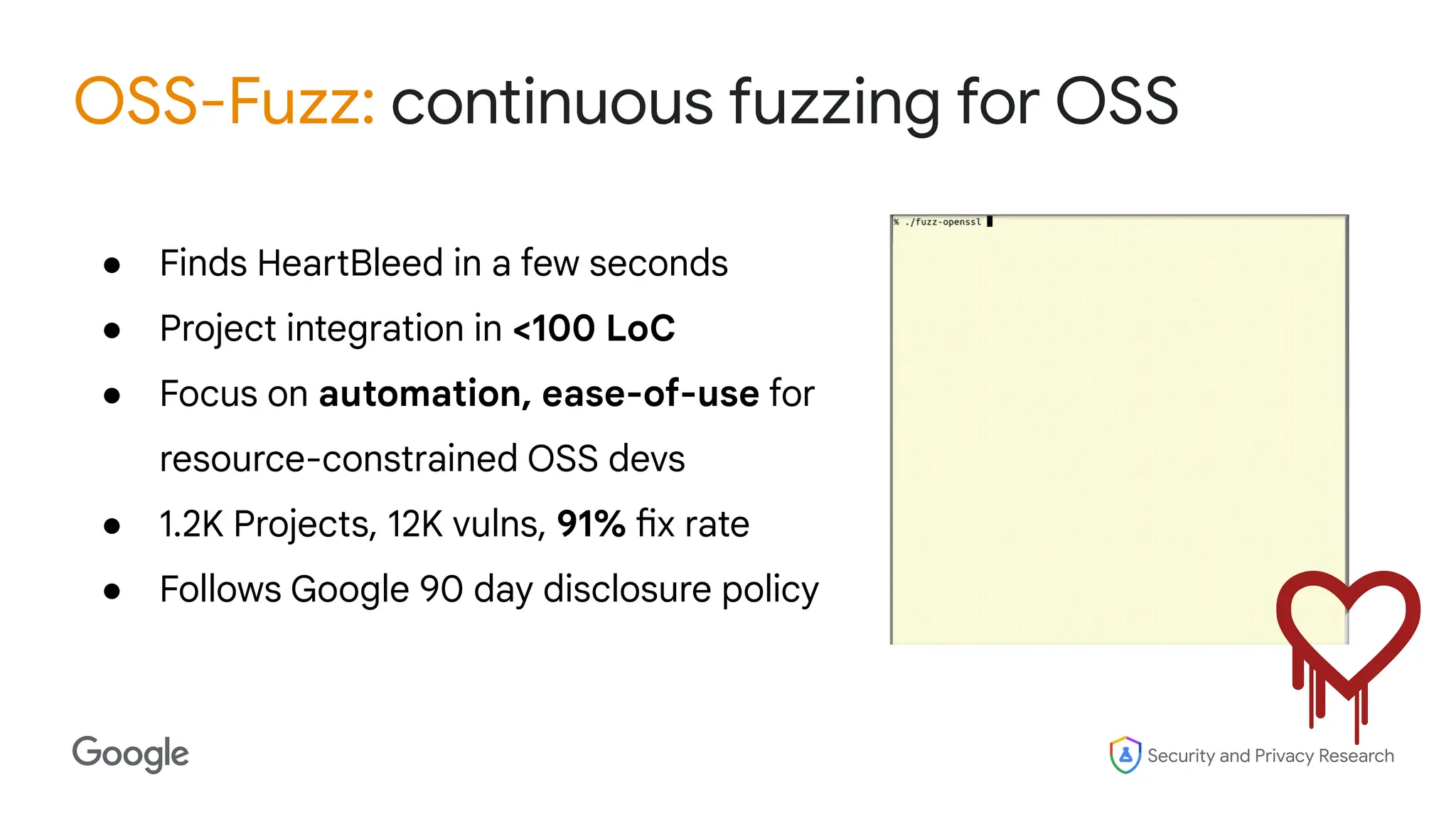

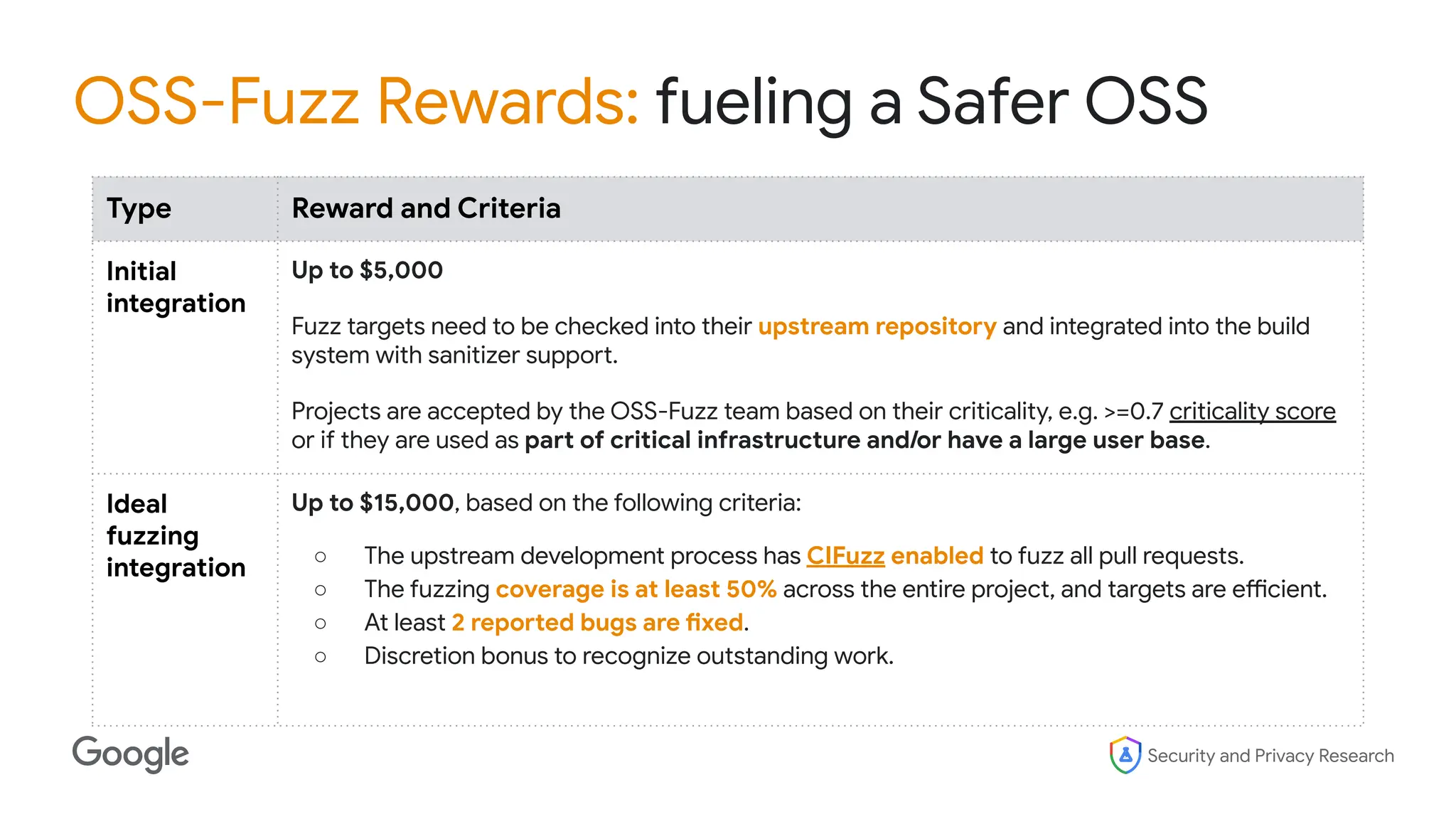

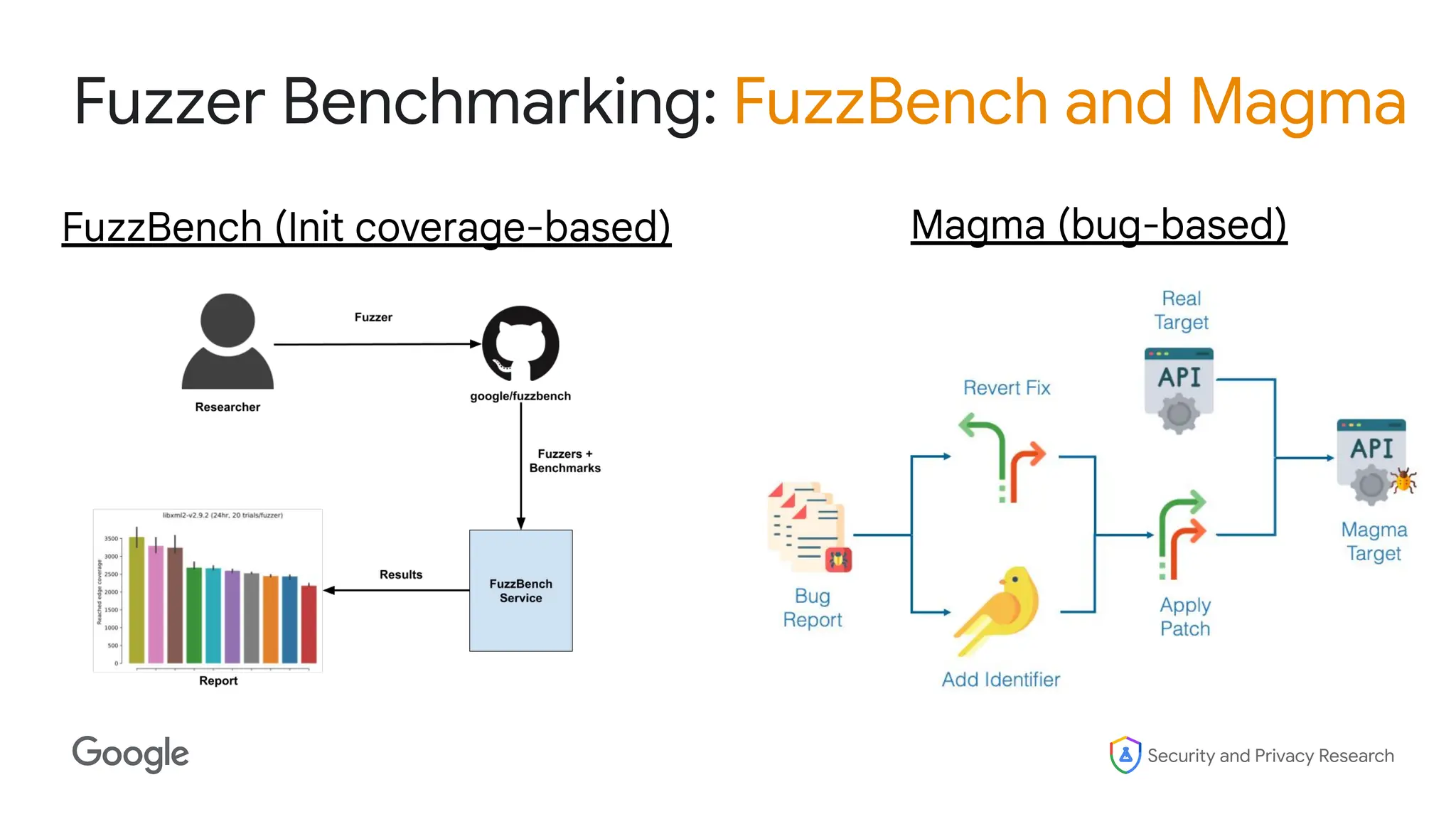

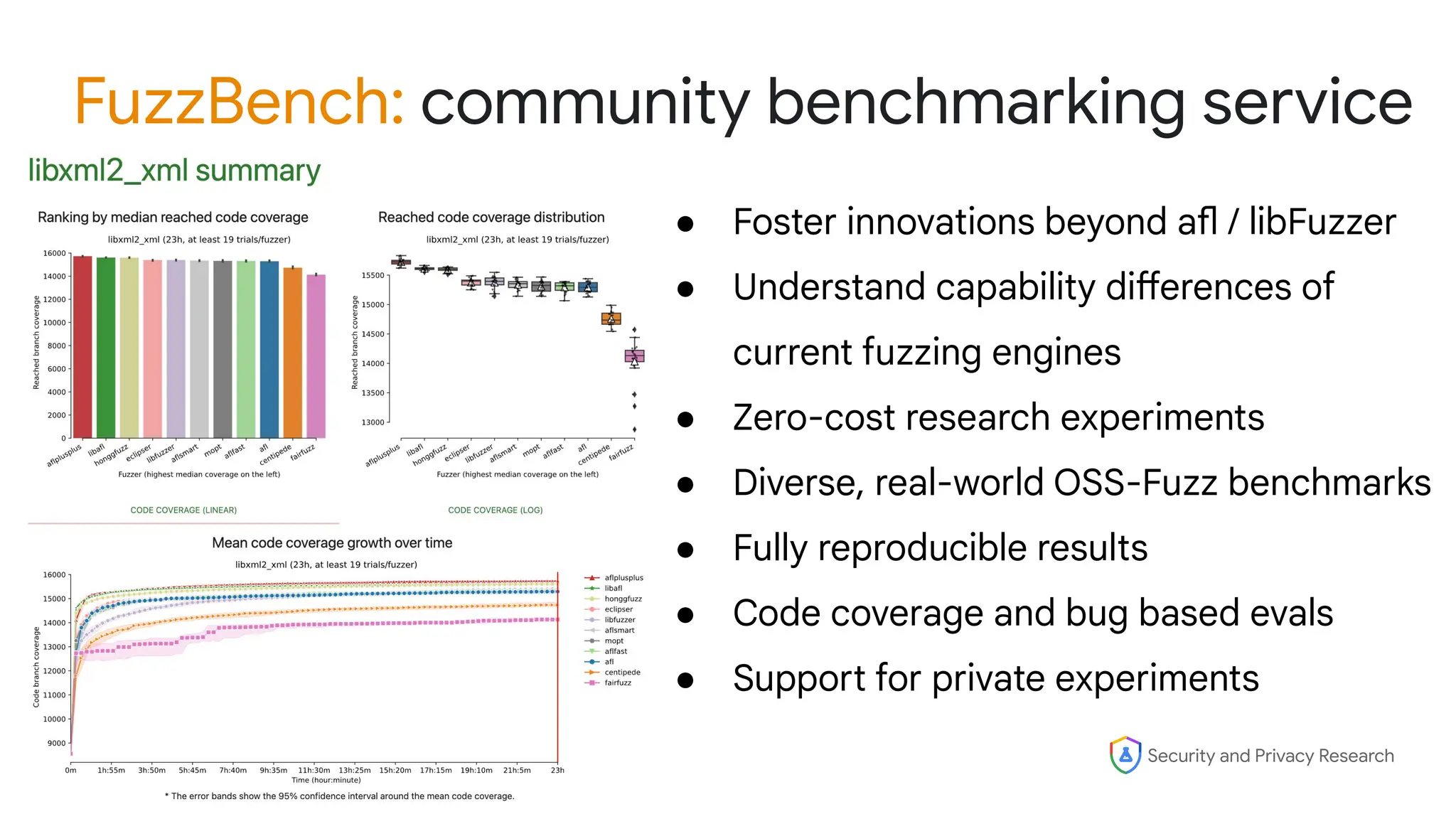

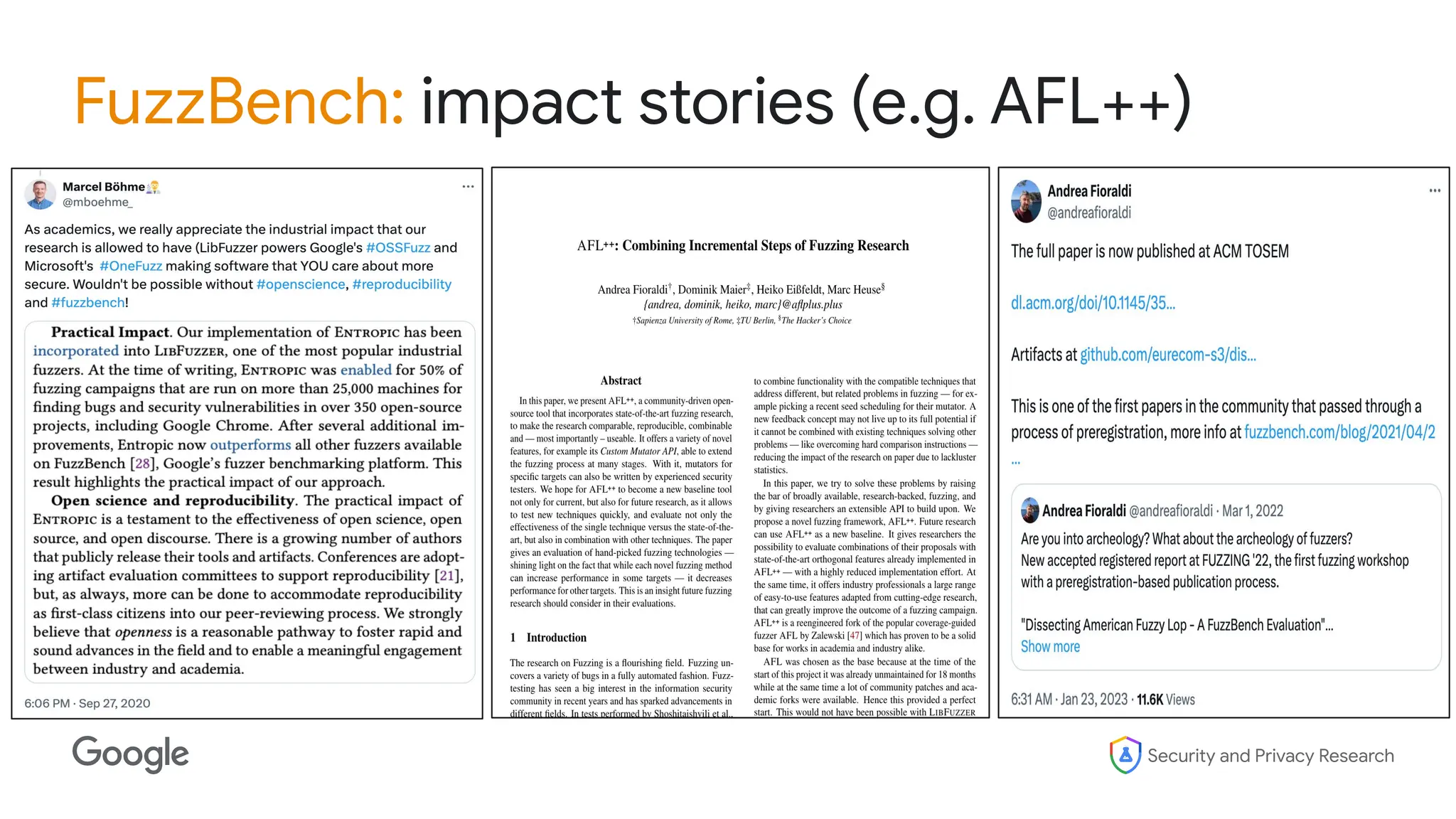

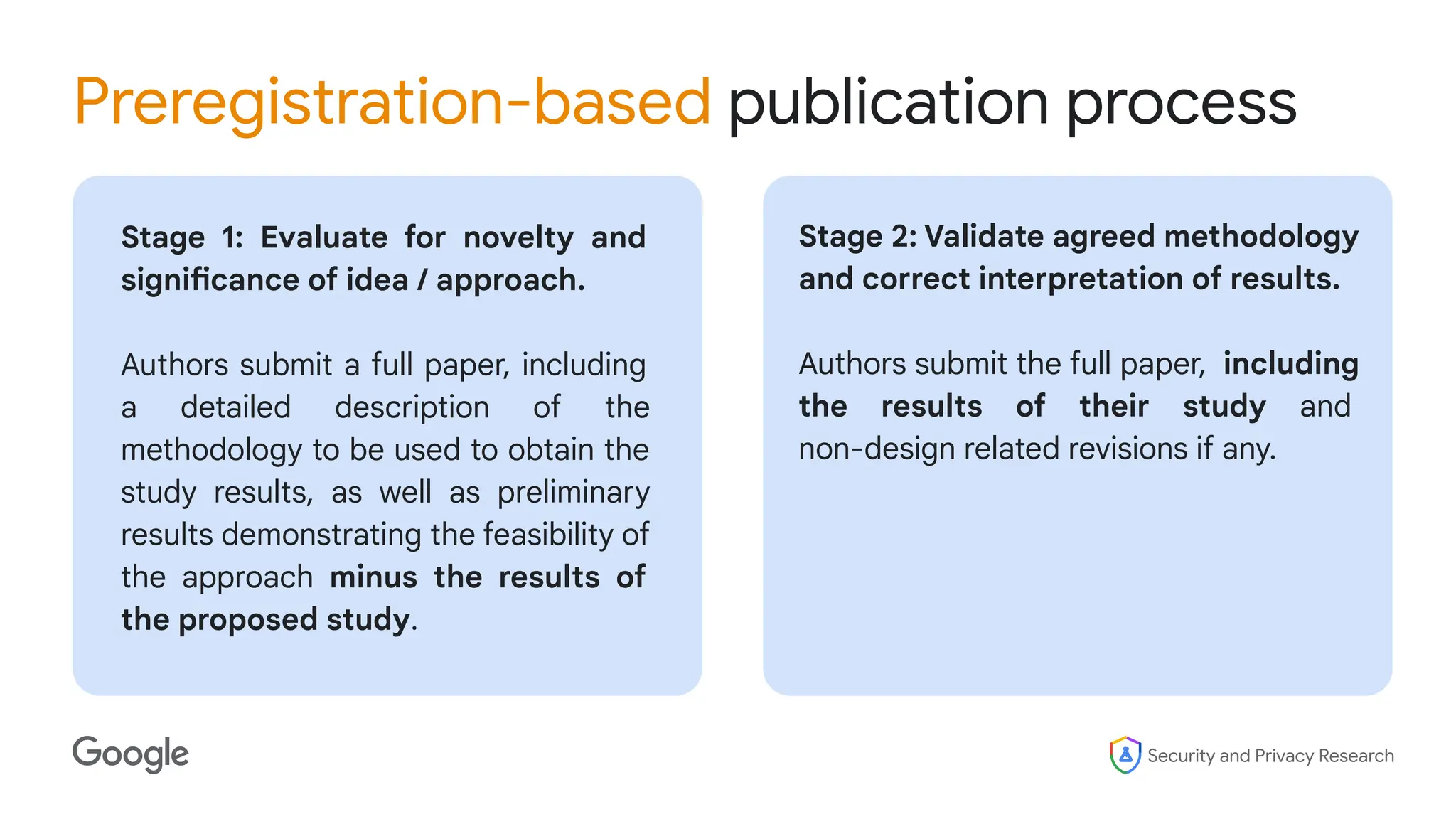

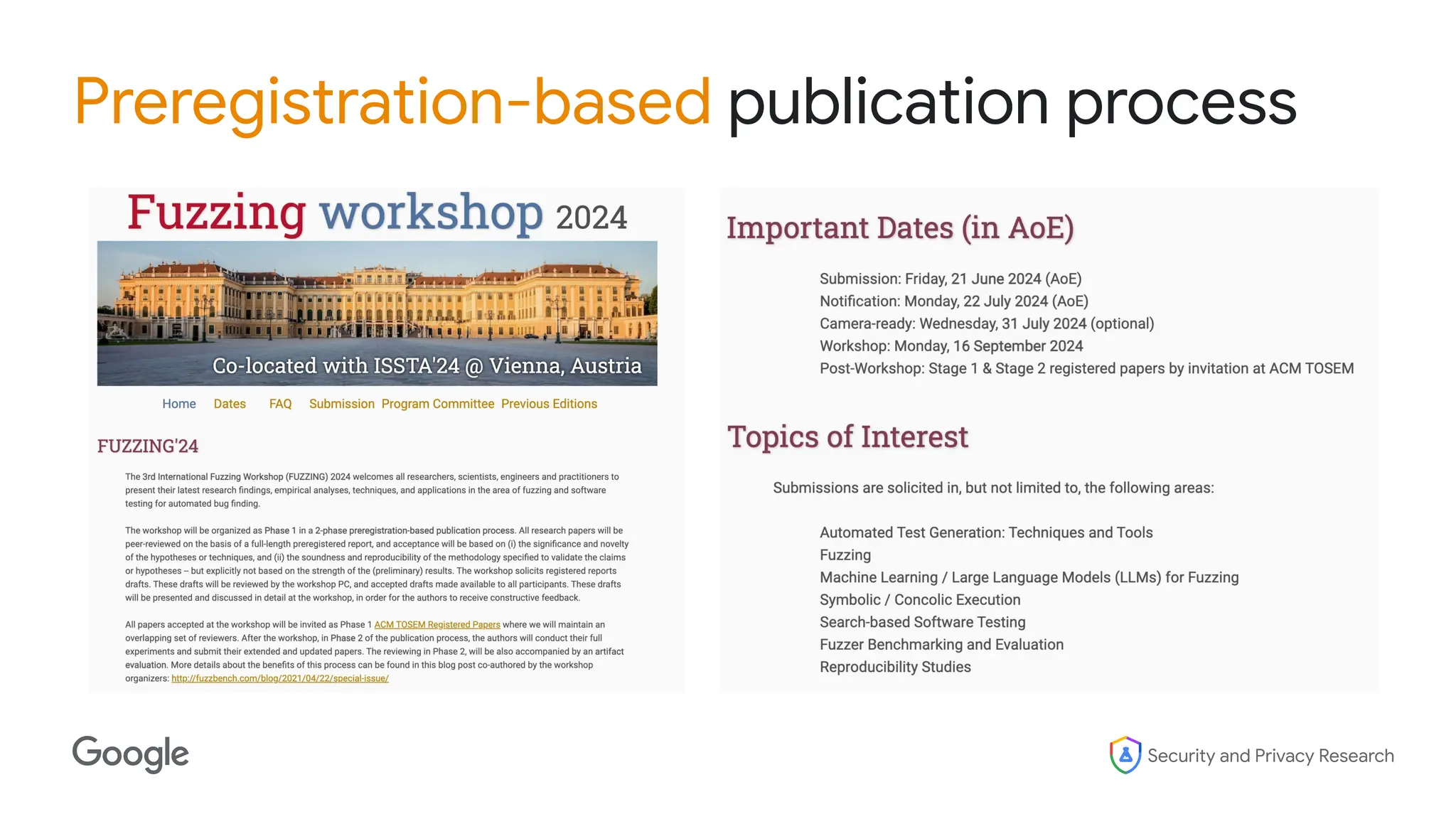

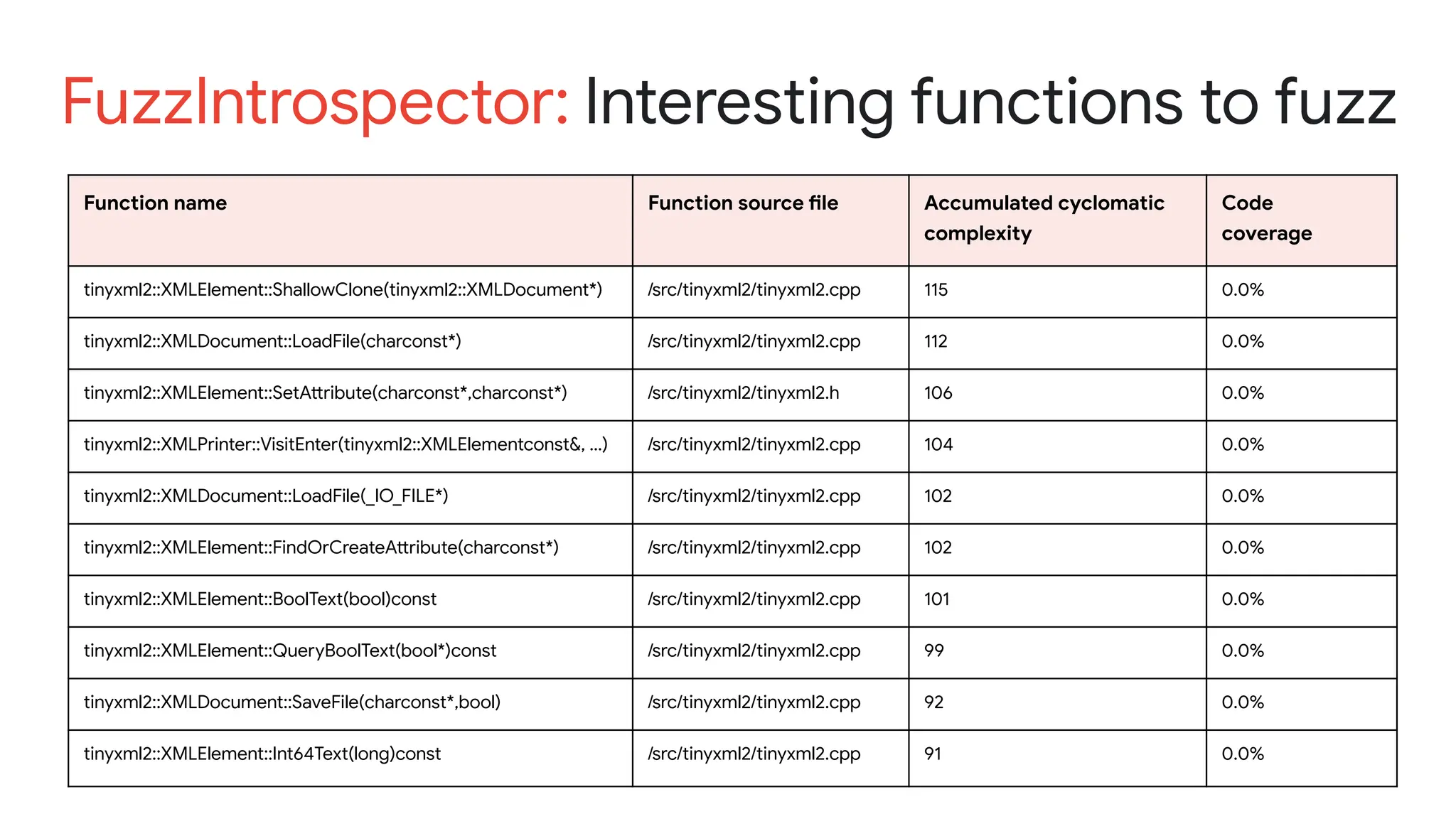

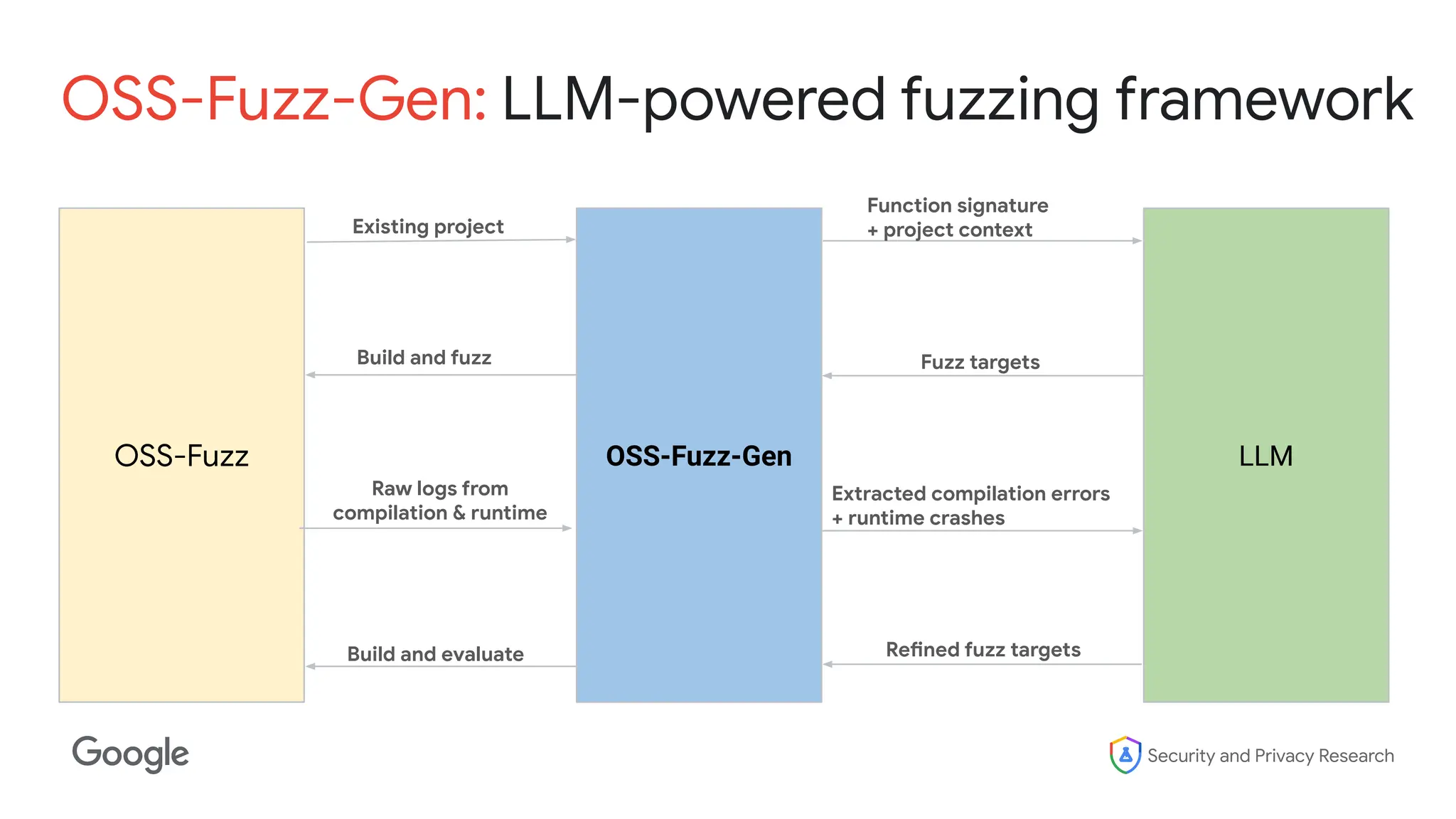

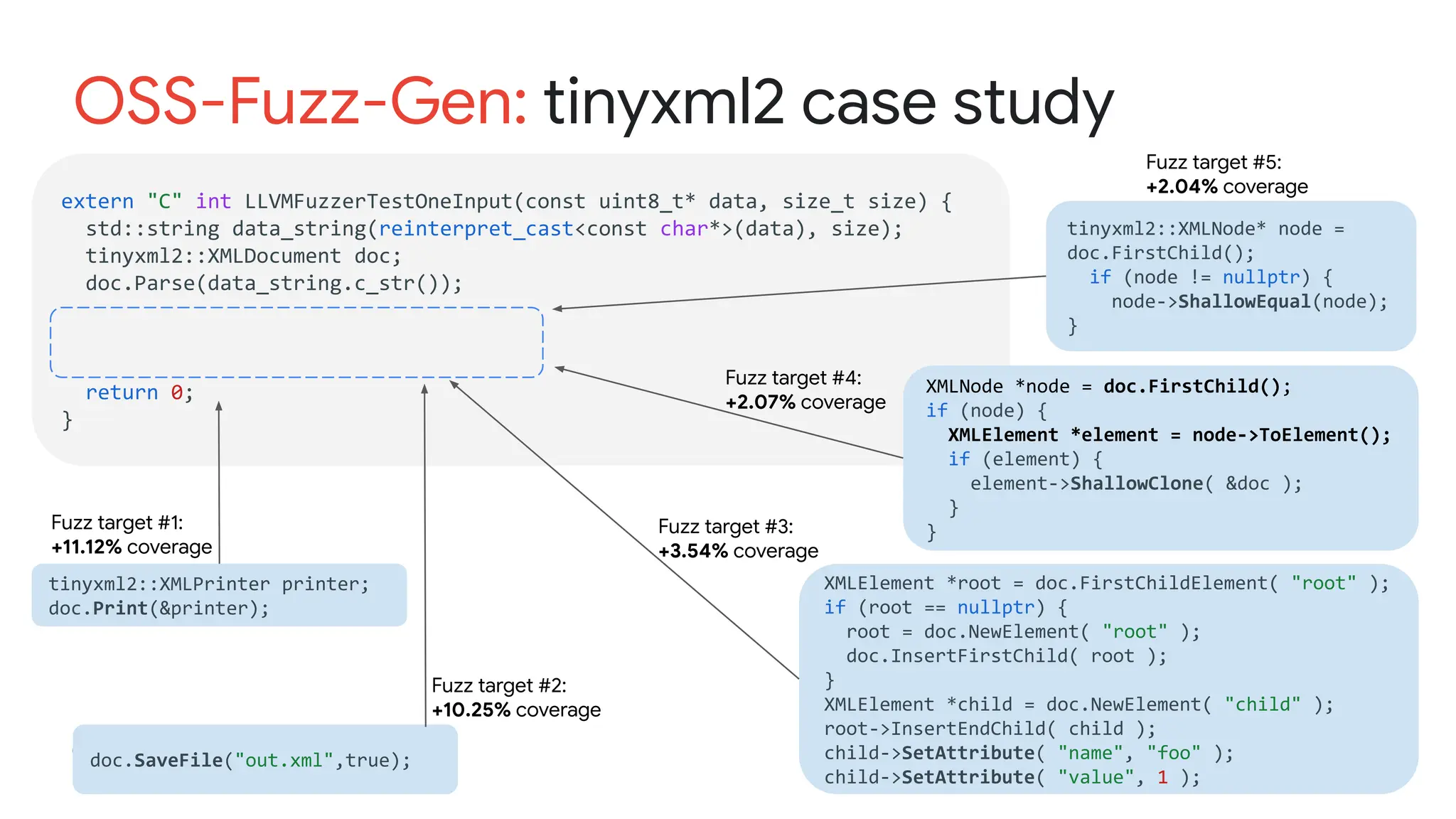

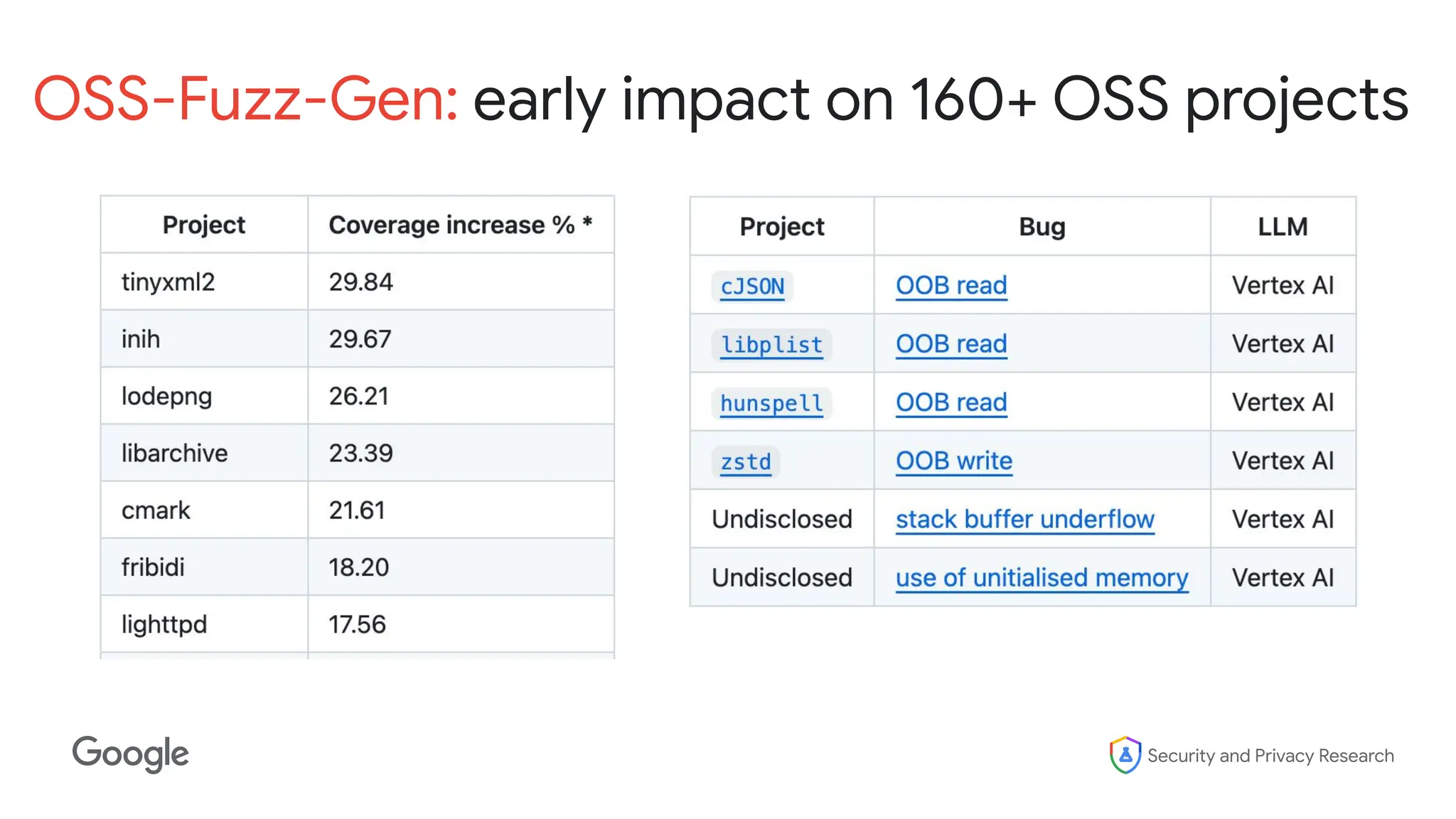

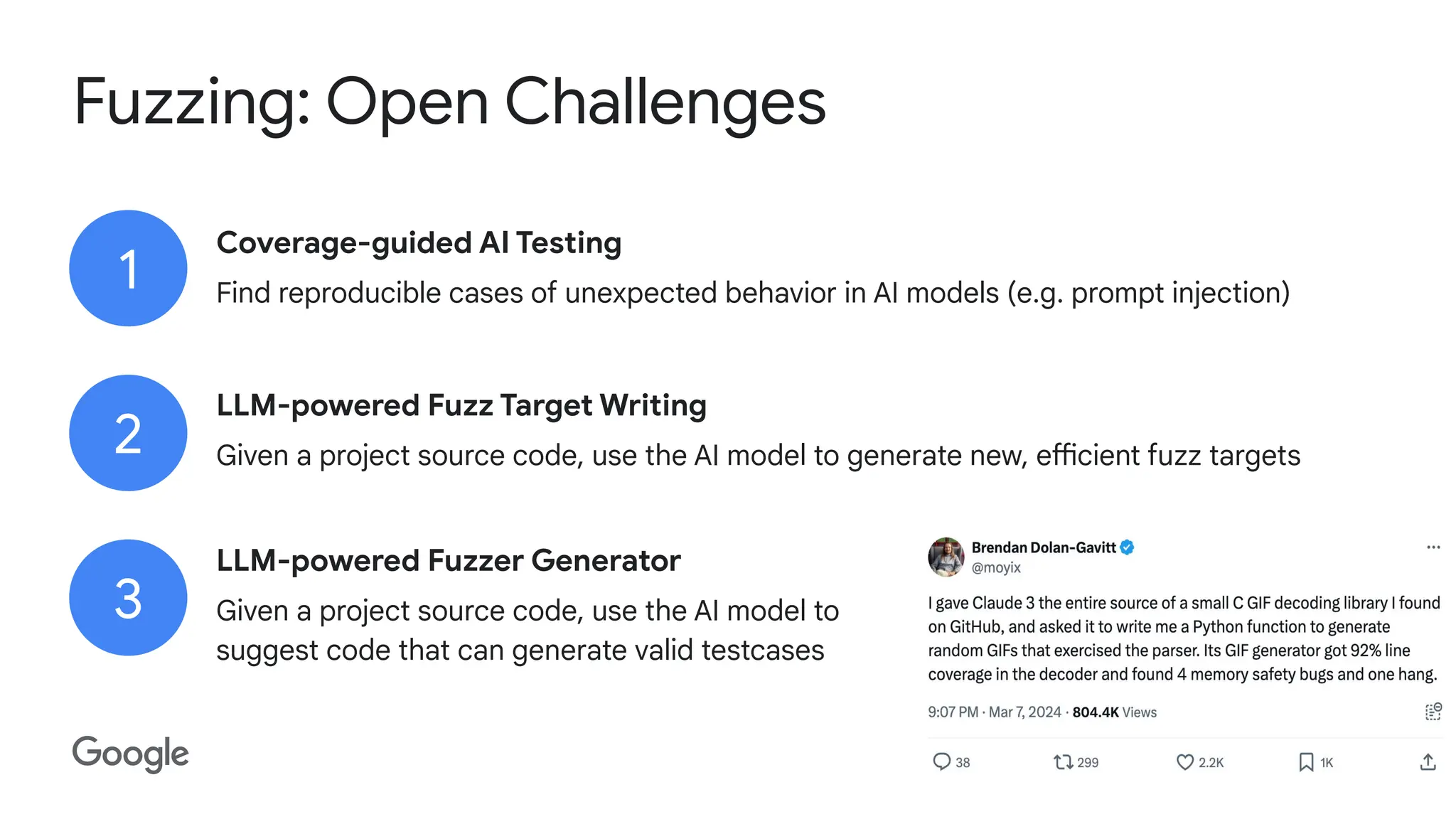

This document traces the evolution of fuzzing, an automated bug-finding technique that feeds a program unexpected inputs to expose defects and improve software security. It covers historical milestones in fuzzing research, the development of tools such as AFL and ClusterFuzz, and the shift toward AI-powered fuzzing methods that aim to improve coverage and effectiveness. It also outlines open challenges and future directions for fuzzing research, emphasizing collaboration and community-driven efforts.
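
To make the core idea concrete, here is a minimal sketch of a mutation-based fuzzer. It is an illustration only and does not reflect how AFL or ClusterFuzz are implemented: the target `parse_record`, its planted bug, and the helper names `mutate` and `fuzz` are all hypothetical, and the loop does no coverage tracking.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical target: a toy parser with a planted bug."""
    if len(data) < 2:
        raise ValueError("too short")  # graceful rejection of bad input
    length = data[0]
    payload = data[1:]
    # Planted bug: the length byte is trusted without a bounds check.
    return payload[length - 1] if length else 0


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite, insert, or delete one random byte of the seed input."""
    buf = bytearray(seed)
    choice = rng.randrange(3)
    pos = rng.randrange(len(buf)) if buf else 0
    if choice == 0 and buf:
        buf[pos] = rng.randrange(256)        # overwrite a byte
    elif choice == 1:
        buf.insert(pos, rng.randrange(256))  # insert a byte
    elif buf:
        del buf[pos]                         # delete a byte
    return bytes(buf)


def fuzz(target, seed: bytes, iterations: int = 1000, rng_seed: int = 0):
    """Run the target on mutated inputs and collect unexpected exceptions."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            target(candidate)
        except ValueError:
            pass  # the target rejected the input cleanly; not a bug
        except Exception as exc:
            crashes.append((candidate, type(exc).__name__))
    return crashes


if __name__ == "__main__":
    found = fuzz(parse_record, b"\x03abc")
    print(f"{len(found)} crashing inputs found")
```

Each iteration mutates the original seed rather than building on earlier mutations, which keeps the sketch short. Practical fuzzers typically keep a corpus of interesting inputs and use feedback such as code coverage to decide which ones to mutate next.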