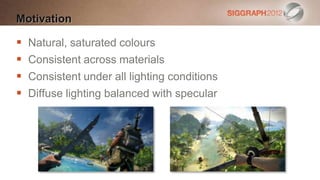

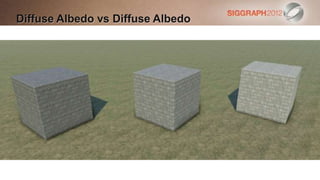

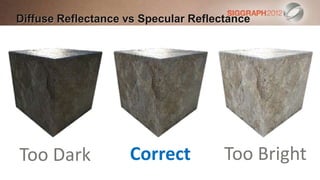

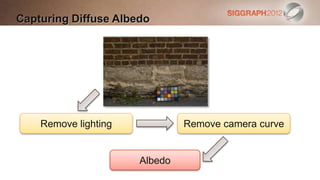

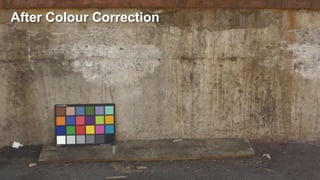

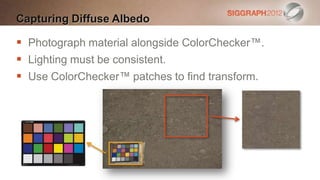

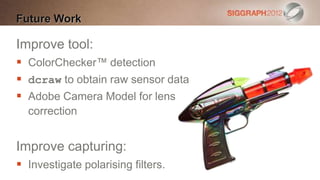

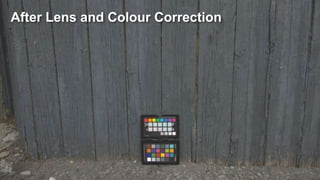

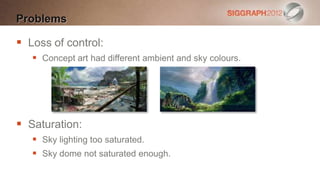

This presentation details how lighting and materials were calibrated for 'Far Cry 3', focusing on the accurate capture of diffuse albedo and on modeling sky color with the CIE sky model. It covers using the Macbeth ColorChecker as a color reference, applying polynomial and affine transforms, and the difficulty of keeping lighting and color saturation consistent across different conditions. It emphasizes that proper calibration is essential for physically based rendering and that good tools are needed to ensure quality results.

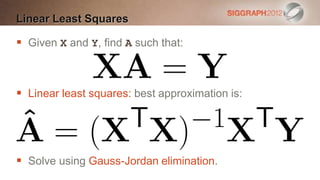

![Affine Transform [Malin11]

Pro: removes cross-talk between channels

Con: linear transform](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-16-320.jpg)
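As a rough illustration of the pro and con listed on this slide, an affine colour transform can be written as a 3×3 matrix plus an offset. The off-diagonal matrix terms are what allow it to remove cross-talk between channels, while the output stays linear in the input. The function name, matrix, and offset below are hypothetical values chosen for illustration; they are not taken from the talk.

```python
def apply_affine(rgb, matrix, offset):
    """Return matrix * rgb + offset for a 3-component colour."""
    return tuple(
        sum(matrix[row][col] * rgb[col] for col in range(3)) + offset[row]
        for row in range(3)
    )


# Hypothetical correction: the red output subtracts 10% of the green input
# (an off-diagonal, cross-channel term) and blue is lifted by a constant.
MATRIX = (
    (1.00, -0.10, 0.00),
    (0.00, 1.00, 0.00),
    (0.00, 0.00, 1.00),
)
OFFSET = (0.0, 0.0, 0.05)

corrected = apply_affine((0.5, 0.4, 0.3), MATRIX, OFFSET)
```

Because every output channel is a weighted sum of the inputs, this form cannot bend the tone curve of a channel, which is the limitation the slide notes.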

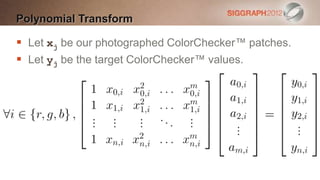

![Polynomial Transform [Malin11]

Pro: accurately adjusts levels

Con: channel independent](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-17-320.jpg)
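A minimal sketch of a per-channel polynomial transform follows. Each channel has its own curve, so levels can be adjusted non-linearly, but no channel can influence another. The coefficients and function names are hypothetical and only serve to show the shape of the computation.

```python
def eval_polynomial(coeffs, x):
    """Evaluate c0 + c1*x + c2*x^2 + ... using Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result


def apply_polynomial(rgb, per_channel_coeffs):
    """Apply an independent polynomial to each colour channel."""
    return tuple(
        eval_polynomial(coeffs, value)
        for coeffs, value in zip(per_channel_coeffs, rgb)
    )


# Hypothetical coefficients, lowest order first.
COEFFS = (
    (0.0, 0.9, 0.1),    # red:   0.9*x + 0.1*x^2
    (0.0, 1.0, 0.0),    # green: identity
    (0.02, 0.98, 0.0),  # blue:  0.02 + 0.98*x
)

adjusted = apply_polynomial((0.5, 0.4, 0.3), COEFFS)
```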

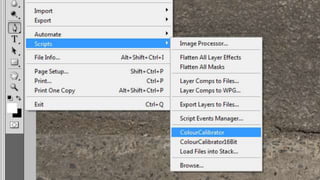

![Colour Correction Tool

Command line tool.

Launched by a Photoshop script.

Operates in xyY colour space.

Applies the following transforms [Malin11]:

Affine, Polynomial, Affine](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-21-320.jpg)
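The slide says the tool operates in xyY colour space. As a sketch of what that conversion might look like, the code below maps an RGB triple to xyY. It assumes the input is linear RGB with sRGB primaries and a D65 white point, which the talk does not state; the function name is likewise hypothetical.

```python
def rgb_to_xyY(rgb):
    """Convert linear RGB (assumed sRGB primaries, D65) to CIE xyY."""
    r, g, b = rgb
    # Linear sRGB -> CIE XYZ.
    X = 0.4124 * r + 0.3576 * g + 0.1805 * b
    Y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    Z = 0.0193 * r + 0.1192 * g + 0.9505 * b
    total = X + Y + Z
    if total == 0.0:
        # Chromaticity is undefined for black; return zeros by convention.
        return (0.0, 0.0, 0.0)
    # x and y carry chromaticity, Y carries luminance.
    return (X / total, Y / total, Y)


white = rgb_to_xyY((1.0, 1.0, 1.0))
```

Separating chromaticity (x, y) from luminance (Y) means a correction can adjust brightness levels without shifting hue, and vice versa.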

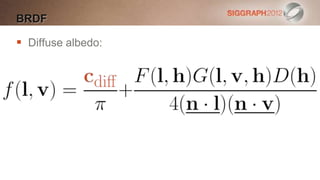

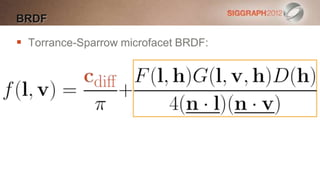

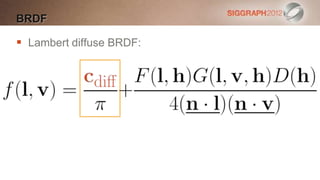

![Microfacet BRDF

Torrance-Sparrow microfacet BRDF: [Lazarov11]](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-42-320.jpg)
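The slide's equation is not reproduced in the text. For reference, the general form of this BRDF as presented in [Lazarov11] and [Hoffman10] is given below, where F is the Fresnel term, D the normal distribution function, G the geometry term, and h the half vector between the light direction l and view direction v:

$$
f(\mathbf{l}, \mathbf{v}) = \frac{F(\mathbf{v}, \mathbf{h})\, D(\mathbf{h})\, G(\mathbf{l}, \mathbf{v}, \mathbf{h})}{4\,(\mathbf{n}\cdot\mathbf{l})\,(\mathbf{n}\cdot\mathbf{v})}
$$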

![Distribution Function

Use normalised Blinn-Phong:

Specular power m in range [1, 8192]

Encoded as glossiness g in [0, 1] where:](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-43-320.jpg)
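The two formulas on this slide are not reproduced in the text. The sketch below uses the usual normalised Blinn-Phong distribution, D(h) = (m + 2) / (2π) · (n·h)^m, and assumes the gloss encoding m = 2^(13g), which is the simplest mapping consistent with the stated range (g = 0 gives m = 1, g = 1 gives m = 8192). Both function names are hypothetical.

```python
import math


def gloss_to_specular_power(g):
    """Assumed encoding m = 2^(13 g): g = 0 -> 1, g = 1 -> 8192."""
    return 2.0 ** (13.0 * g)


def normalised_blinn_phong(n_dot_h, m):
    """Normalised Blinn-Phong distribution for specular power m."""
    return (m + 2.0) / (2.0 * math.pi) * max(n_dot_h, 0.0) ** m


m_rough = gloss_to_specular_power(0.0)
m_smooth = gloss_to_specular_power(1.0)
peak = normalised_blinn_phong(1.0, 2.0)
```

An exponential encoding like this spends the precision of an 8-bit gloss map evenly across perceptually similar steps in highlight size.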

![Fresnel Term

Schlick’s approximation for Fresnel:

Spherical gaussian approximation: [Lagarde12]](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-44-320.jpg)
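The equations on this slide are not reproduced in the text. The sketch below compares Schlick's approximation, F = F0 + (1 − F0)(1 − v·h)^5, with the spherical Gaussian form described in [Lagarde12], which replaces the fifth power with a base-2 exponential. The fitted constants are quoted from that post as best recalled and should be checked against it; the function names are hypothetical.

```python
def fresnel_schlick(f0, v_dot_h):
    """Schlick's approximation to Fresnel reflectance."""
    return f0 + (1.0 - f0) * (1.0 - v_dot_h) ** 5


def fresnel_spherical_gaussian(f0, v_dot_h):
    """Spherical Gaussian fit to Schlick's (1 - v.h)^5 term."""
    exponent = (-5.55473 * v_dot_h - 6.98316) * v_dot_h
    return f0 + (1.0 - f0) * 2.0 ** exponent


# Largest difference between the two over a few sample angles, for F0 = 0.04.
max_error = max(
    abs(fresnel_schlick(0.04, c) - fresnel_spherical_gaussian(0.04, c))
    for c in (0.0, 0.1, 0.25, 0.5, 0.75, 1.0)
)
```

On shader hardware a base-2 exponential is typically cheaper than a fifth power, which is the motivation for the substitution.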

![Visibility Term

Call the remaining terms the visibility term:

Many games have V(l, v, h) = 1, making specular too dark. [Hoffman10] [Lazarov11]](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-45-320.jpg)
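The slide's equation is not reproduced in the text. In the formulation used by [Hoffman10] and [Lazarov11], the visibility term groups the geometry term G with the foreshortening factors in the denominator of the microfacet BRDF:

$$
V(\mathbf{l}, \mathbf{v}, \mathbf{h}) = \frac{G(\mathbf{l}, \mathbf{v}, \mathbf{h})}{(\mathbf{n}\cdot\mathbf{l})\,(\mathbf{n}\cdot\mathbf{v})},
\qquad
f(\mathbf{l}, \mathbf{v}) = \frac{F(\mathbf{v}, \mathbf{h})\, D(\mathbf{h})\, V(\mathbf{l}, \mathbf{v}, \mathbf{h})}{4}
$$

Since both dot products are at most 1, the factor 1 / ((n·l)(n·v)) is at least 1, so setting V = 1 discards a term that would otherwise brighten the highlight, most noticeably at grazing angles.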

![Visibility Term

Schlick-Smith:

Roughness dependent.

Calculate a from Beckmann roughness k or Blinn-Phong specular power m: [Lazarov11]](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-46-320.jpg)
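The formulas on this slide are not reproduced in the text. The sketch below follows the Schlick-Smith form as commonly quoted from [Lazarov11]: V = 1 / (((n·l)(1 − a) + a)((n·v)(1 − a) + a)), with a = k·sqrt(2/π) from Beckmann roughness k, or equivalently a = 1 / sqrt(πm/4 + π/2) from Blinn-Phong specular power m. The function names are hypothetical, and the formulas should be checked against the cited course notes.

```python
import math


def a_from_beckmann_roughness(k):
    """Schlick-Smith parameter a from Beckmann roughness k."""
    return k * math.sqrt(2.0 / math.pi)


def a_from_specular_power(m):
    """Schlick-Smith parameter a from Blinn-Phong specular power m."""
    return 1.0 / math.sqrt(math.pi / 4.0 * m + math.pi / 2.0)


def schlick_smith_visibility(n_dot_l, n_dot_v, a):
    """Visibility term, including the 1 / ((n.l)(n.v)) factor."""
    return 1.0 / ((n_dot_l * (1.0 - a) + a) * (n_dot_v * (1.0 - a) + a))


a = a_from_specular_power(100.0)
head_on = schlick_smith_visibility(1.0, 1.0, a)
grazing = schlick_smith_visibility(0.2, 0.2, a)
```

The two conversions agree when the Beckmann roughness is taken as k = sqrt(2 / (m + 2)), the usual equivalence between the two distributions.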

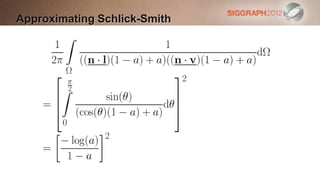

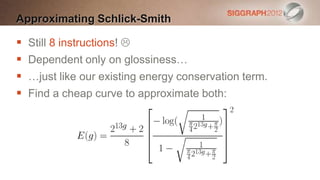

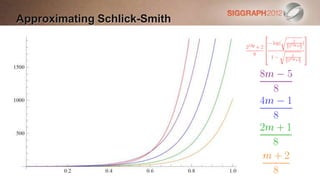

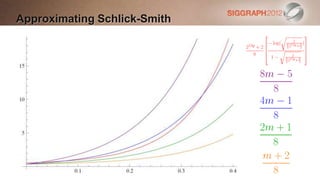

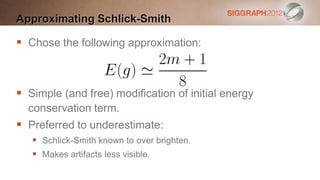

![Approximating Schlick-Smith

Cost of Schlick-Smith is 8 instructions per light. [Lazarov11]

Too expensive for our budget:

Especially when applied to every light.

Approximate by integrating Schlick-Smith over the hemisphere.](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-47-320.jpg)
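The slides do not spell out how the integration was done, so the following is only one possible reading. It assumes the Schlick-Smith form V = 1 / (((n·l)(1 − a) + a)((n·v)(1 − a) + a)) with a = 1 / sqrt(πm/4 + π/2), and averages each factor uniformly over the cosine μ in [0, 1], which corresponds to uniform sampling of the hemisphere. Under that assumption the average has the closed form −ln(a) / (1 − a). All function names are hypothetical.

```python
import math


def a_from_specular_power(m):
    """Schlick-Smith parameter a from Blinn-Phong specular power m."""
    return 1.0 / math.sqrt(math.pi / 4.0 * m + math.pi / 2.0)


def mean_factor_numeric(a, steps=10000):
    """Midpoint-rule average of 1 / (mu * (1 - a) + a) for mu in [0, 1]."""
    total = 0.0
    for i in range(steps):
        mu = (i + 0.5) / steps
        total += 1.0 / (mu * (1.0 - a) + a)
    return total / steps


def mean_factor_closed_form(a):
    """Closed form of the same average; valid for 0 < a < 1."""
    return -math.log(a) / (1.0 - a)


def averaged_visibility(m):
    """Average of V over both light and view directions (V is separable)."""
    factor = mean_factor_closed_form(a_from_specular_power(m))
    return factor * factor


a = a_from_specular_power(100.0)
numeric = mean_factor_numeric(a)
closed = mean_factor_closed_form(a)
```

Under this reading the averaged value depends only on the specular power, so it could be evaluated once per material instead of once per light.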

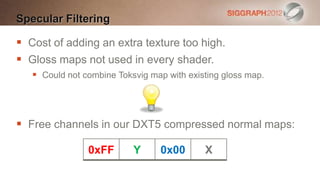

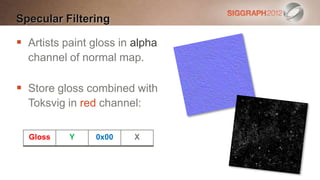

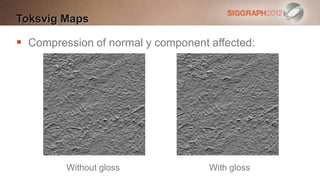

![Specular Filtering

Use Toksvig’s formula to scale the specular power, using the length of the filtered normal: [Hill11]

Store these scales in a texture.

Ideally, use them to adjust the gloss map.](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-55-320.jpg)
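The slide's formula is not reproduced in the text. As a sketch, Toksvig's factor as described in [Hill11] is f = |N| / (|N| + m(1 − |N|)), where |N| is the length of the filtered (averaged, unnormalised) normal and m is the specular power; the filtered power is then f·m. The function names below are hypothetical.

```python
def toksvig_scale(normal_length, m):
    """Toksvig factor for a filtered normal of the given length."""
    return normal_length / (normal_length + m * (1.0 - normal_length))


def filtered_specular_power(normal_length, m):
    """Specular power after applying the Toksvig scale."""
    return toksvig_scale(normal_length, m) * m


flat = filtered_specular_power(1.0, 100.0)   # normals agree: power unchanged
bumpy = filtered_specular_power(0.9, 100.0)  # normals diverge: power drops
```

A shorter filtered normal means the underlying normals disagree more within the texel, so the highlight is widened to account for detail the lower mip level can no longer represent.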

![References

[Darula02] Stanislav Darula and Richard Kittler, CIE General Sky Standard Defining Luminance Distributions, eSim 2002, http://mathinfo.univ-reims.fr/IMG/pdf/other2.pdf

[Hill11] Stephen Hill, Specular Showdown in the Wild West, http://blog.selfshadow.com/2011/07/22/specular-showdown/

[Hoffman10] Naty Hoffman, Crafting Physically Motivated Shading Models for Game Development, SIGGRAPH 2010, http://renderwonk.com/publications/s2010-shading-course/

[Lagarde12] Sébastien Lagarde, Spherical Gaussian Approximation for Blinn-Phong, Phong and Fresnel, 2012, http://seblagarde.wordpress.com/2012/06/03/spherical-gaussien-approximation-for-blinn-phong-phong-and-fresnel/

[Lazarov11] Dimitar Lazarov, Physically Based Lighting in Call of Duty: Black Ops, SIGGRAPH 2011, http://advances.realtimerendering.com/s2011/index.html

[Malin11] Paul Malin, personal communication](https://image.slidesharecdn.com/s2012pbsfarcry3slidesv2-120909084220-phpapp02/85/Calibrating-Lighting-and-Materials-in-Far-Cry-3-64-320.jpg)