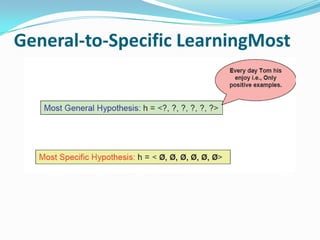

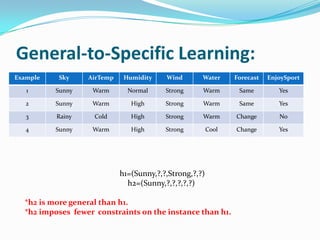

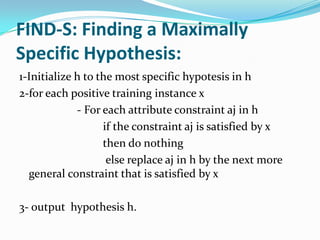

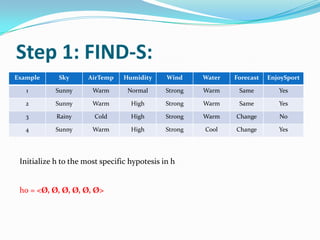

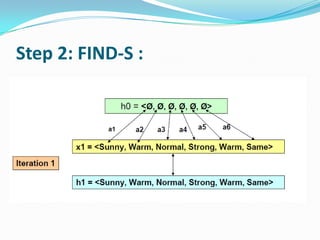

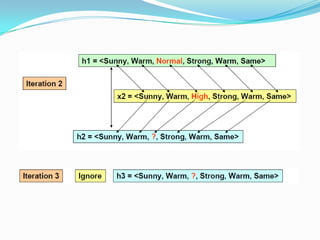

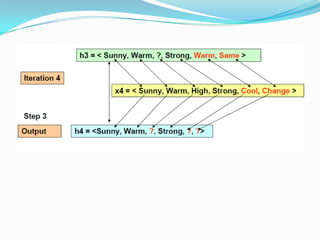

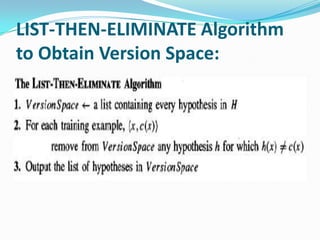

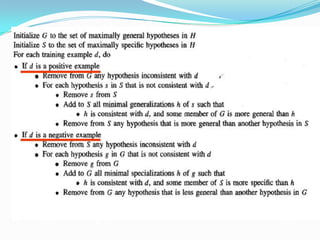

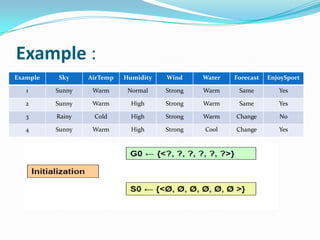

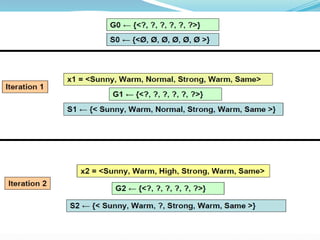

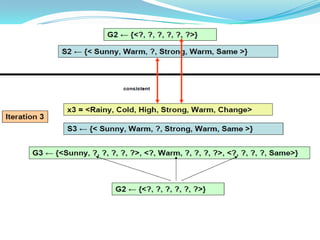

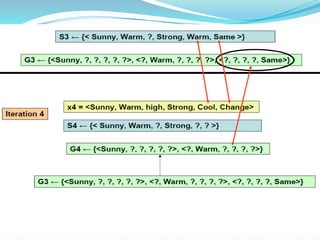

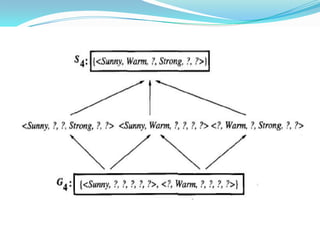

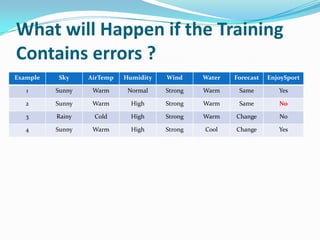

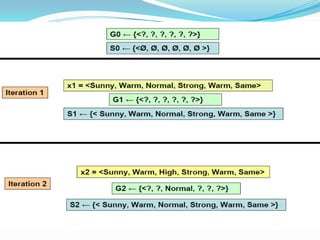

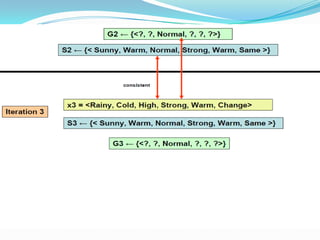

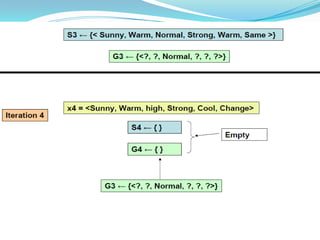

The document discusses approaches to learning concepts from examples. It frames concept learning as a search through a space of hypotheses for the hypothesis that best fits the training examples, where the hypotheses are organized by a general-to-specific ordering. Using this ordering, the goal is to find the maximally specific hypothesis consistent with the positive training examples. The search starts with the most specific hypothesis, and whenever a positive example is not covered, it replaces each violated attribute constraint with the next more general constraint that the example satisfies. The document then introduces the version space, the set of all hypotheses consistent with the training data, and the candidate elimination algorithm, which computes the version space by maintaining its most specific (S) and most general (G) boundaries.
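The ideas above can be sketched in Python for conjunctive attribute-value hypotheses. This is a minimal sketch under stated assumptions. The hypothesis representation (`'?'` for "any value", `None` for the empty constraint), the helper names (`covers`, `find_s`, `list_then_eliminate`, `boundaries`), and the toy EnjoySport-style dataset are illustrative choices, not taken from the document. To keep the sketch short, it computes the version space by brute-force enumeration (List-Then-Eliminate) rather than by candidate elimination. It then reads off the S and G boundaries, which candidate elimination would instead maintain incrementally.

```python
from itertools import product

# Assumed representation: a hypothesis is a tuple of constraints, one per
# attribute: "?" (any value), a specific value, or None (matches nothing).
ANY = "?"

def covers(h, x):
    """True if hypothesis h classifies instance x as positive."""
    return all(c == ANY or c == v for c, v in zip(h, x))

def more_general_or_equal(h1, h2):
    """True if every instance covered by h2 is also covered by h1."""
    if None in h2:
        return True  # h2 covers no instance at all
    return all(a == ANY or a == b for a, b in zip(h1, h2))

def find_s(examples, n_attrs):
    """Start from the most specific hypothesis and minimally generalize it."""
    h = [None] * n_attrs  # <None, ..., None>: covers nothing
    for x, label in examples:
        if not label:
            continue  # negative examples are ignored by this search
        for i, v in enumerate(x):
            if h[i] is None:
                h[i] = v    # empty constraint -> the observed value
            elif h[i] != ANY and h[i] != v:
                h[i] = ANY  # conflicting values -> "any value"
    return tuple(h)

def list_then_eliminate(domains, examples):
    """Brute-force version space: keep every hypothesis consistent with the data.

    Hypotheses containing None are not enumerated; they cover nothing, so
    they are inconsistent whenever there is at least one positive example.
    """
    space = product(*[list(d) + [ANY] for d in domains])
    return [h for h in space
            if all(covers(h, x) == label for x, label in examples)]

def boundaries(vs):
    """Return (S, G): the maximally specific and maximally general members."""
    def strictly_more_general(a, b):
        return a != b and more_general_or_equal(a, b)
    S = [h for h in vs if not any(strictly_more_general(h, o) for o in vs)]
    G = [h for h in vs if not any(strictly_more_general(o, h) for o in vs)]
    return S, G

# Hypothetical toy data in the style of the classic EnjoySport example.
DOMAINS = [
    ("Sunny", "Cloudy", "Rainy"),  # Sky
    ("Warm", "Cold"),              # AirTemp
    ("Normal", "High"),            # Humidity
    ("Strong", "Weak"),            # Wind
    ("Warm", "Cool"),              # Water
    ("Same", "Change"),            # Forecast
]
EXAMPLES = [
    (("Sunny", "Warm", "Normal", "Strong", "Warm", "Same"), True),
    (("Sunny", "Warm", "High", "Strong", "Warm", "Same"), True),
    (("Rainy", "Cold", "High", "Strong", "Warm", "Change"), False),
    (("Sunny", "Warm", "High", "Strong", "Cool", "Change"), True),
]

if __name__ == "__main__":
    print(find_s(EXAMPLES, len(DOMAINS)))
    vs = list_then_eliminate(DOMAINS, EXAMPLES)
    S, G = boundaries(vs)
    print(len(vs), S, G)
```

On this toy data the maximally specific hypothesis is `('Sunny', 'Warm', '?', 'Strong', '?', '?')`, which is also the single member of S. G contains `('Sunny', '?', '?', '?', '?', '?')` and `('?', 'Warm', '?', '?', '?', '?')`, and the version space holds six hypotheses in total. Enumeration is only practical for tiny attribute spaces, which is the motivation for representing the version space by its boundaries instead.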