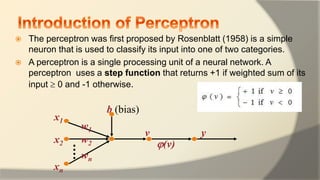

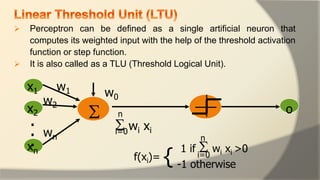

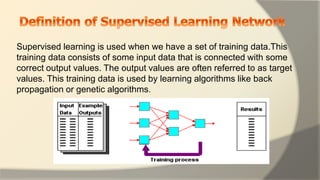

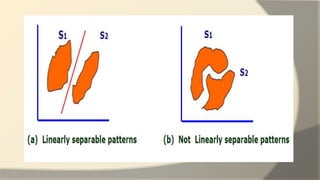

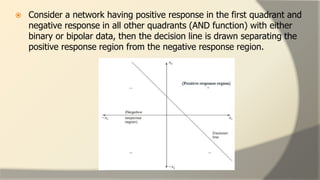

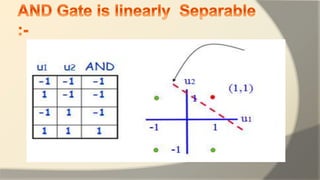

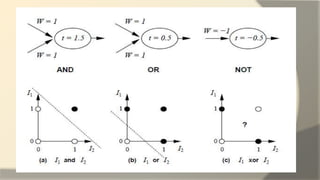

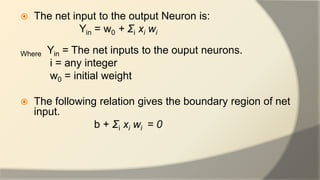

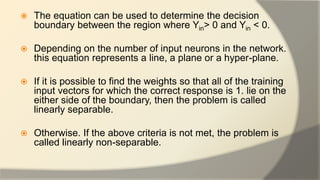

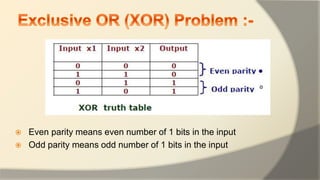

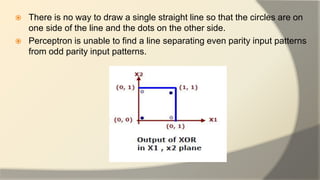

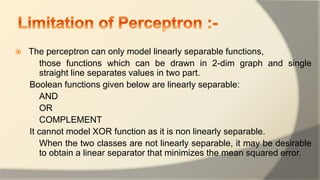

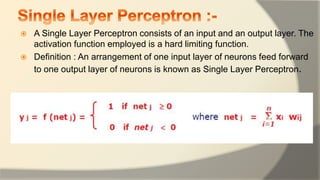

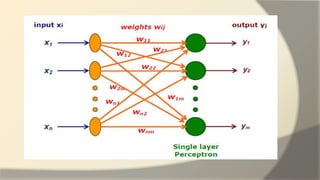

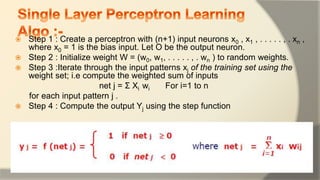

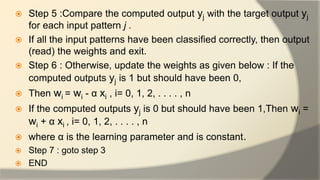

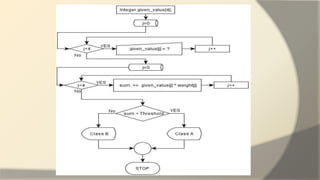

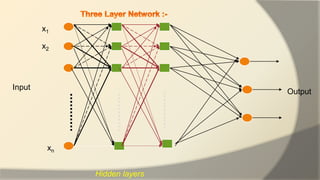

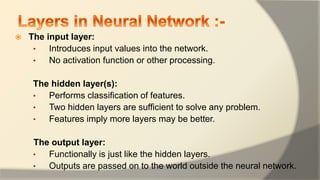

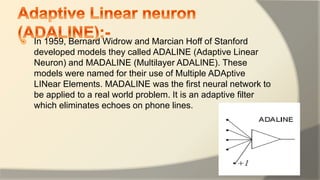

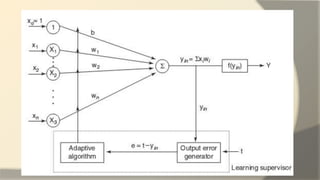

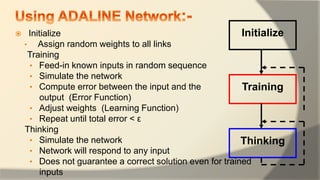

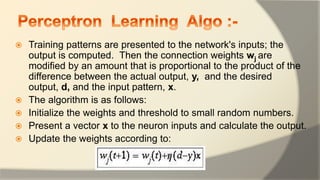

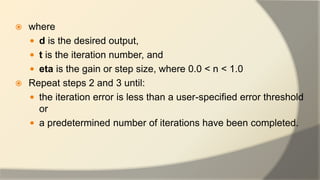

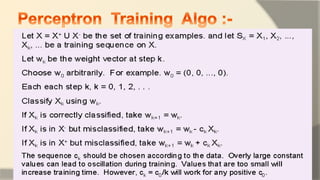

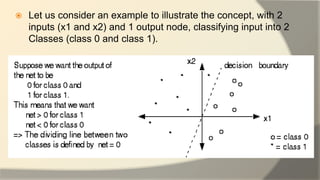

The document discusses the perceptron, a basic artificial neuron model proposed by Rosenblatt in 1958 that classifies inputs into two categories by applying a step function to a weighted sum of the inputs. It highlights the perceptron's main limitation, its inability to represent functions that are not linearly separable, and outlines the supervised learning techniques used to train it. It also details the structure and training process of multilayer perceptrons, emphasizing their versatility in approximating complex functions in machine learning.
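
The single-perceptron ideas summarized above can be illustrated with a minimal sketch. It assumes the classic error-driven update rule (weights move by learning rate × error × input) and a step function that fires when the weighted sum plus bias is strictly positive; the slides may use a different threshold convention or training variant. The names `predict`, `train_perceptron`, `learning_rate`, and `max_epochs` are hypothetical, chosen only for this illustration.

```python
def predict(weights, bias, x):
    """Step activation: return 1 if the weighted sum plus bias is positive, else 0."""
    weighted_sum = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if weighted_sum > 0 else 0


def train_perceptron(samples, labels, learning_rate=1.0, max_epochs=100):
    """Supervised training with the error-driven perceptron update (assumed rule)."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                # Nudge the decision boundary toward the misclassified sample.
                weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:  # every sample classified correctly: stop early
            break
    return weights, bias


inputs = [[0, 0], [0, 1], [1, 0], [1, 1]]
and_labels = [0, 0, 0, 1]  # linearly separable
xor_labels = [0, 1, 1, 0]  # not linearly separable

w_and, b_and = train_perceptron(inputs, and_labels)
and_predictions = [predict(w_and, b_and, x) for x in inputs]

w_xor, b_xor = train_perceptron(inputs, xor_labels)
xor_predictions = [predict(w_xor, b_xor, x) for x in inputs]

print("AND:", and_predictions)
print("XOR:", xor_predictions)
```

On AND the loop stops once every sample is classified correctly. On XOR no single line separates the two classes, so the predictions never match all four labels regardless of `max_epochs`. This is the limitation that motivates adding hidden layers in a multilayer perceptron.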