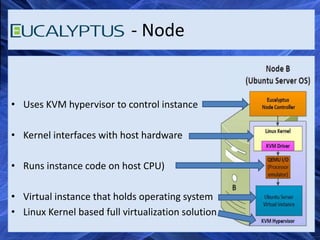

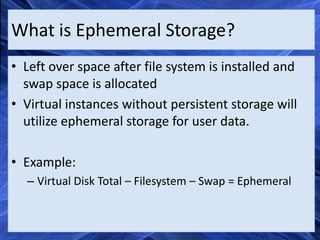

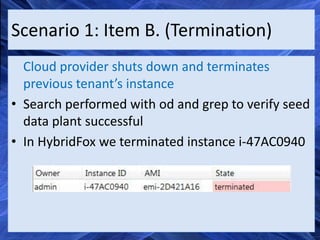

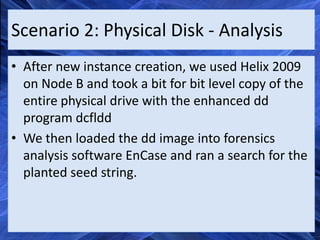

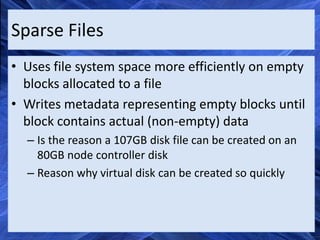

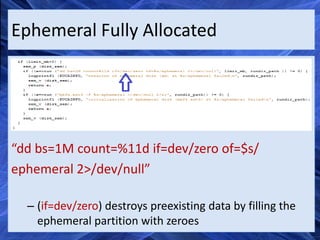

This document summarizes a project on cloud forensics. It reviews the cloud service models SaaS, PaaS, and IaaS, then describes a private Eucalyptus cloud built to test two things: live forensics via virtual introspection, and recovery of ephemeral data left behind by previous cloud tenants. The tests recovered data from the physical disk, but not from inside a new virtual instance, due to the use of sparse files. The document concludes that, in Eucalyptus clouds, ephemeral data is not accessible to new tenants because of sparse files and zero-filling.
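
The sparse-file behavior behind that conclusion can be illustrated with a minimal Python sketch. It is not Eucalyptus code and does not reproduce the project's test setup. It only shows the general filesystem behavior, assuming a POSIX-like system: a file extended without writing data reads back as zeros, so a reader of the new file never sees whatever was previously stored on the underlying disk blocks. The helper names `make_sparse_file` and `is_all_zero`, the file name `disk.img`, and the 4 MiB size are hypothetical, chosen for illustration.

```python
import os
import tempfile


def make_sparse_file(path, size):
    """Create a file of `size` bytes without writing any data.

    On most filesystems the extended region is a "hole": no disk blocks
    are allocated, and reads from it return zero bytes.
    """
    with open(path, "wb") as f:
        f.truncate(size)


def is_all_zero(path, chunk_size=1 << 20):
    """Return True if every byte in the file is zero."""
    with open(path, "rb") as f:
        while True:
            block = f.read(chunk_size)
            if not block:
                return True
            # bytes.count(0) counts zero-valued bytes in the block.
            if block.count(0) != len(block):
                return False


if __name__ == "__main__":
    workdir = tempfile.mkdtemp()
    image = os.path.join(workdir, "disk.img")  # hypothetical image name
    make_sparse_file(image, 4 * 1024 * 1024)

    print("apparent size:", os.path.getsize(image))
    # st_blocks is not available on every platform, so read it defensively.
    blocks = getattr(os.stat(image), "st_blocks", None)
    if blocks is not None:
        print("allocated bytes:", blocks * 512)
    print("reads back as zeros:", is_all_zero(image))
```

Comparing the apparent size with the allocated bytes shows whether the filesystem actually stored the file sparsely. The zero read-back is the property that matters for the forensic question: stale data may still exist on the physical disk, but it is not reachable through the new file.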