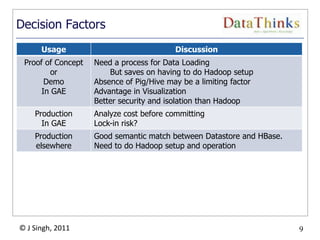

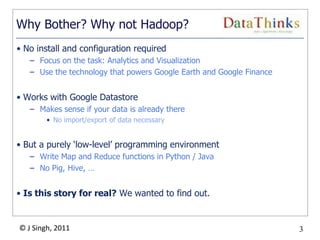

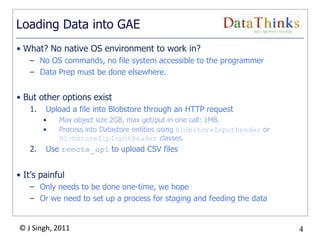

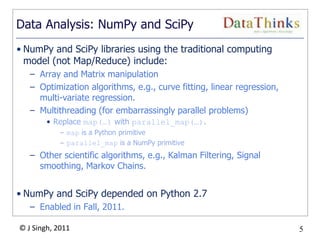

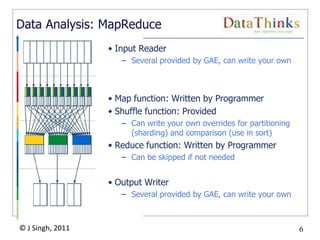

The document evaluates Google App Engine (GAE) as a potential platform for big data analytics, contrasting it with Hadoop. It covers ease of use (no installation or configuration required), data loading, analysis with libraries such as NumPy and SciPy, and several visualization options. It concludes that GAE offers a rich set of features, with limitations such as the lack of Pig and Hive environments.

![7

© J Singh, 2011 7

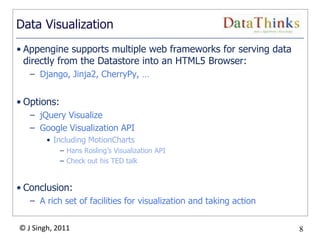

Data Analysis: Pipeline API

• Based on Python Generator functions

• Allows chaining of map reduce jobs

– Primitives for setting up various types of chains

• MapreducePipeline (prev page) was just one type of pipeline

• Available for Python or Java

– Python side better documented

Split and Merge example

class aPipe(pipeline.Pipeline):

def run(self, e_kind, prop_name, *value_list):

all_bs = []

for v in value_list:

stage = yield bPipe(e_kind, prop_name, v)

all_bs.append(stage)

yield common.Append(*all_bs)](https://image.slidesharecdn.com/bigdatalaboratory-120317091029-phpapp01/85/Big-Data-Laboratory-7-320.jpg)
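
The generator-based chaining in the Split and Merge example can be tried without App Engine. The sketch below is a toy stand-in, not the Pipeline API: `Stage`, `execute`, `Square`, `Append`, and `SplitAndMerge` are hypothetical names introduced only for illustration. It also runs each child synchronously and sends the child's actual result back into the generator, whereas the real library hands back a placeholder for a result that is computed later.

```python
import inspect


class Stage:
    """Hypothetical base class. run() either returns a value directly
    or is a generator that yields child stages."""

    def __init__(self, *args):
        self.args = args

    def run(self, *args):
        raise NotImplementedError


def execute(stage):
    """Run a stage to completion and return its result.

    For a generator-style run(), every yielded child is executed and its
    result is sent back into the generator. The result of the last child
    yielded becomes the result of the parent stage.
    """
    outcome = stage.run(*stage.args)
    if not inspect.isgenerator(outcome):
        return outcome  # leaf stage: the return value is the result
    result = None
    try:
        child = next(outcome)
        while True:
            result = execute(child)
            child = outcome.send(result)
    except StopIteration:
        return result


class Square(Stage):
    """Leaf stage, standing in for bPipe on the slide."""

    def run(self, v):
        return v * v


class Append(Stage):
    """Merge stage, standing in for common.Append."""

    def run(self, *items):
        return list(items)


class SplitAndMerge(Stage):
    """Parent stage, standing in for aPipe: one child per value, then merge."""

    def run(self, *values):
        parts = []
        for v in values:
            part = yield Square(v)
            parts.append(part)
        yield Append(*parts)


merged = execute(SplitAndMerge(1, 2, 3))
print(merged)
```

The parent never calls its children directly. It only yields them, and the runner decides how and when they execute, which is what lets a framework schedule the children as separate tasks.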