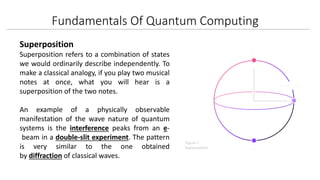

Quantum computing is a field that uses principles of quantum mechanics, particularly superposition and entanglement, to solve certain classes of problems faster than classical computers can. Candidate applications include machine learning, materials simulation, financial optimization, and cryptography, though challenges remain in scalability, decoherence, and algorithm development. Despite these limitations, quantum computers could significantly change how such problems are approached.
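
To make superposition and entanglement concrete, here is a minimal sketch that simulates two qubits on a classical machine using only the Python standard library. It is an illustration under stated assumptions, not how a real quantum device or any particular quantum library works: the function names (`apply_hadamard_q0`, `apply_cnot_q0_q1`, `probabilities`, `bell_state`) are hypothetical, and the state is stored as a plain list of four amplitudes for the basis states 00, 01, 10, 11.

```python
import math

# Illustrative classical simulation of a 2-qubit state vector.
# Amplitudes are ordered as |00>, |01>, |10>, |11> (first qubit is the left bit).
# All function names here are hypothetical helpers, not a library API.


def apply_hadamard_q0(state):
    """Apply a Hadamard gate to the first qubit, creating a superposition."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def apply_cnot_q0_q1(state):
    """Flip the second qubit when the first is 1 (swaps the |10> and |11> amplitudes)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Measurement probability of each basis state (squared magnitude of its amplitude)."""
    return [abs(a) ** 2 for a in state]


def bell_state():
    """Prepare (|00> + |11>) / sqrt(2) starting from |00>."""
    state = [1.0, 0.0, 0.0, 0.0]
    state = apply_hadamard_q0(state)  # superposition: (|00> + |10>) / sqrt(2)
    state = apply_cnot_q0_q1(state)   # entanglement:  (|00> + |11>) / sqrt(2)
    return state


if __name__ == "__main__":
    for label, p in zip(["00", "01", "10", "11"], probabilities(bell_state())):
        print(label, round(p, 3))
```

In the resulting state, only the outcomes 00 and 11 have nonzero probability, so measuring one qubit determines the other. Note that this simulation stores 2^n amplitudes for n qubits, which is one reason classical simulation of large quantum systems becomes impractical.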