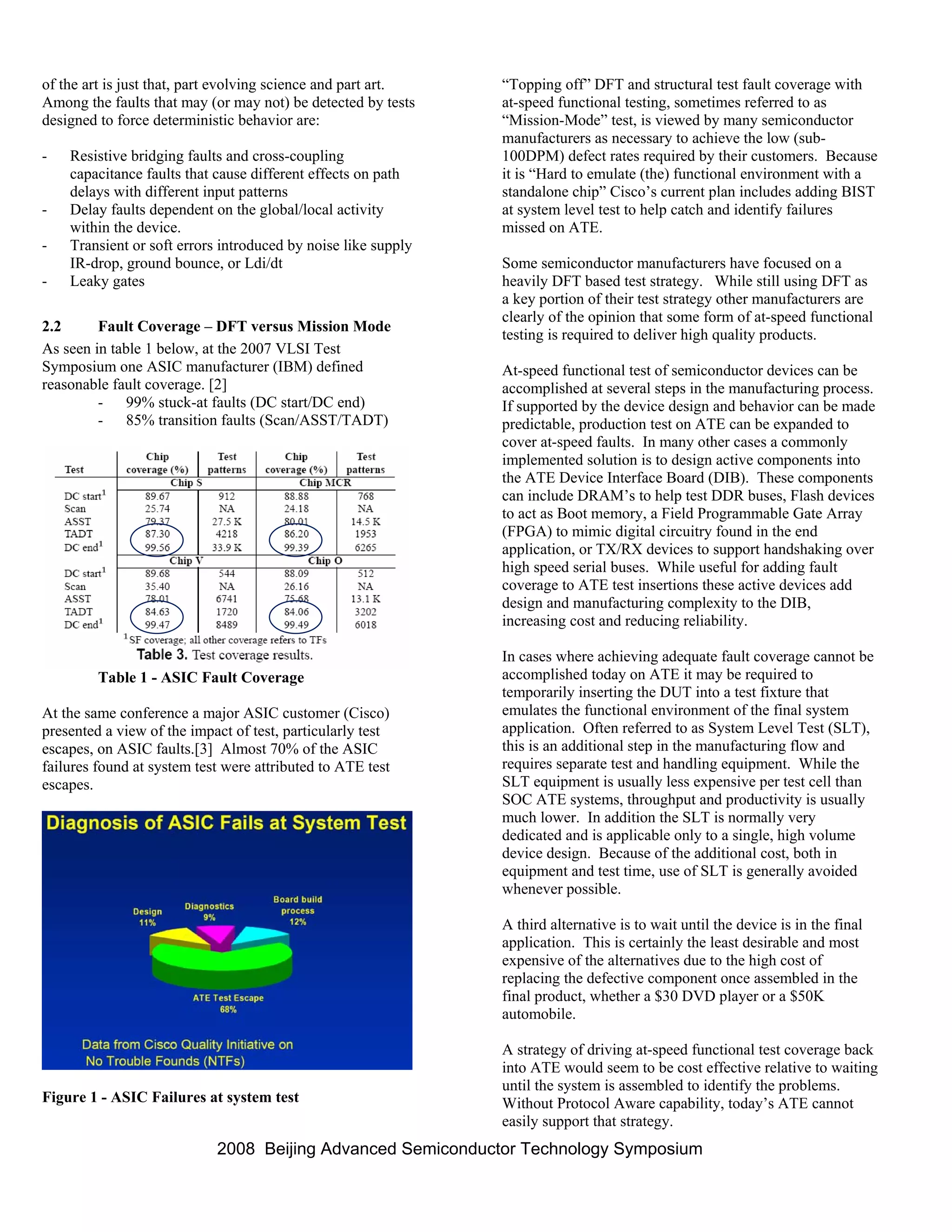

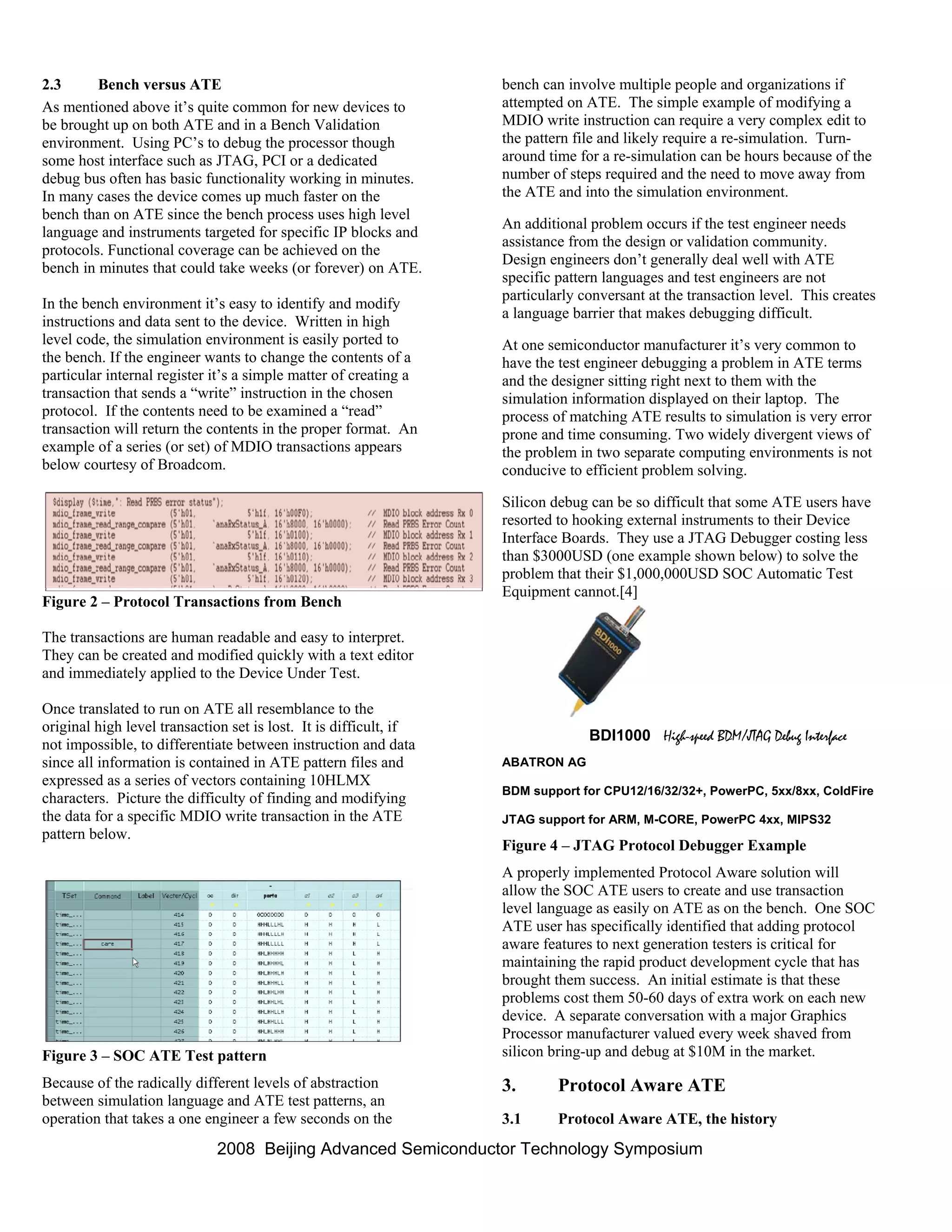

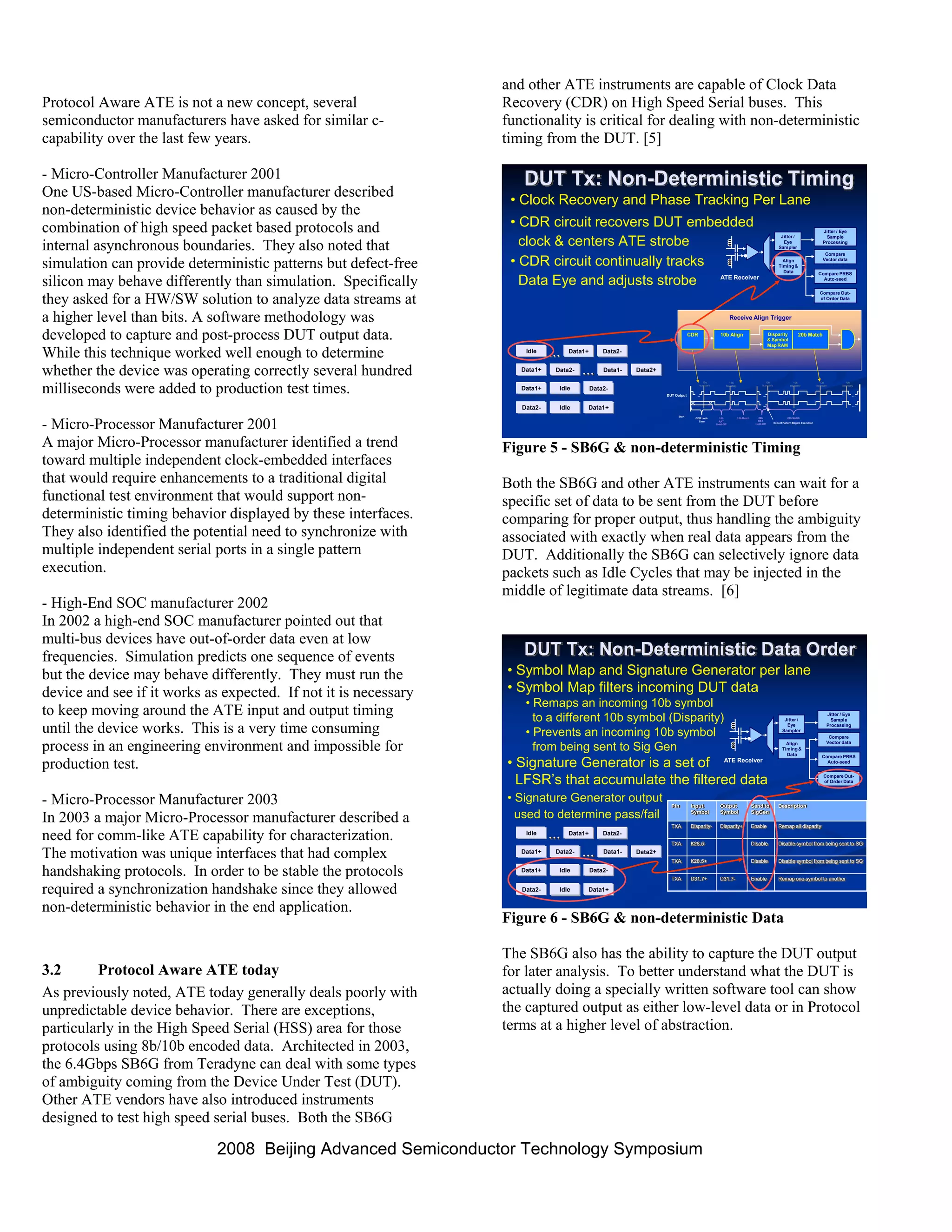

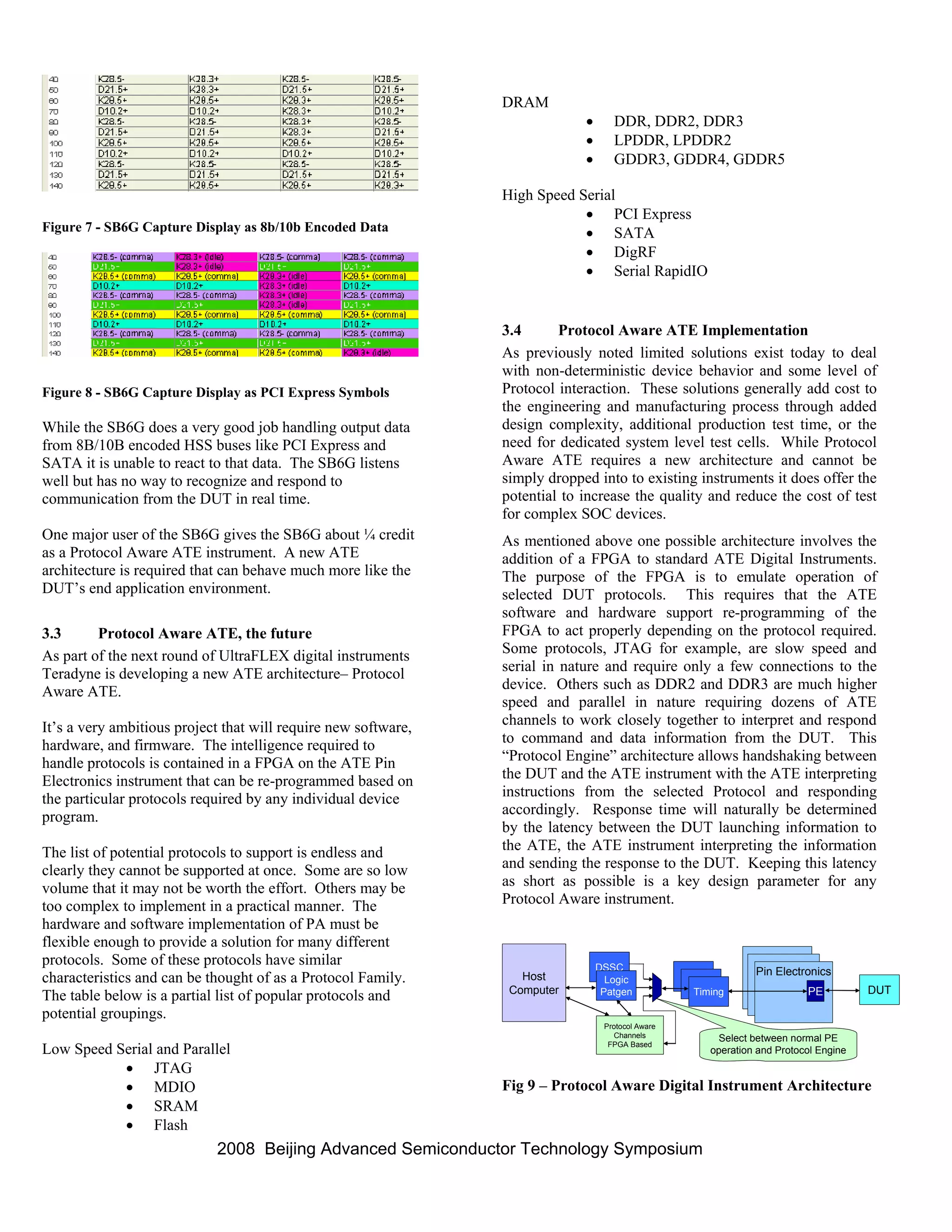

1. Modern semiconductor devices often behave non-deterministically when tested on automatic test equipment (ATE), because they use asynchronous IP blocks and industry-standard protocols.
2. Current ATE architectures assume deterministic behavior and have difficulty handling variations in timing and output order, resulting in long test times and inadequate fault coverage.
3. A proposed solution is a protocol-aware ATE that can natively emulate real-time chip I/O at the protocol level, enabling more complete functional testing similar to "mission mode" operation.
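
The difference between the two compare styles summarized above can be illustrated with a small sketch. It is not taken from the presentation: the function names, the `IDLE` marker, and the response labels are all hypothetical, and real ATE hardware works on pin-level waveforms rather than Python lists. The sketch only shows why a stored-vector compare fails when a device answers late or out of order, while a transaction-level compare still passes.

```python
from collections import Counter

def cycle_exact_match(expected_cycles, observed_cycles):
    """Deterministic compare: every cycle must equal the stored vector."""
    return expected_cycles == observed_cycles

def protocol_aware_match(expected_txns, observed_cycles, idle="IDLE"):
    """Transaction-level compare (hypothetical): skip idle cycles and
    accept the expected responses in any arrival order."""
    received = [word for word in observed_cycles if word != idle]
    return Counter(received) == Counter(expected_txns)

# Stored pattern assumes responses A then B on the first two cycles.
expected_cycles = ["RESP_A", "RESP_B", "IDLE", "IDLE"]

# The device under test answers correctly, but later and in a different order.
observed_cycles = ["IDLE", "RESP_B", "IDLE", "RESP_A"]

print(cycle_exact_match(expected_cycles, observed_cycles))
print(protocol_aware_match(["RESP_A", "RESP_B"], observed_cycles))
```

Under these assumptions the cycle-exact compare rejects a functionally correct device, whereas the transaction-level compare accepts it and would still reject a missing or wrong response.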