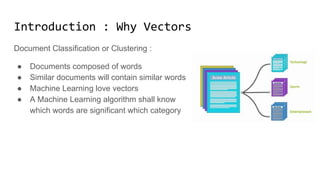

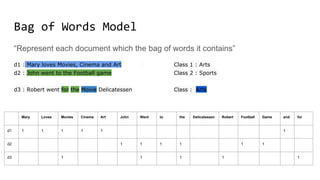

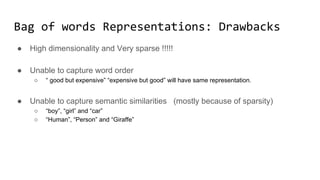

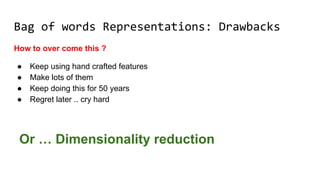

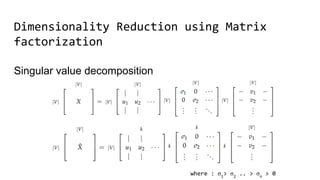

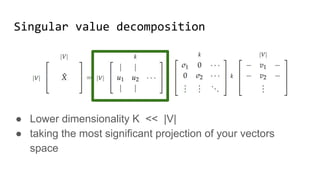

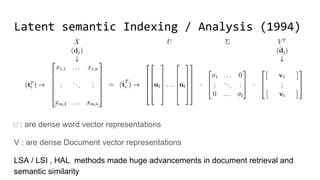

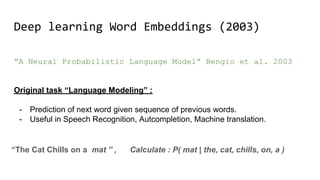

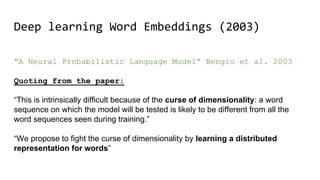

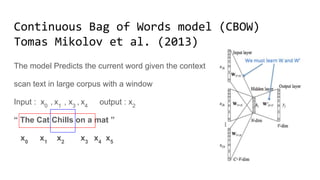

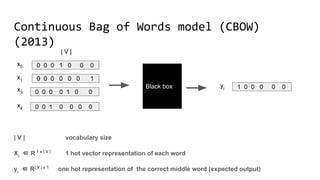

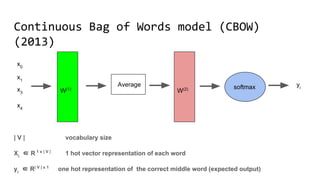

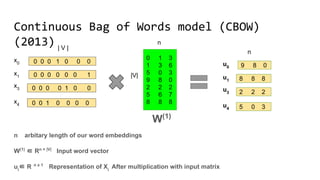

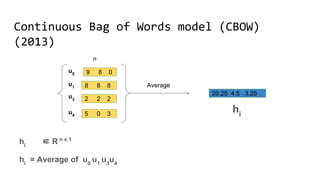

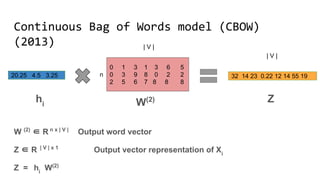

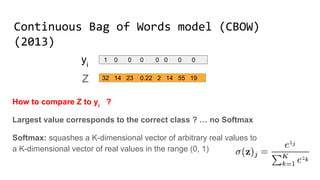

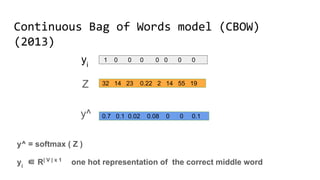

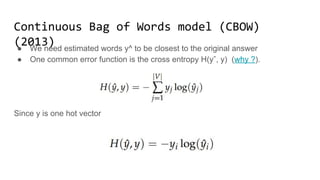

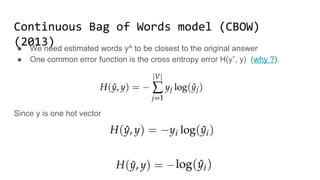

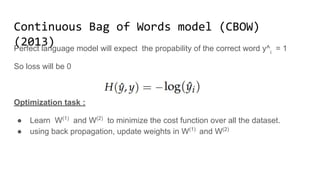

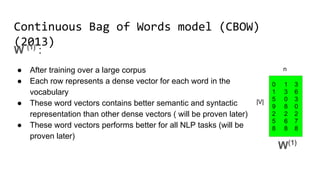

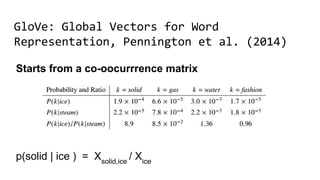

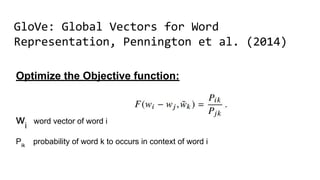

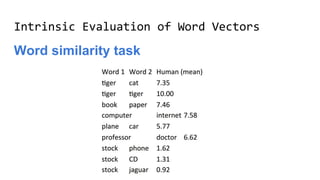

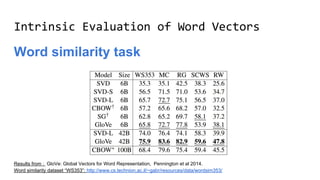

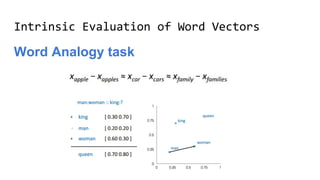

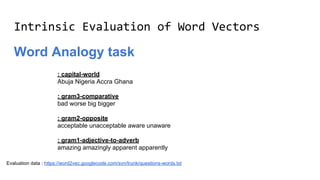

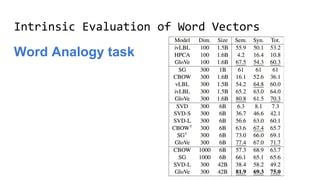

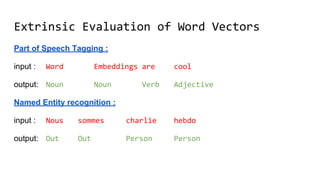

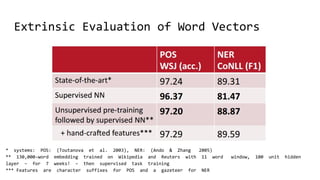

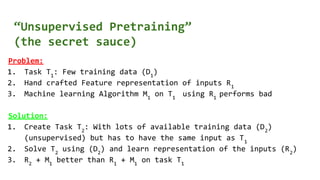

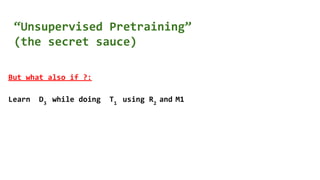

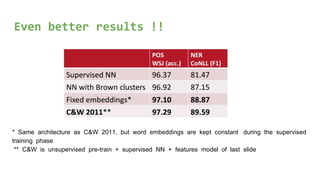

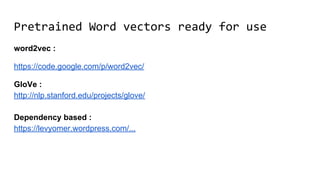

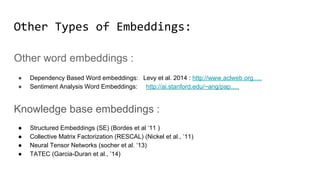

The document discusses the importance of word embeddings in natural language processing, covering models such as Continuous Bag of Words (CBOW) and GloVe that represent each word as a dense vector in a continuous vector space. It addresses the shortcomings of conventional word representations, which are high-dimensional and sparse. As remedies, it introduces dimensionality reduction, and it presents evaluation metrics for assessing the quality of word vectors. The document also points to further studies and resources for learning about deep learning and NLP.
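To make the GloVe model mentioned above more concrete, here is a minimal plain-Python sketch of its weighted least-squares objective. It is not taken from the slides. The sketch counts symmetric co-occurrences within a fixed window; this is a simplification, since the GloVe paper also weights each pair by 1/distance. It then evaluates the loss using the paper's default weighting parameters, x_max = 100 and α = 3/4. The function names (`cooccurrence_counts`, `glove_weight`, `glove_loss`) and the toy corpus are hypothetical and chosen for illustration only. Training, which GloVe performs with AdaGrad, is omitted.

```python
import math
import random
from collections import defaultdict


def cooccurrence_counts(sentences, window=2):
    """Count how often each (word, context) pair appears within `window` tokens.

    Simplification (assumption): plain symmetric counts; the GloVe paper
    additionally down-weights a pair d tokens apart by 1/d.
    """
    counts = defaultdict(float)
    for tokens in sentences:
        for i, w in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[(w, tokens[j])] += 1.0
    return dict(counts)


def glove_weight(x, x_max=100.0, alpha=0.75):
    """GloVe weighting f(x): damps rare pairs and caps frequent ones at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0


def glove_loss(counts, w, w_ctx, b, b_ctx):
    """Weighted least-squares objective over observed (nonzero) pairs:
    J = sum_ij f(X_ij) * (w_i . w~_j + b_i + b~_j - log X_ij)^2
    """
    total = 0.0
    for (i, j), x in counts.items():
        dot = sum(a * c for a, c in zip(w[i], w_ctx[j]))
        diff = dot + b[i] + b_ctx[j] - math.log(x)
        total += glove_weight(x) * diff * diff
    return total


if __name__ == "__main__":
    # Hypothetical toy corpus, for illustration only.
    corpus = [["the", "cat", "sat", "on", "the", "mat"],
              ["the", "dog", "sat", "on", "the", "rug"]]
    X = cooccurrence_counts(corpus, window=2)
    vocab = sorted({t for s in corpus for t in s})

    # Randomly initialised word and context vectors with zero biases.
    rng = random.Random(0)
    dim = 4
    w = {t: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for t in vocab}
    w_ctx = {t: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for t in vocab}
    b = {t: 0.0 for t in vocab}
    b_ctx = {t: 0.0 for t in vocab}

    # Few of the |V|^2 possible pairs are observed, which is the sparsity problem.
    print(f"{len(X)} nonzero pairs out of {len(vocab) ** 2} possible")
    print("initial loss:", glove_loss(X, w, w_ctx, b, b_ctx))
```

The loss sums only over observed pairs because log X_ij is undefined when X_ij = 0. The weighting function f also keeps very frequent pairs, such as those involving "the", from dominating the objective.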