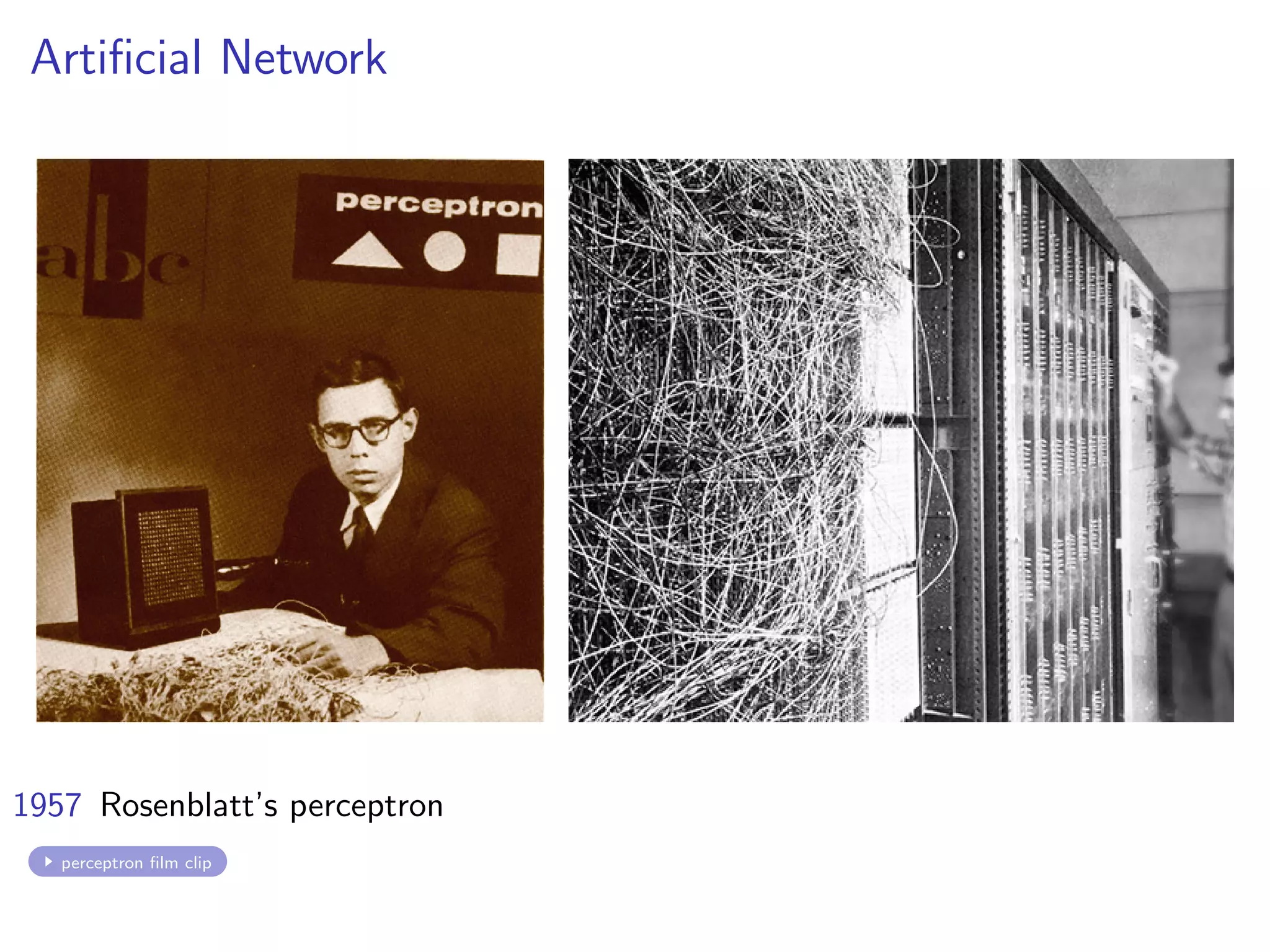

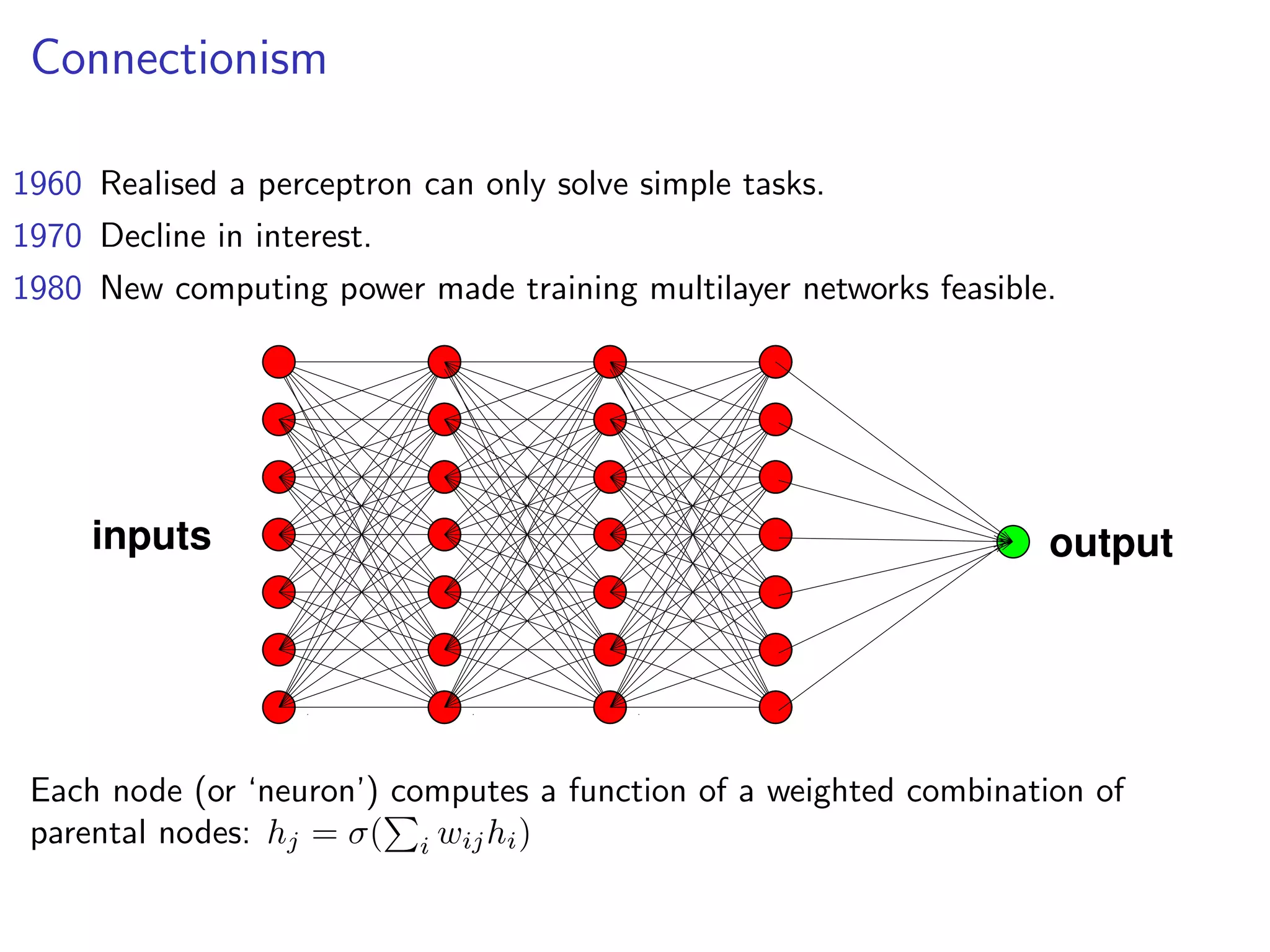

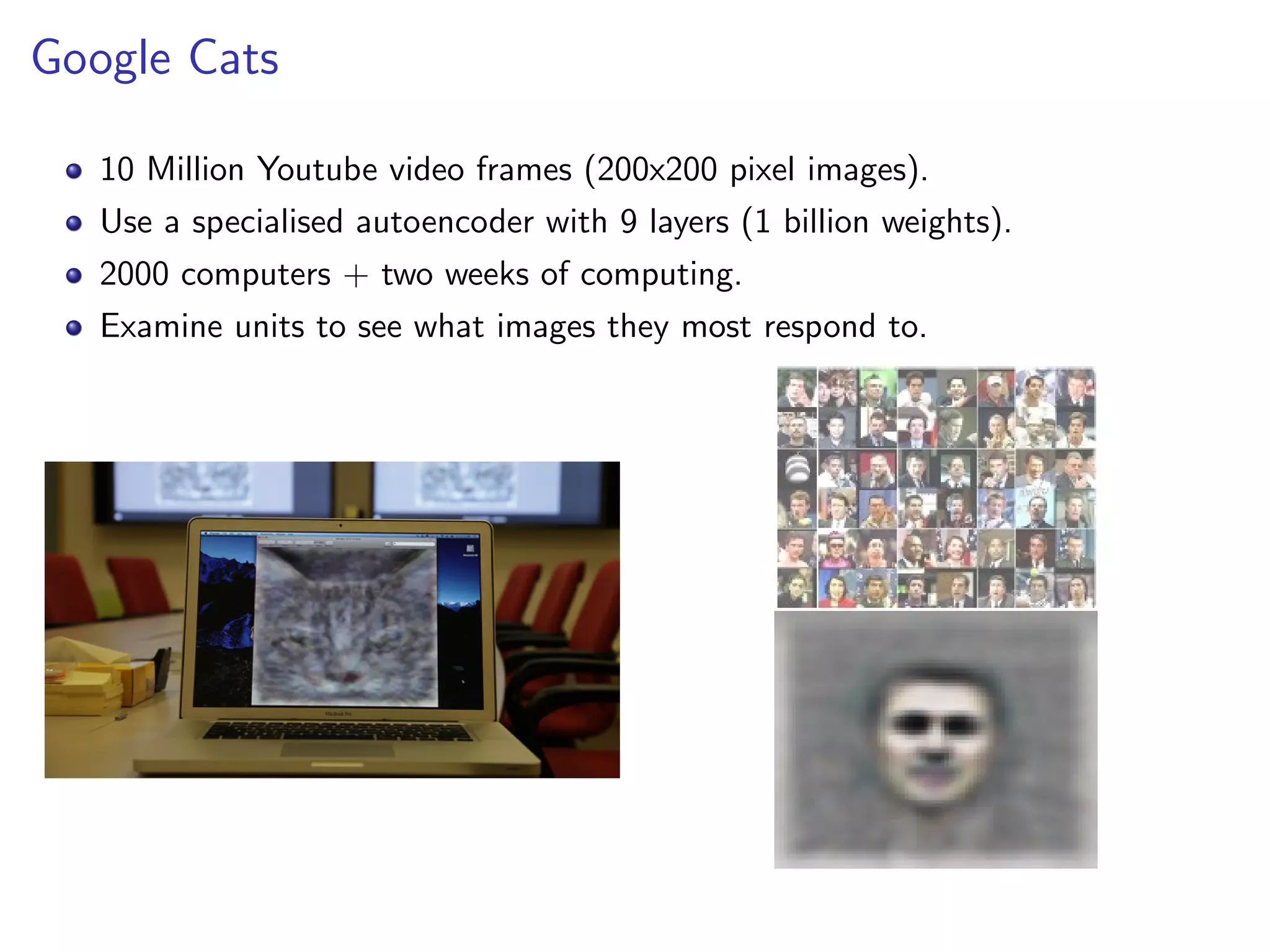

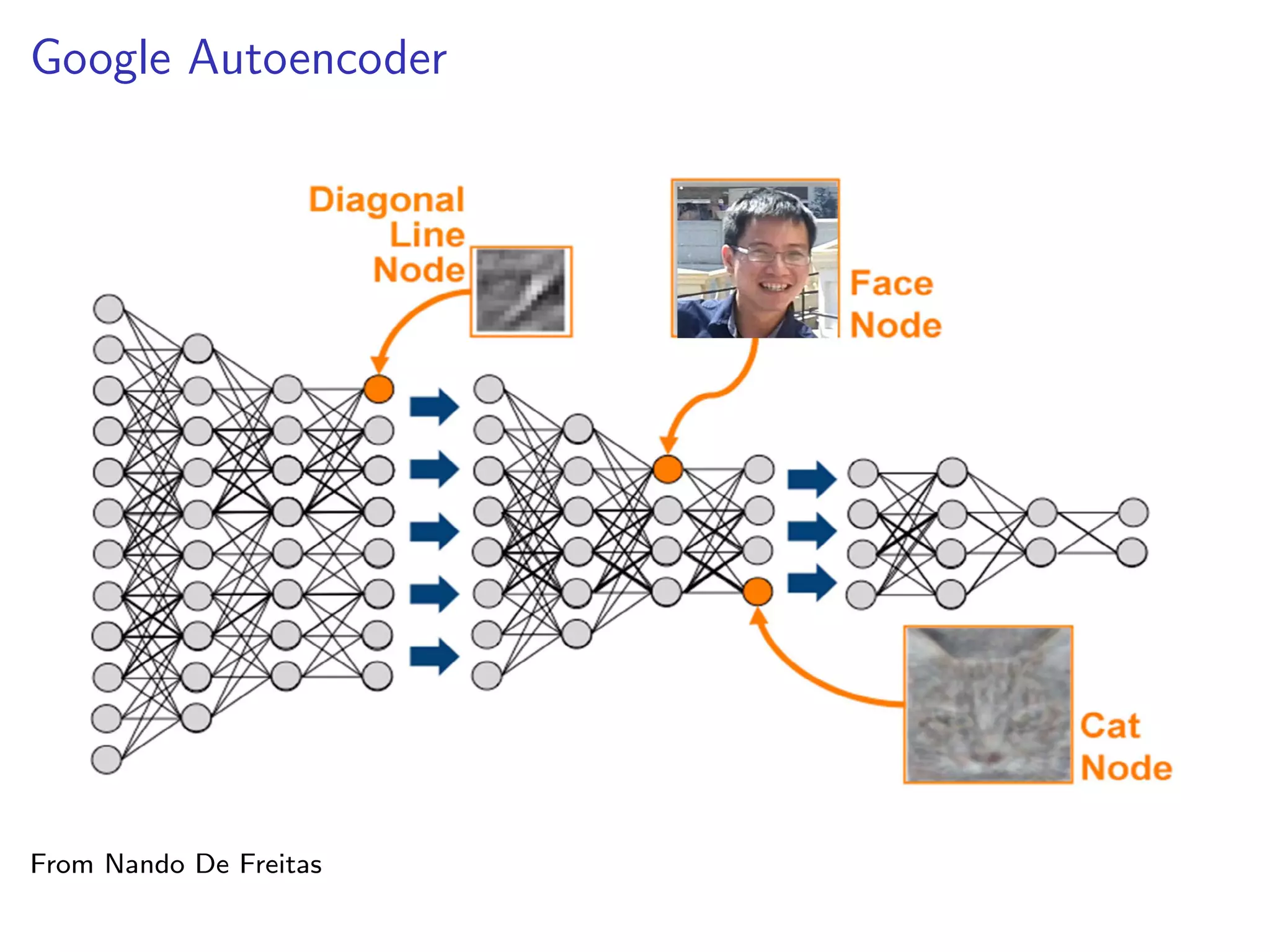

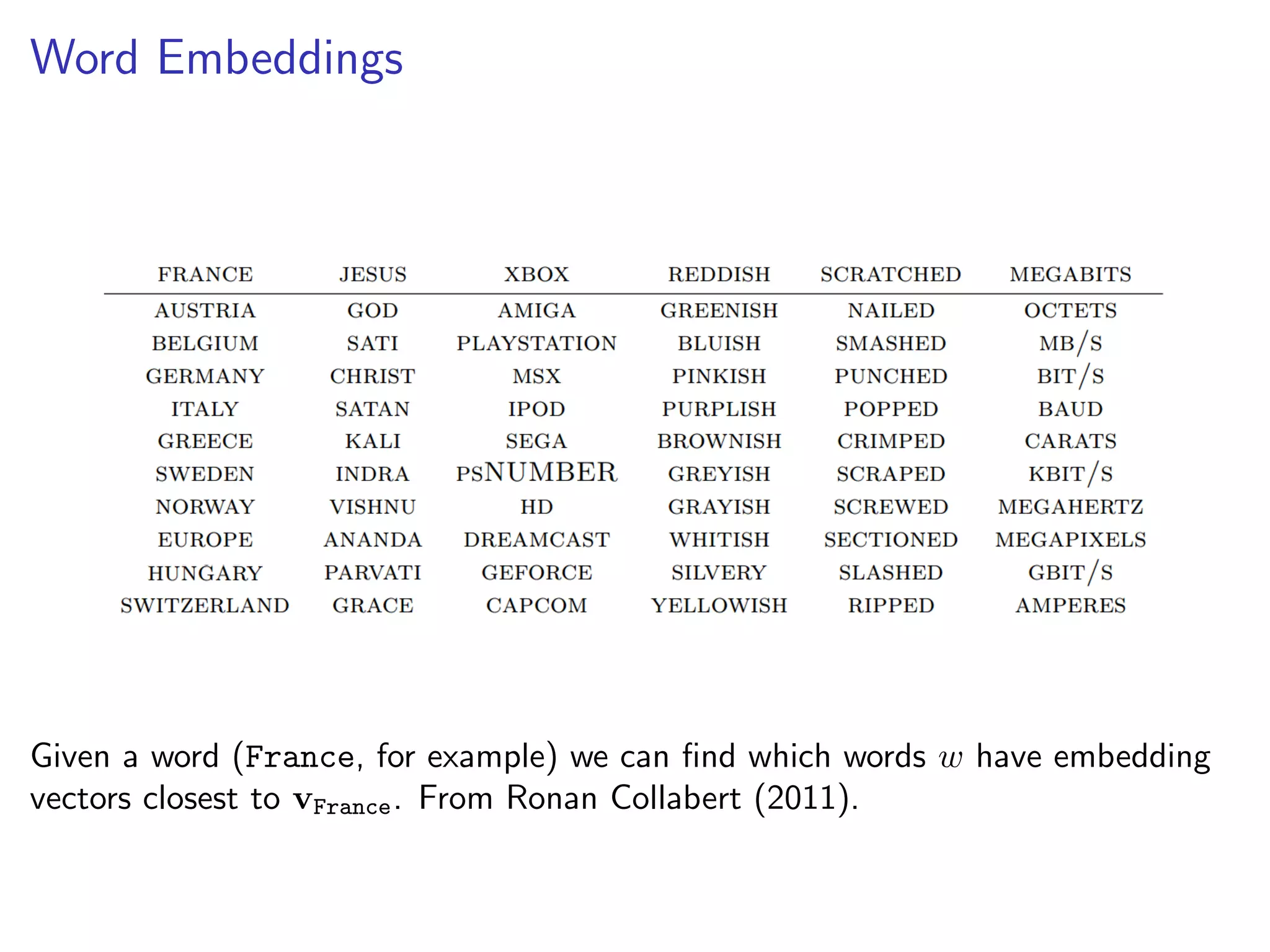

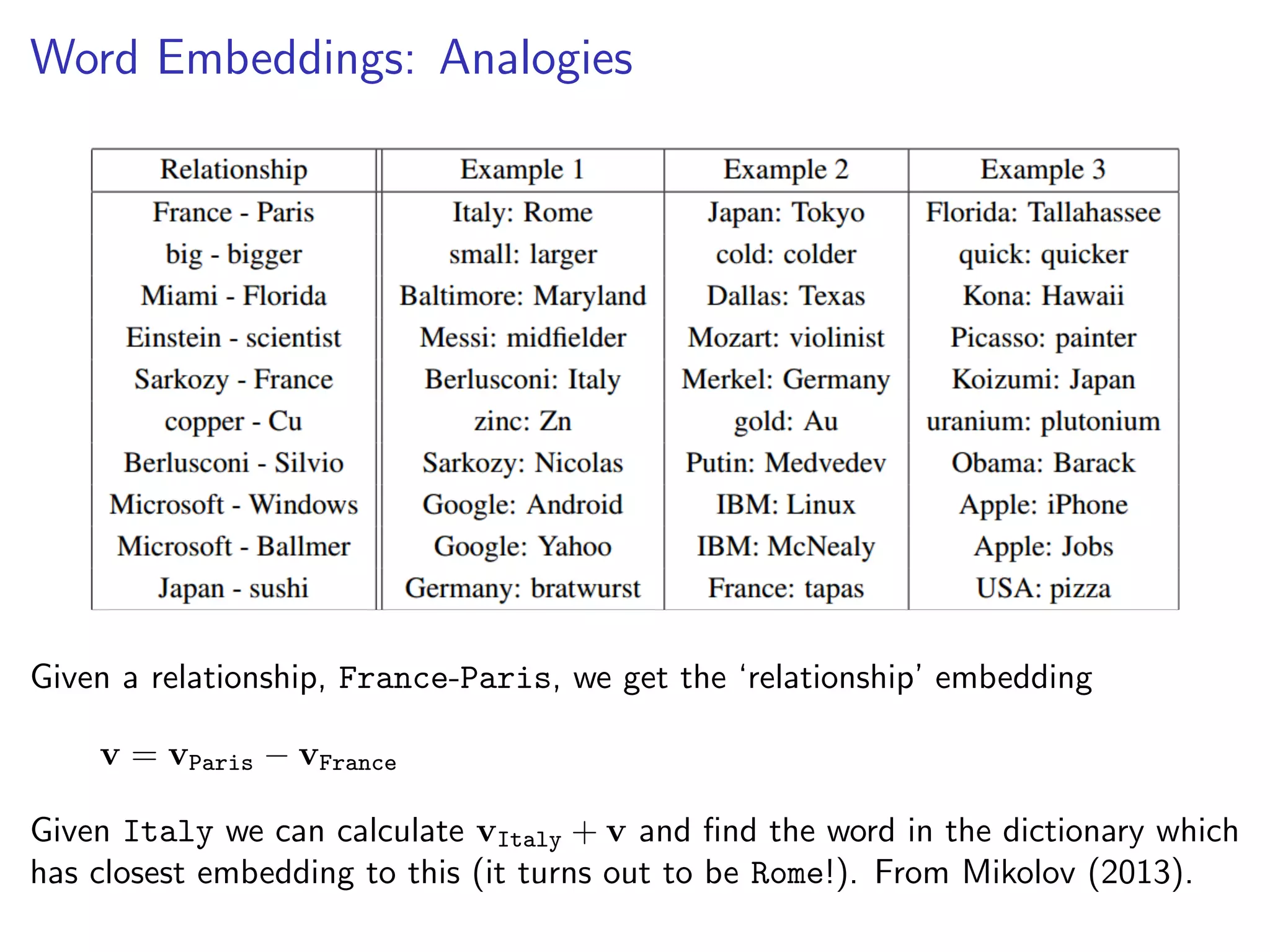

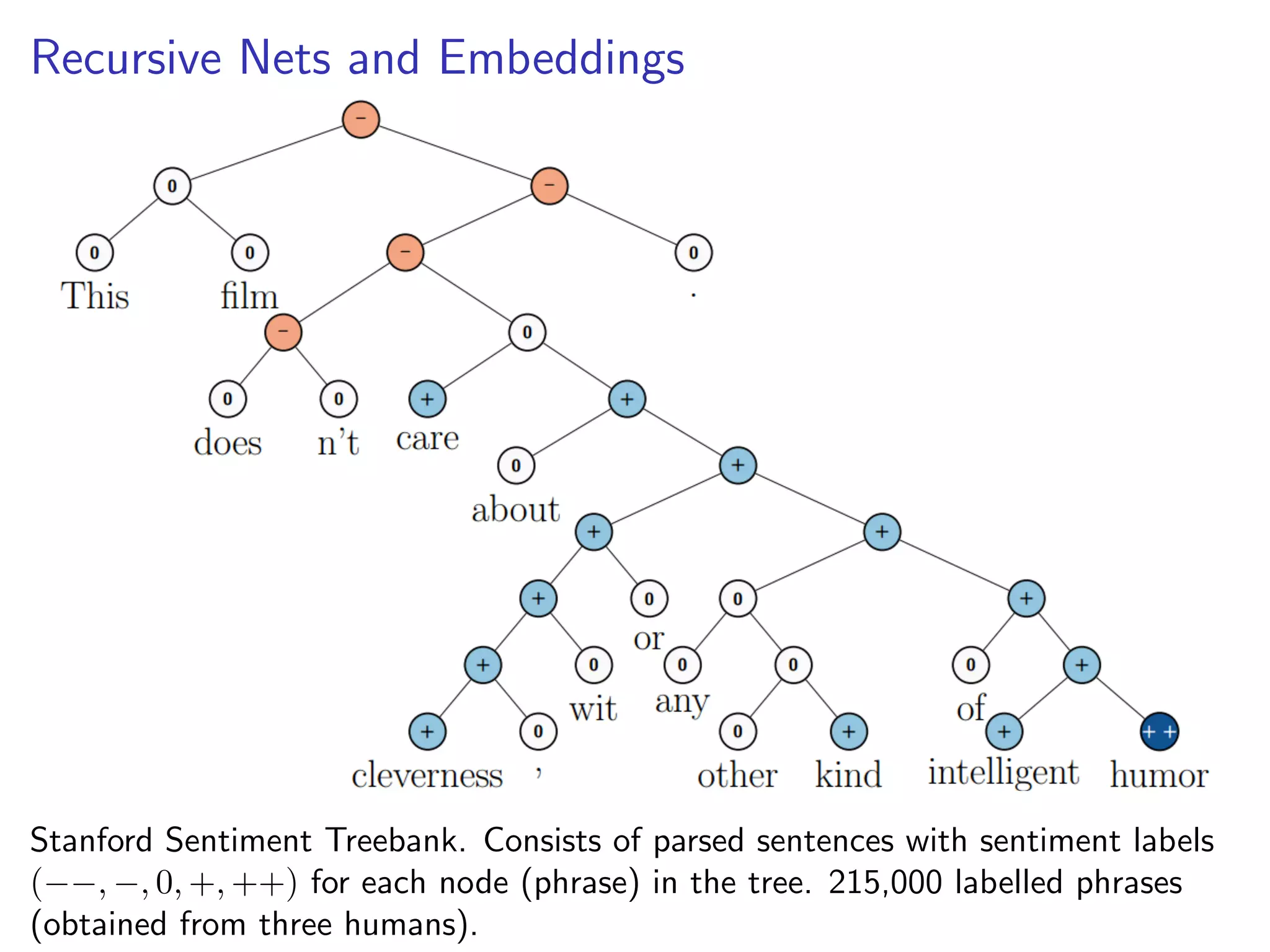

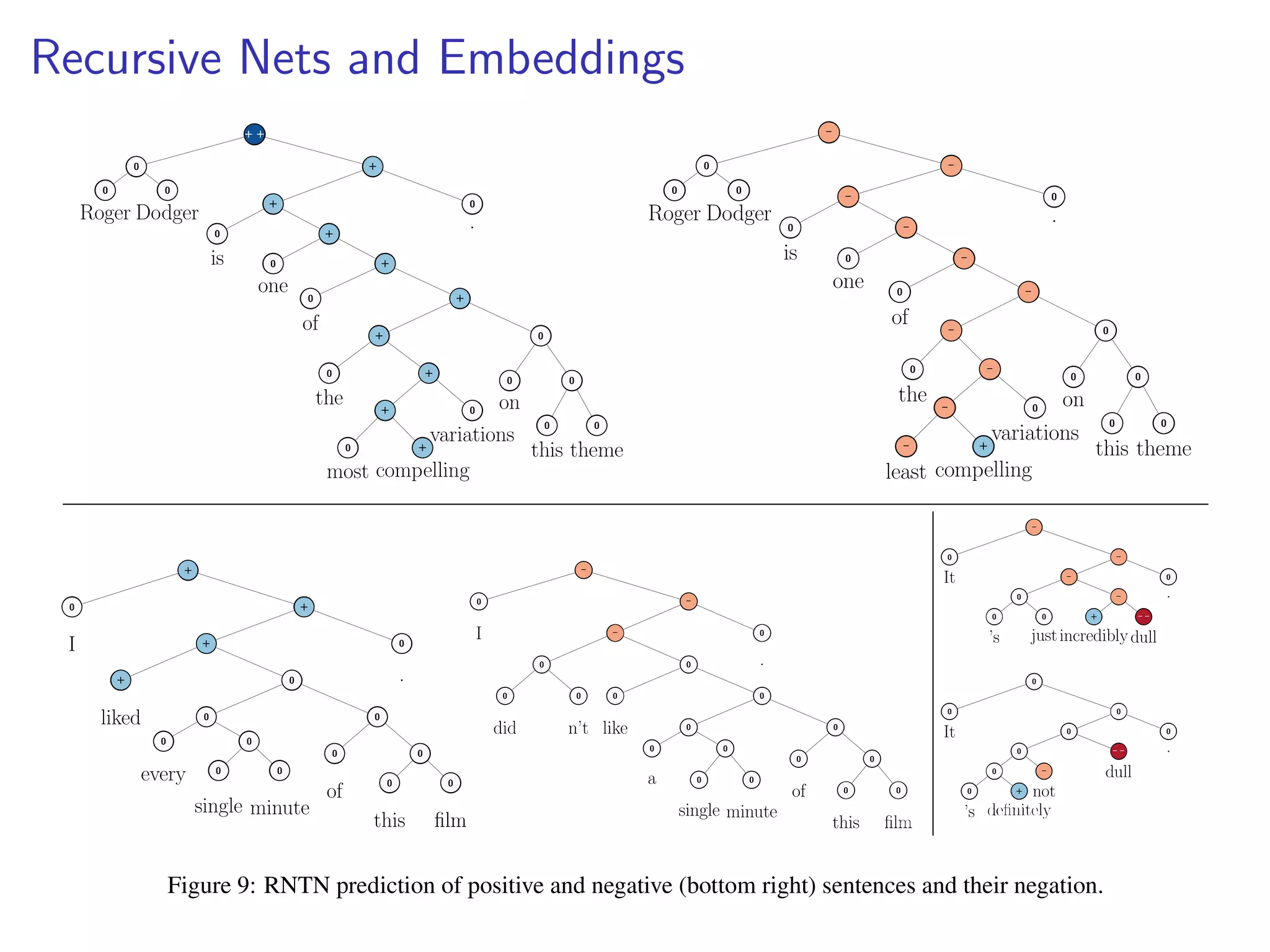

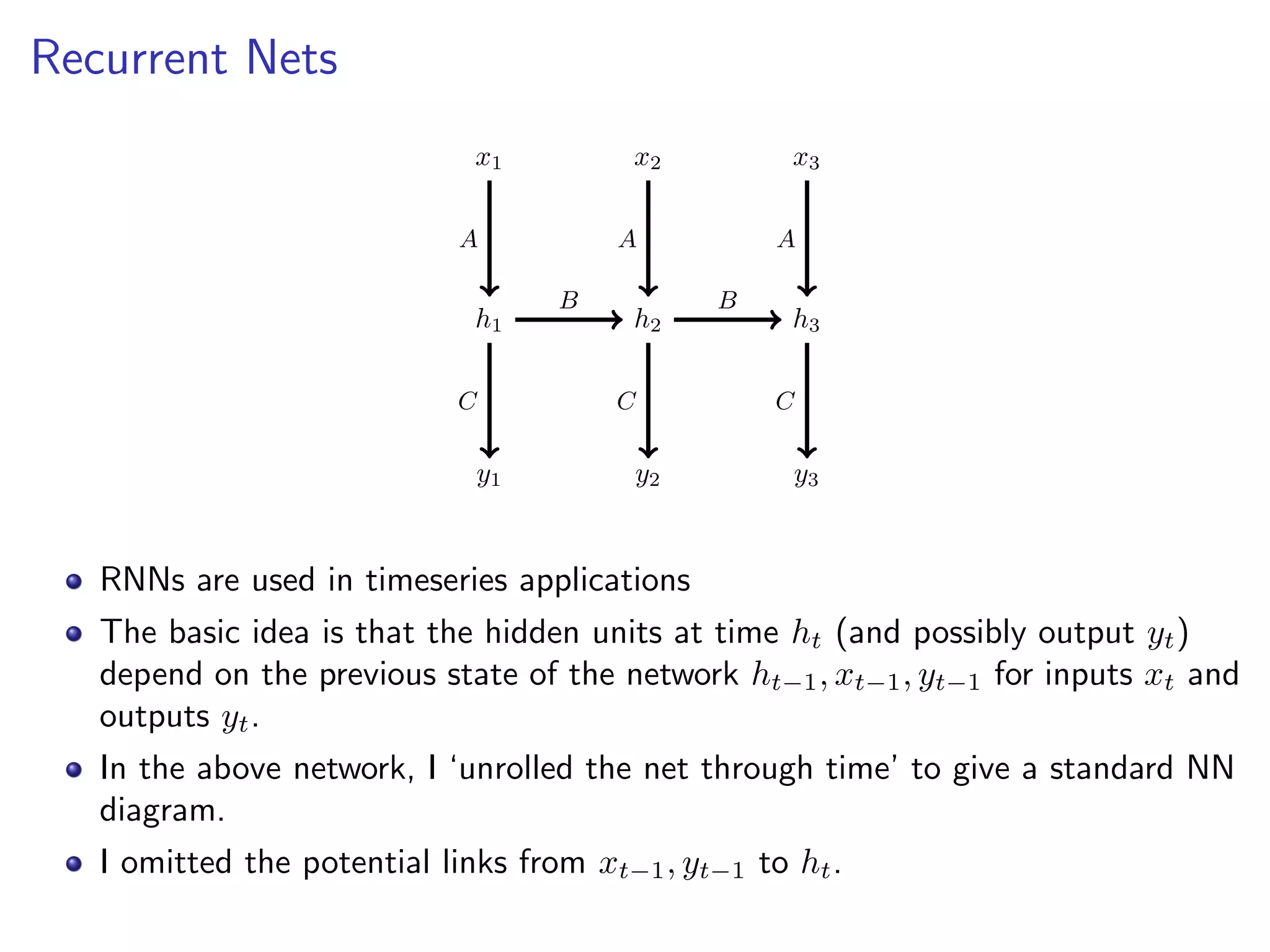

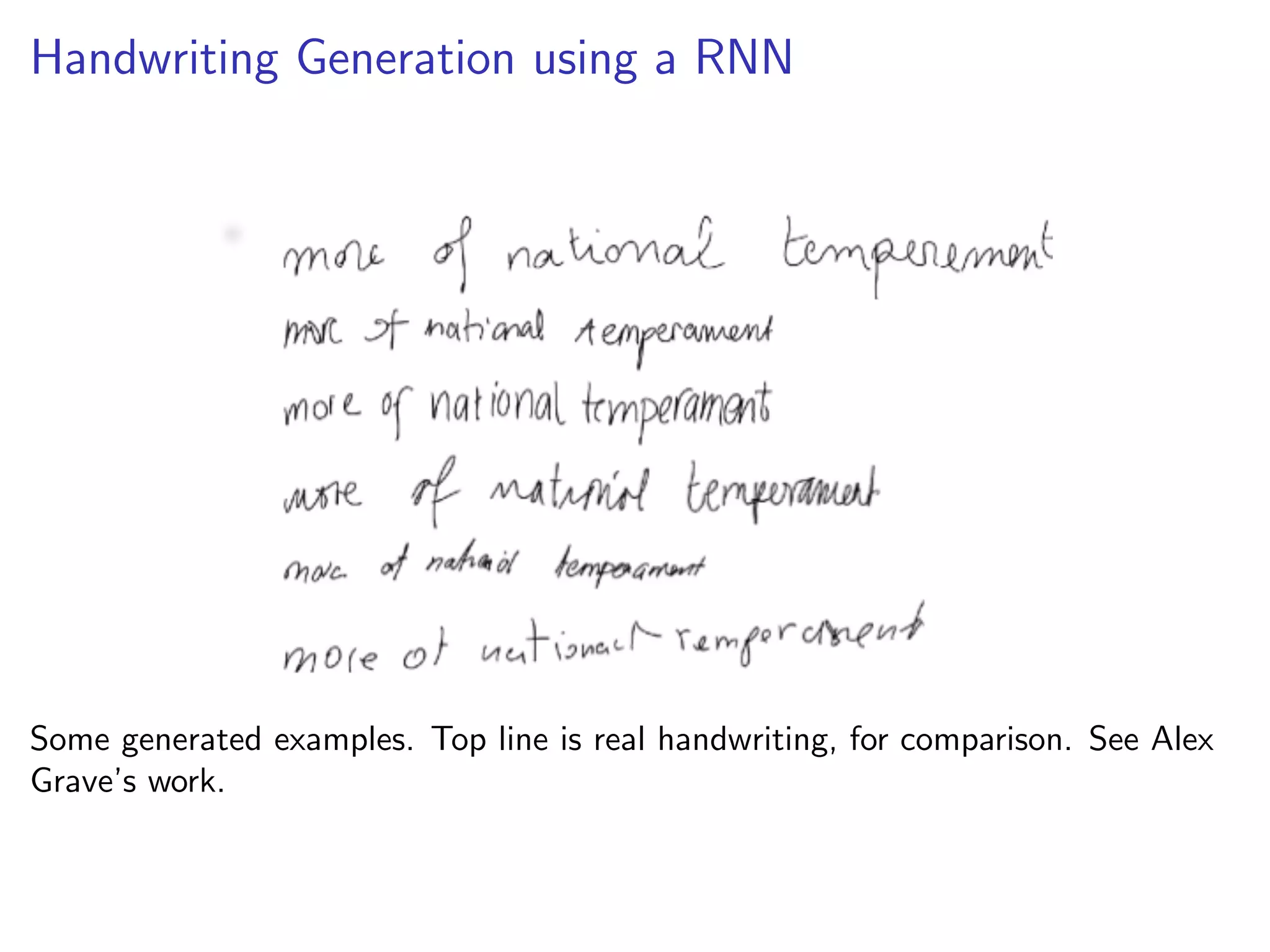

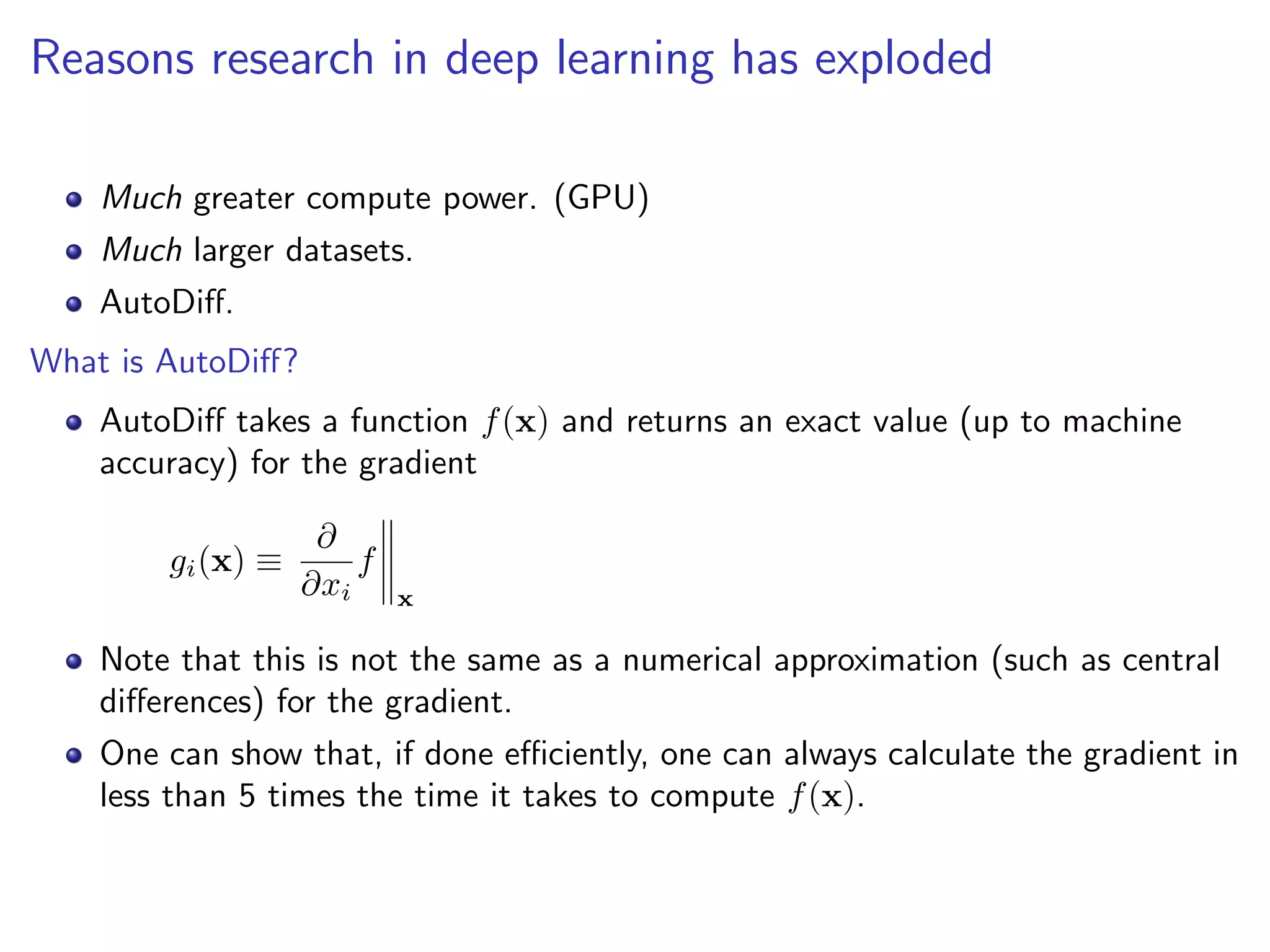

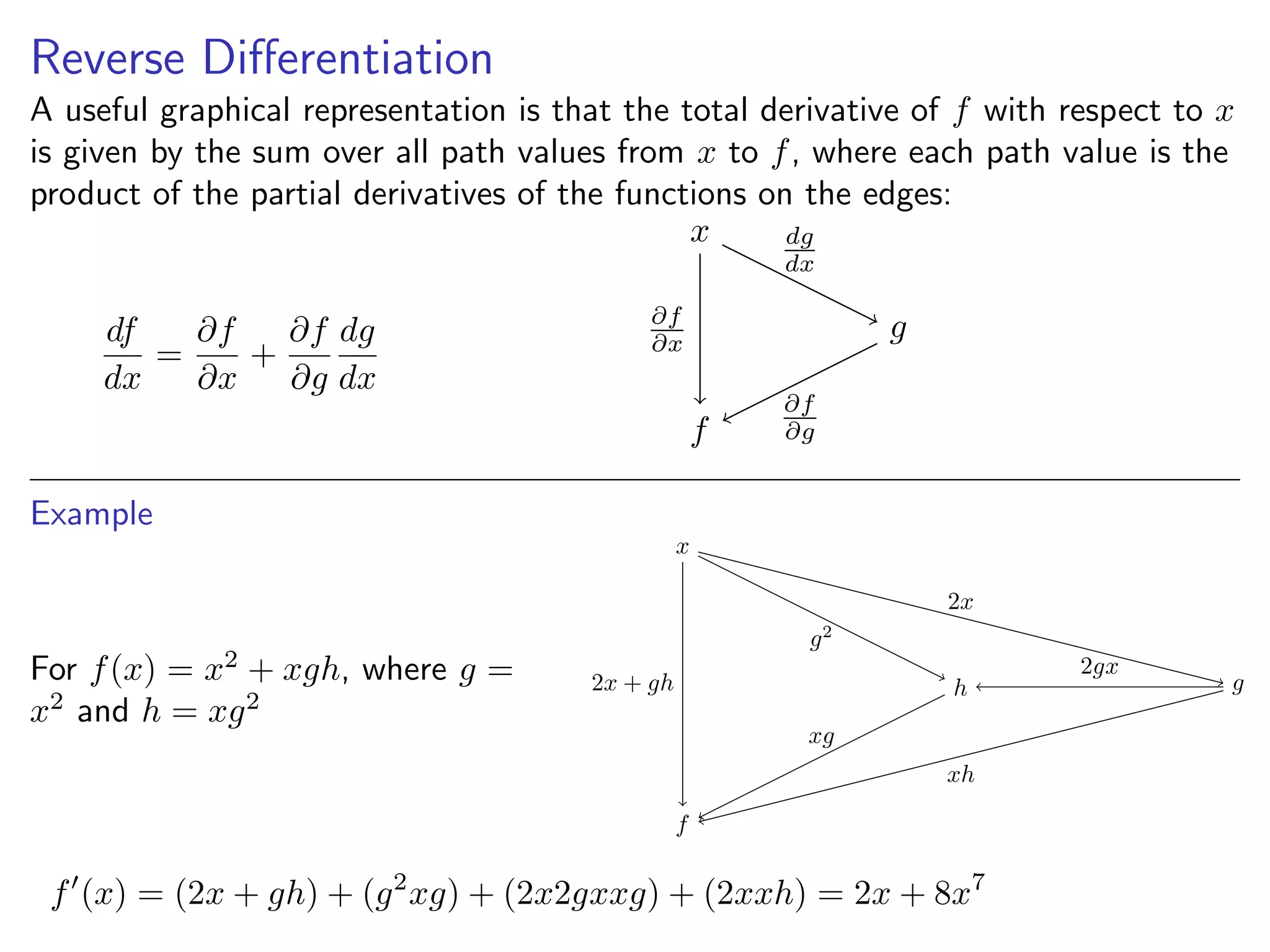

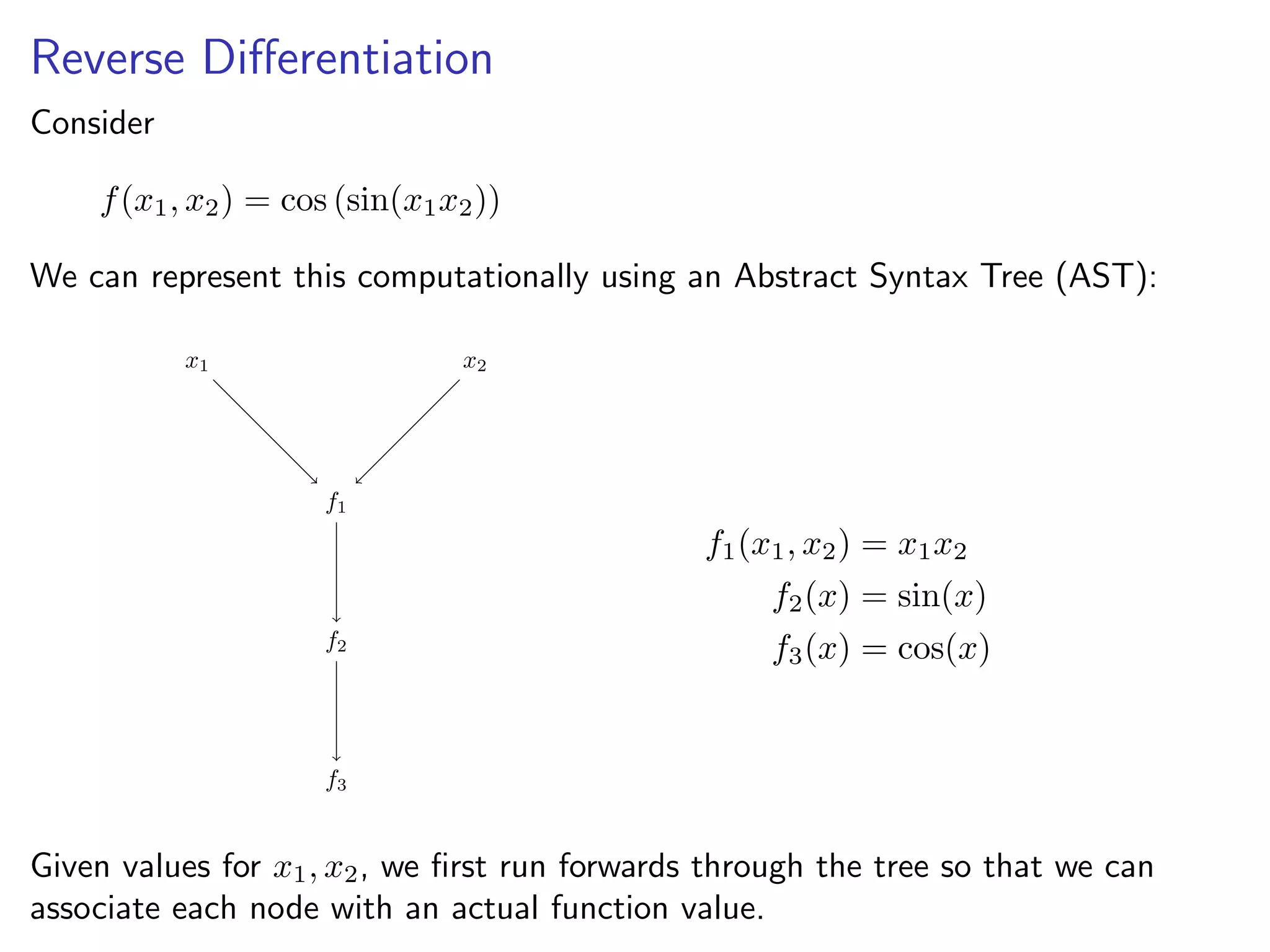

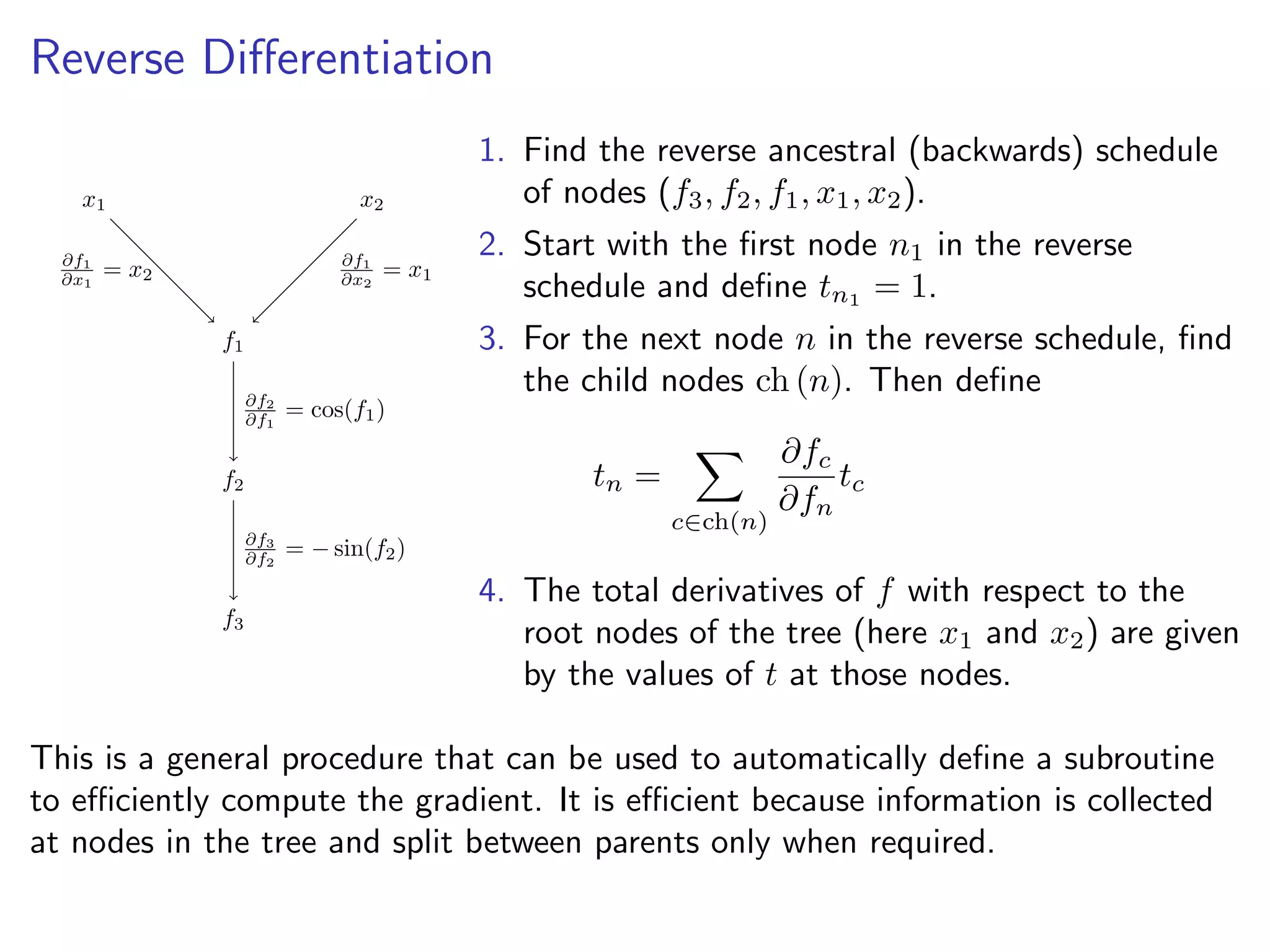

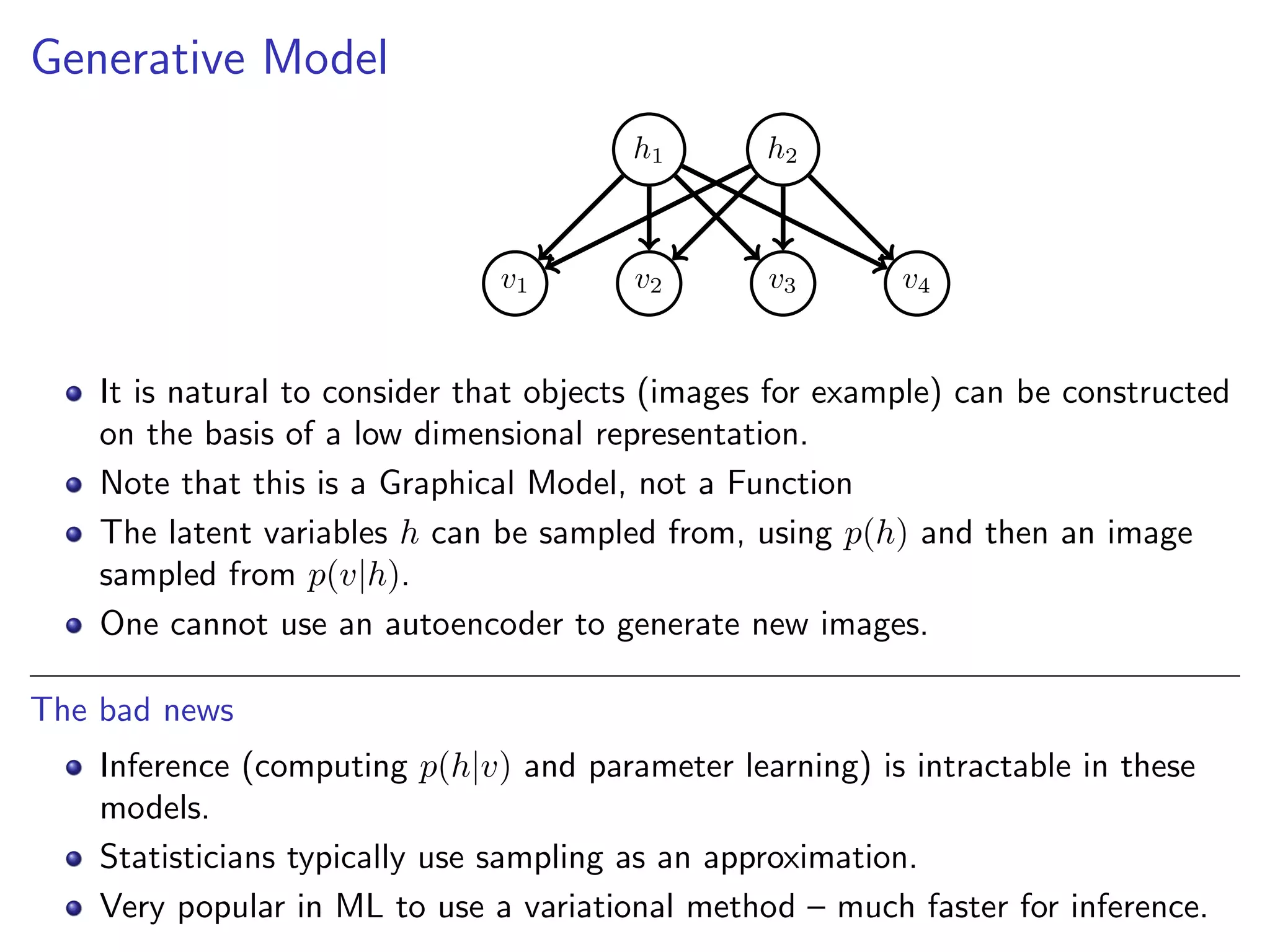

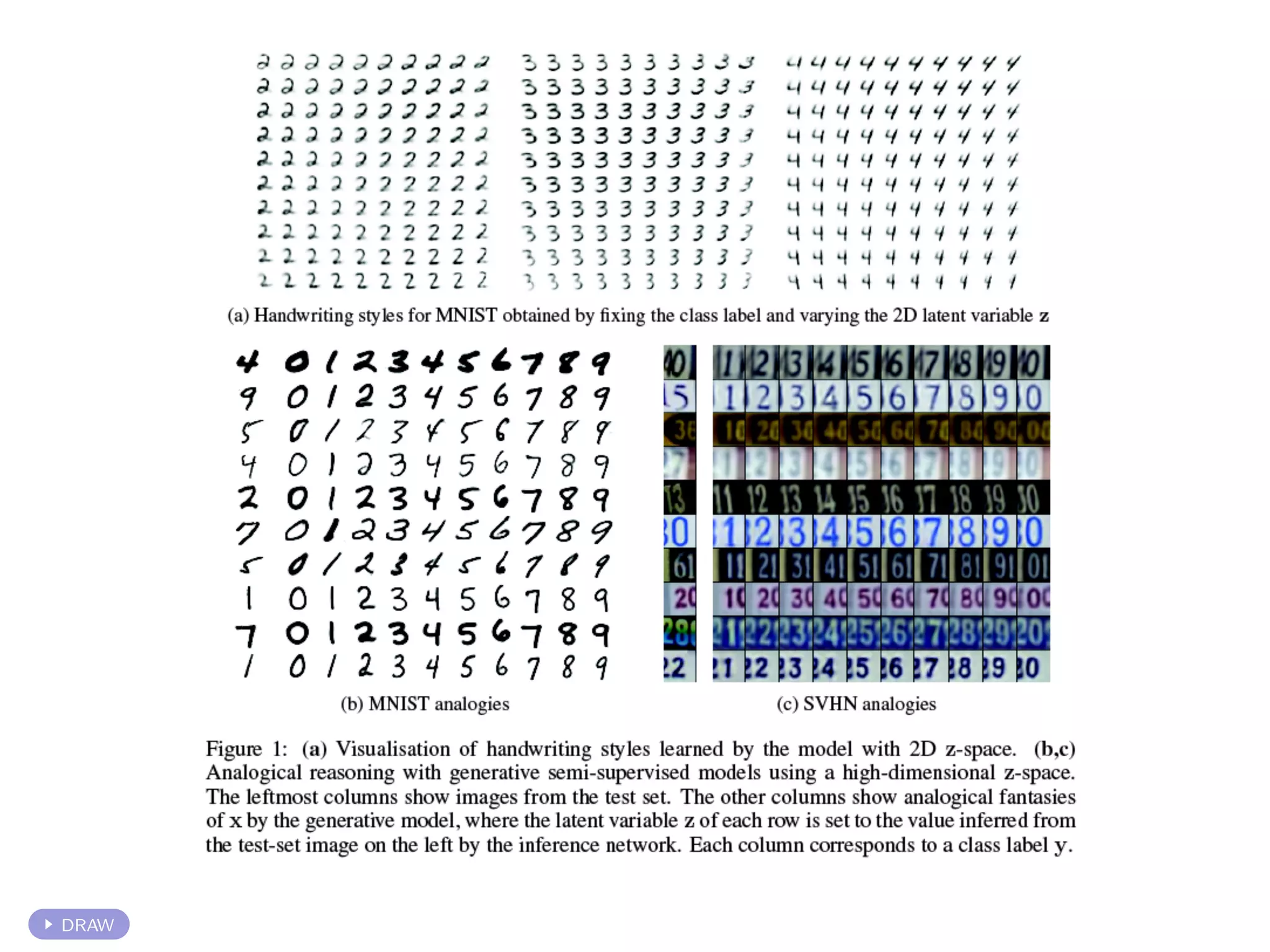

This document discusses the history of artificial intelligence and deep learning, along with recent developments in both. It covers early neural network research from the 1950s through the 1990s, including perceptrons, autoencoders, and connectionism. It attributes more recent progress to greater computing power, larger datasets, and the development of automatic differentiation techniques. The applications discussed include computer vision, natural language processing with word embeddings, and recurrent neural networks for tasks such as handwriting generation.