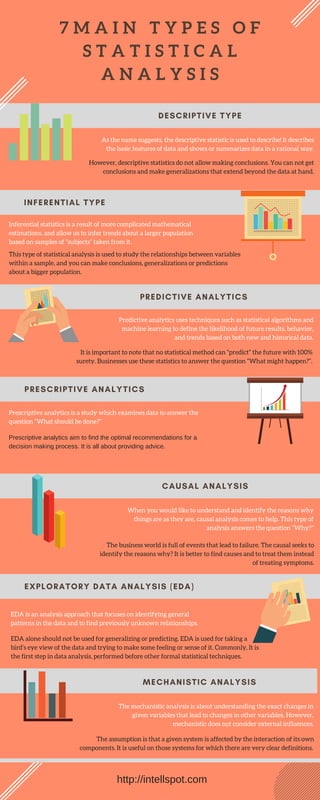

This document outlines 7 main types of statistical analysis:

1. Descriptive statistics summarize the basic features of a dataset, such as its center and spread, but do not support conclusions about a broader population.

2. Inferential statistics use a sample to draw conclusions and generalizations about a larger population, relying on statistical estimation.

3. Predictive analytics uses statistical algorithms and machine learning to estimate the likelihood of future outcomes and trends from historical data, though it cannot predict the future with certainty.

4. Causal analysis examines the reasons behind outcomes, answering why something occurred.