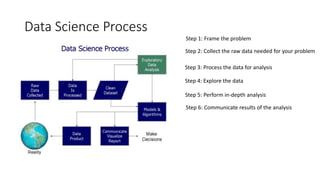

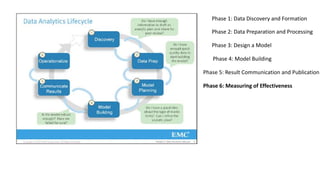

This document introduces core data science concepts. It describes the components of data science (statistics, visualization, data engineering, advanced computing, and machine learning), weighs the advantages and disadvantages of the field, and surveys common applications. Finally, it outlines the phases of the data science process: framing the problem, collecting and processing data, exploring and analyzing data, communicating results, and measuring effectiveness.