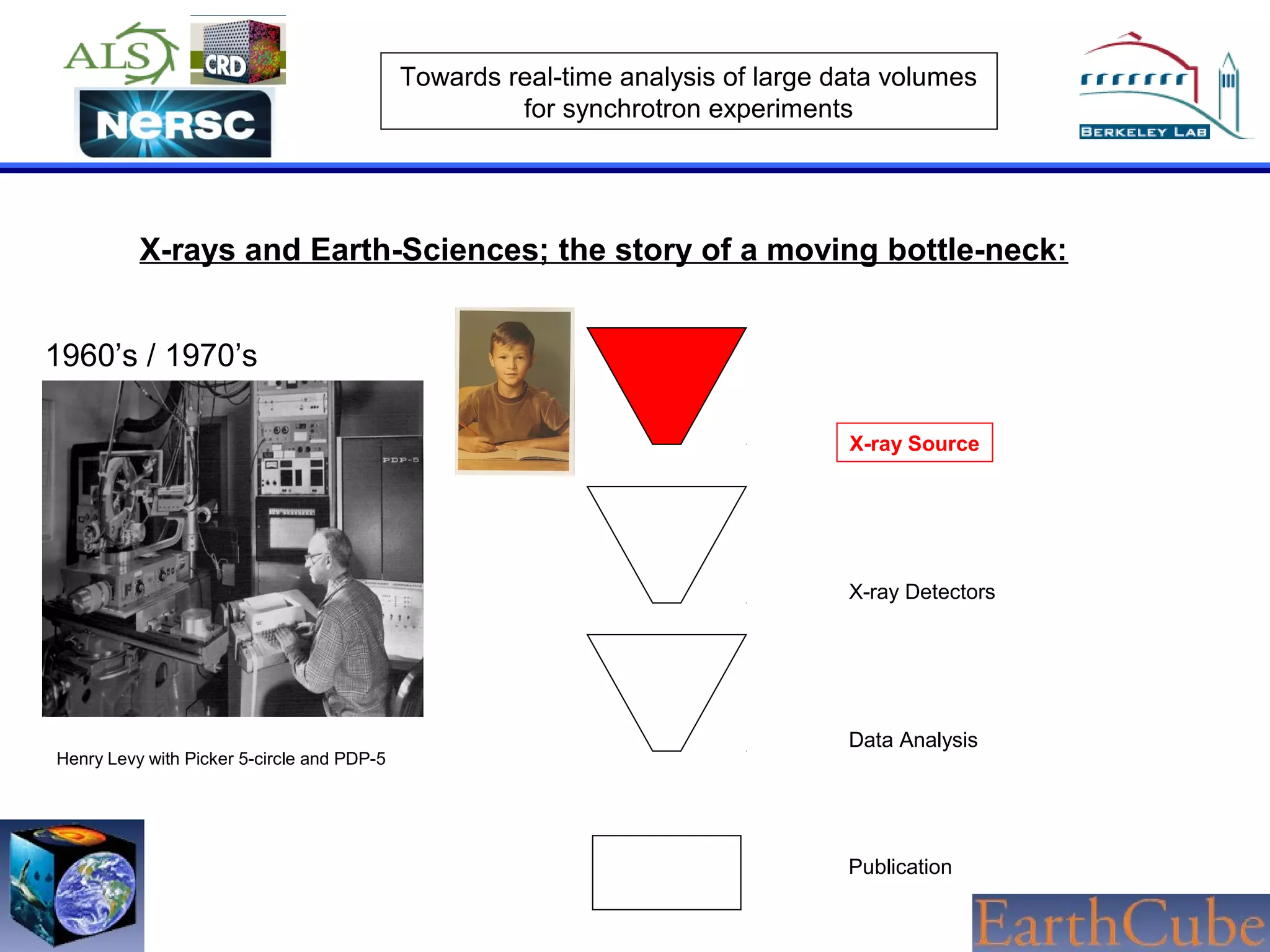

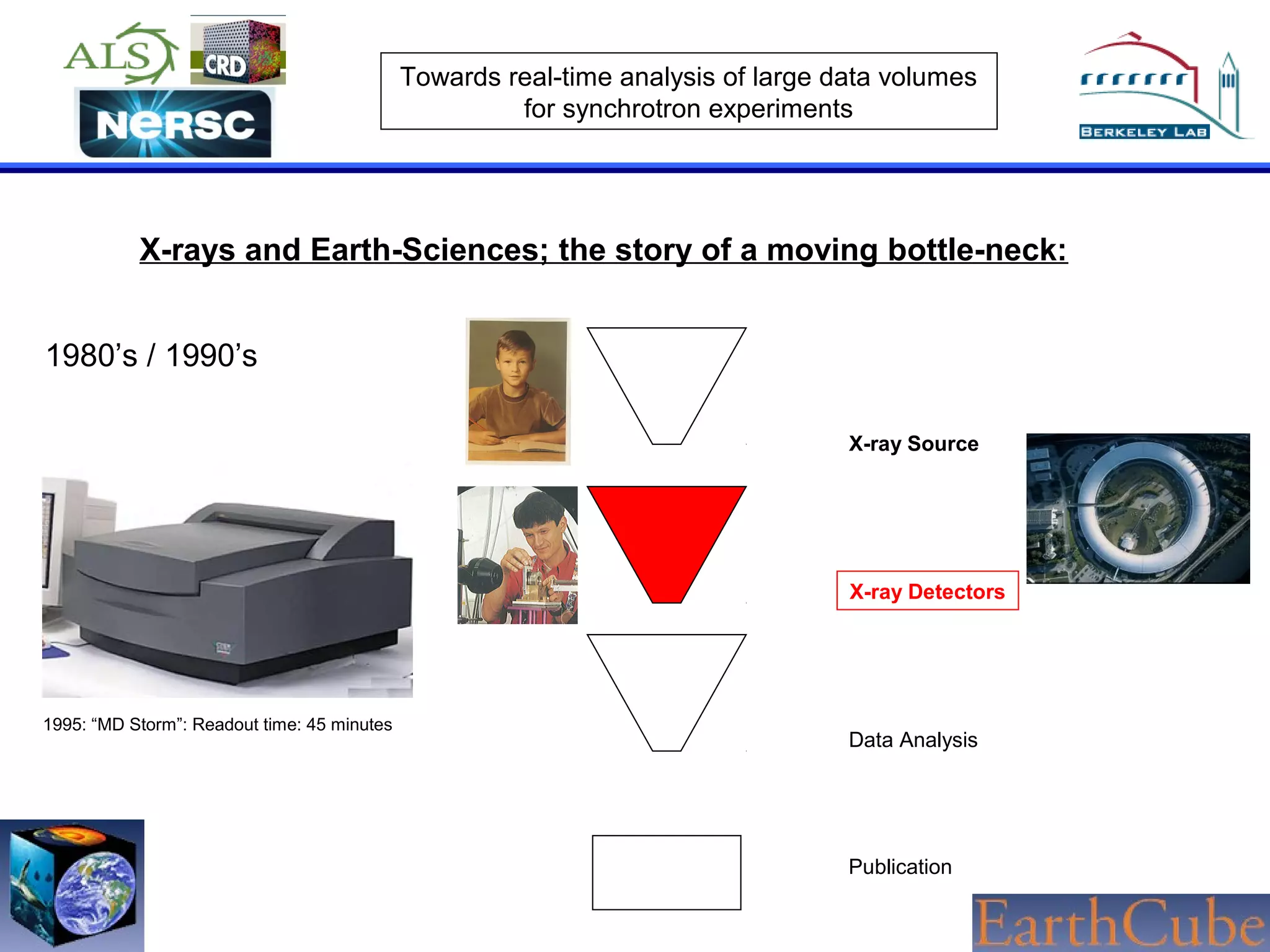

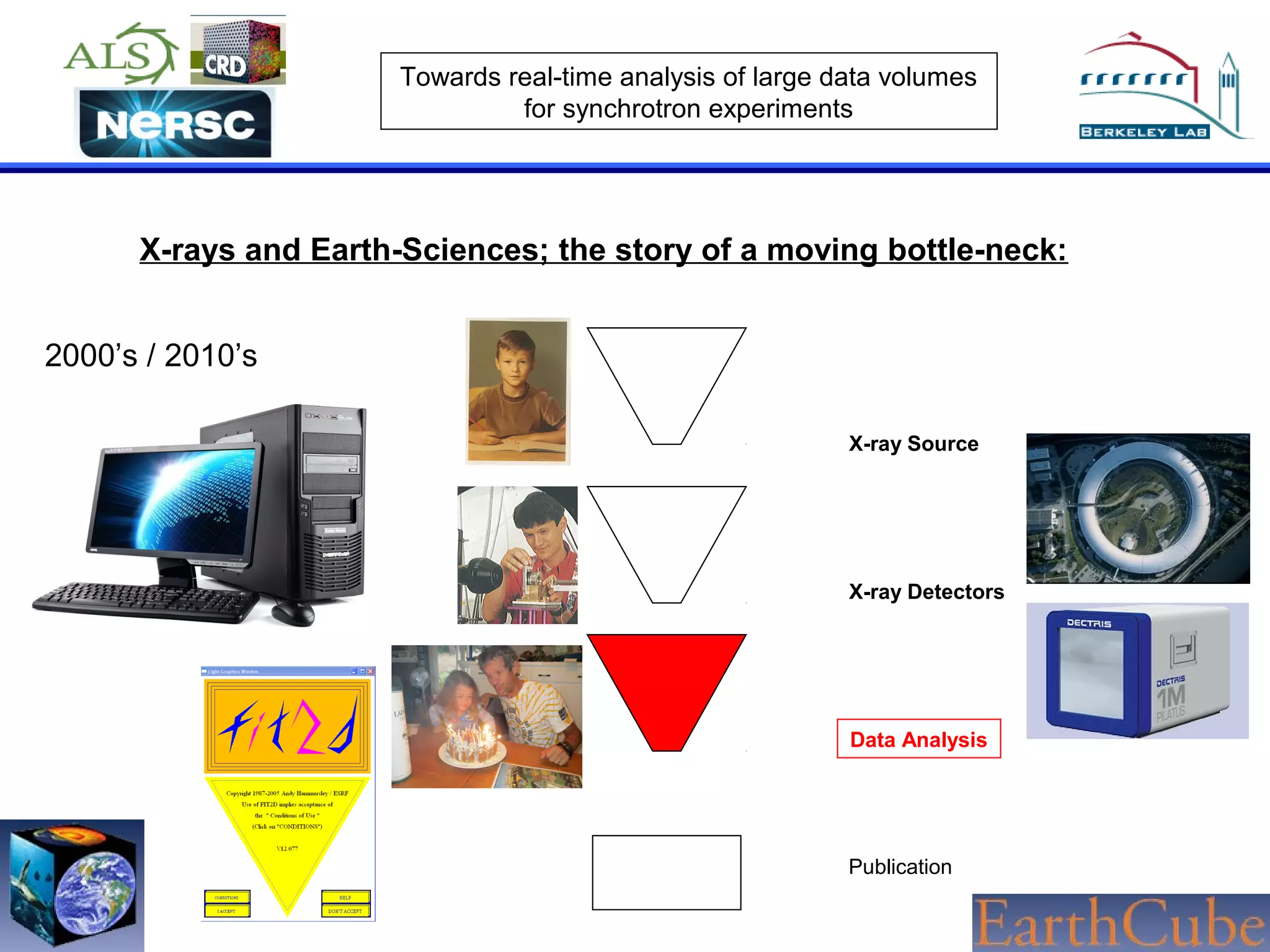

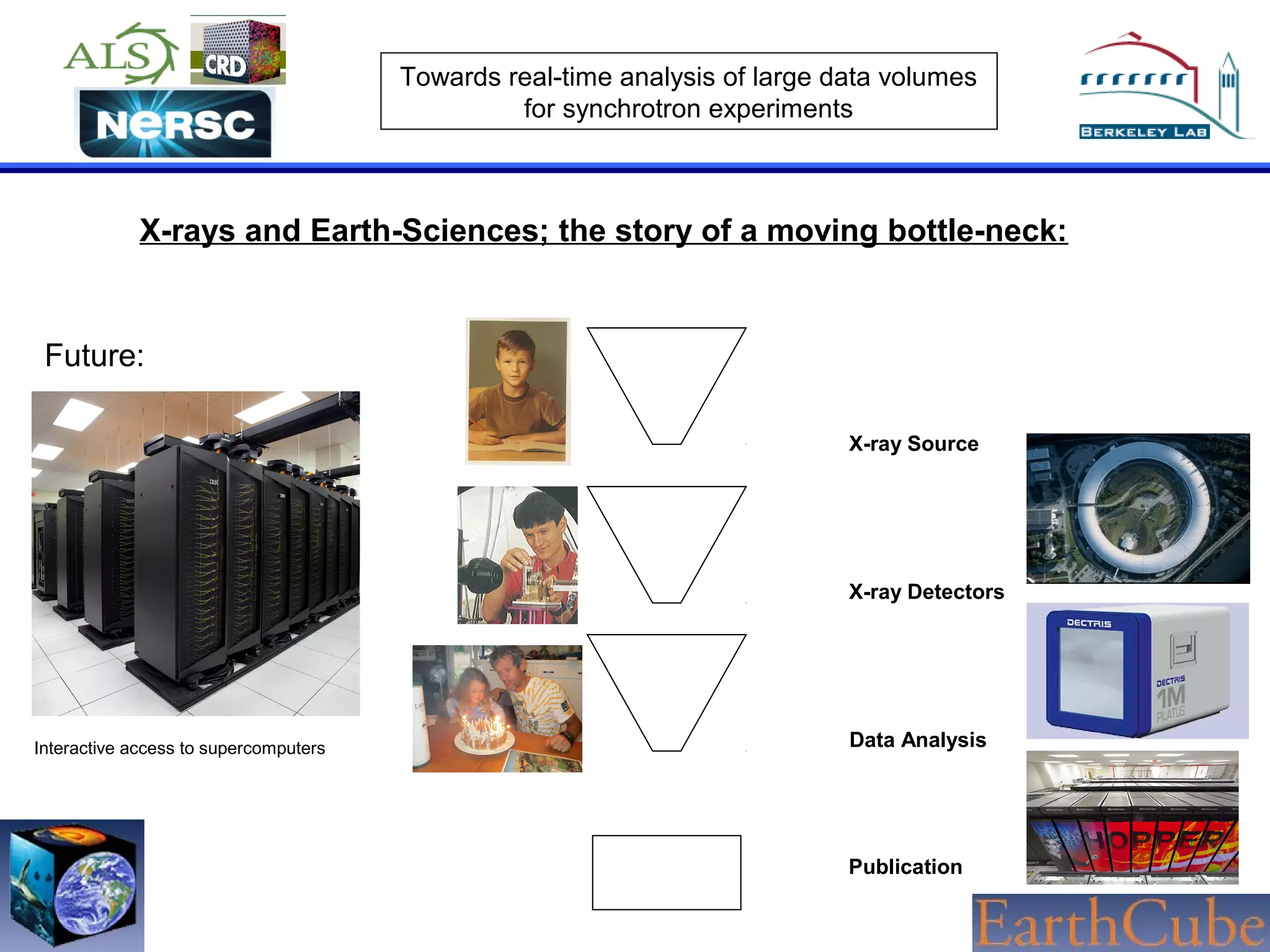

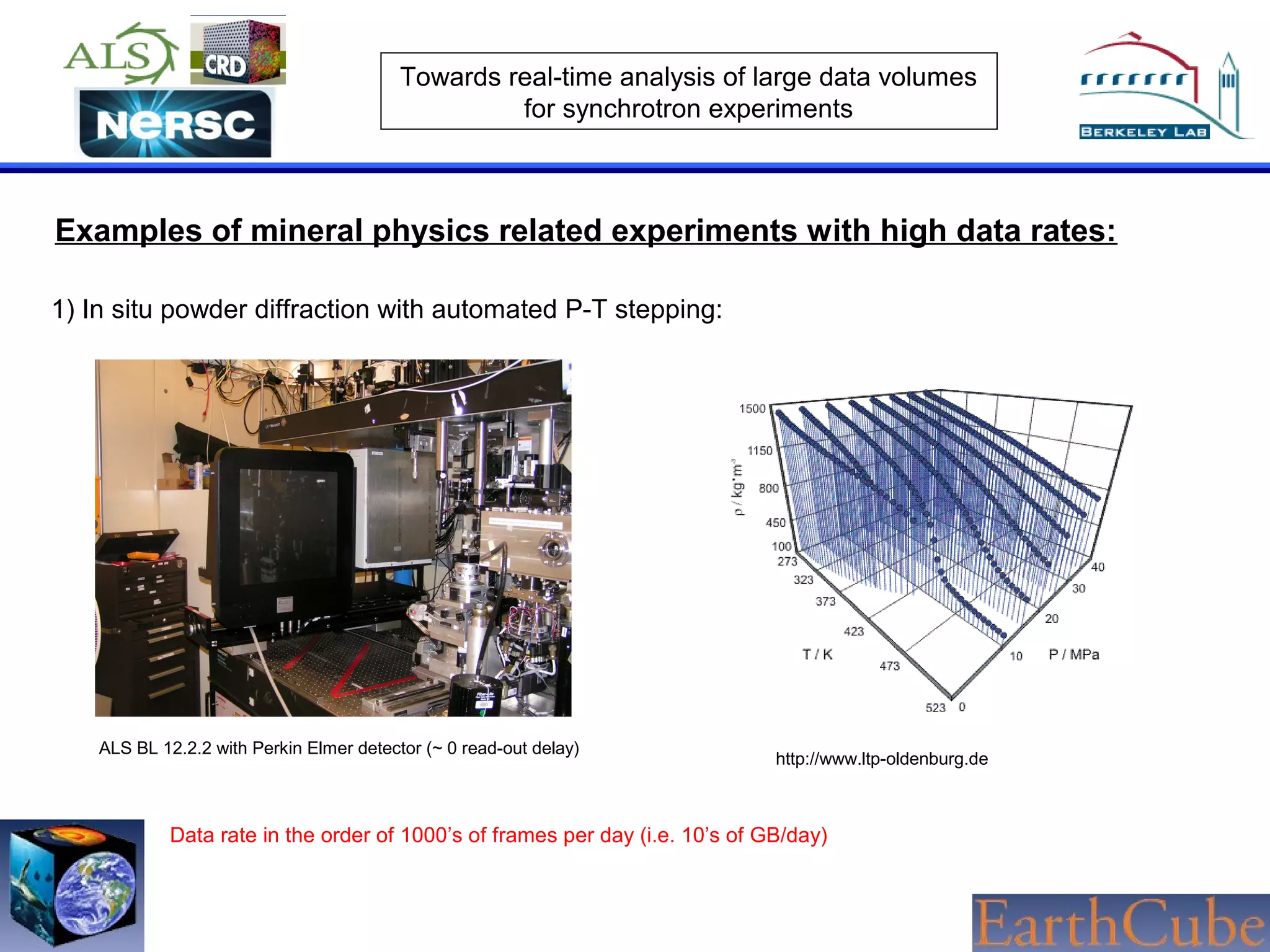

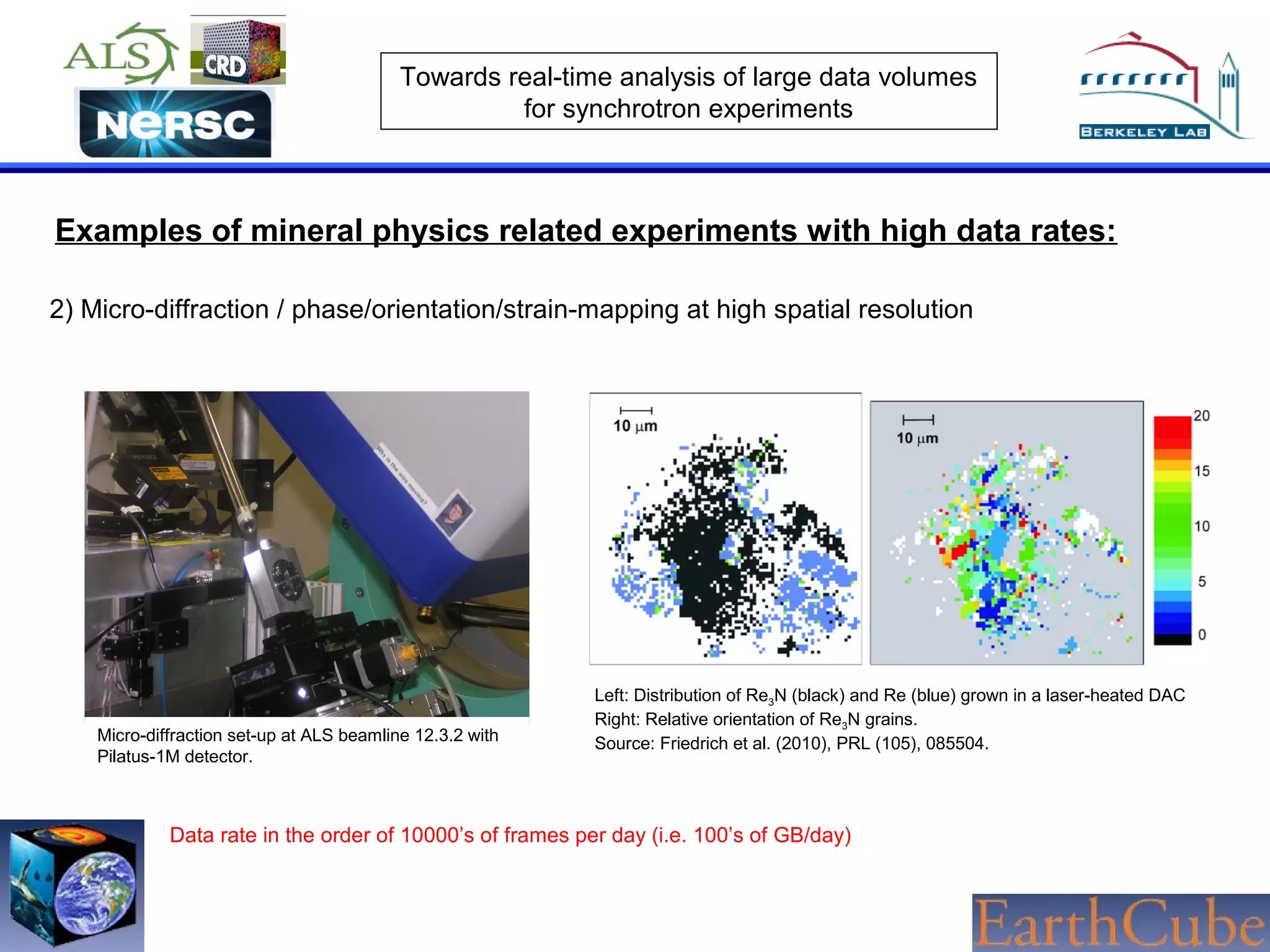

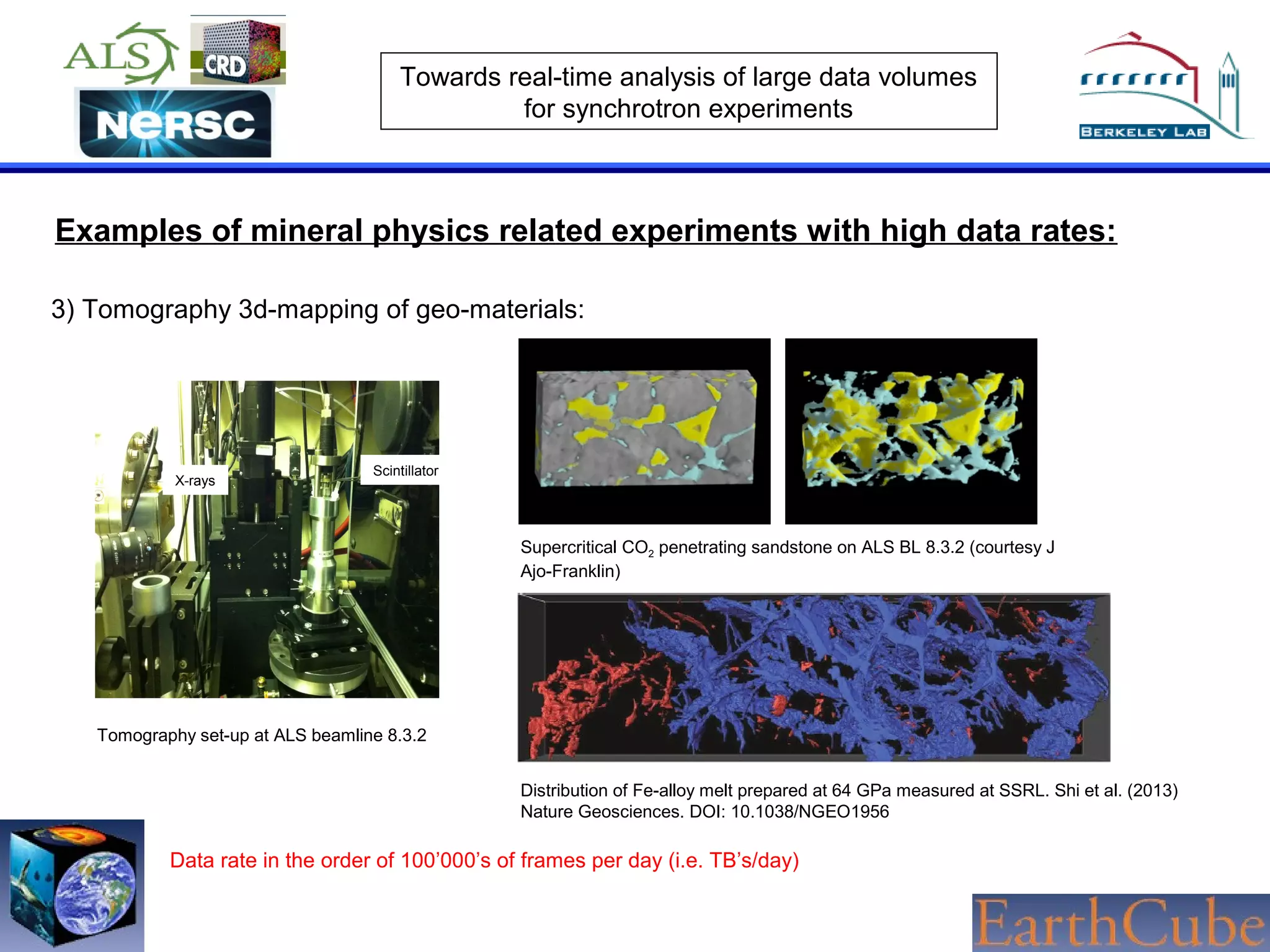

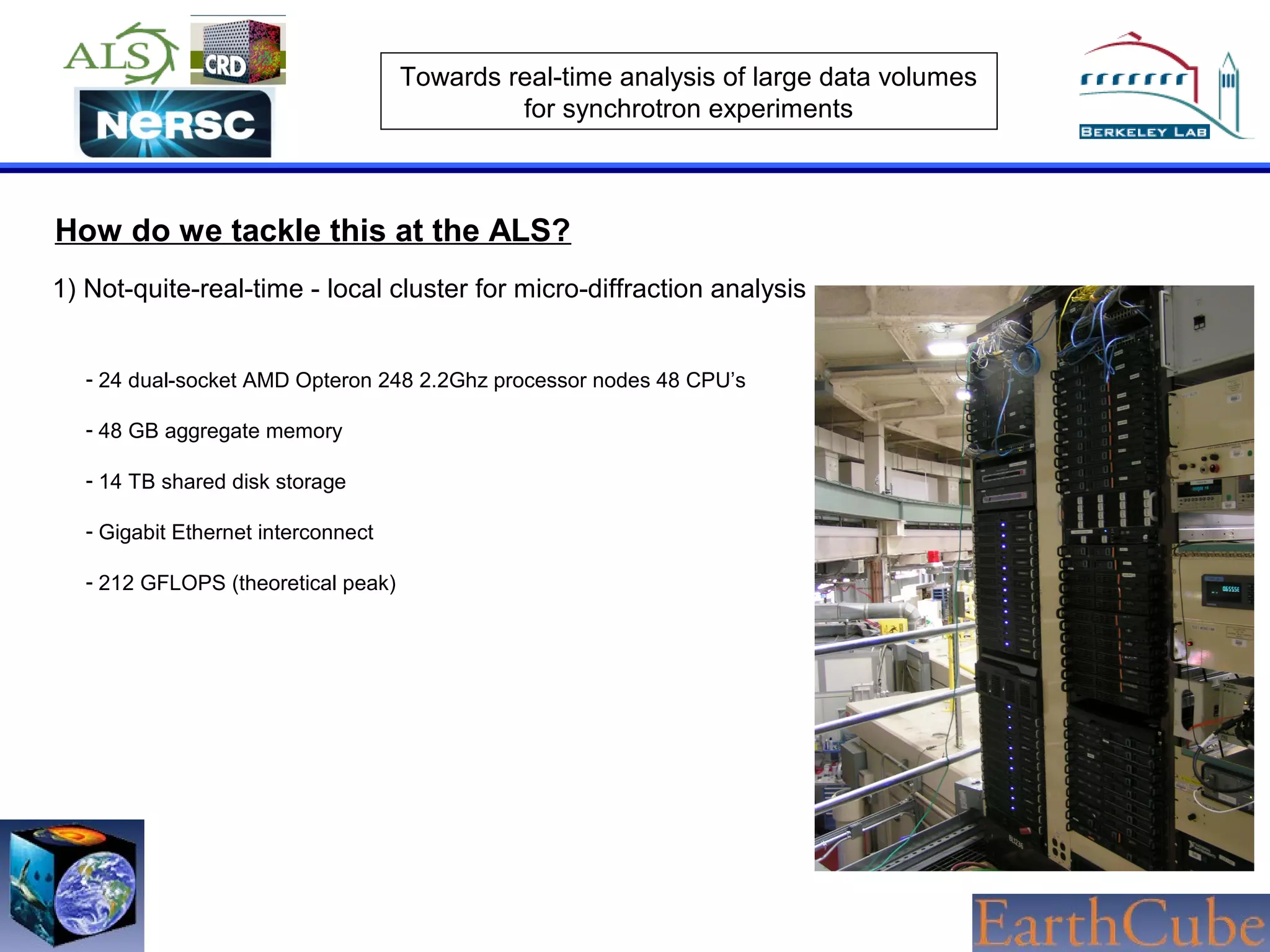

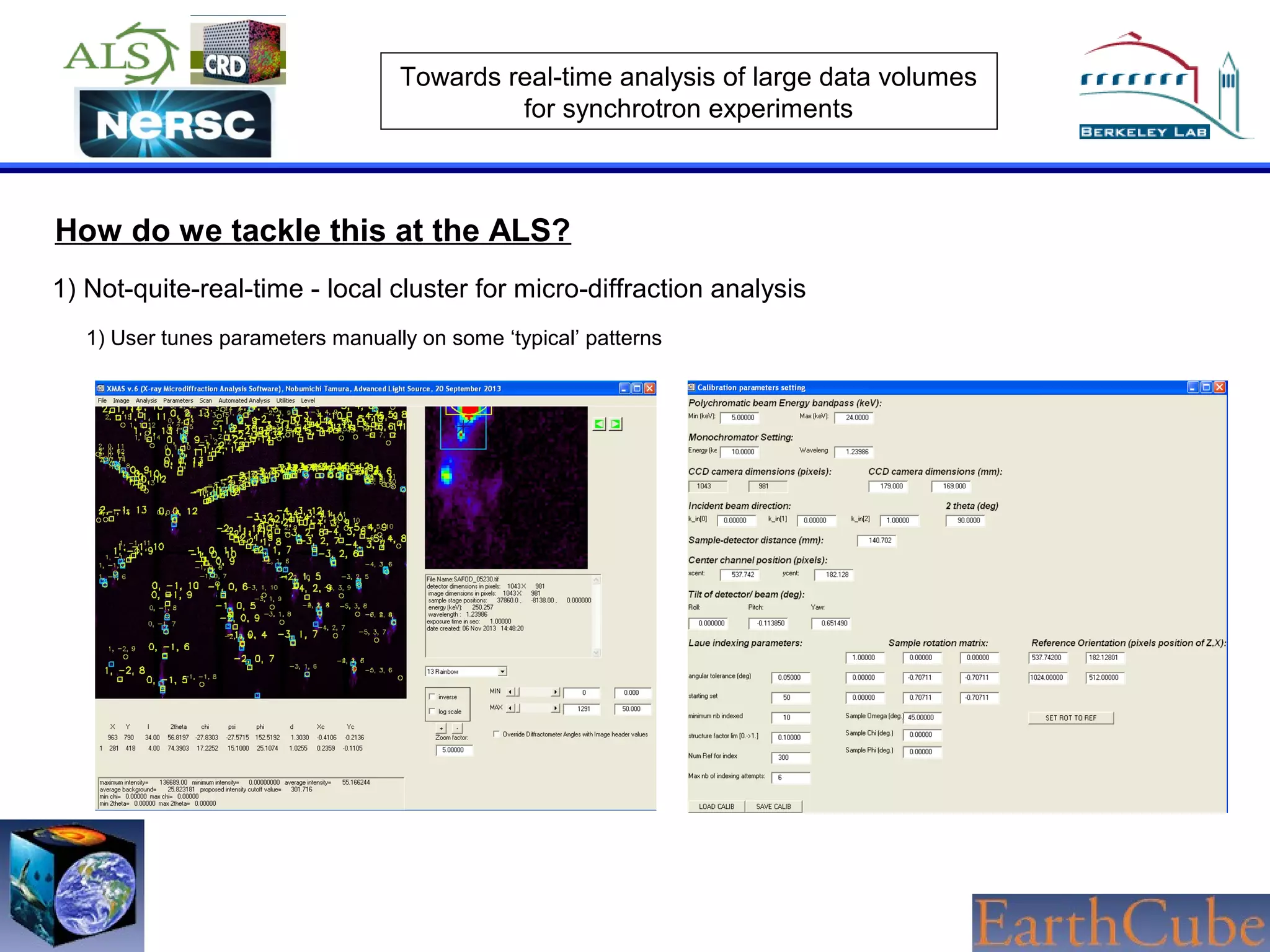

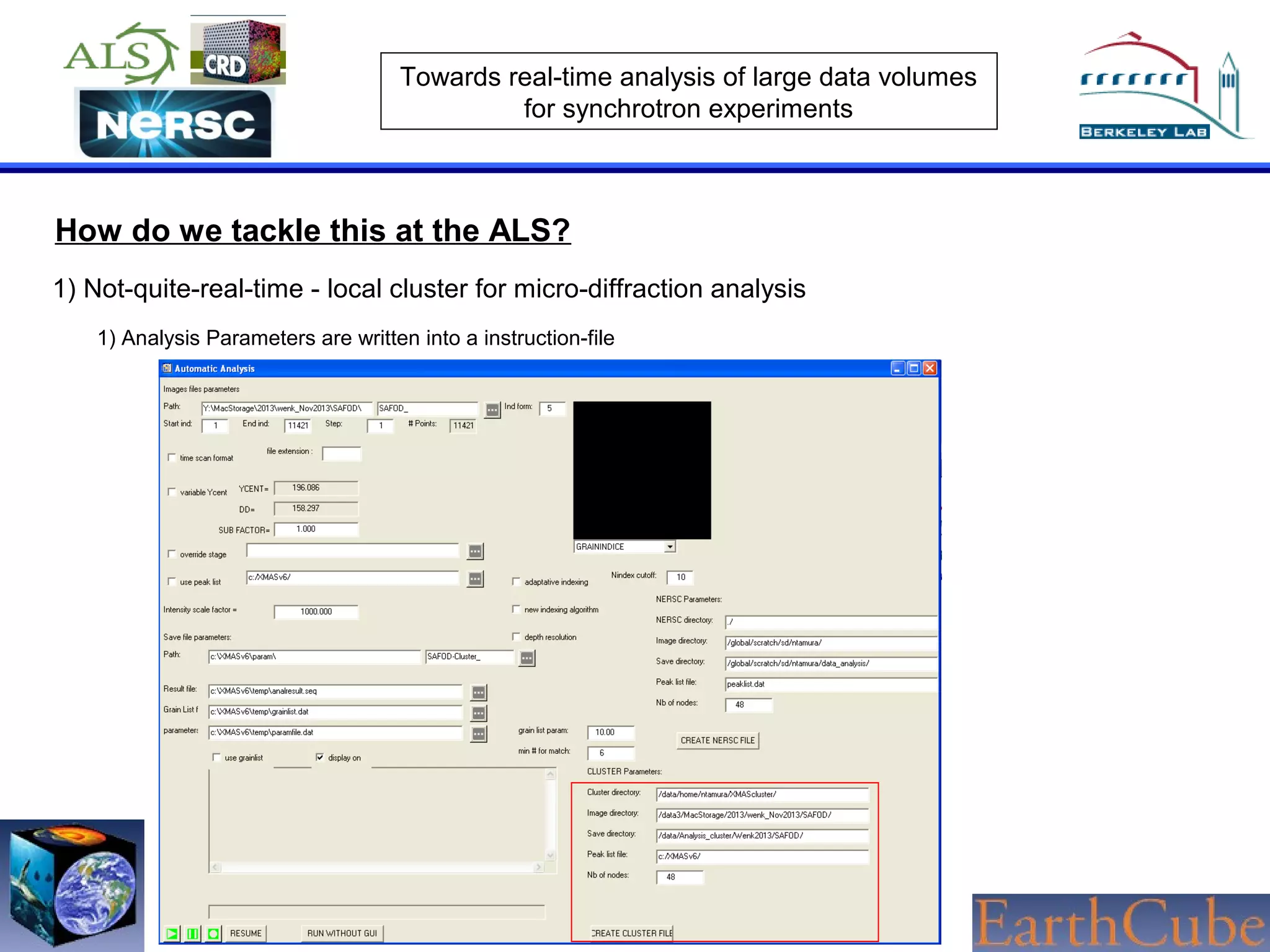

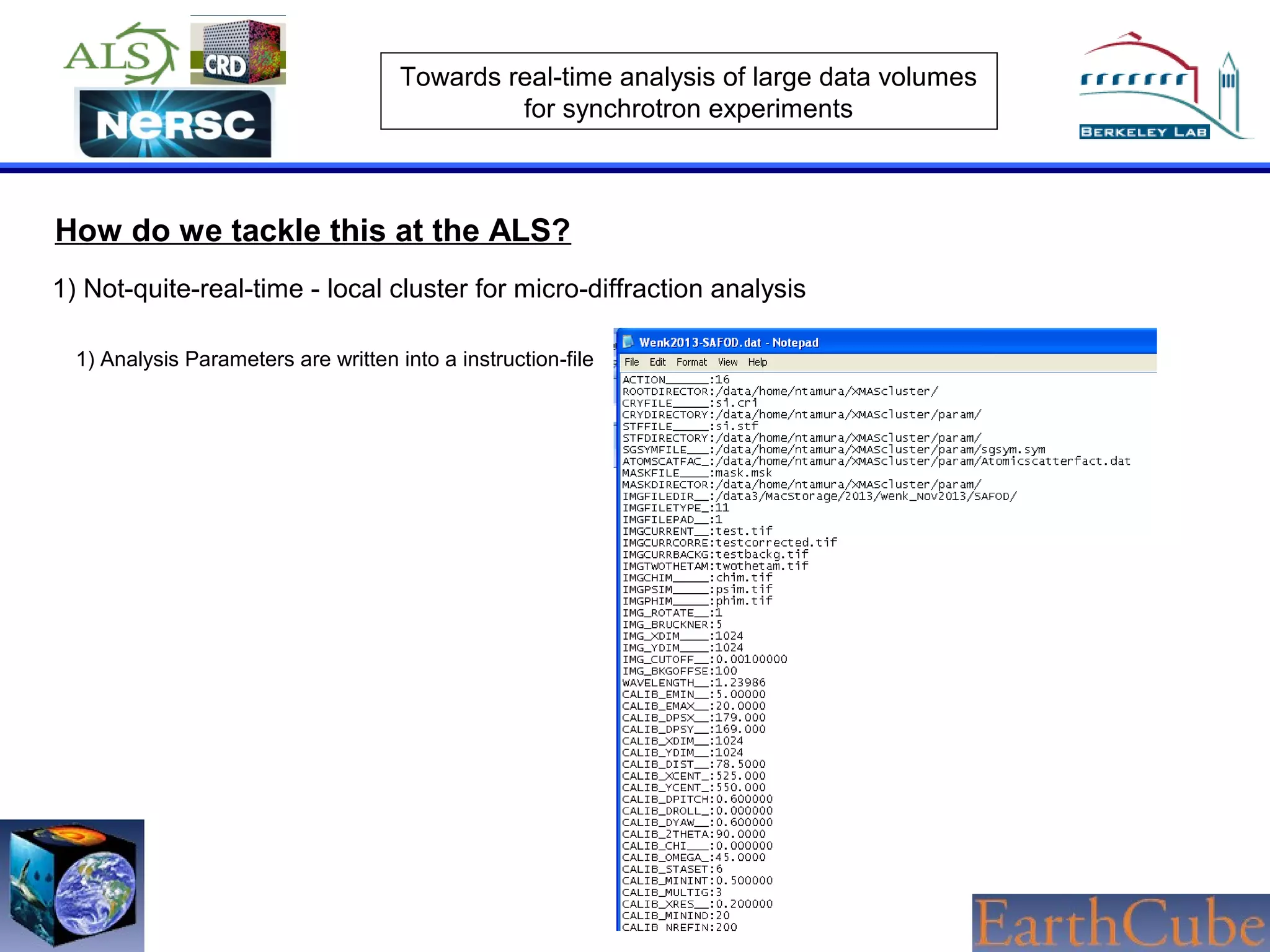

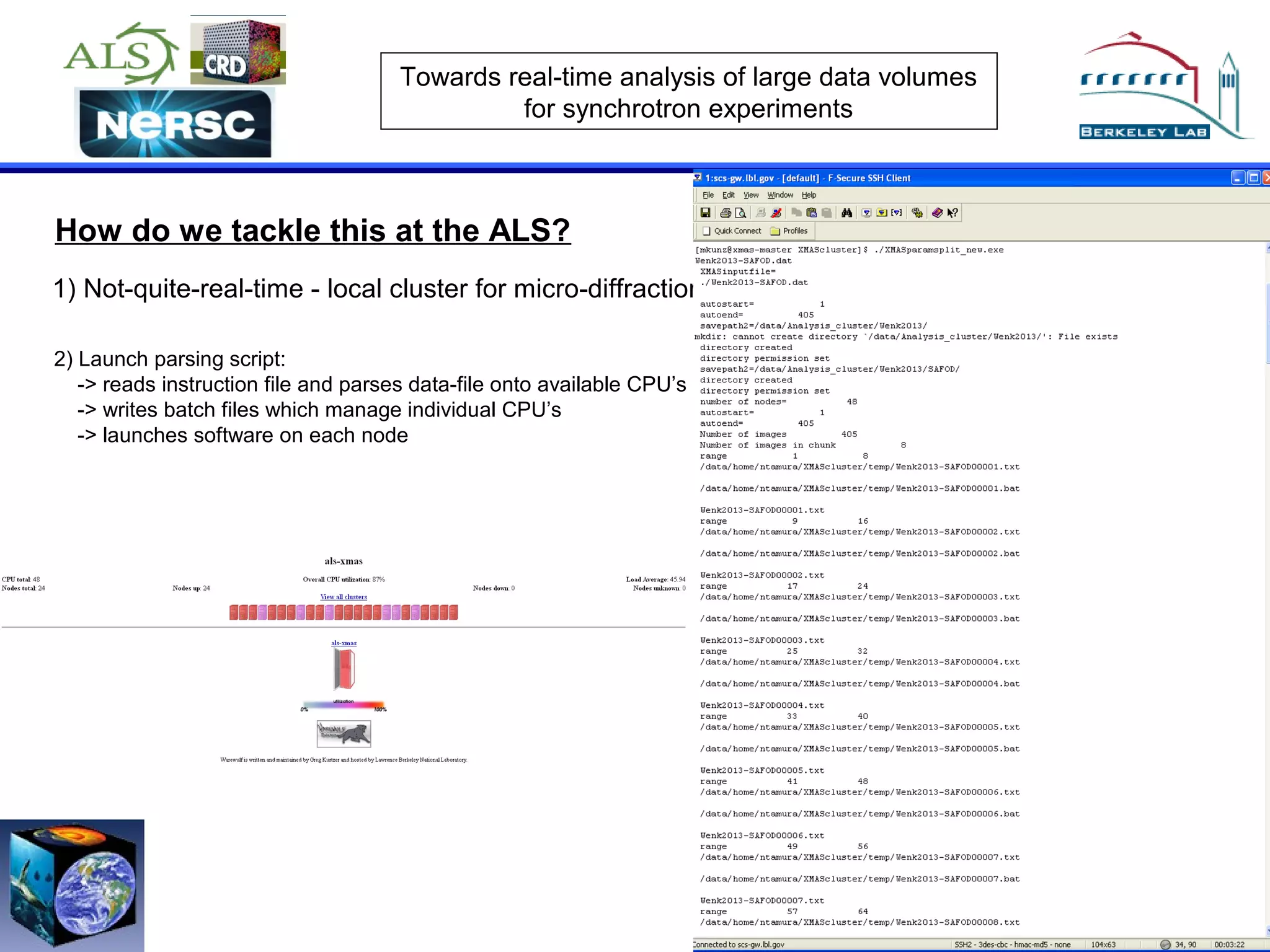

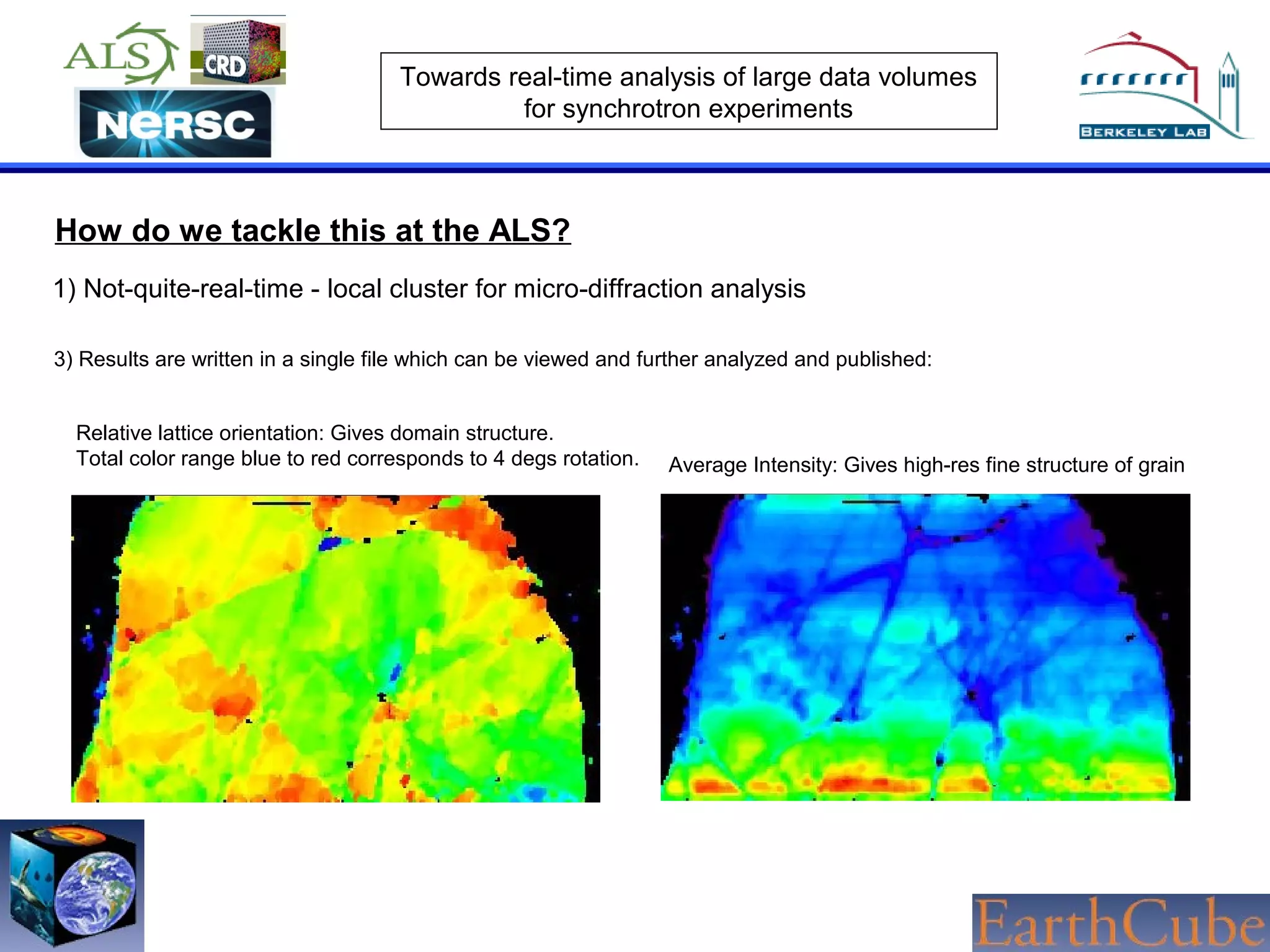

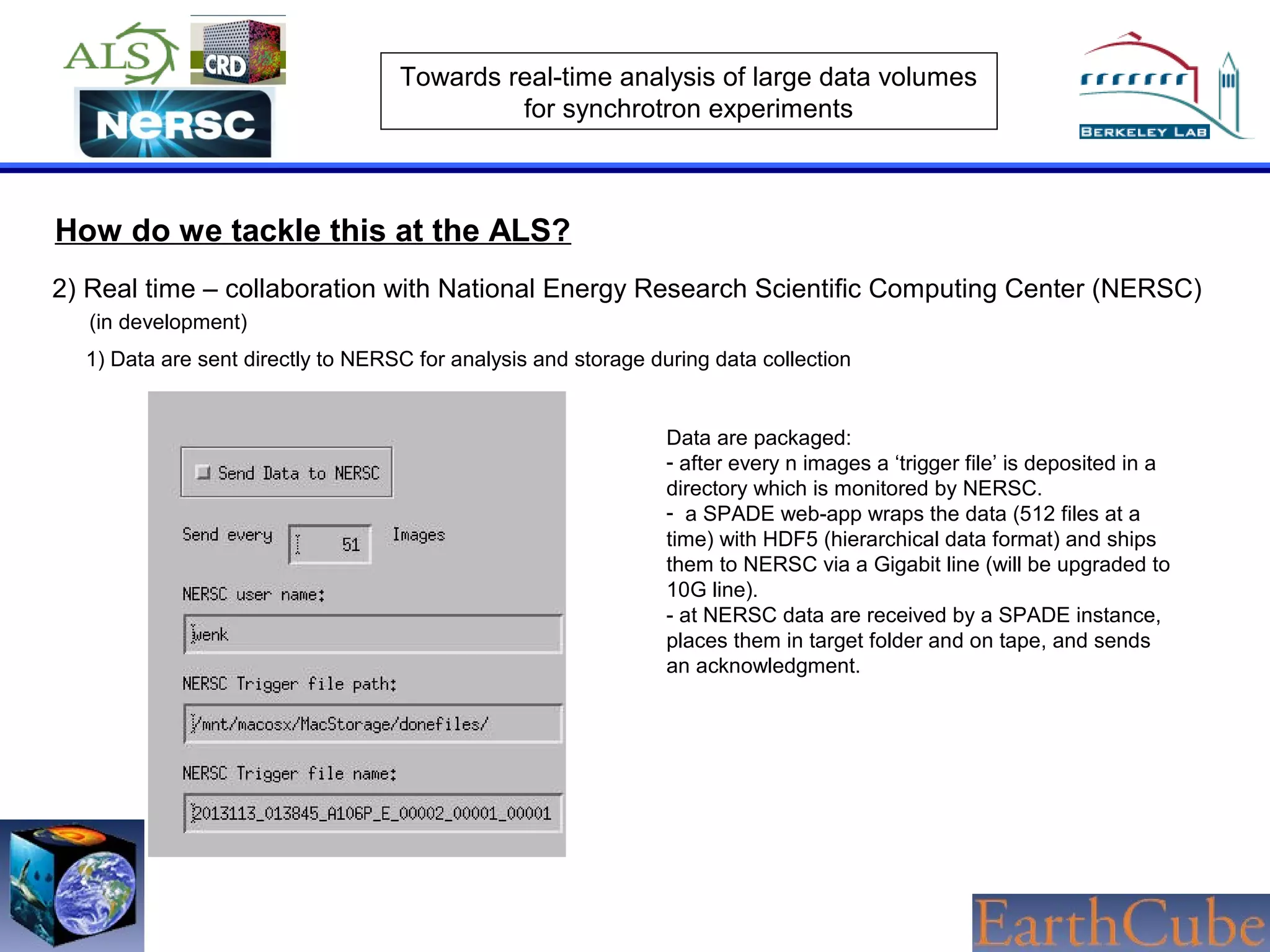

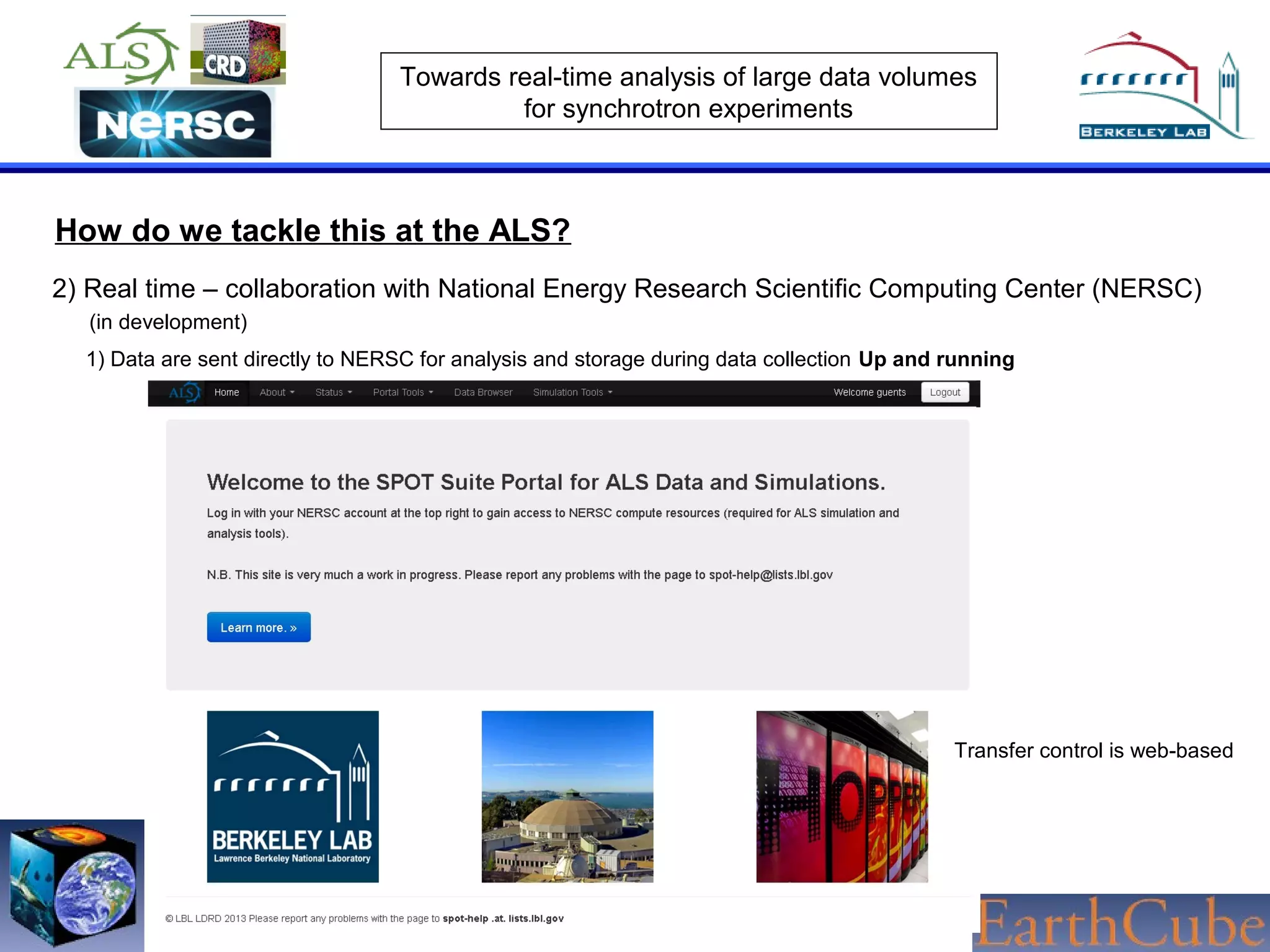

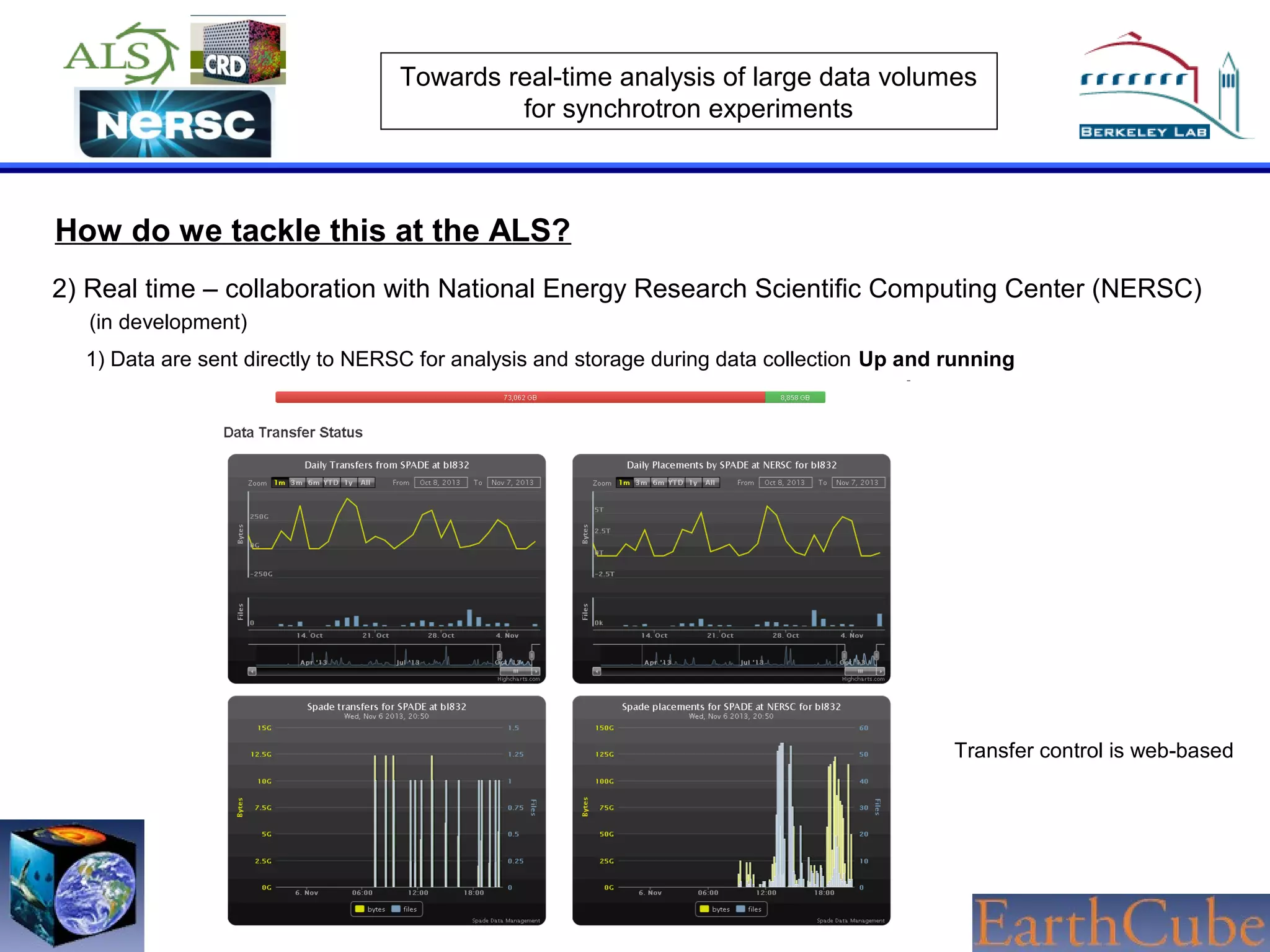

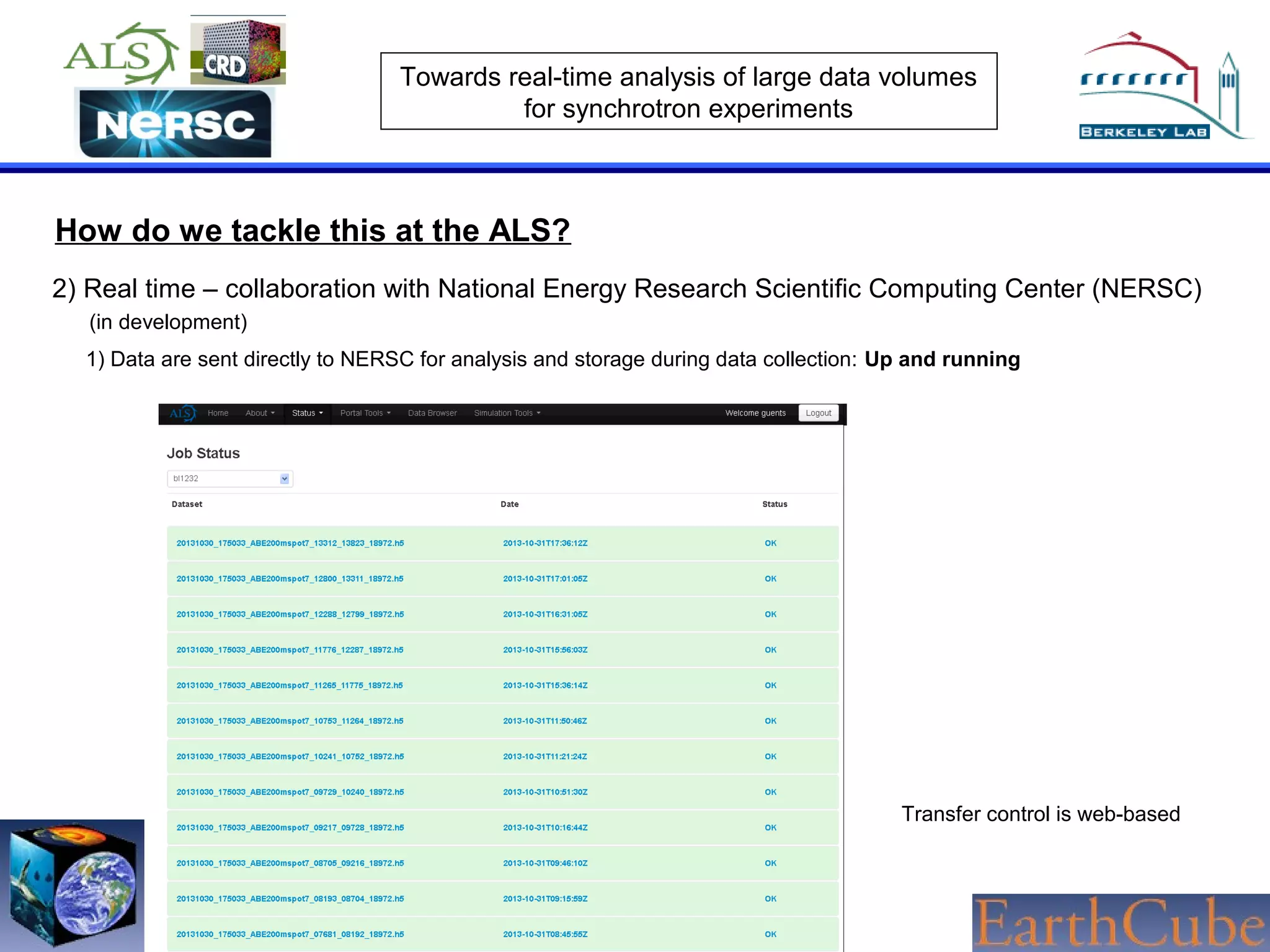

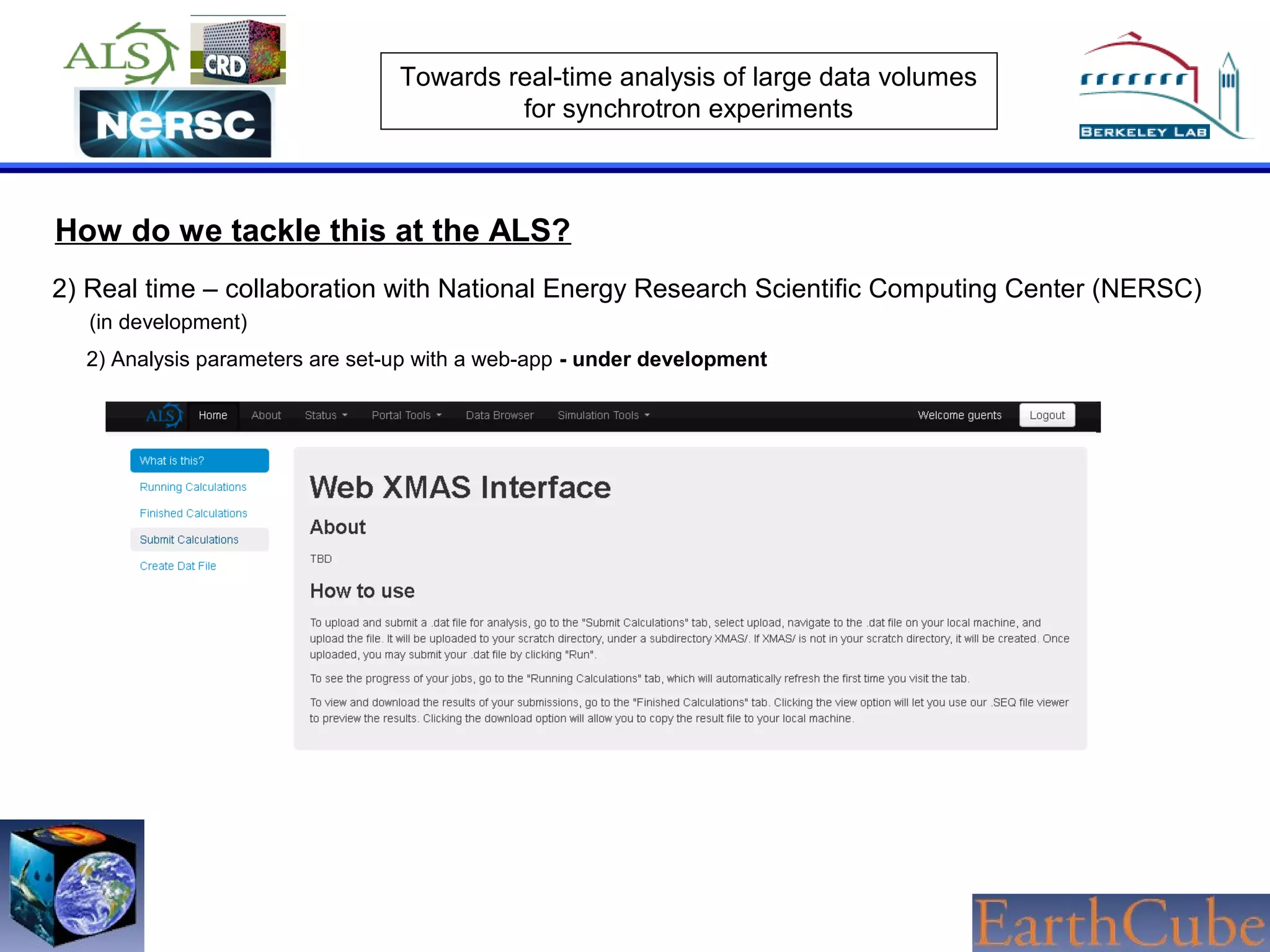

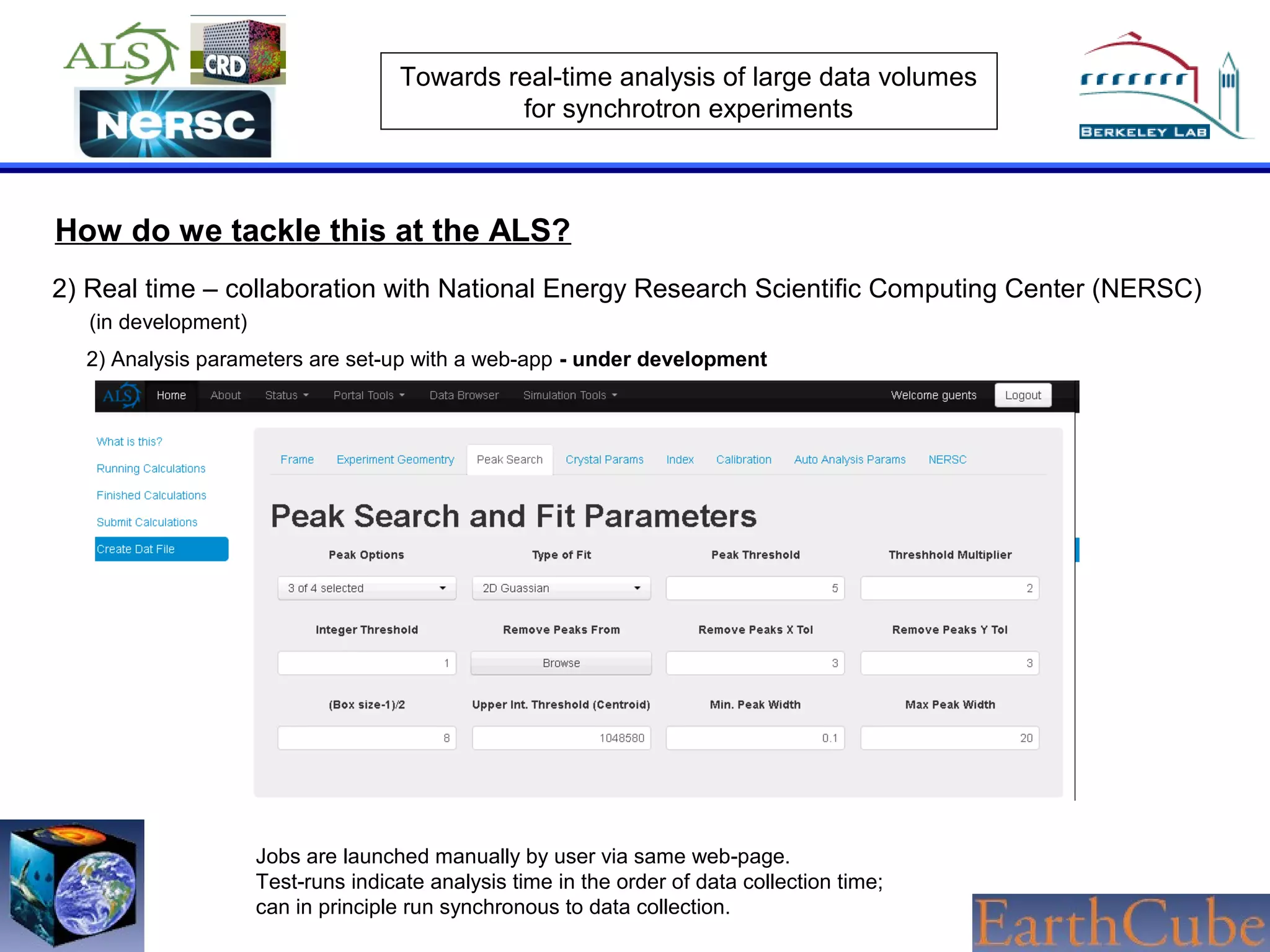

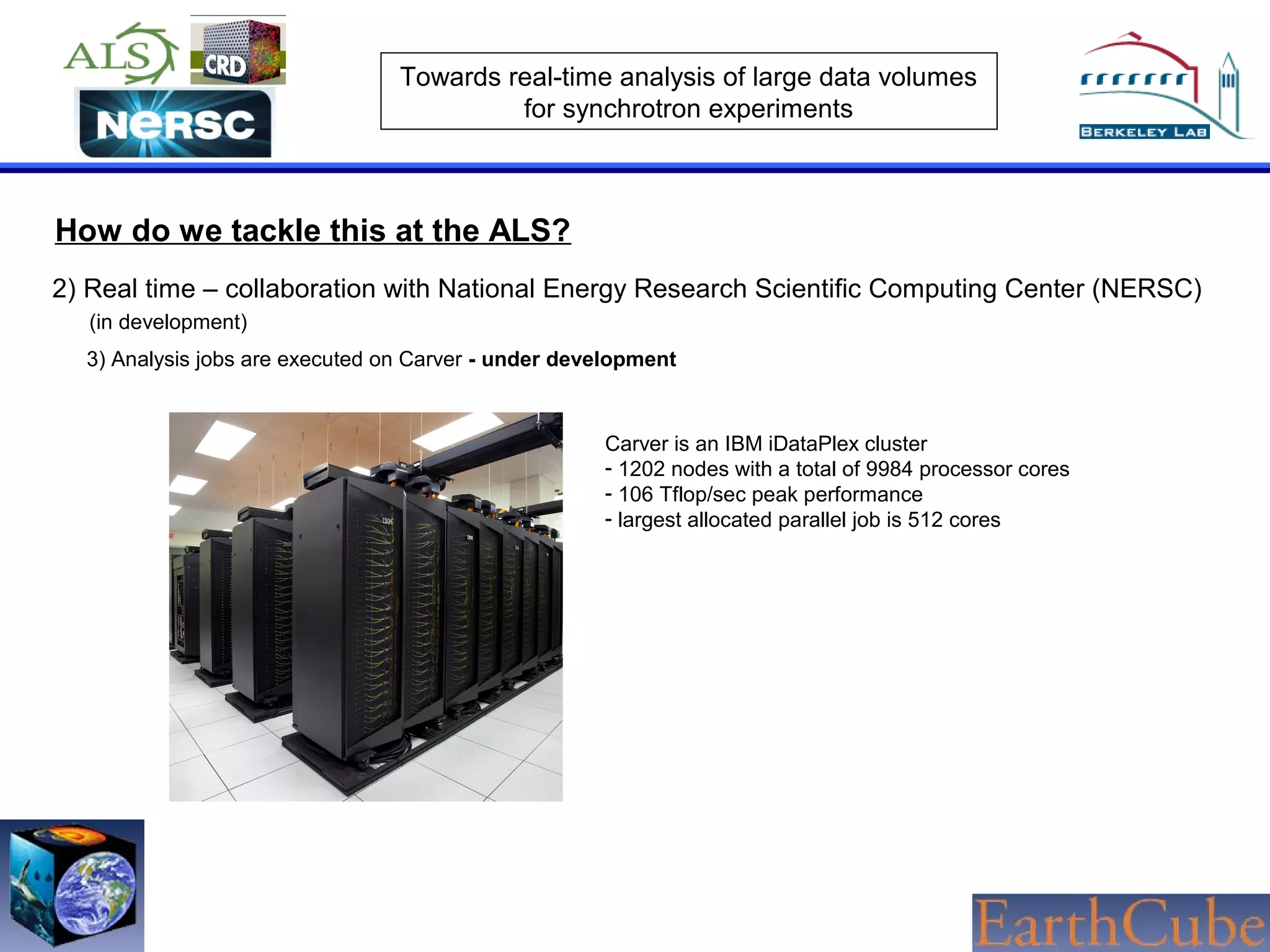

The document discusses the challenges of analyzing large data volumes in real time during synchrotron experiments in experimental mineral physics. It traces the evolution of data analysis techniques from traditional methods toward real-time, automated systems that use supercomputing resources for efficiency. The authors emphasize the importance of collaboration with national computing centers to facilitate high-speed data transmission and analysis.