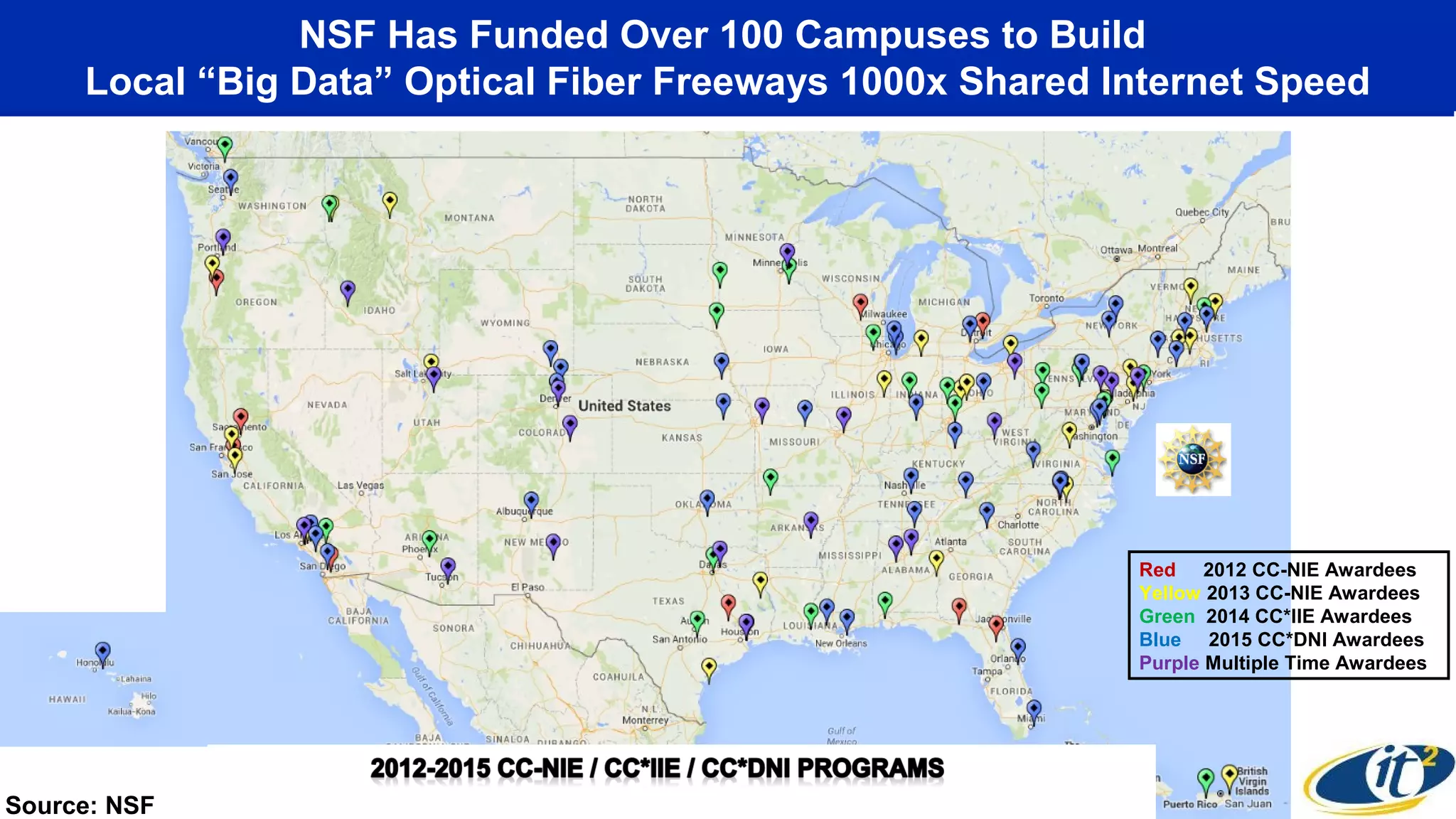

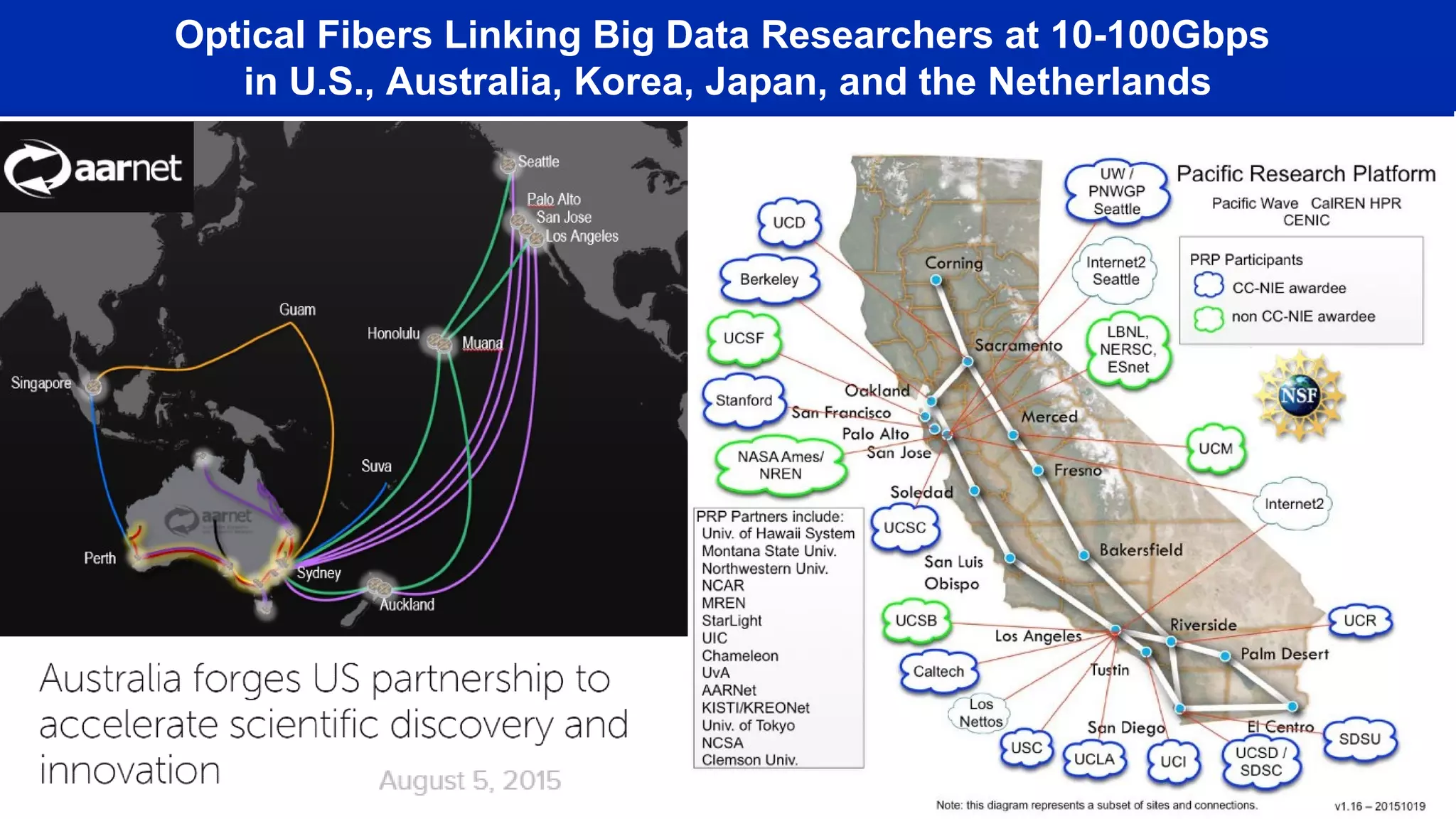

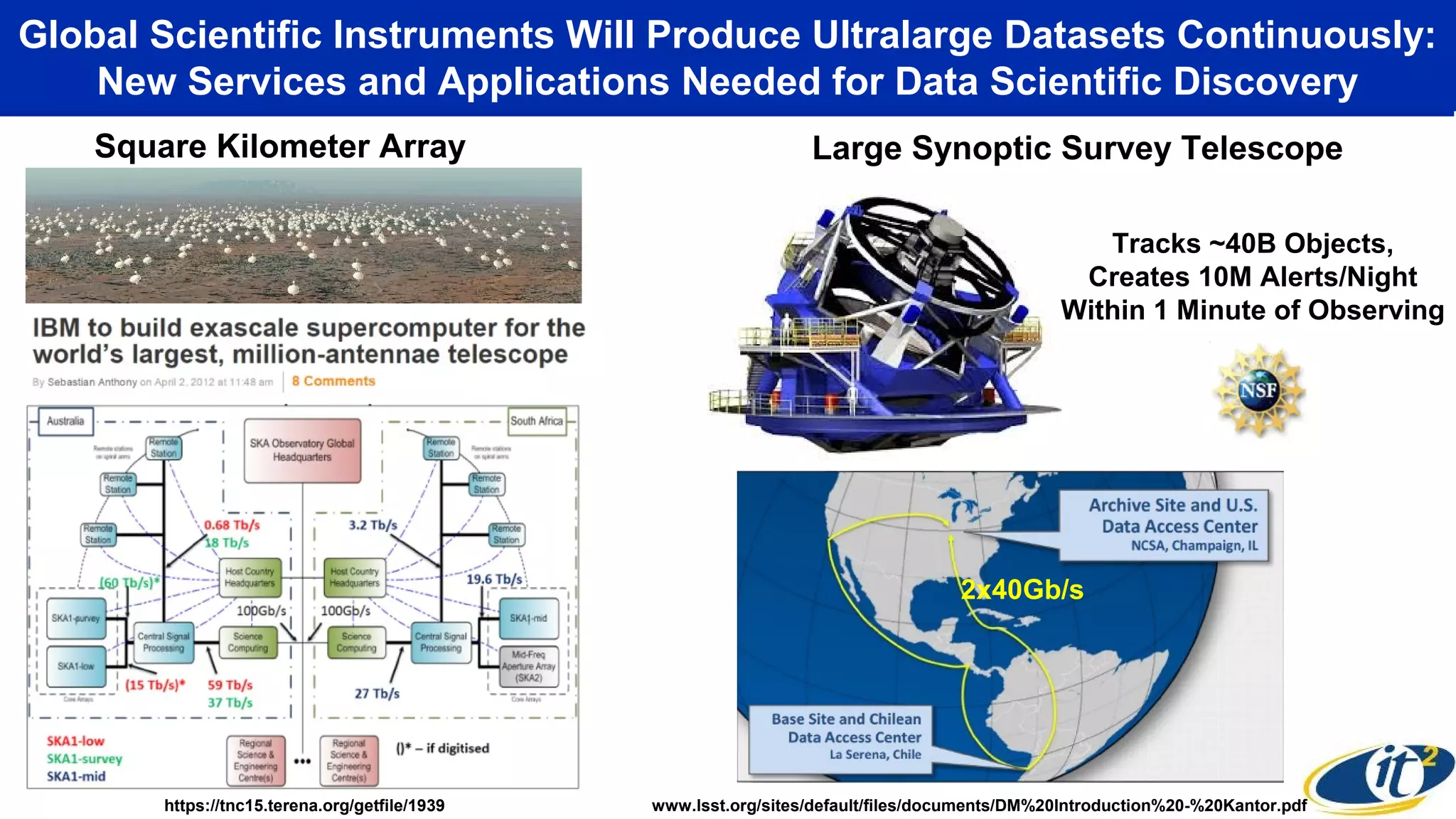

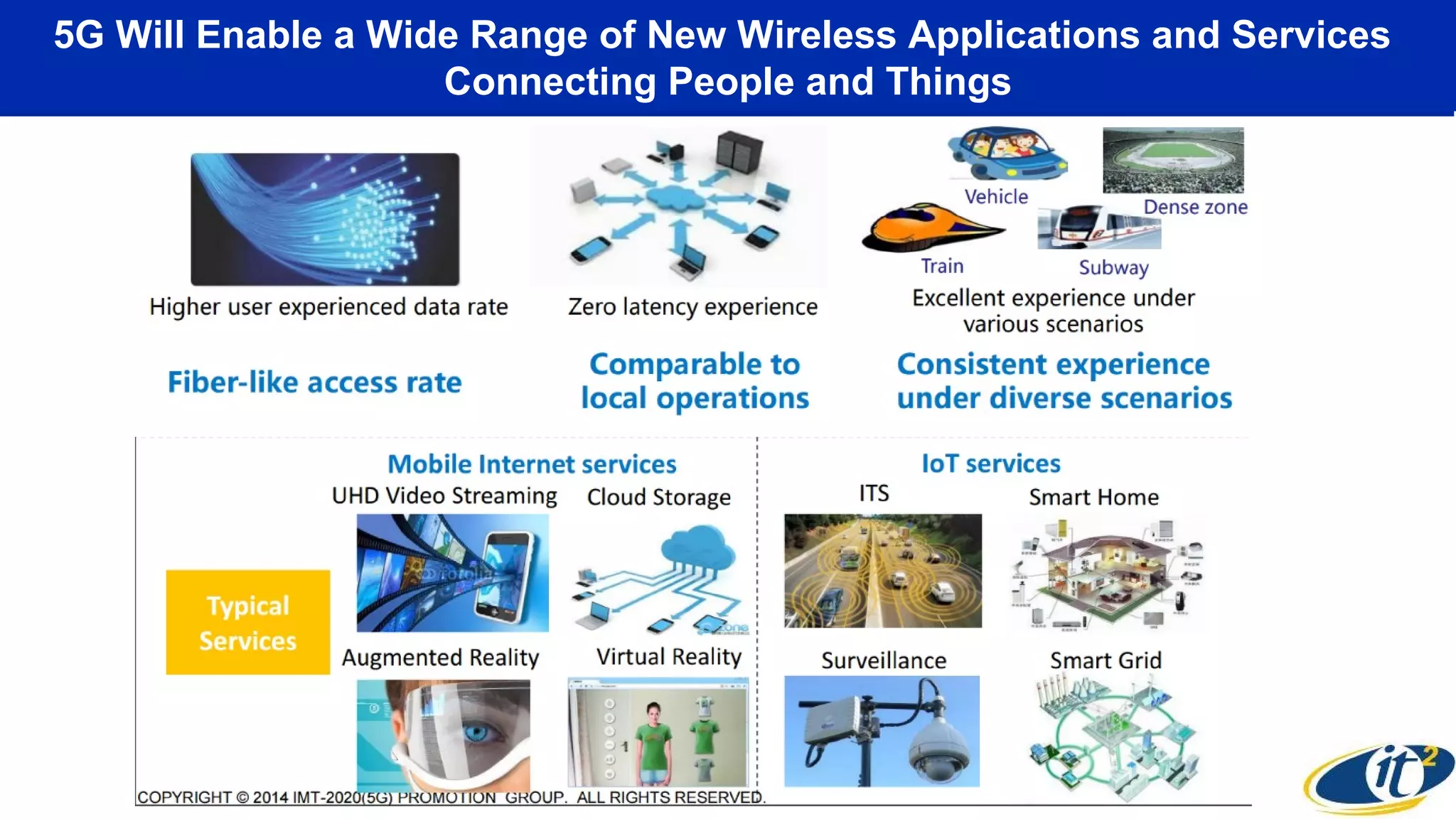

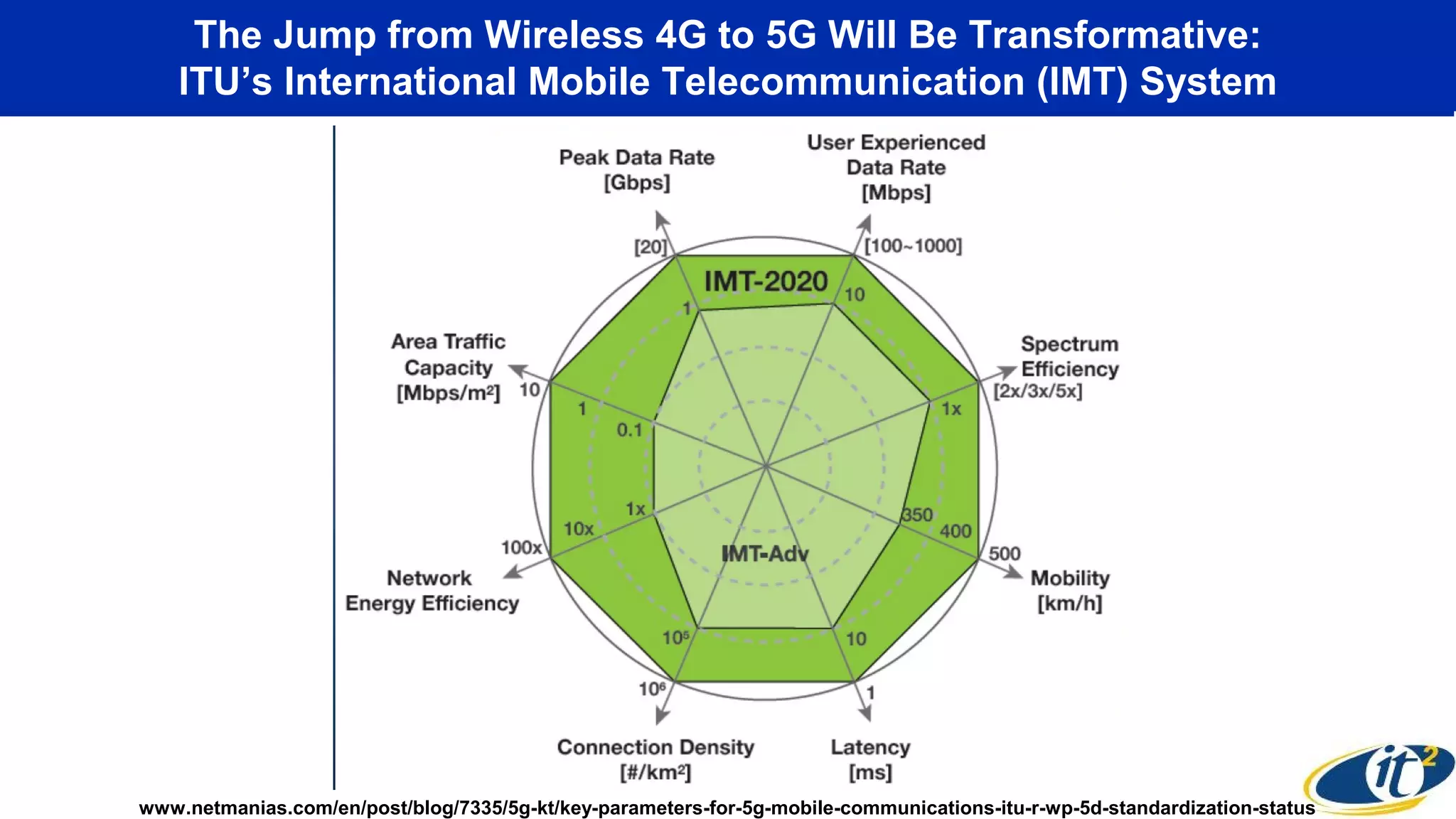

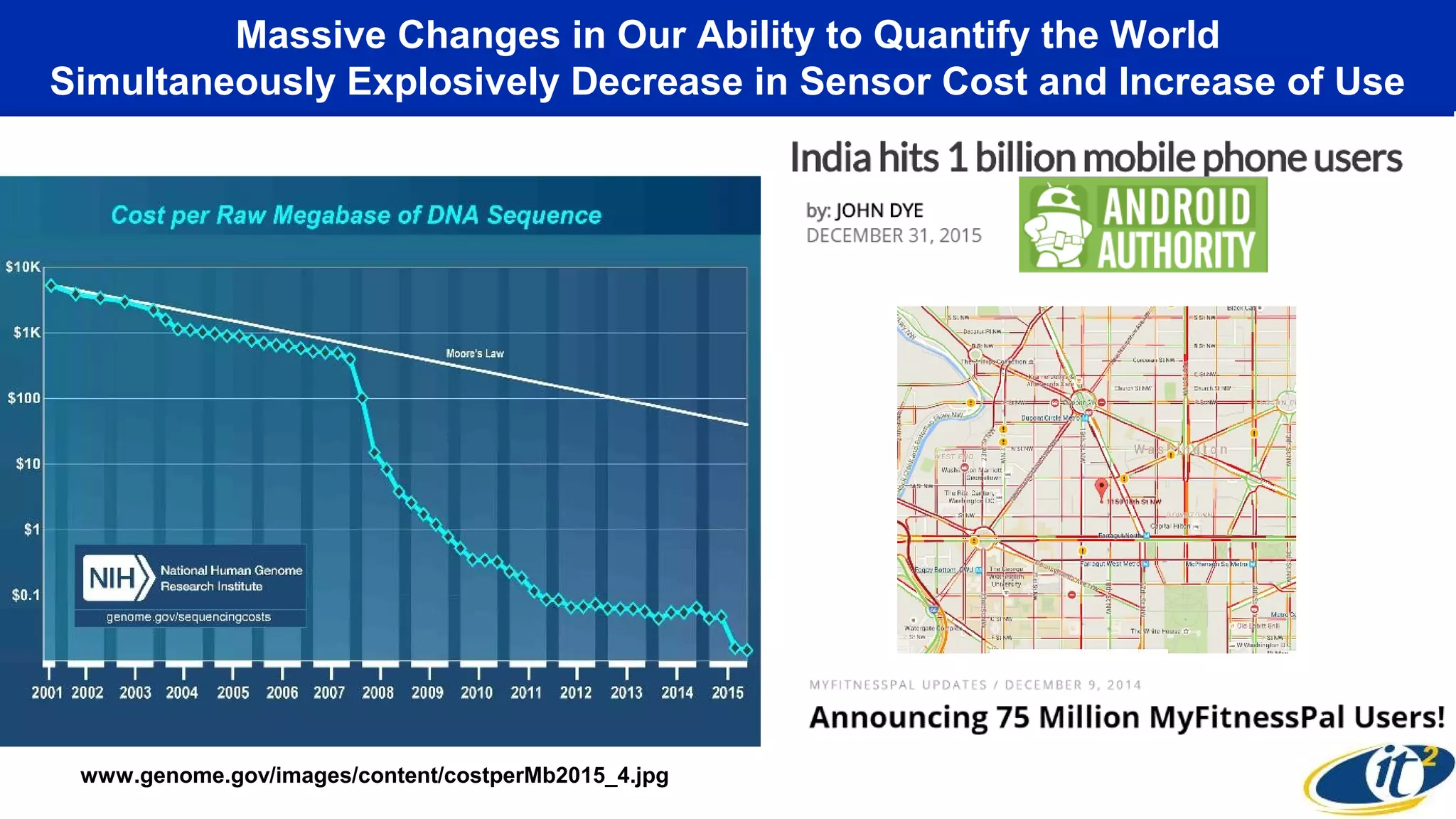

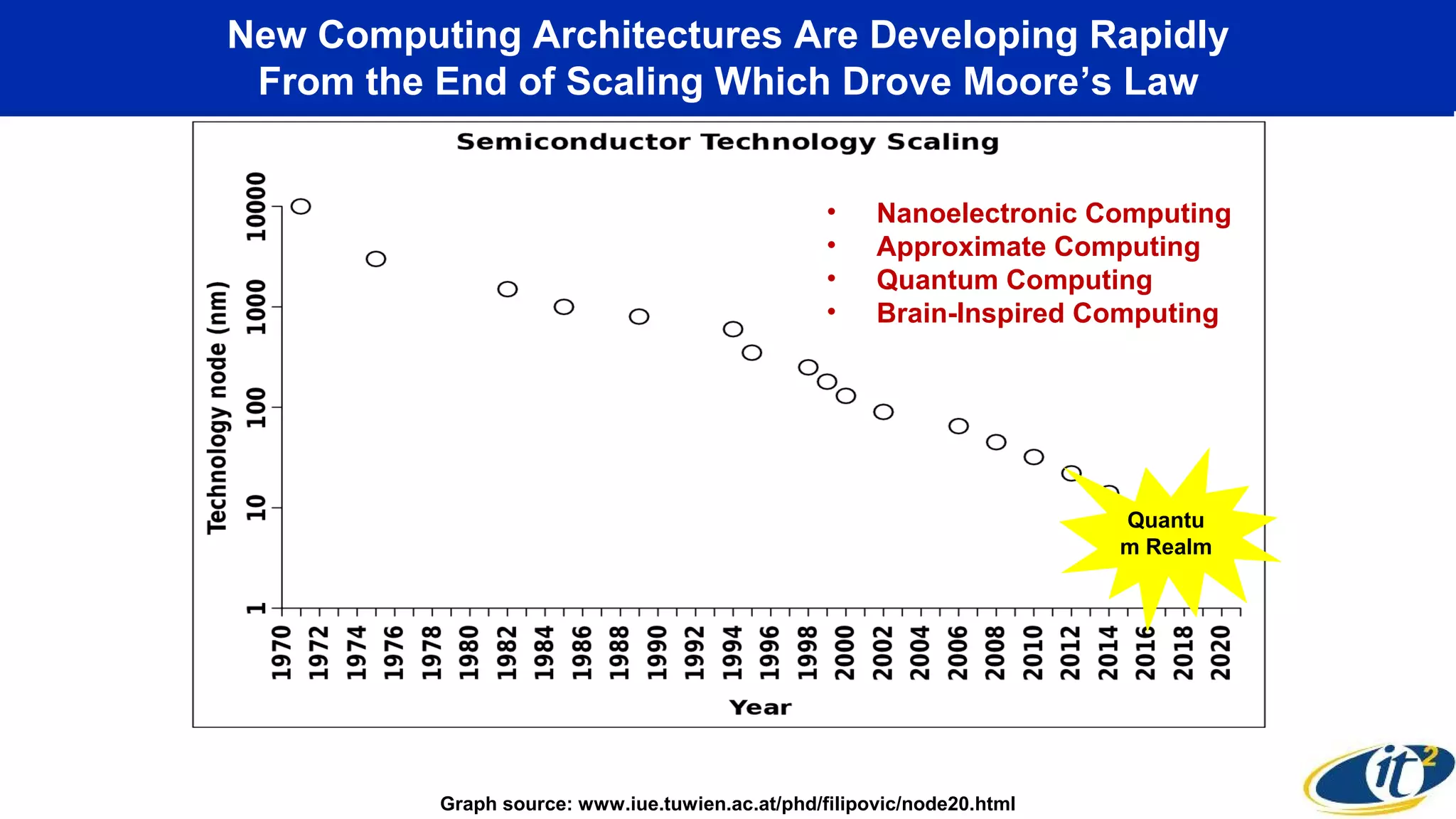

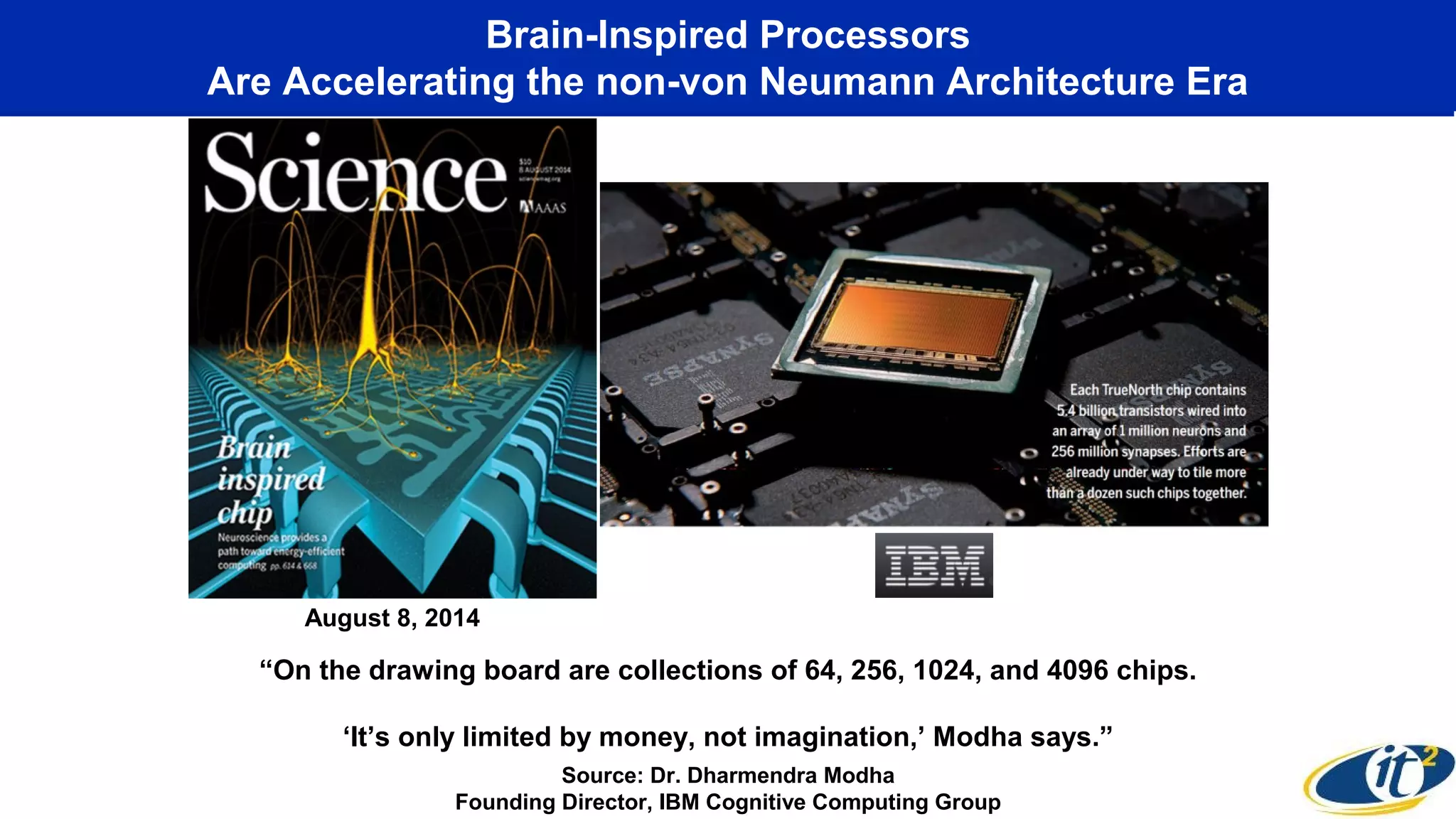

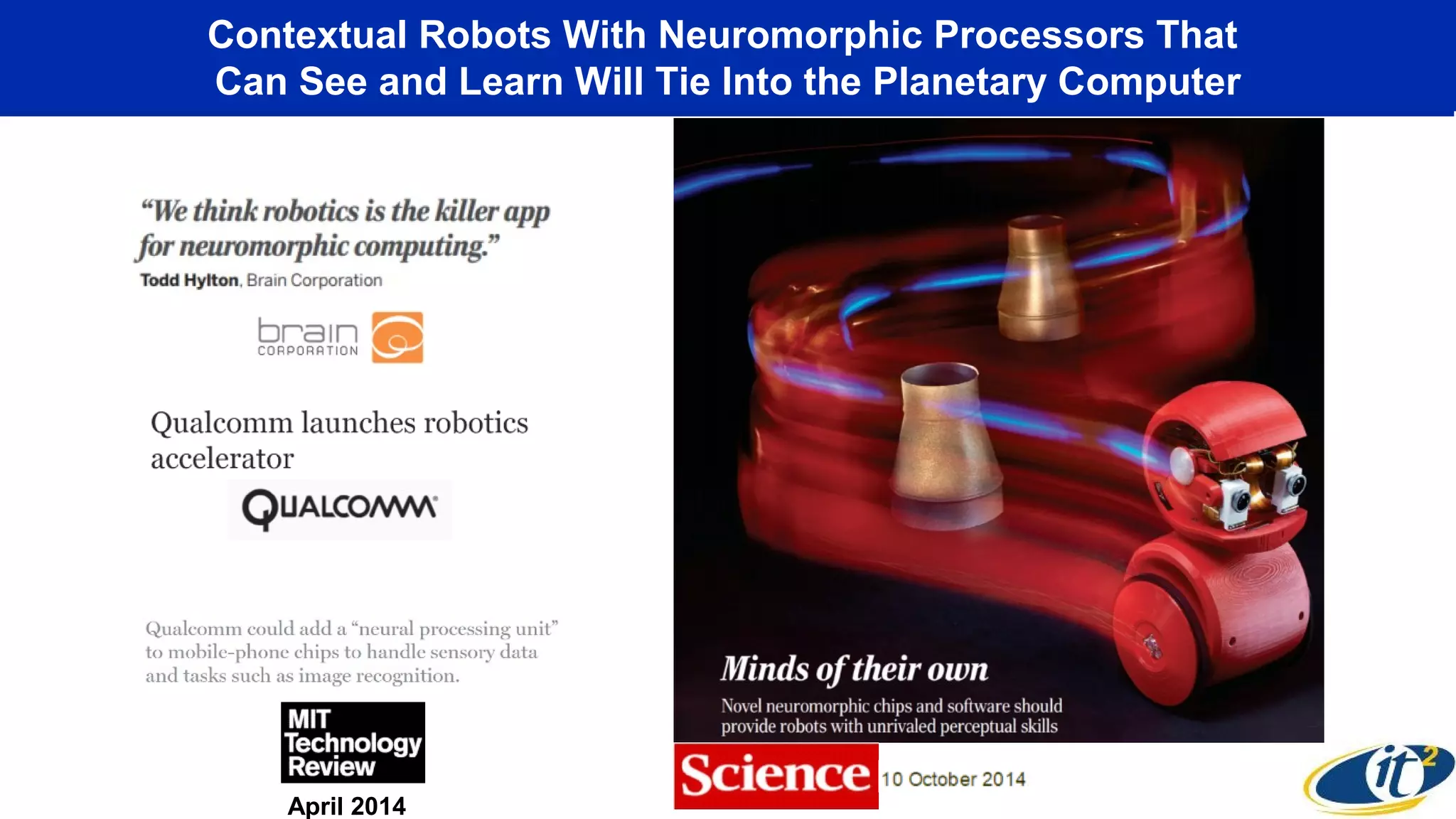

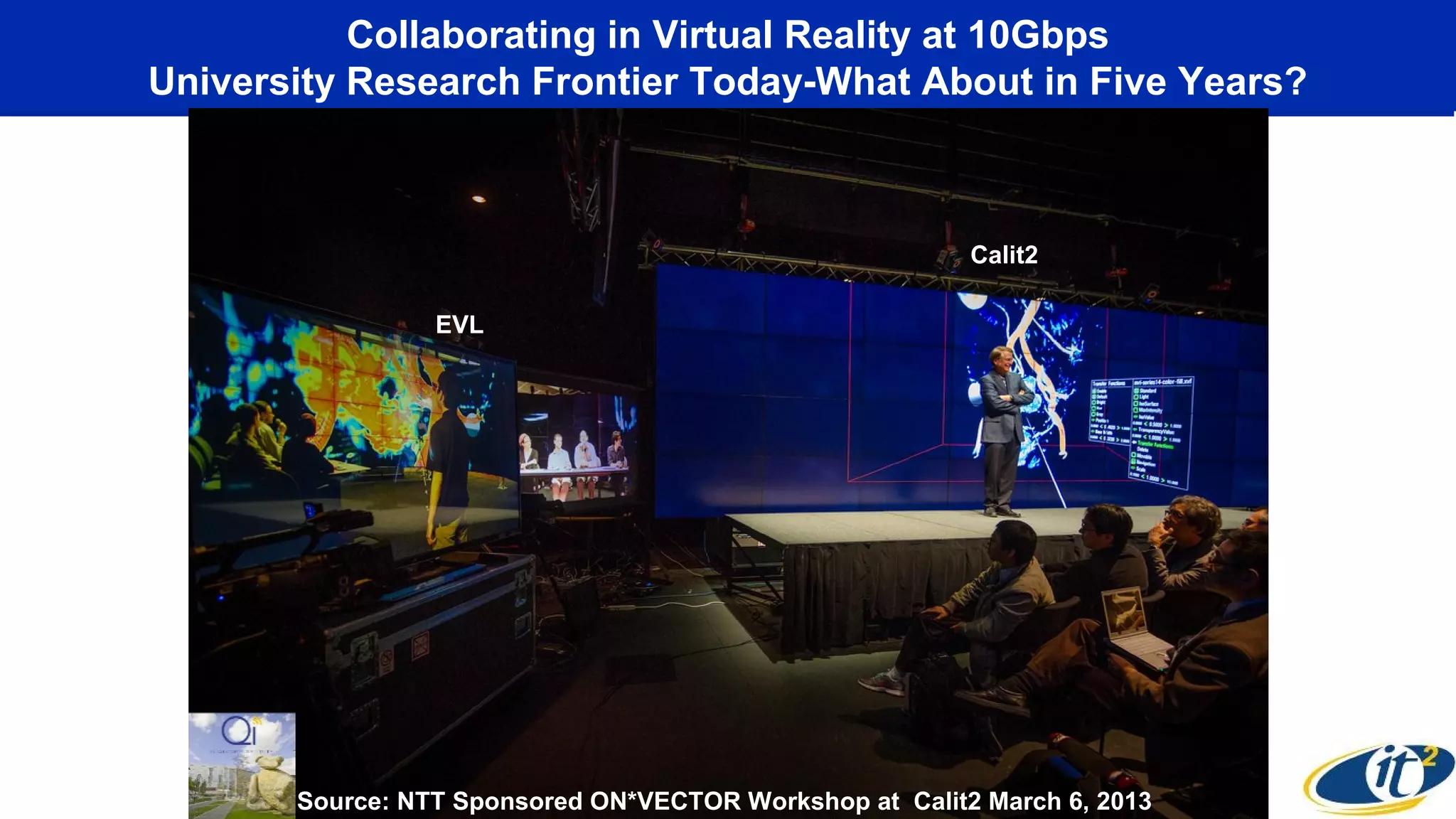

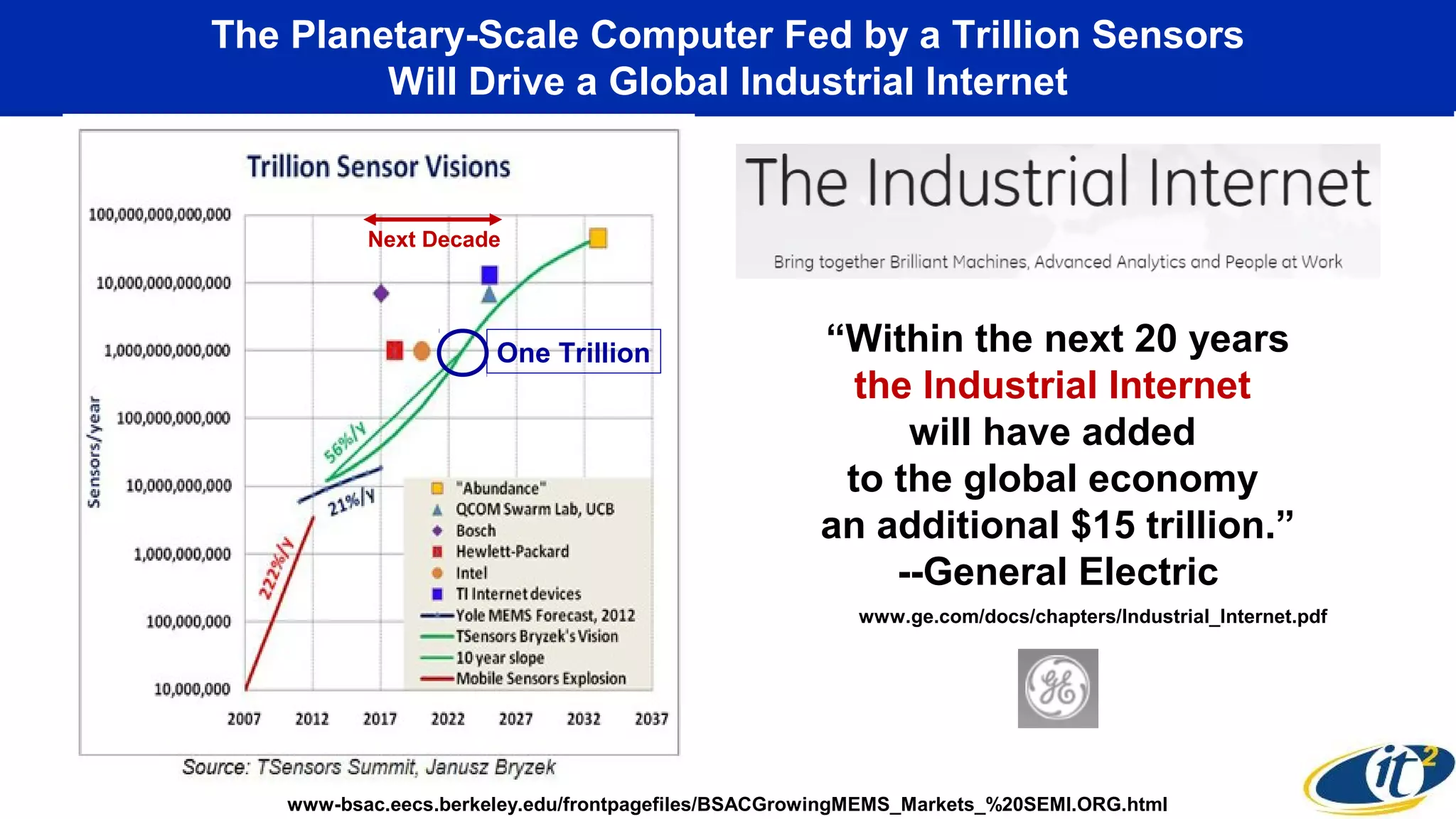

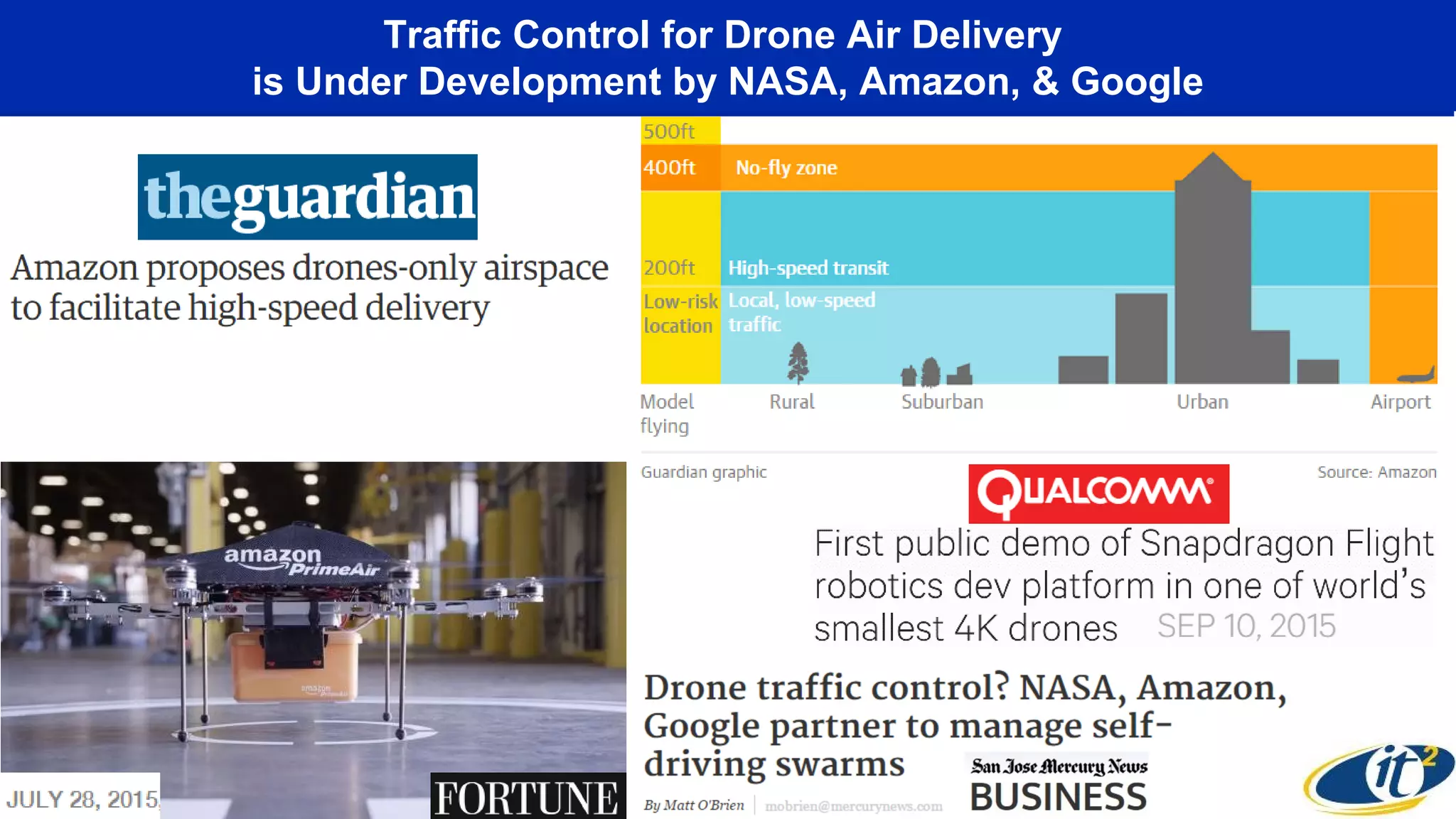

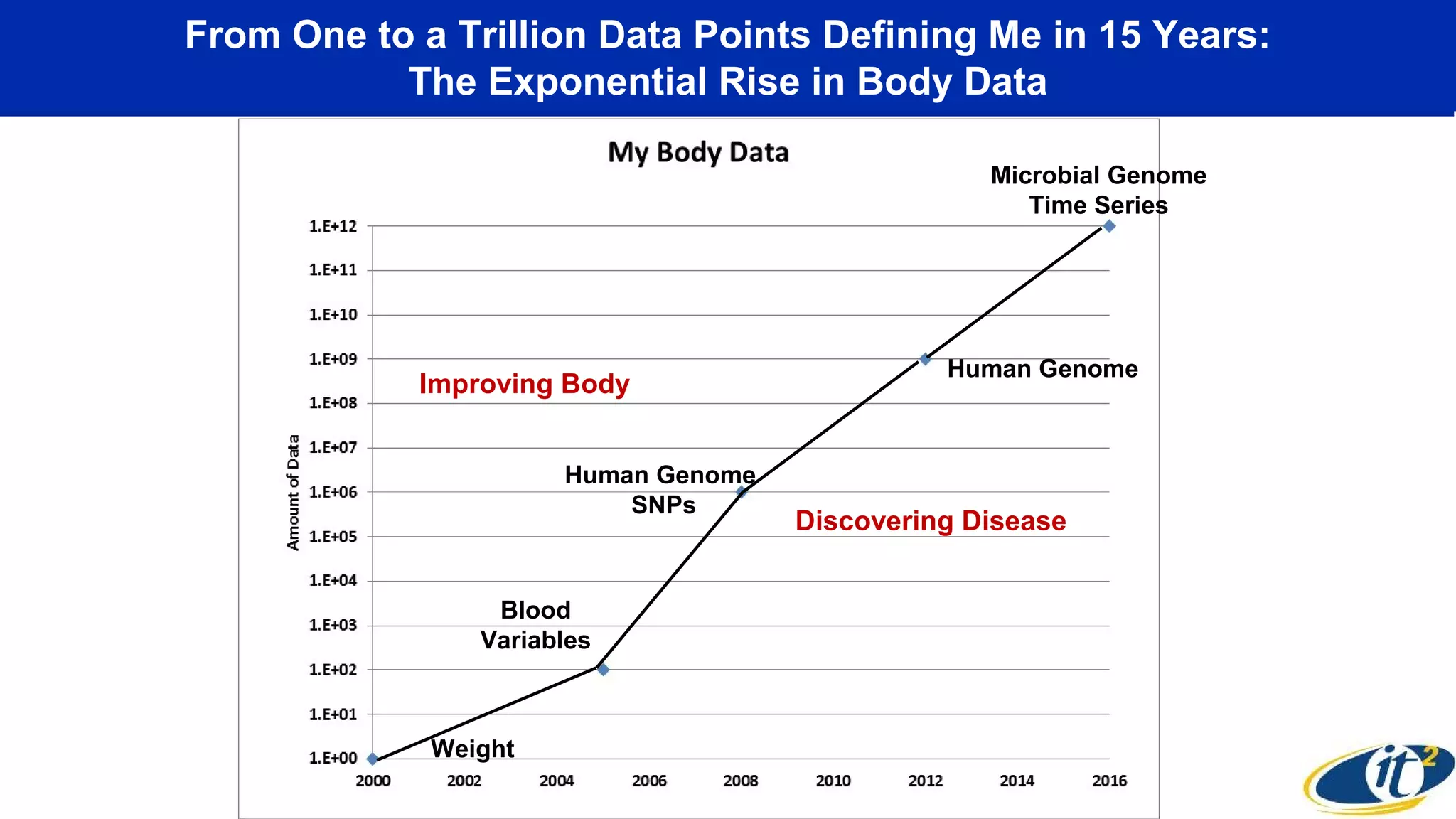

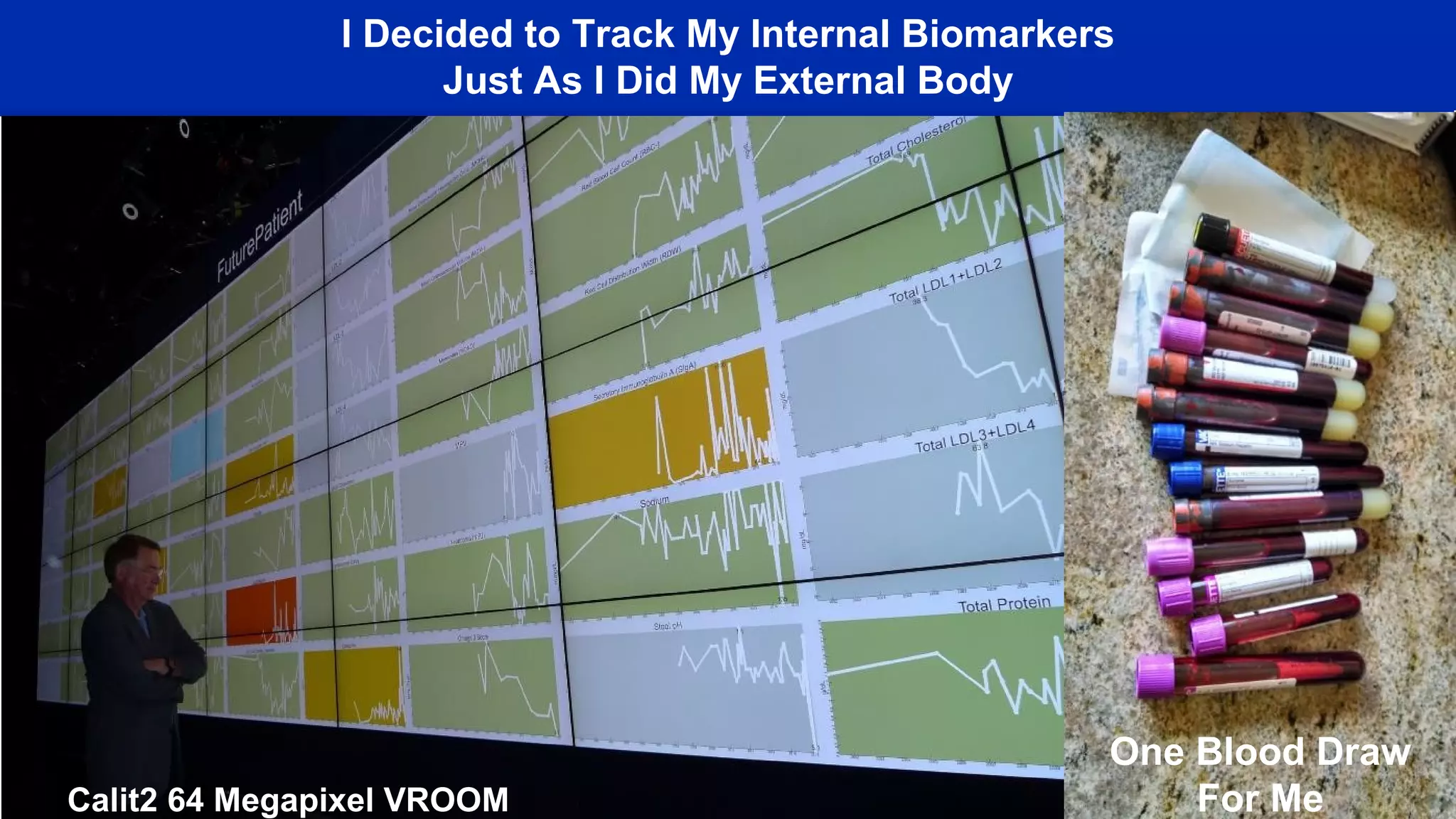

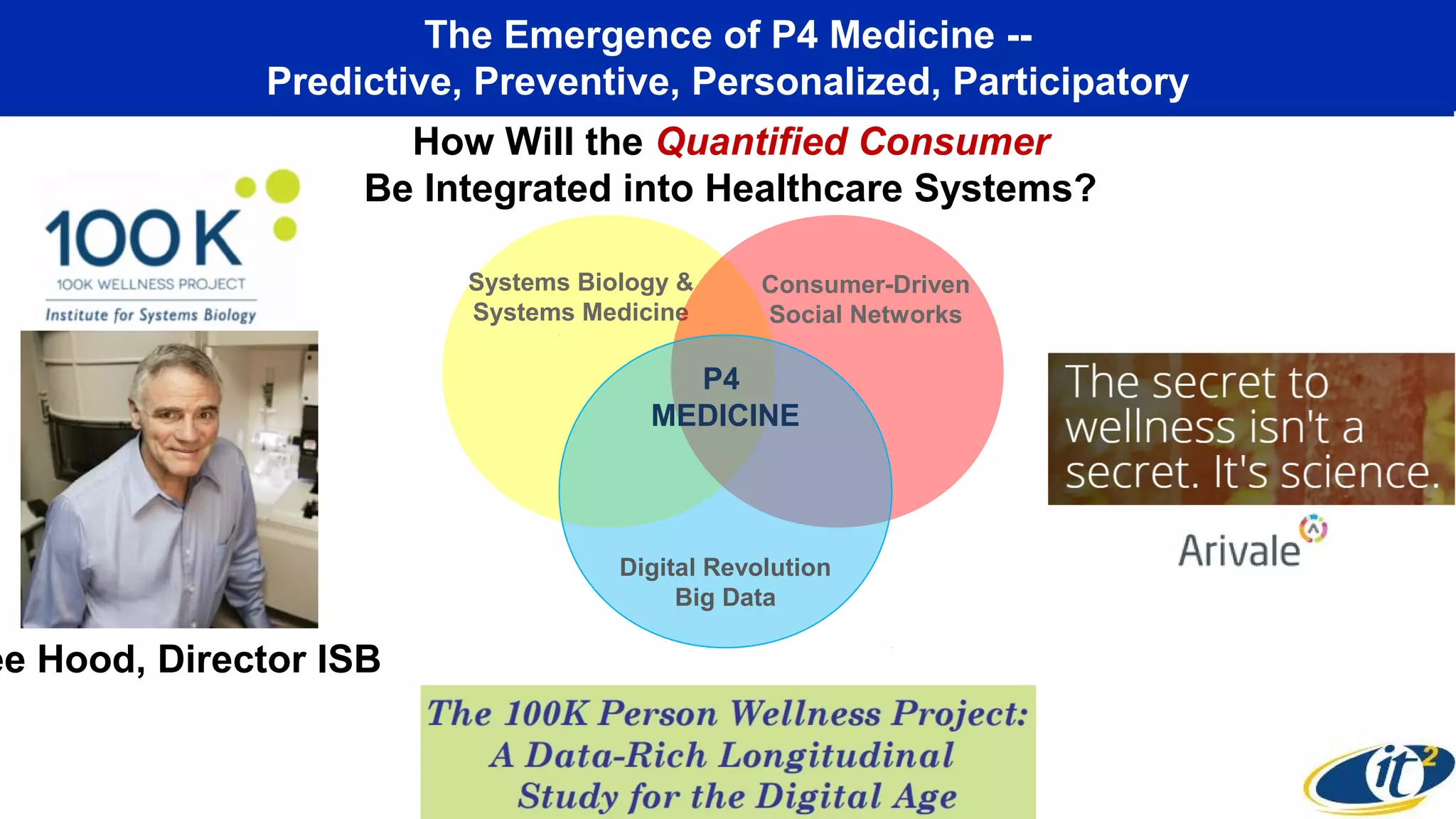

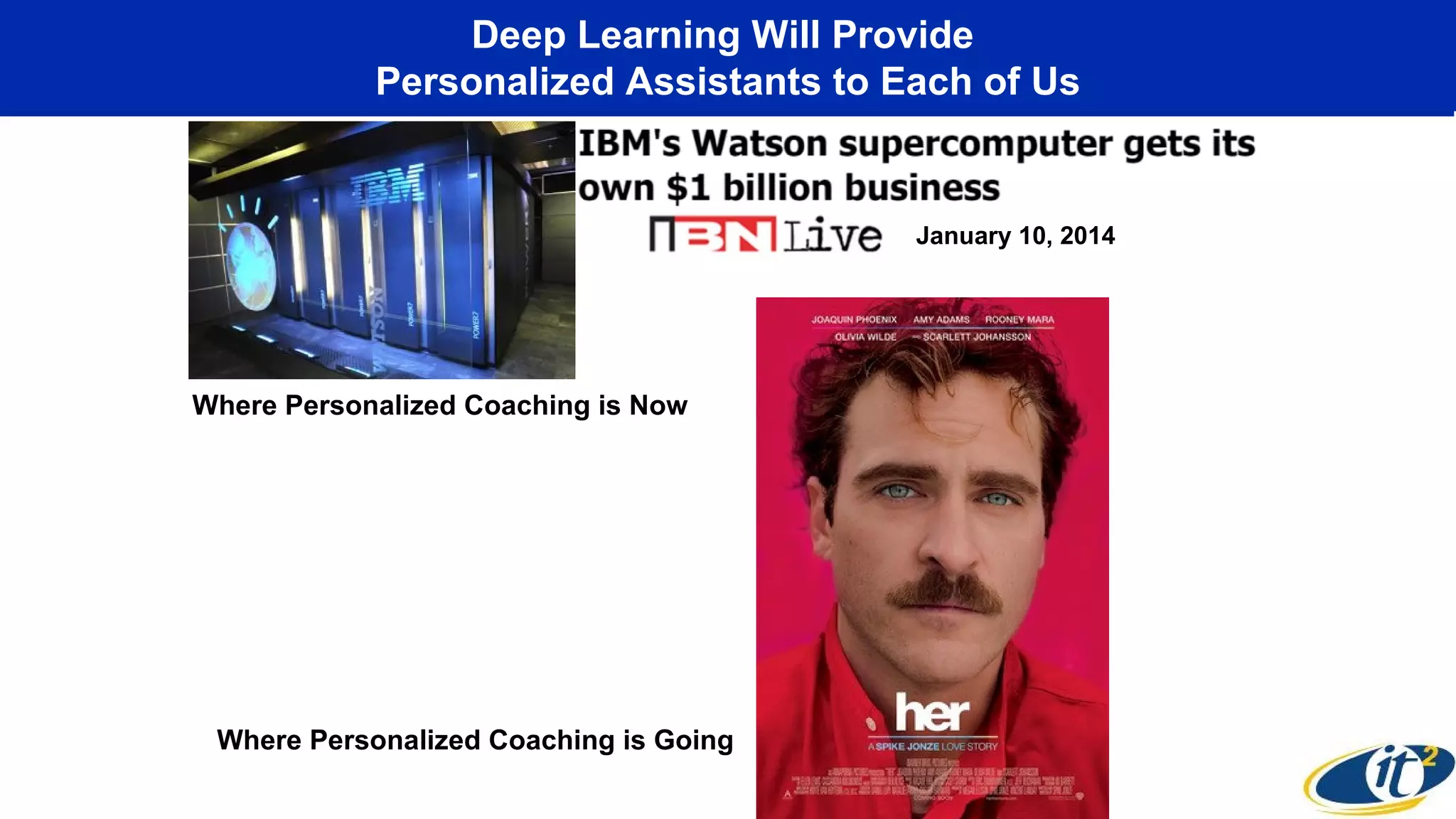

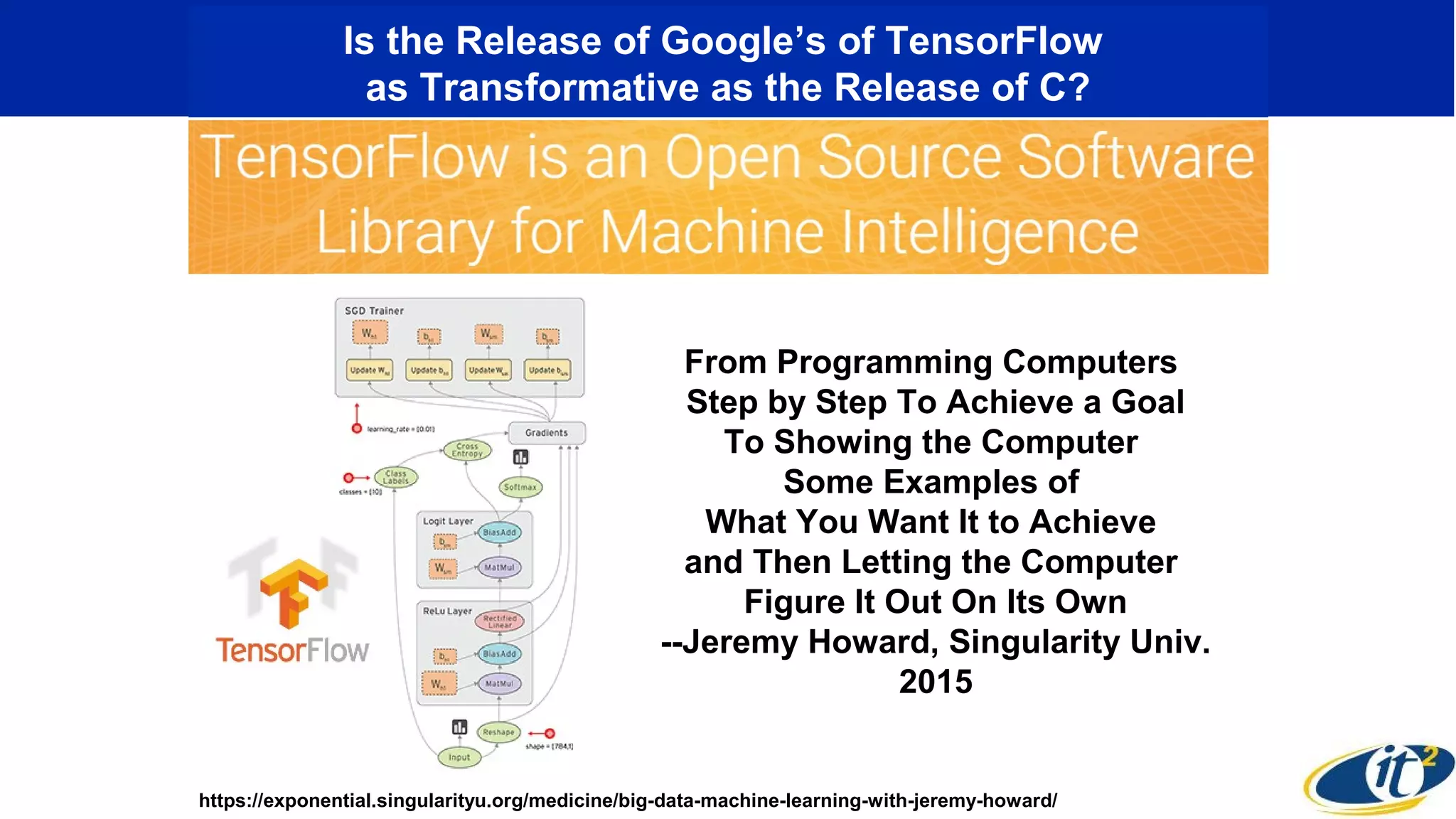

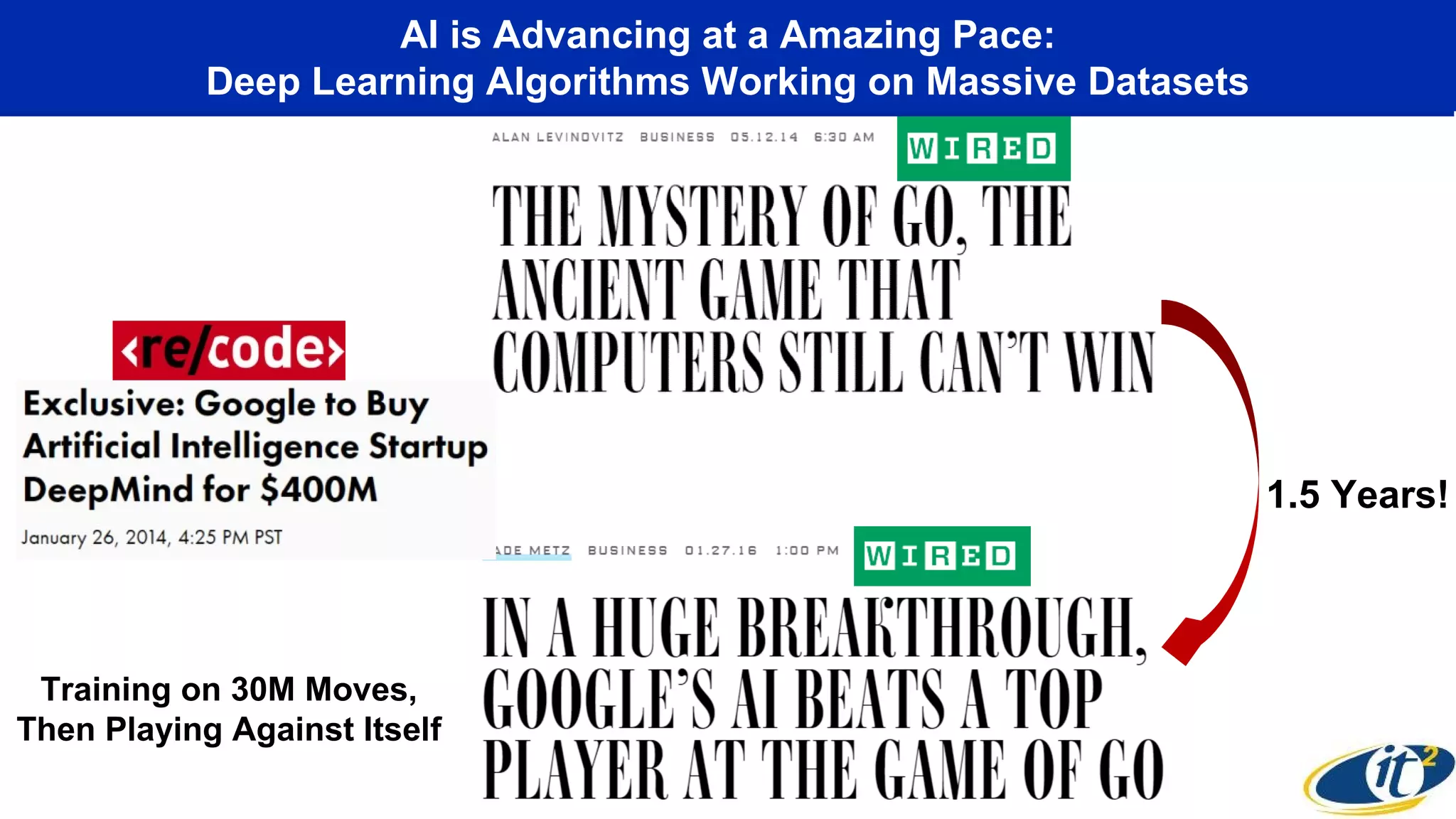

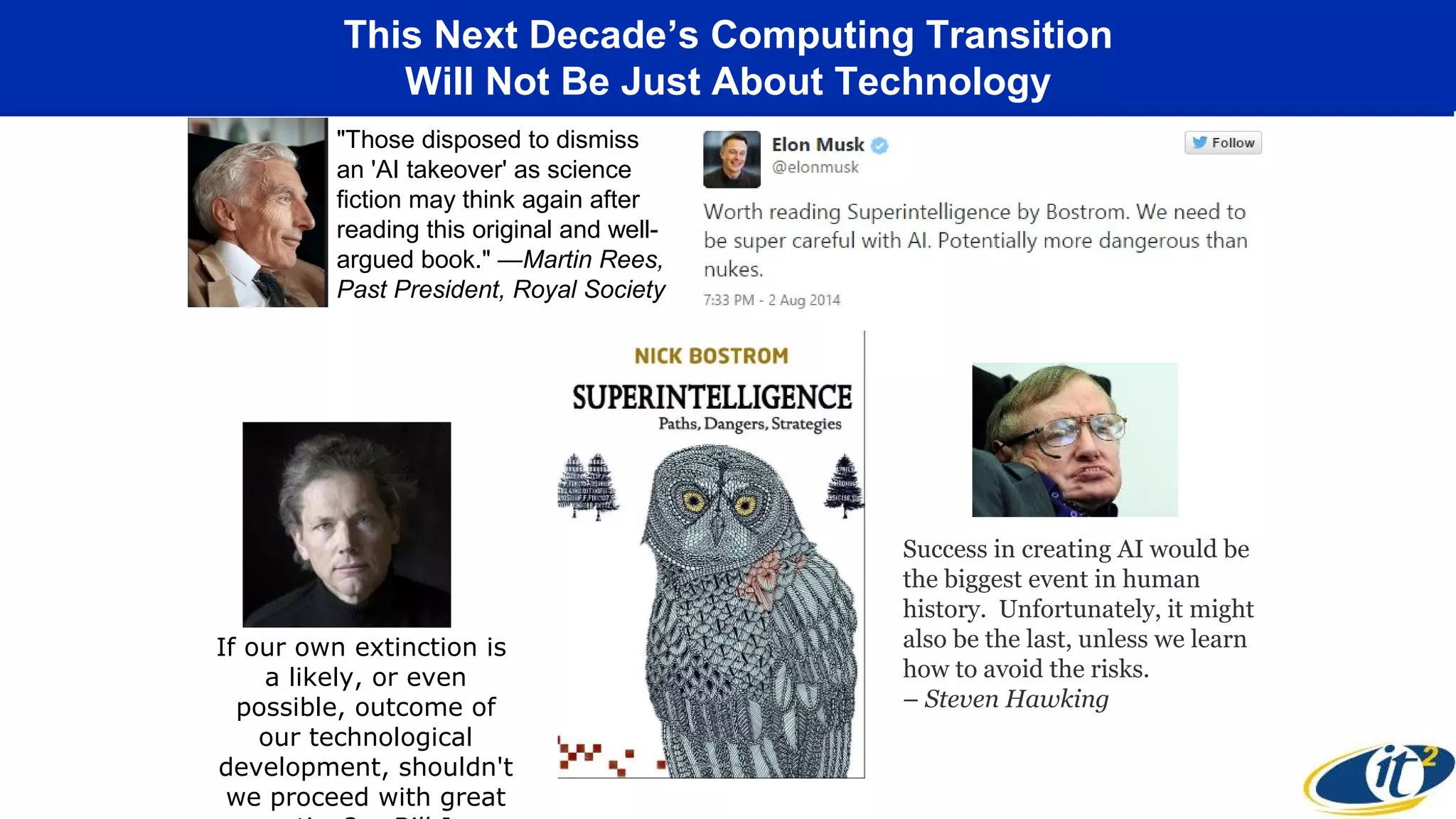

Dr. Larry Smarr's keynote at the NSF workshop highlighted the transformative advances in cyberinfrastructure anticipated by 2021, including the integration of 5G technology, novel computing architectures, and the vast volumes of data generated by global scientific instruments. These advances promise to reshape various sectors, enabling real-time data mapping, personalized healthcare systems, and the emergence of the industrial internet, with massive economic implications. The keynote also addressed the implications of AI and deep learning, emphasizing the need to navigate the risks that come with rapid technological growth.