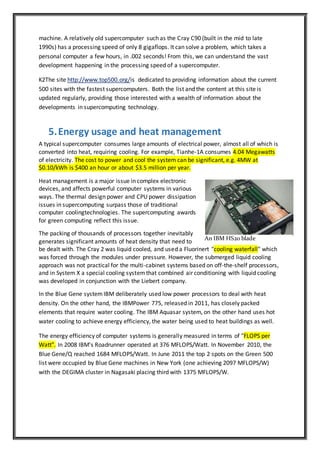

This document provides an overview of supercomputers, including their common uses, challenges, history, and top systems. Some key points:

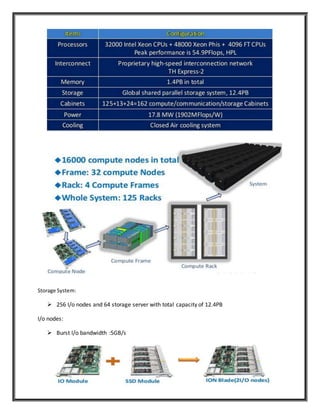

- Supercomputers are used for highly complex tasks such as weather forecasting, climate modeling, and nuclear weapons simulation. They can process vast amounts of data and perform quadrillions of calculations per second.

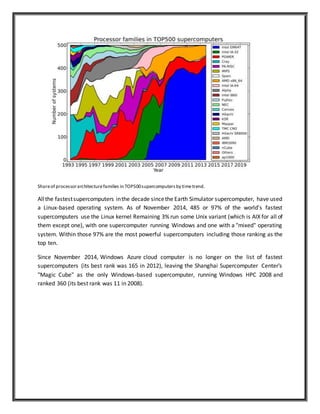

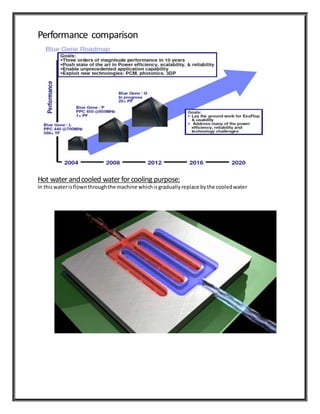

- Major challenges include cooling the systems to remove the large amounts of heat they generate and moving data between components at high speed.

- The US and Japan have historically dominated supercomputing. Early systems included the CDC 6600 (1964) and the Cray-1 (1976). Modern systems network thousands of processors together.

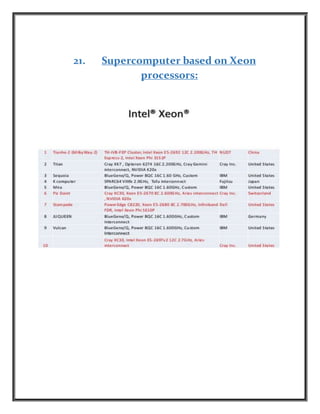

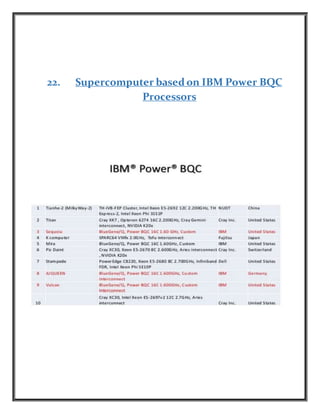

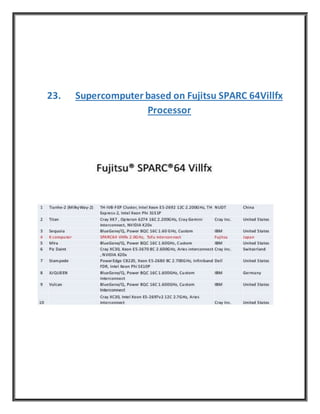

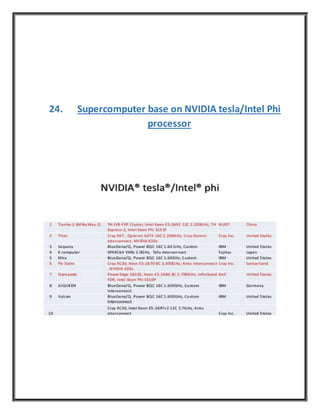

- The top supercomputers today include China's Tianhe