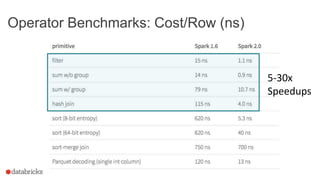

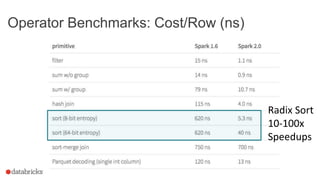

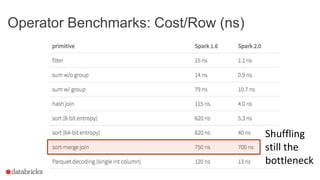

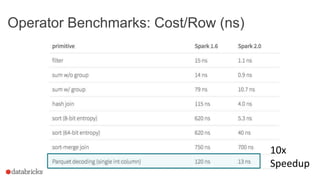

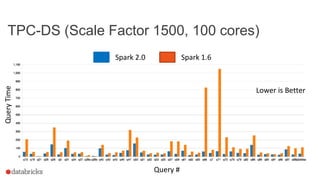

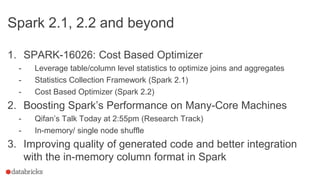

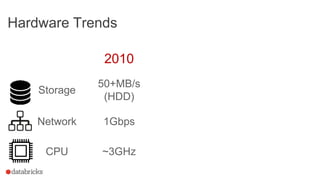

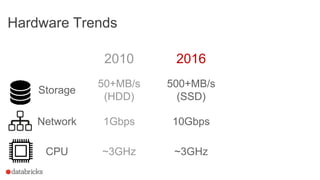

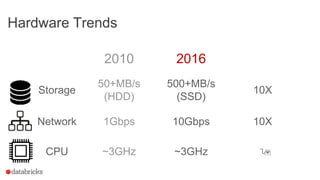

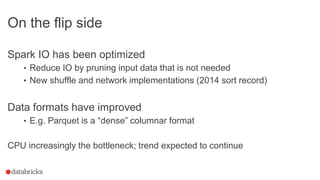

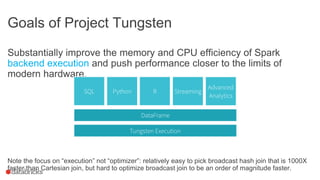

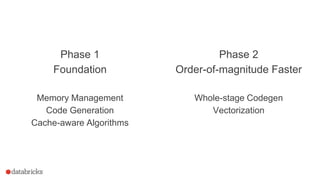

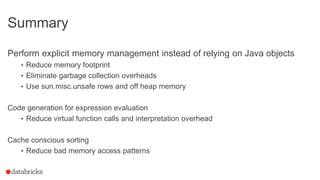

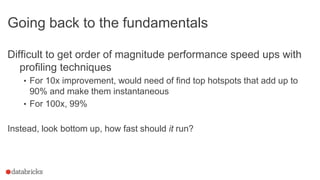

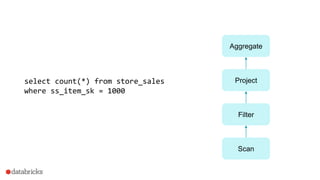

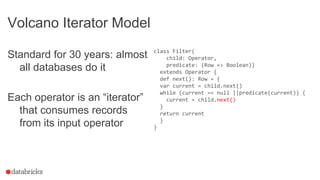

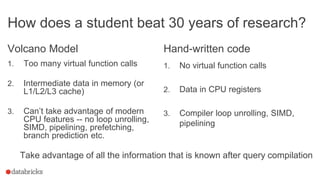

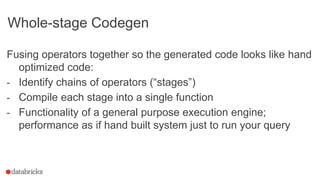

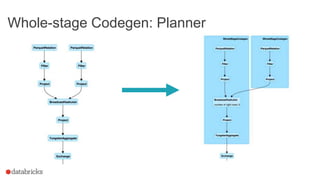

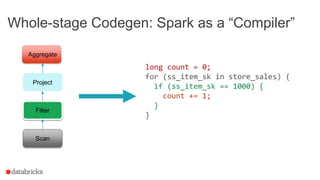

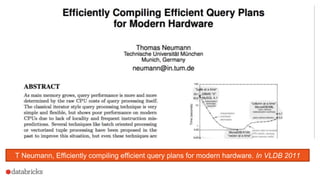

This document discusses Project Tungsten, an effort to substantially improve the memory and CPU efficiency of Spark. With IO already heavily optimized in Spark, the CPU has become the bottleneck, so Project Tungsten focuses on execution performance through explicit memory management, code generation, cache-aware algorithms, whole-stage code generation, and columnar in-memory data formats. The talk reports significant performance improvements from these techniques, such as 5-30x speedups on operators and 10-100x speedups on radix sort. Future work includes cost-based optimization and improving performance on many-core machines.
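To make the radix sort mentioned above concrete, here is a minimal sketch of a least-significant-digit radix sort in Python. It is an illustration of the general technique only, not Spark's implementation: the function name `radix_sort`, the parameters `key_bits` and `radix_bits`, and the restriction to non-negative integers are all assumptions made for this example.

```python
def radix_sort(keys, key_bits=32, radix_bits=8):
    """Sort non-negative integers below 2**key_bits (assumed input range).

    Hypothetical helper for illustration: processes radix_bits bits per pass,
    from the least significant digit to the most significant one.
    """
    keys = list(keys)
    mask = (1 << radix_bits) - 1
    for shift in range(0, key_bits, radix_bits):
        # Pass 1: histogram of the current digit.
        counts = [0] * (mask + 1)
        for k in keys:
            counts[(k >> shift) & mask] += 1

        # Prefix sums turn the histogram into starting offsets per digit.
        offsets = [0] * (mask + 1)
        total = 0
        for digit in range(mask + 1):
            offsets[digit] = total
            total += counts[digit]

        # Pass 2: stable scatter into the output buffer.
        out = [0] * len(keys)
        for k in keys:
            digit = (k >> shift) & mask
            out[offsets[digit]] = k
            offsets[digit] += 1
        keys = out
    return keys


if __name__ == "__main__":
    print(radix_sort([170, 45, 75, 90, 802, 24, 2, 66]))
```

Each pass reads the keys sequentially and performs no comparisons, which is the property that makes this family of sorts a natural fit for cache-aware execution.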

![Phase 1

Spark 1.4 - 1.6

Memory Management

Code Generation

Cache-aware Algorithms

Phase 2

Spark 2.0+

Whole-stage Code Generation

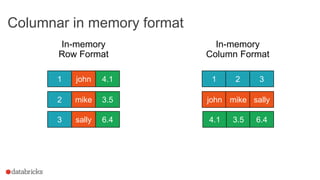

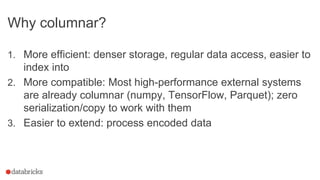

Columnar in Memory Support

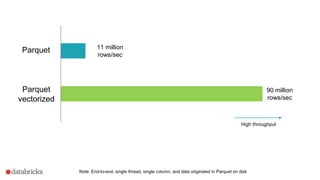

Both whole stage codegen [SPARK-12795] and the vectorized

parquet reader [SPARK-12992] are enabled by default in Spark 2.0+

Demo](https://image.slidesharecdn.com/sparkperf-sparksummiteu16-final-161103182837/85/Spark-Summit-EU-talk-by-Sameer-Agarwal-30-320.jpg)
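The idea behind whole-stage code generation can be sketched without Spark. The example below is a conceptual illustration in plain Python, not the code Spark generates: it evaluates the same filter, project, and sum pipeline once as a chain of per-row iterator operators and once as a single fused loop. All class and function names here (`Scan`, `Filter`, `Project`, `SumAgg`, `volcano_query`, `fused_query`) are hypothetical.

```python
class Scan:
    """Leaf operator: yields rows from an in-memory sequence."""
    def __init__(self, rows):
        self.rows = rows

    def __iter__(self):
        for row in self.rows:
            yield row


class Filter:
    """Passes through only the rows for which the predicate holds."""
    def __init__(self, child, predicate):
        self.child = child
        self.predicate = predicate

    def __iter__(self):
        for row in self.child:
            if self.predicate(row):
                yield row


class Project:
    """Applies an expression to every row from the child operator."""
    def __init__(self, child, expr):
        self.child = child
        self.expr = expr

    def __iter__(self):
        for row in self.child:
            yield self.expr(row)


class SumAgg:
    """Root operator: sums every row produced by the child operator."""
    def __init__(self, child):
        self.child = child

    def execute(self):
        total = 0
        for row in self.child:
            total += row
        return total


def volcano_query(values):
    # Operator-at-a-time plan: every row crosses three operator boundaries.
    plan = SumAgg(Project(Filter(Scan(values), lambda v: v % 2 == 0),
                          lambda v: v * 10))
    return plan.execute()


def fused_query(values):
    # The same query collapsed into one loop, as a hand-written version
    # would look: no per-row calls between operators.
    total = 0
    for v in values:
        if v % 2 == 0:
            total += v * 10
    return total


if __name__ == "__main__":
    data = range(10)
    print(volcano_query(data), fused_query(data))
```

Both functions compute the same result; the fused form simply removes the per-row dispatch between operators, which is the kind of overhead that fusing a whole stage into one function is meant to avoid.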