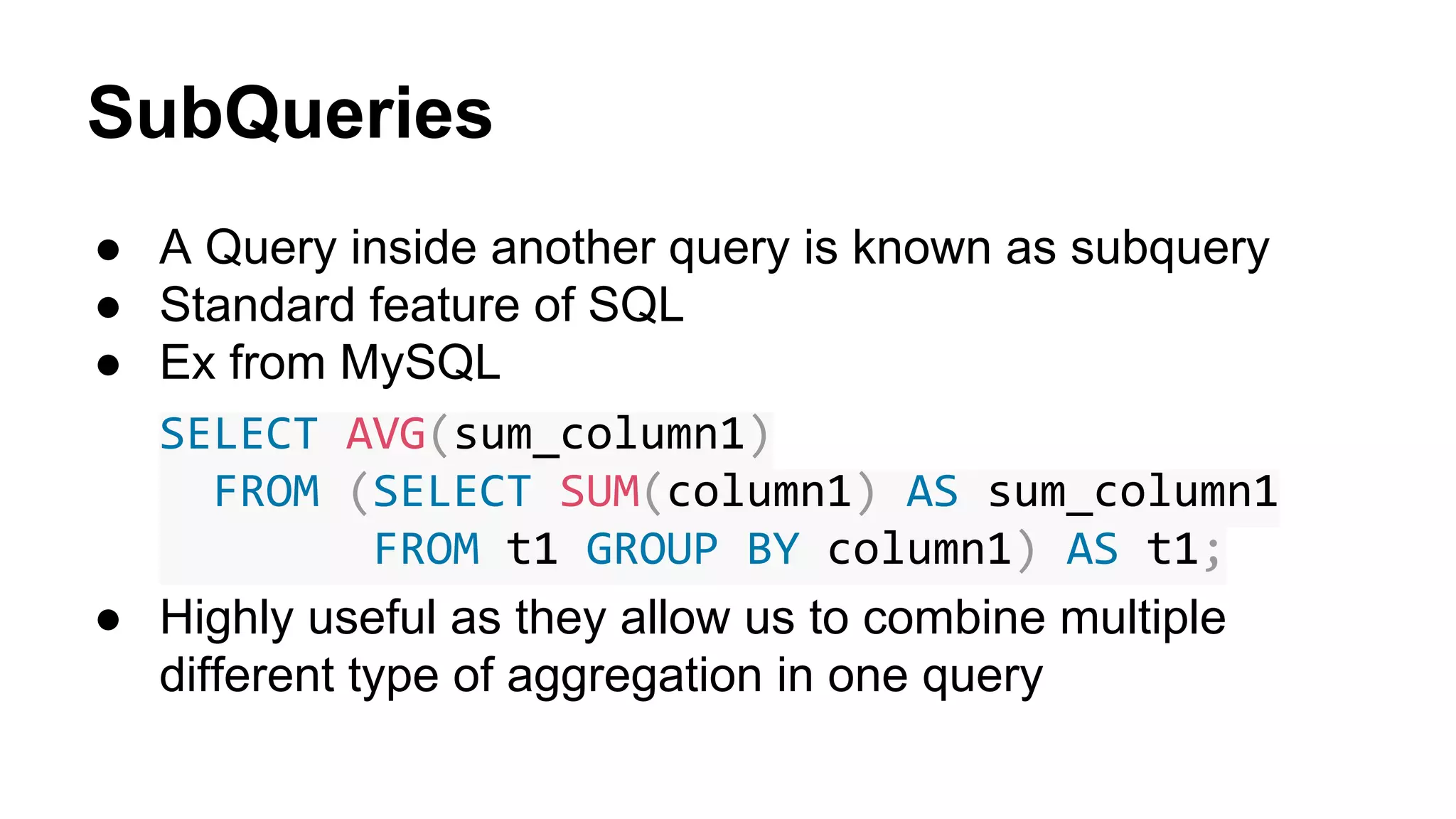

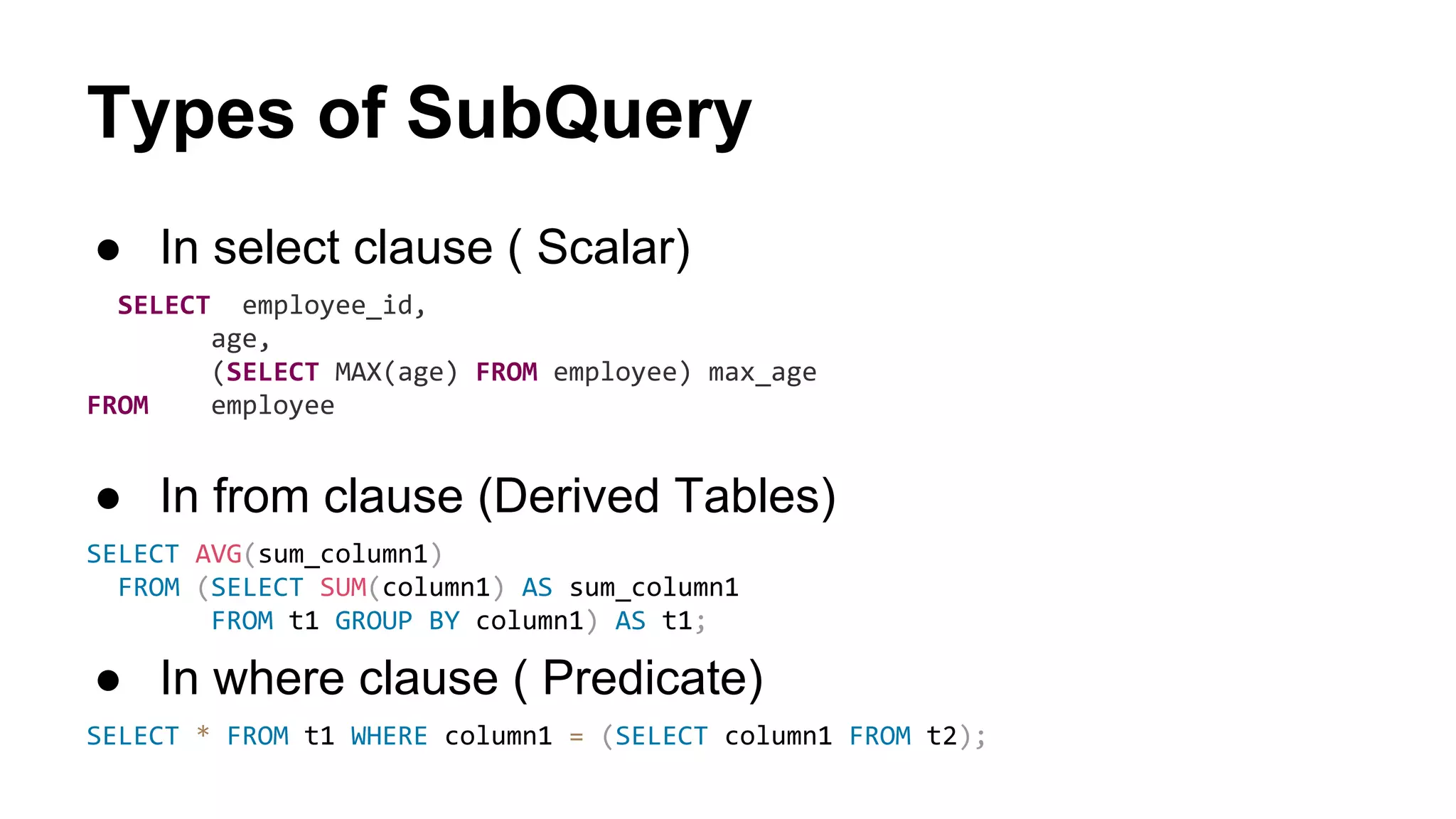

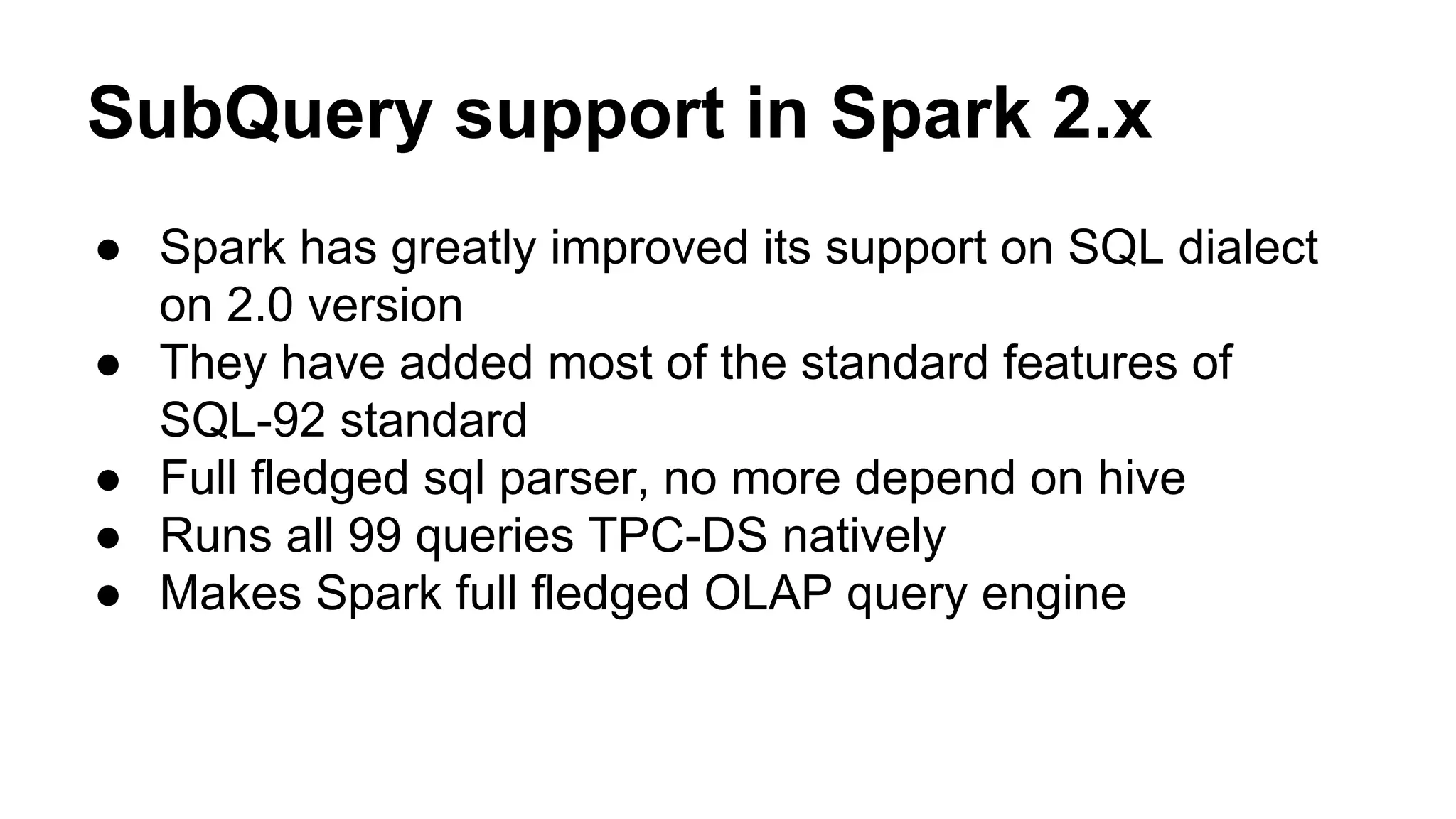

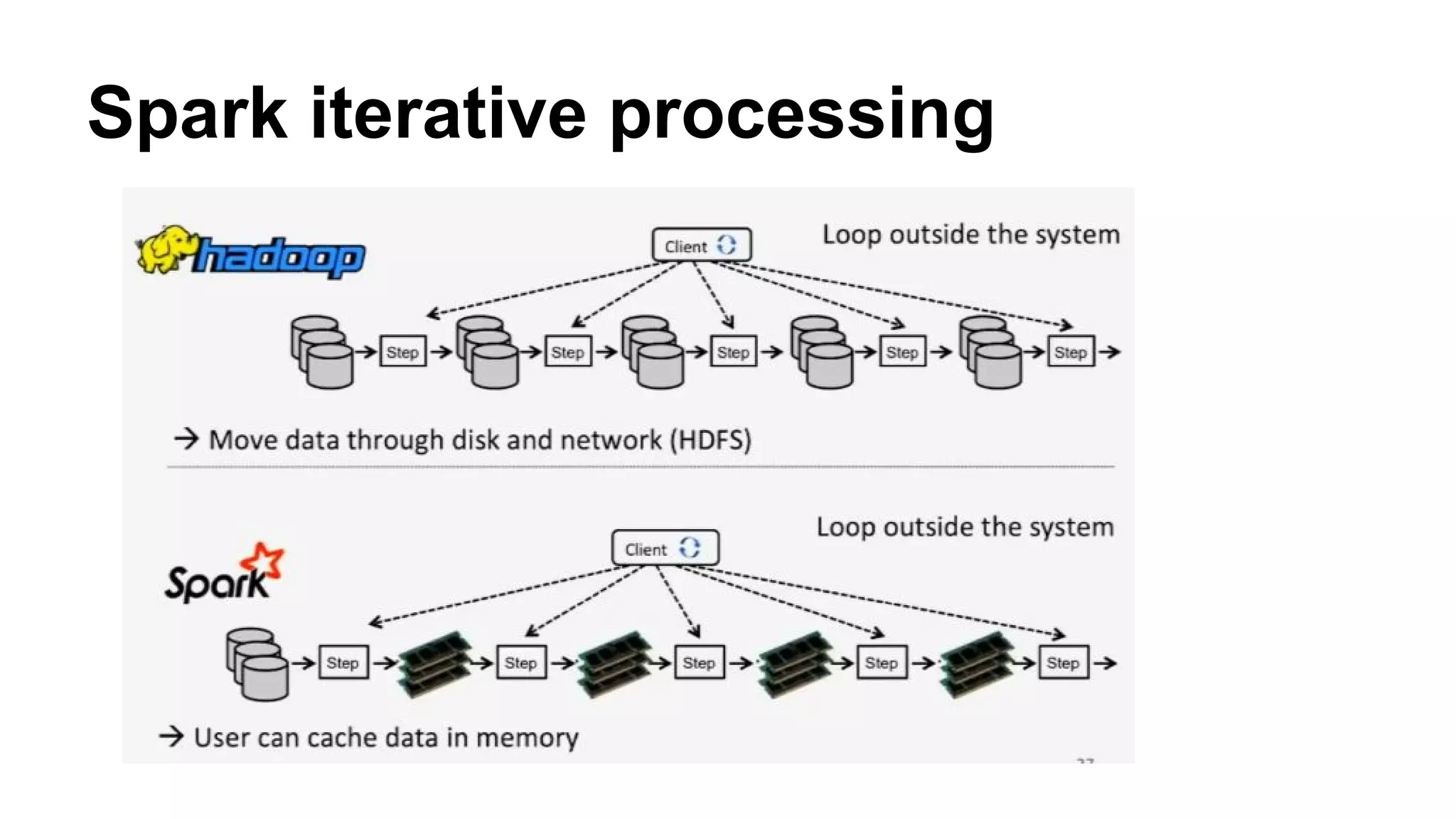

This document discusses best practices for migrating Spark applications from version 1.x to 2.0. It covers new features in Spark 2.0 such as the Dataset API, the catalog API, subqueries, and checkpointing for iterative algorithms. It recommends changes to existing best practices around the choice of serializer, cache format, use of broadcast variables, and choice of cluster manager. It also discusses how Spark 2.0's improved SQL support affects the use of HiveContext.