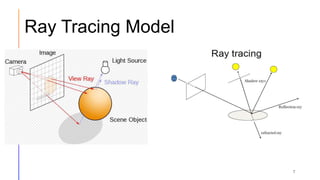

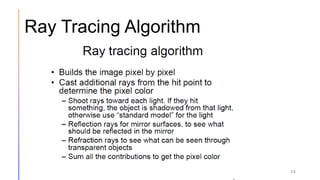

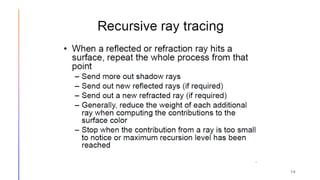

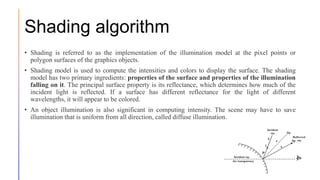

This document discusses rendering algorithms and techniques. It begins by defining rendering as the process of generating an image from a 2D or 3D model. It distinguishes two main categories: real-time rendering, used for interactive graphics where frame rate matters, and pre-rendering, used where image quality takes priority over speed. The three main computational techniques it covers are ray casting, ray tracing, and shading. Ray tracing approximates physically based lighting by tracing the paths of light rays through the scene. Shading determines the color and brightness of a surface point from attributes such as its diffuse reflectance and the contribution of each light source.
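As a rough illustration of how ray casting and shading fit together, the sketch below casts a single ray at a sphere and then applies a Lambertian diffuse term at the hit point. It is not taken from the document: the function names `ray_sphere_hit` and `lambert_diffuse`, the choice of a sphere as the scene, and the scalar `albedo` and `light_intensity` parameters are all assumptions made for this example.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the nearest positive distance along the ray to the sphere, or None.

    Assumes `direction` is unit length, so the quadratic's leading coefficient is 1.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere entirely
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; skip roots behind (or at) the ray origin.
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t > 1e-9:
            return t
    return None


def lambert_diffuse(normal, to_light, albedo, light_intensity):
    """Diffuse shade for one light: albedo * intensity * max(0, N . L).

    Assumes `normal` and `to_light` are unit vectors (hypothetical scalar shading).
    """
    n_dot_l = sum(n * l for n, l in zip(normal, to_light))
    return albedo * light_intensity * max(0.0, n_dot_l)


# Cast one ray from the origin down the -z axis at a unit sphere centred at z = -5.
origin, direction = (0.0, 0.0, 0.0), (0.0, 0.0, -1.0)
center, radius = (0.0, 0.0, -5.0), 1.0
t = ray_sphere_hit(origin, direction, center, radius)

if t is not None:
    hit = tuple(o + t * d for o, d in zip(origin, direction))
    normal = tuple((h - c) / radius for h, c in zip(hit, center))
    # A light located back toward the camera, along +z from the hit point.
    shade = lambert_diffuse(normal, (0.0, 0.0, 1.0), albedo=0.8, light_intensity=1.0)
```

A full ray tracer would repeat this for every pixel and recursively spawn reflection, refraction, and shadow rays from each hit point; the sketch stops at the first hit, which is the ray-casting case.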