This document provides a comprehensive overview of CPU architecture, detailing its history, design principles, the significance of the von Neumann architecture, and challenges such as the von Neumann bottleneck. It also discusses new developments in processor designs, including multi-core architectures and parallel computing innovations, while emphasizing the need for new architectures to overcome existing limitations. The future of CPU design involves addressing complexity and heat management challenges presented by integrating multiple cores into CPUs.

![5

it in the last years a lot of advances were made into the subject,

in order to solve the problems that this architecture presents.

There were some attempts to introduce some “non-von

Neumann” Architectures, but finally the programs developed

into them finally ran into the von Neumann Architecture.

The last investigations and developments gives as to the run

of finding new architectures to develop the parallelism, and it

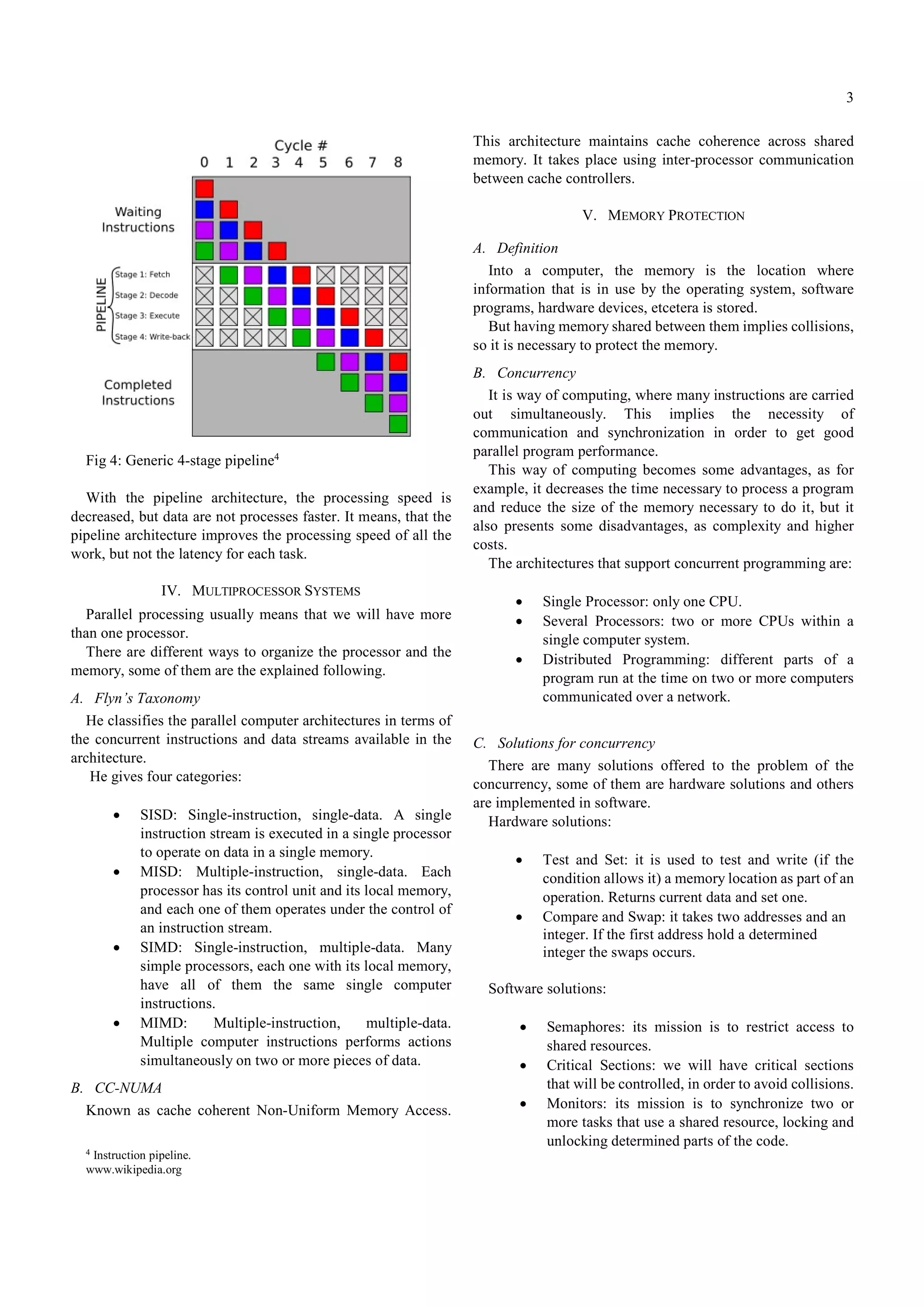

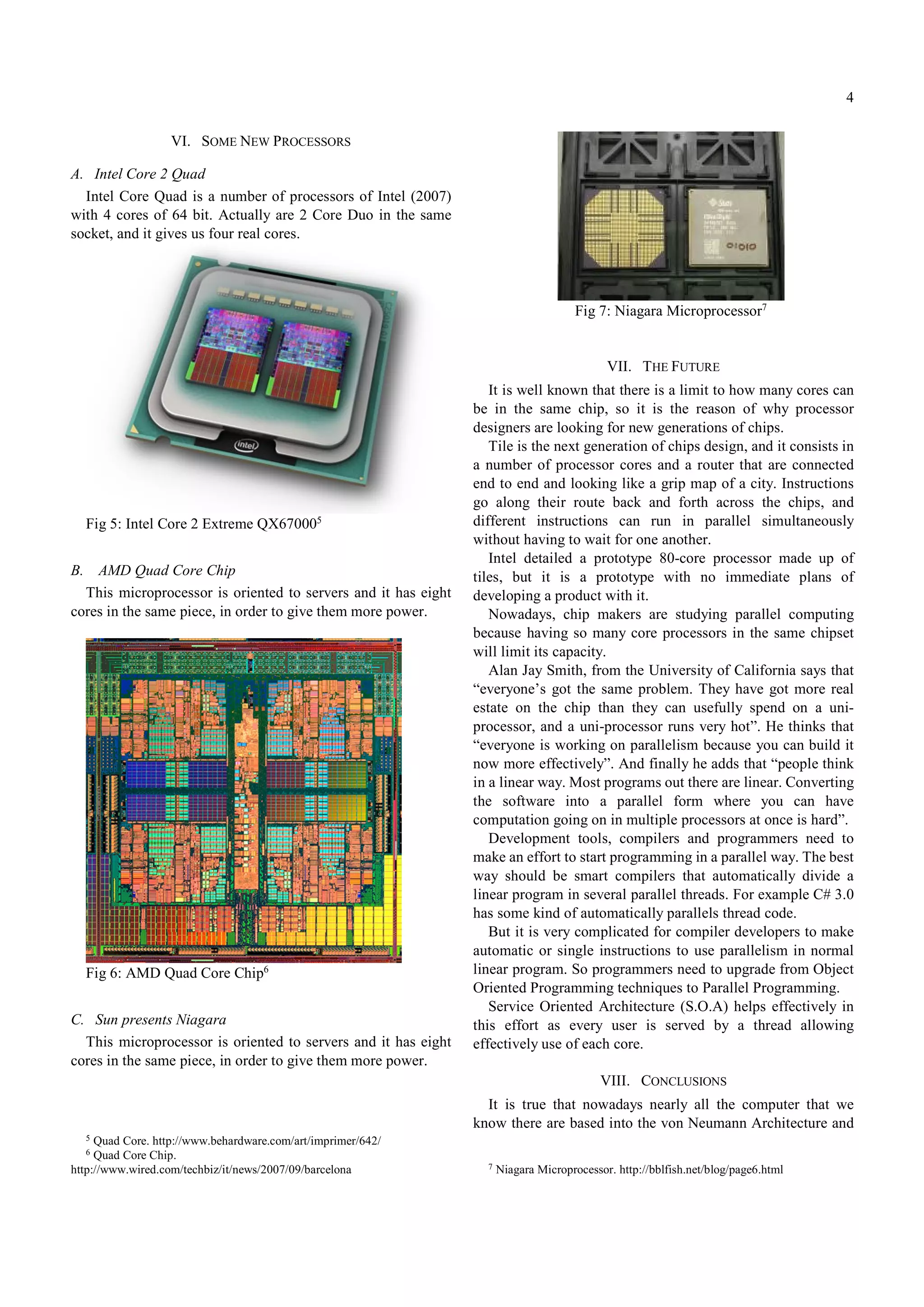

gives us some new microprocessors as the Intel Core 2 Quad,

from Intel, the Quad-Core Chip from AMD and the Niagara

from Sun Microsystems.

But all of this raises the question of how many cores can be placed on the same CPU while avoiding rising temperatures, increasingly complex circuits, and the cost of communication between cores. That question leads to the newest line of research: tiles.

Even efforts to improve the ability of computers to handle CPU temperature have a limit. Air cooling improved in previous years. Nowadays some computers, even personal computers, have water cooling systems. But these improvements in heat dissipation are reaching their limit in the trade-off between cost and efficiency.

A new CPU architecture is needed. Other solutions do not solve the main problem; they only extend the life of the von Neumann architecture.

Integrating more cores into a CPU chip is a complex engineering task that leads to only a small efficiency gain. CPU companies need a large research and development budget to obtain a small amount of improvement. That budget would be better spent on new architectures.
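
One way to see why adding cores can yield only small gains is Amdahl's law, which bounds the speedup of a program whose serial part cannot be spread across cores. The paper does not name this law, so the sketch below is an illustrative assumption and not the authors' own analysis. The function name `amdahl_speedup` and the 90% parallel fraction are hypothetical choices made for the example.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup under Amdahl's law.

    parallel_fraction: share of the work (0.0 to 1.0) that can run in parallel.
    cores: number of cores the parallel share is divided across.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    # The serial share always runs on one core, whatever the core count.
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workload: 90% of the work is parallelisable (assumed figure).
    for cores in (1, 2, 4, 8, 16, 64):
        print(f"{cores:>2} cores -> {amdahl_speedup(0.9, cores):.2f}x")
```

Under this assumed 90% figure, the speedup can never exceed 1 / 0.1 = 10x however many cores are added, which is consistent with the diminishing returns described above. The model ignores heat, circuit complexity, and inter-core communication, all of which would reduce the real gain further.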

To sum up, I want to add that some very large corporations currently need very powerful CPUs for their servers. Every day, many CPU designers work to meet that need, but more powerful CPUs always bring greater complexity and cost.

](https://image.slidesharecdn.com/newdevelopmentsinthecpuarchitecture-190225222941/75/New-Developments-in-the-CPU-Architecture-5-2048.jpg)