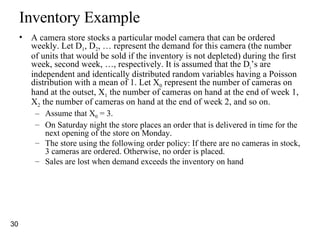

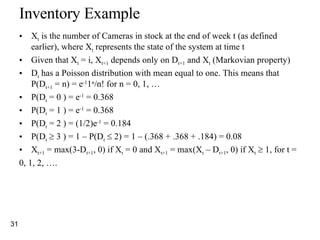

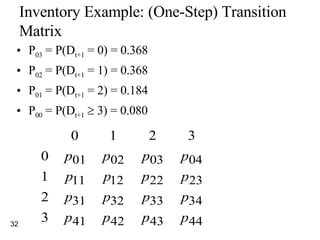

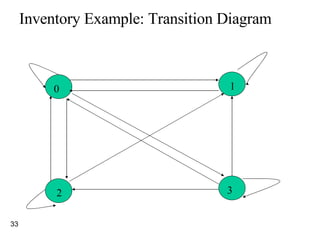

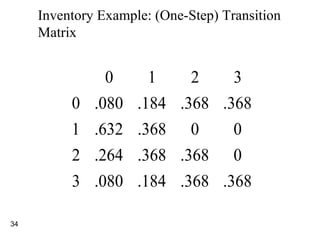

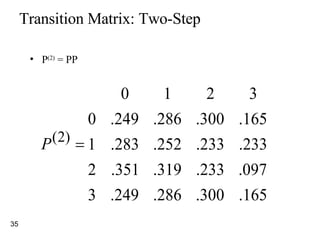

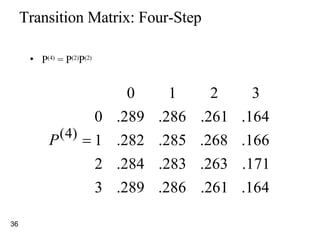

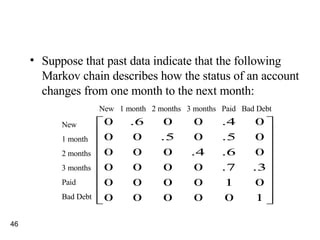

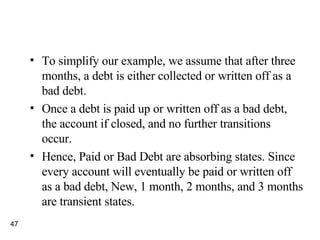

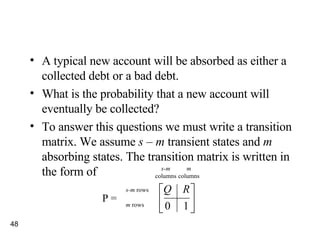

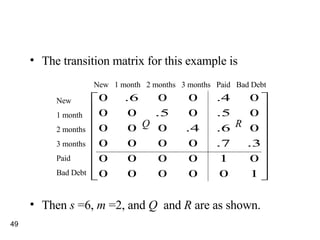

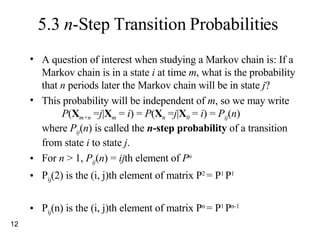

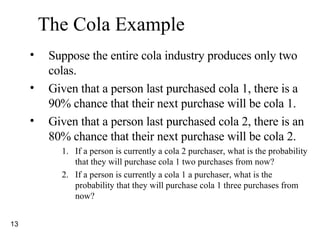

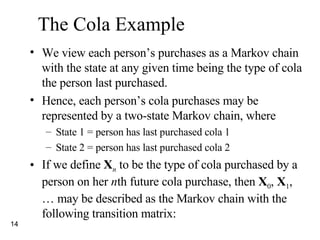

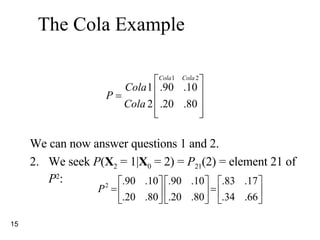

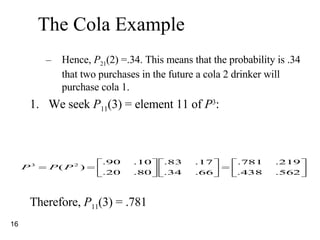

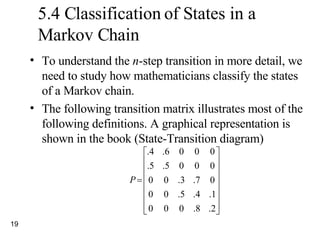

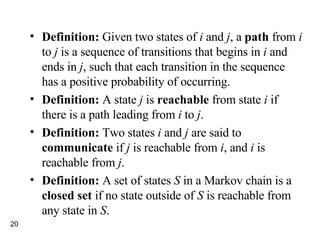

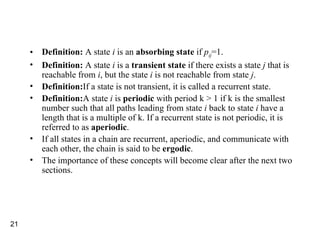

This document provides a summary of Markov chains. It begins by defining stochastic processes and Markov chains. A Markov chain is a stochastic process where the probability of the next state depends only on the current state, not on the sequence of events that preceded it. The document discusses n-step transition probabilities, classification of states, and steady-state probabilities. It provides examples of Markov chains for cola purchases and camera store inventory to illustrate the concepts.

![We call the vector q = [q_1, q_2, …, q_s] the initial probability distribution for the Markov chain. In most applications, the transition probabilities are displayed as an s × s transition probability matrix P, whose entry in row i and column j is the one-step transition probability p_ij.](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-8-320.jpg)

![Many times we do not know the state of the Markov chain at time 0. We can then determine the probability that the system is in state j at time n by the following reasoning. Probability of being in state j at time n = Σ_i q_i P_ij(n), where q = [q_1, q_2, …, q_s] is the initial probability distribution. Hence, q_n = q_0 P^n = q_{n-1} P. Example: q_0 = (.4, .6), so q_1 = (.4, .6) P = (.48, .52).](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-17-320.jpg)
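
The recursion q_n = q_{n-1} P on the slide above can be sketched in a few lines of plain Python. This is a minimal illustration, not the deck's own code: the function names `step` and `distribution_after` are hypothetical, and the matrix `P` is the two-state cola matrix inferred from the steady-state equations quoted later in this deck (π_1 = .90π_1 + .20π_2, π_2 = .10π_1 + .80π_2).

```python
def step(q, P):
    """Return the row vector q P: the distribution one transition after q."""
    s = len(P)
    return [sum(q[i] * P[i][j] for i in range(s)) for j in range(s)]


def distribution_after(q0, P, n):
    """Return q_n = q_0 P^n by applying q_k = q_{k-1} P exactly n times."""
    q = list(q0)
    for _ in range(n):
        q = step(q, P)
    return q


# Assumed cola transition matrix (rows = current state, columns = next state).
P = [[0.90, 0.10],
     [0.20, 0.80]]
q0 = [0.4, 0.6]  # initial distribution from the slide's example

q1 = distribution_after(q0, P, 1)
print([round(x, 4) for x in q1])  # [0.48, 0.52]
```

Under that assumed matrix, one step reproduces the slide's q_1 = (.48, .52); larger values of `n` give the distribution further into the future.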

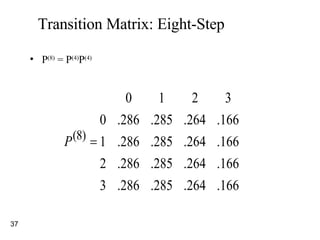

![5.5 Steady-State Probabilities and Mean First Passage Times. Steady-state probabilities are used to describe the long-run behavior of a Markov chain. Theorem 1: Let P be the transition matrix for an s-state ergodic chain. Then there exists a vector π = [π_1 π_2 … π_s] such that, as n → ∞, P^n approaches the matrix in which every row equals π.](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-22-320.jpg)

![Theorem 1 tells us that for any initial state i, lim_{n→∞} P_ij(n) = π_j. The vector π = [π_1 π_2 … π_s] is often called the steady-state distribution, or equilibrium distribution, for the Markov chain. Hence, the steady-state probabilities are independent of the initial probability distribution defined over the states.](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-23-320.jpg)

![Steady-State Probabilities. The vector π = [π_1, π_2, …, π_s] is often known as the steady-state distribution for the Markov chain. For large n and all i, P_ij(n+1) ≈ P_ij(n) ≈ π_j. In matrix form, π = πP. For any n and any i, P_i1(n) + P_i2(n) + … + P_is(n) = 1. As n → ∞, we have π_1 + π_2 + … + π_s = 1.](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-25-320.jpg)
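
The observation that the n-step probabilities stop changing for large n suggests a simple numerical approach: keep multiplying a distribution by P until it no longer moves. The sketch below does this by repeated multiplication (power iteration), which is a swapped-in numerical technique rather than the algebraic solution used on the slides. The function name `steady_state`, the tolerance, and the iteration cap are illustrative assumptions, and the matrix is the cola matrix inferred from the steady-state equations in this deck.

```python
def steady_state(P, tol=1e-12, max_iter=10_000):
    """Approximate pi with pi = pi P by iterating q <- q P until it settles.

    Assumes the chain is ergodic, so the limit exists and does not depend
    on the starting distribution.
    """
    s = len(P)
    pi = [1.0 / s] * s  # uniform start; any distribution would do
    for _ in range(max_iter):
        nxt = [sum(pi[i] * P[i][j] for i in range(s)) for j in range(s)]
        if max(abs(a - b) for a, b in zip(nxt, pi)) < tol:
            return nxt
        pi = nxt
    raise RuntimeError("did not converge; the chain may not be ergodic")


# Assumed cola transition matrix (rows sum to 1).
P = [[0.90, 0.10],
     [0.20, 0.80]]

pi = steady_state(P)
print([round(x, 4) for x in pi])  # [0.6667, 0.3333]
```

For small chains the linear system π = πP with π_1 + … + π_s = 1 can be solved exactly by hand, as the slides do; the iteration is mainly a convenient check.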

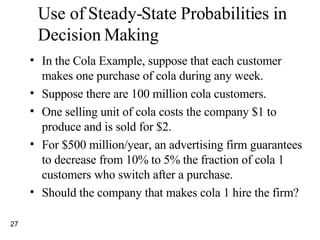

![At present, a fraction π_1 = ⅔ of all purchases are cola 1 purchases, since the steady-state equations are π_1 = .90π_1 + .20π_2 and π_2 = .10π_1 + .80π_2; replacing the second equation by π_1 + π_2 = 1 and solving gives π_1 = ⅔ and π_2 = ⅓. Each purchase of cola 1 earns the company a $1 profit. We can calculate the annual profit as $3,466,666,667 [2/3(100 million)(52 weeks)$1]. The advertising firm is offering to change the P matrix to P_1, with rows (.95, .05) and (.20, .80).](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-28-320.jpg)

![For P_1, the steady-state equations become π_1 = .95π_1 + .20π_2 and π_2 = .05π_1 + .80π_2. Replacing the second equation by π_1 + π_2 = 1 and solving, we obtain π_1 = .8 and π_2 = .2. Now the cola 1 company’s annual profit will be $3,660,000,000 [.8(100 million)(52 weeks)$1 − ($500 million)]. Hence, the cola 1 company should hire the ad agency.](https://image.slidesharecdn.com/markov-chains-1203810979963803-4/85/Markov-Chains-29-320.jpg)
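
The profit comparison on the last two slides is plain arithmetic and can be checked with exact fractions. The sketch below is an illustration only: the constant and function names are hypothetical, and it reads the bracketed formulas as 100 million purchases per week over 52 weeks, with the $500 million treated as a yearly fee.

```python
from fractions import Fraction

PURCHASES_PER_WEEK = 100_000_000  # "100 million" in the slides (assumed weekly)
WEEKS_PER_YEAR = 52
PROFIT_PER_PURCHASE = 1           # dollars earned per cola 1 purchase
AD_FEE = 500_000_000              # dollars, assumed to be charged per year


def annual_profit(share, fee=0):
    """Annual profit for a given steady-state cola 1 share, minus any fee."""
    return share * PURCHASES_PER_WEEK * WEEKS_PER_YEAR * PROFIT_PER_PURCHASE - fee


current = annual_profit(Fraction(2, 3))               # pi_1 = 2/3 without ads
with_ads = annual_profit(Fraction(4, 5), fee=AD_FEE)  # pi_1 = .8 with ads

print(round(current))      # 3466666667
print(round(with_ads))     # 3660000000
print(with_ads > current)  # True
```

Under these readings the advertising option comes out ahead by roughly $193 million per year, which matches the slides' conclusion.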