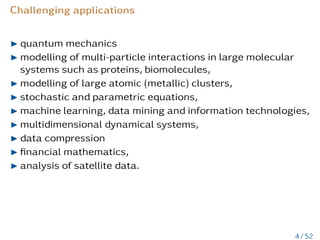

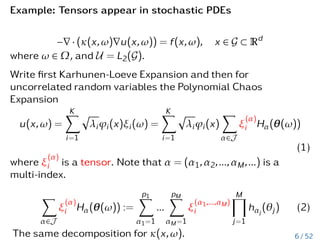

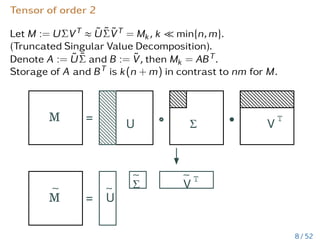

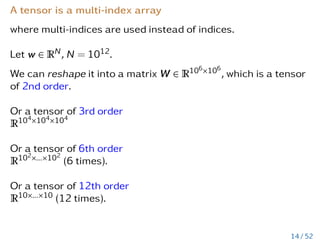

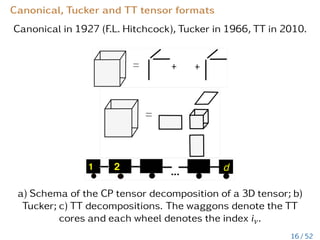

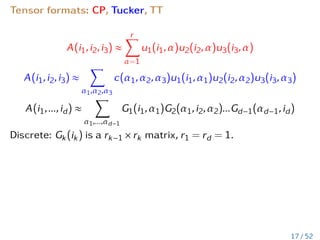

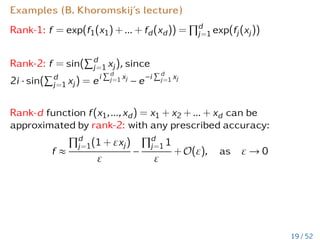

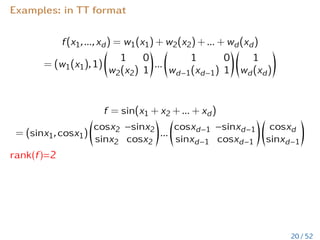

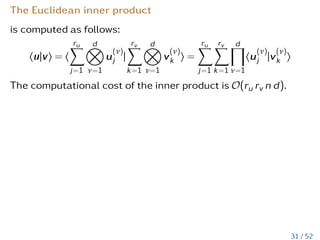

The document discusses low-rank tensor approximations aimed at reducing computational time and memory usage while extracting knowledge from high-dimensional datasets. It outlines historical developments, applications in fields like quantum mechanics and machine learning, and the importance of tensor formats and algorithms in handling complex data. Additionally, it highlights the challenges of high-dimensional data, such as the curse of dimensionality, and presents various examples of tensor applications in scientific computing.

![Curse of dimensionality

Assume we have $n^d$ data. Our aim is to reduce storage/complexity from $O(n^d)$ to $O(dn)$.

If $n = 100$ and $d = 10$, then just to store the data one needs $8 \cdot 100^{10} = 8 \cdot 10^{20}$ bytes $= 8 \cdot 10^{8}$ terabytes.

If we assume that a modern computer compares $10^7$ numbers per second, then the total time to compare $10^{20}$ elements will be $10^{13}$ seconds, or $\approx 3 \cdot 10^{5}$ years. In some chemical applications we had $n = 100$ and $d = 800$.

- How to compute maxima and minima?
- How to compute level sets, i.e. all elements from an interval $[a,b]$?
- How to compute the number of elements in an interval $[a,b]$?

5 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-6-320.jpg)
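The arithmetic on the curse-of-dimensionality slide can be reproduced in a few lines. This is only a sketch: the 8 bytes per number (double precision) and the variable names are assumptions, while `n`, `d` and the comparison rate are the values quoted on the slide.

```python
# Back-of-the-envelope numbers for the full tensor (values from the slide).
n, d = 100, 10
bytes_per_number = 8            # assumption: double precision
compares_per_second = 10**7     # rate assumed on the slide

entries = n**d                                        # size of the full tensor
storage_tb = entries * bytes_per_number / 10**12      # 1 TB = 10**12 bytes
seconds = entries / compares_per_second               # one pass over all entries
years = seconds / (365.25 * 24 * 3600)

target_entries = d * n          # the O(dn) target, rank factor ignored

print(f"full tensor: {entries:.1e} entries, {storage_tb:.1e} TB")
print(f"one pass of comparisons: {seconds:.1e} s, about {years:.1e} years")
print(f"O(dn) target: {target_entries} numbers")
```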

![Final discretized stochastic PDE

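$Au = f$, where

$$A := \sum_{l=1}^{s} \tilde{A}_l \otimes \bigotimes_{\mu=1}^{M} \Delta_{l\mu}, \qquad \tilde{A}_l \in \mathbb{R}^{N \times N},\ \Delta_{l\mu} \in \mathbb{R}^{R_\mu \times R_\mu},$$

$$u := \sum_{j=1}^{r} u_j \otimes \bigotimes_{\mu=1}^{M} u_{j\mu}, \qquad u_j \in \mathbb{R}^{N},\ u_{j\mu} \in \mathbb{R}^{R_\mu},$$

$$f := \sum_{k=1}^{R} \tilde{f}_k \otimes \bigotimes_{\mu=1}^{M} g_{k\mu}, \qquad \tilde{f}_k \in \mathbb{R}^{N},\ g_{k\mu} \in \mathbb{R}^{R_\mu}.$$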

Examples of stochastic Galerkin matrices:

And then solve iteratively with a tensor preconditioner [PhD of E. Zander, 2012]

M. Espig, W. Hackbusch, A. Litvinenko, H.G. Matthies, P. Wähnert, Efficient low-rank approximation of the stochastic Galerkin matrix in tensor formats, Computers & Mathematics with Applications 67 (4), 818-829, 2014

Also see E. Ullmann, Chr. Schwab, B. Khoromskij, Schneider, Ballani, Kressner, Tobler and many others.

7 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-8-320.jpg)
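Because both the operator and the vector on this slide are sums of Kronecker products, the product $Au$ can be formed term by term using $(\tilde{A} \otimes \Delta)(u \otimes w) = (\tilde{A}u) \otimes (\Delta w)$. The sketch below checks this for a single stochastic factor ($M = 1$); the sizes, the random data and all variable names are illustrative assumptions, not the Galerkin matrices of the lecture.

```python
import numpy as np

rng = np.random.default_rng(3)
N, R1 = 5, 3        # spatial size and size of the single stochastic factor
s, r = 2, 2         # number of terms in A and in u

# Hypothetical Kronecker terms: A = sum_l A_l (x) Delta_l, u = sum_j u_j (x) w_j.
A_terms = [(rng.standard_normal((N, N)), rng.standard_normal((R1, R1)))
           for _ in range(s)]
u_terms = [(rng.standard_normal(N), rng.standard_normal(R1))
           for _ in range(r)]

# Structured product: s*r terms, each (A_l u_j) (x) (Delta_l w_j).
Au_terms = [(Al @ uj, Dl @ wj) for Al, Dl in A_terms for uj, wj in u_terms]

# Dense reference, affordable only because the sizes are tiny.
A_full = sum(np.kron(Al, Dl) for Al, Dl in A_terms)
u_full = sum(np.kron(uj, wj) for uj, wj in u_terms)
Au_full = sum(np.kron(a, b) for a, b in Au_terms)

print(np.allclose(A_full @ u_full, Au_full))
```

The representation rank grows from $r$ to $s \cdot r$ after one product, which is why iterative solvers in these formats need rank truncation.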

![Arithmetic operations with low-rank matrices

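Let $v \in \mathbb{R}^{m}$.

Suppose $M_k = AB^T \in \mathbb{R}^{n \times m}$, $A \in \mathbb{R}^{n \times k}$, $B \in \mathbb{R}^{m \times k}$ is given.

MV product: $M_k v = AB^T v = A(B^T v)$. Cost $O(km + kn)$.

Suppose $M' = CD^T$, $C \in \mathbb{R}^{n \times k}$ and $D \in \mathbb{R}^{m \times k}$.

Matrix addition: $M_k + M' = A_{new} B_{new}^T$, $A_{new} := [A\ C] \in \mathbb{R}^{n \times 2k}$ and $B_{new} := [B\ D] \in \mathbb{R}^{m \times 2k}$.

Cost of rank truncation from rank $2k$ to $k$ is $O((n + m)k^2 + k^3)$.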

9 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-10-320.jpg)
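The three operations on this slide can be sketched with NumPy as follows. The truncation routine uses two thin QR factorizations and one small SVD, which is one common way to reach the quoted $O((n+m)k^2 + k^3)$ cost; the slide does not say which algorithm is meant, so treat that choice, the function names and the random test data as assumptions.

```python
import numpy as np

def lowrank_matvec(A, B, v):
    """Return (A B^T) v without forming A B^T; cost O(k(n + m))."""
    return A @ (B.T @ v)

def lowrank_add(A, B, C, D):
    """Factors of A B^T + C D^T: concatenate columns (rank grows to 2k)."""
    return np.hstack([A, C]), np.hstack([B, D])

def lowrank_truncate(A, B, k):
    """Best rank-k approximation of A B^T via thin QRs and a small SVD."""
    QA, RA = np.linalg.qr(A)               # O(n r^2), r = number of columns
    QB, RB = np.linalg.qr(B)               # O(m r^2)
    U, s, Vt = np.linalg.svd(RA @ RB.T)    # O(r^3), only an r x r matrix
    return QA @ (U[:, :k] * s[:k]), QB @ Vt[:k].T

rng = np.random.default_rng(0)
n, m, k = 50, 40, 3
A, B = rng.standard_normal((n, k)), rng.standard_normal((m, k))
C, D = rng.standard_normal((n, k)), rng.standard_normal((m, k))
v = rng.standard_normal(m)

A_new, B_new = lowrank_add(A, B, C, D)          # rank-2k representation
A_k, B_k = lowrank_truncate(A_new, B_new, k)    # truncated back to rank k
err = np.linalg.norm(A_new @ B_new.T - A_k @ B_k.T, 2)
print(f"spectral-norm truncation error: {err:.3e}")
```

By the Eckart-Young theorem the printed error equals the $(k+1)$-th singular value of $M_k + M'$.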

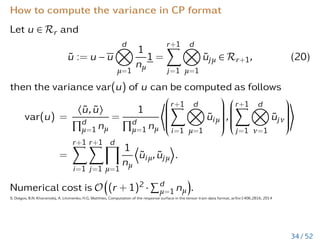

![Post-processing: Compute mean and variance

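Let $W := [v_1, v_2, \ldots, v_m]$, where $v_i$ are vectors (e.g., solution vectors of a Navier-Stokes equation).

Given tSVD $W_k = AB^T \approx W \in \mathbb{R}^{n \times m}$, $A := U_k S_k$, $B := V_k$.

$$\bar{v} = \frac{1}{m} \sum_{i=1}^{m} v_i = \frac{1}{m} \sum_{i=1}^{m} A \cdot b_i = A\bar{b}, \qquad (3)$$

$$C = \frac{1}{m-1} W_c W_c^T = \frac{1}{m-1} AB^T BA^T = \frac{1}{m-1} AA^T. \qquad (4)$$

Equation (4) uses $B^T B = I$ (the columns of $B = V_k$ are orthonormal) and takes $AB^T$ to be the tSVD of the centred matrix $W_c$.

Diagonal of $C$ can be computed with the complexity $O(k^2(m + n))$.

If $\|W - W_k\| \le \varepsilon$, then

a) $\|\bar{v} - \bar{v}_k\| \le \frac{1}{\sqrt{n}}\,\varepsilon$,

b) $\|C - C_k\| \le \frac{1}{m-1}\,\varepsilon^2$.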

10 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-11-320.jpg)
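A minimal NumPy sketch of this post-processing step is given below. Note one deliberate difference from equation (4): instead of assuming a tSVD of the centred matrix, the sketch centres the factor $B$, using $W_c = A(B - \mathbf{1}\bar{b}^T)^T$, so that the same factors serve for both the mean and the variance. The test matrix is synthetic and exactly of rank $k$; all names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m, k = 200, 30, 5
# Synthetic snapshot matrix of exact rank k (stands in for m solution vectors).
W = rng.standard_normal((n, k)) @ rng.standard_normal((k, m))

# Truncated SVD: W_k = A B^T with A = U_k S_k, B = V_k.
U, s, Vt = np.linalg.svd(W, full_matrices=False)
A = U[:, :k] * s[:k]
B = Vt[:k].T

# Mean, eq. (3): v_bar = A b_bar, where b_bar averages the rows of B.
mean_lr = A @ B.mean(axis=0)

# Variance = diag(C): centre B, build the k x k Gram matrix, contract with A.
Bc = B - B.mean(axis=0)                                   # m x k
G = Bc.T @ Bc                                             # O(m k^2)
var_lr = np.einsum("ik,kl,il->i", A, G, A) / (m - 1)      # O(n k^2)

print(np.allclose(mean_lr, W.mean(axis=1)),
      np.allclose(var_lr, W.var(axis=1, ddof=1)))
```

The dense $n \times n$ covariance matrix is never formed; the total work matches the $O(k^2(m+n))$ quoted on the slide.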

![Example from CFD and aerodynamics

Inflow and airfoil shape uncertain.

Data compression achieved by updated SVD: made from $m = 600$ MC simulations, SVD is updated every 10 samples.

$n = 260{,}000$

Updated SVD: relative errors and memory requirements:

| rank $k$ | pressure | turb. kin. energy | memory [MB] |
|---|---|---|---|
| 10 | 1.9e-2 | 4.0e-3 | 21 |
| 20 | 1.4e-2 | 5.9e-3 | 42 |
| 50 | 5.3e-3 | 1.5e-4 | 104 |

Dense matrix $M \in \mathbb{R}^{260000 \times 600}$ costs 1250 MB storage.

1. A. Litvinenko, H.G. Matthies, T.A. El-Moselhy, Sampling and low-rank tensor approximation of the response surface, Monte Carlo and Quasi-Monte Carlo Methods 2012, 535-551, 2013

2. A. Litvinenko, H.G. Matthies, Numerical methods for uncertainty quantification and Bayesian update in aerodynamics, Management and Minimisation of Uncertainties and Errors in Numerical Aerodynamics, pp. 265-282, Springer, Berlin, 2013

11 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-12-320.jpg)

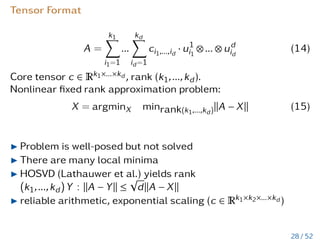

![Example: a high-dimensional PDE

$$-\nabla^2 u = f \ \text{ on } G = [0,1]^d \ \text{ with } u|_{\partial G} = 1,$$

and the right-hand side

$$f(x_1, \ldots, x_d) \propto \sum_{k=1}^{d} \prod_{\ell=1,\, \ell \neq k}^{d} x_\ell (1 - x_\ell).$$

Solved via finite-difference method with $n = 100$ grid points in each direction.

Tensor $u$ has $N = n^d$ entries.

Applications: computing electron density and Hartree potential of molecules (see Diss. of M. Espig).

12 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-13-320.jpg)

![Comp. time to compute the maximum

| $d$ | # locations $N = n^d$ | ≈ years [a] to inspect all $N$ | actual time [s] (see Espig's diss.) |
|---|---|---|---|
| 25 | $10^{50}$ | $1.6 \times 10^{33}$ | 0.16 |
| 50 | $10^{100}$ | $1.6 \times 10^{83}$ | 0.42 |
| 75 | $10^{150}$ | $1.6 \times 10^{133}$ | 1.16 |
| 100 | $10^{200}$ | $1.6 \times 10^{183}$ | 2.58 |
| 125 | $10^{250}$ | $1.6 \times 10^{233}$ | 4.97 |
| 150 | $10^{300}$ | $1.6 \times 10^{283}$ | 8.56 |

Assumed $2 \times 10^{9}$ FLOPs/sec. on a 2 GHz CPU.

M. Espig, W. Hackbusch, A. Litvinenko, H.G. Matthies, E. Zander, Iterative algorithms for the post-processing of high-dimensional data, Journal of Computational Physics 410, 109396, 2020

13 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-14-320.jpg)

![Definition of tensor of order d

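A tensor of order $d$ is a multidimensional array over a $d$-tuple index set $I = I_1 \times \cdots \times I_d$,

$$A = [a_{i_1 \ldots i_d} : i_\ell \in I_\ell] \in \mathbb{R}^{I}, \qquad I_\ell = \{1, \ldots, n_\ell\},\ \ell = 1, \ldots, d.$$

$A$ is an element of the linear space

$$V_n = \bigotimes_{\ell=1}^{d} V_\ell, \qquad V_\ell = \mathbb{R}^{I_\ell},$$

equipped with the Euclidean scalar product $\langle \cdot, \cdot \rangle : V_n \times V_n \to \mathbb{R}$, defined as

$$\langle A, B \rangle := \sum_{(i_1 \ldots i_d) \in I} a_{i_1 \ldots i_d}\, b_{i_1 \ldots i_d}, \qquad \text{for } A, B \in V_n.$$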

15 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-16-320.jpg)

![Tensor and Matrices

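Rank-1 tensor

$$A = u_1 \otimes u_2 \otimes \ldots \otimes u_d =: \bigotimes_{\mu=1}^{d} u_\mu, \qquad A_{i_1, \ldots, i_d} = (u_1)_{i_1} \cdot \ldots \cdot (u_d)_{i_d}.$$

Rank-1 tensor $A = u \otimes v$ is equivalent to rank-1 matrix $A = uv^T$, where $u \in \mathbb{R}^{n}$, $v \in \mathbb{R}^{m}$.

Rank-$k$ tensor $A = \sum_{i=1}^{k} u_i \otimes v_i$, matrix $A = \sum_{i=1}^{k} u_i v_i^T$.

Kronecker product $A \otimes B \in \mathbb{R}^{nm \times nm}$ is a block matrix whose $ij$-th block is $[A_{ij} B]$.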

18 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-19-320.jpg)
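The definitions on this slide map directly onto NumPy calls. The sketch below builds a rank-1 tensor of order 3, a rank-$k$ matrix as a sum of outer products, and checks the block structure of a Kronecker product; the sizes and random vectors are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)

# Rank-1 tensor of order 3: A[i, j, l] = u1[i] * u2[j] * u3[l].
u1, u2, u3 = rng.standard_normal(3), rng.standard_normal(4), rng.standard_normal(5)
T = np.einsum("i,j,l->ijl", u1, u2, u3)

# For d = 2 the rank-1 tensor u (x) v is the rank-1 matrix u v^T.
u, v = rng.standard_normal(4), rng.standard_normal(6)
rank1_matrix = np.outer(u, v)

# Rank-k matrix: sum of k outer products, equivalently Uk @ Vk.T.
k = 2
Uk, Vk = rng.standard_normal((4, k)), rng.standard_normal((6, k))
rank_k_matrix = sum(np.outer(Uk[:, i], Vk[:, i]) for i in range(k))

# Kronecker product: block (i, j) of kron(A, B) is A[i, j] * B.
n, m = 2, 3
A, B = rng.standard_normal((n, n)), rng.standard_normal((m, m))
K = np.kron(A, B)
block_01 = K[0:m, m:2 * m]

print(T.shape, np.linalg.matrix_rank(rank_k_matrix), K.shape)
```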

![Examples where you can apply low-rank tensor approx.

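$$\frac{e^{i\kappa \|x - y\|}}{\|x - y\|} \qquad \text{Helmholtz kernel} \qquad (5)$$

$$f(x) = \int_{[-a,a]^3} \frac{e^{-i\mu \|x - y\|}}{\|x - y\|}\, u(y)\, dy \qquad \text{Yukawa potential} \qquad (6)$$

Classical Green kernels, $x, y \in \mathbb{R}^{d}$:

$$\log(\|x - y\|), \qquad \frac{1}{\|x - y\|} \qquad (7)$$

Other multivariate functions:

$$\text{(a)}\ \frac{1}{x_1^2 + \ldots + x_d^2}, \qquad \text{(b)}\ \frac{1}{\sqrt{x_1^2 + \ldots + x_d^2}}, \qquad \text{(c)}\ \frac{e^{-\lambda \|x\|}}{\|x\|}. \qquad (8)$$

For (a) use ($\rho = x_1^2 + \ldots + x_d^2 \in [1, R]$, $R > 1$)

$$\frac{1}{\rho} = \int_{\mathbb{R}_+} e^{-\rho t}\, dt. \qquad (9)$$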

21 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-22-320.jpg)
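The integral representation (9) is what makes function (a) separable: any quadrature $\sum_j w_j e^{-\rho t_j}$ turns into $\sum_j w_j \prod_i e^{-t_j x_i^2}$, a sum of products of one-dimensional functions. The sketch below uses the substitution $t = e^{s}$ with a plain trapezoidal rule; that quadrature, the step size and the truncation interval are assumptions chosen for illustration, not the rule used in the lecture.

```python
import numpy as np

# Quadrature for 1/rho = int_0^inf exp(-rho t) dt after substituting t = exp(s).
h = 0.5
s = np.arange(-25.0, 4.0 + h, h)   # trapezoidal nodes in s
t = np.exp(s)                      # nodes in the original variable t
w = h * t                          # weights, since dt = exp(s) ds

def inv_rho_separable(x):
    """Approximate 1/(x_1^2 + ... + x_d^2) by sum_j w_j prod_i exp(-t_j x_i^2)."""
    x = np.asarray(x, dtype=float)
    factors = np.exp(-np.outer(t, x**2))       # shape (K, d): 1D factors
    return float(np.sum(w * np.prod(factors, axis=1)))

x = np.array([1.0, 2.0, 0.5, 3.0])             # rho = 14.25, inside [1, R]
exact = 1.0 / np.sum(x**2)
approx = inv_rho_separable(x)
print(f"terms: {len(t)}, relative error: {abs(approx - exact) / exact:.2e}")
```

Each quadrature node contributes one rank-1 term, so the number of nodes is an upper bound on the canonical rank of the approximation.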

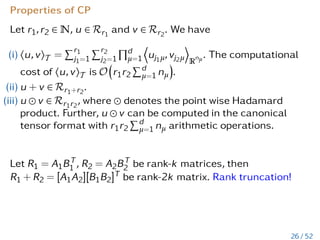

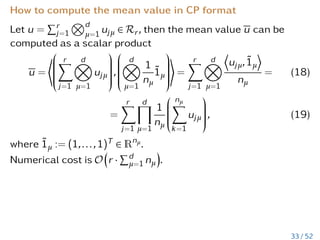

![v can be computed in the canonical tensor format with $r_1 r_2 \sum_{\mu=1}^{d} n_\mu$ arithmetic operations.

Let $R_1 = A_1 B_1^T$, $R_2 = A_2 B_2^T$ be rank-$k$ matrices; then $R_1 + R_2 = [A_1\ A_2][B_1\ B_2]^T$ is a matrix of rank at most $2k$. Rank truncation!

26 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-30-320.jpg)
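One operation whose cost is exactly of the order $r_1 r_2 \sum_{\mu} n_\mu$ is the Euclidean scalar product of two tensors given in the canonical format, since $\langle u, v \rangle = \sum_{j} \sum_{k} \prod_{\mu} \langle u_{j\mu}, v_{k\mu} \rangle$. Whether this is the quantity the slide refers to cannot be read off the transcript, so the sketch below is offered only as an illustration; the storage layout (one factor matrix per direction) and the function names are assumptions.

```python
import numpy as np

def cp_inner(U, V):
    """Scalar product of two canonical tensors.

    U, V are lists of d factor matrices of shapes (n_mu, r1) and (n_mu, r2).
    Each direction costs about r1 * r2 * n_mu operations.
    """
    G = np.ones((U[0].shape[1], V[0].shape[1]))
    for U_mu, V_mu in zip(U, V):
        G *= U_mu.T @ V_mu            # r1 x r2 Gram matrix of direction mu
    return float(G.sum())

def cp_to_full_3d(U):
    """Dense tensor of a 3-way canonical representation (for checking only)."""
    return np.einsum("ir,jr,kr->ijk", *U)

rng = np.random.default_rng(4)
dims, r1, r2 = (4, 5, 6), 2, 3
U = [rng.standard_normal((n_mu, r1)) for n_mu in dims]
V = [rng.standard_normal((n_mu, r2)) for n_mu in dims]

fast = cp_inner(U, V)
dense = float(np.sum(cp_to_full_3d(U) * cp_to_full_3d(V)))
print(np.isclose(fast, dense))
```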

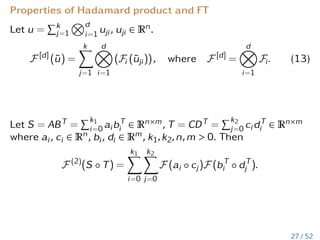

![Properties of Hadamard product and FT

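Let $\tilde{u} = \sum_{j=1}^{k} \bigotimes_{i=1}^{d} \tilde{u}_{ji}$, $\tilde{u}_{ji} \in \mathbb{R}^{n}$.

$$\mathcal{F}^{[d]}(\tilde{u}) = \sum_{j=1}^{k} \bigotimes_{i=1}^{d} \big(\mathcal{F}_i(\tilde{u}_{ji})\big), \qquad \text{where } \mathcal{F}^{[d]} = \bigotimes_{i=1}^{d} \mathcal{F}_i. \qquad (13)$$

Let $S = AB^T = \sum_{i=0}^{k_1} a_i b_i^T \in \mathbb{R}^{n \times m}$, $T = CD^T = \sum_{j=0}^{k_2} c_j d_j^T \in \mathbb{R}^{n \times m}$, where $a_i, c_j \in \mathbb{R}^{n}$, $b_i, d_j \in \mathbb{R}^{m}$, $k_1, k_2, n, m > 0$. Then

$$\mathcal{F}^{(2)}(S \circ T) = \sum_{i=0}^{k_1} \sum_{j=0}^{k_2} \mathcal{F}(a_i \circ c_j)\, \mathcal{F}(b_i^T \circ d_j^T).$$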

27 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-31-320.jpg)
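The last identity on this slide can be checked numerically with NumPy's FFT routines: the 2D transform of the Hadamard product of two low-rank matrices equals a sum of outer products of 1D transforms. The sizes and random factors below are illustrative, and the columns are indexed from 0 to $k_1 - 1$ and $k_2 - 1$ rather than with the summation limits printed on the slide.

```python
import numpy as np

rng = np.random.default_rng(2)
n, m, k1, k2 = 8, 6, 2, 3
A, B = rng.standard_normal((n, k1)), rng.standard_normal((m, k1))
C, D = rng.standard_normal((n, k2)), rng.standard_normal((m, k2))

S, T = A @ B.T, C @ D.T                    # low-rank matrices S and T

# Dense reference: 2D FFT of the Hadamard (elementwise) product.
dense = np.fft.fft2(S * T)

# Low-rank route: only 1D FFTs of elementwise products of the factor columns.
lowrank = sum(
    np.outer(np.fft.fft(A[:, i] * C[:, j]), np.fft.fft(B[:, i] * D[:, j]))
    for i in range(k1)
    for j in range(k2)
)

print(np.allclose(dense, lowrank))
```

The Hadamard product multiplies the ranks (here up to $k_1 k_2$ terms), so in practice a rank truncation would follow.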

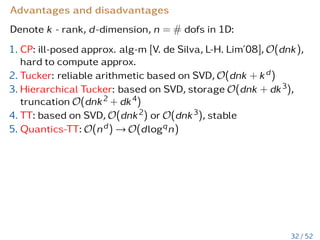

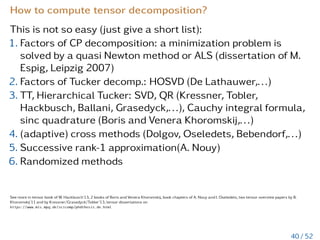

![Advantages and disadvantages

Denote $k$ = rank, $d$ = dimension, $n$ = # dofs in 1D:

1. CP: ill-posed approx. alg-m [V. de Silva, L-H. Lim'08], $O(dnk)$, hard to compute approx.

2. Tucker: reliable arithmetic based on SVD, $O(dnk + k^d)$

3. Hierarchical Tucker: based on SVD, storage $O(dnk + dk^3)$, truncation $O(dnk^2 + dk^4)$

4. TT: based on SVD, $O(dnk^2)$ or $O(dnk^3)$, stable

5. Quantics-TT: $O(n^d) \to O(d \log_q n)$

32 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-39-320.jpg)
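To get a feeling for these orders of magnitude, the leading terms can be evaluated for concrete values. The sketch below drops all constants, reads $O(dnk^2)$ as the TT storage (the slide does not say which of the two TT costs is storage), and uses the example values $n = 100$, $d = 10$, $k = 10$; the function name is hypothetical.

```python
def storage_estimates(n, d, k):
    """Leading-order number of stored values per format, constants ignored."""
    return {
        "full": n**d,
        "CP": d * n * k,
        "Tucker": d * n * k + k**d,
        "HT": d * n * k + d * k**3,
        "TT": d * n * k**2,
    }

counts = storage_estimates(n=100, d=10, k=10)
for name, count in counts.items():
    print(f"{name:>6}: {count:.3e}")
```

The Tucker core term $k^d$ still grows exponentially in $d$, which is the motivation for the hierarchical and TT formats.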

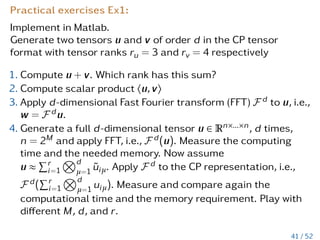

![Practical exercise Ex2:

Verify numerically that for the Laplace operator, discretised with finite differences on a 3d axis-parallel grid on $[0,1]^3$ with step size $h$, the following identity holds:

$$\Delta_3 = \Delta_1 \otimes I \otimes I + I \otimes \Delta_1 \otimes I + I \otimes I \otimes \Delta_1,$$

where $\Delta_1$ is the discretised Laplace operator in 1d.

Hint:

1. You may use this Matlab code https://www.mathworks.com/matlabcentral/fileexchange/27279-laplacian-in-1d-2d-or-3d to generate $\Delta_3$

2. Use Matlab operators kron() and eye() to compute $\Delta_1 \otimes I \otimes I$

42 / 52](https://image.slidesharecdn.com/litvinenkolecturelowranktensors2021a-210617114157/85/Low-rank-tensor-approximation-Introduction-49-320.jpg)
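A possible way to approach the exercise without Matlab is sketched below in NumPy, where `np.kron` and `np.eye` play the role of `kron()` and `eye()`. The 3d operator is assembled directly from the 7-point stencil and compared with the Kronecker sum. Homogeneous Dirichlet boundary conditions, the sign convention (diagonal $-2/h^2$ in 1d), the row-major ordering of grid points and the grid size are all assumptions; the linked Matlab routine may use different conventions.

```python
import numpy as np

def laplace_1d(n, h):
    """1d finite-difference Laplacian tridiag(1, -2, 1) / h^2 (Dirichlet)."""
    off = np.ones(n - 1)
    return (np.diag(-2.0 * np.ones(n)) + np.diag(off, 1) + np.diag(off, -1)) / h**2

def laplace_3d_stencil(n, h):
    """3d Laplacian assembled entry by entry from the 7-point stencil."""
    L = np.zeros((n**3, n**3))
    idx = lambda i, j, k: (i * n + j) * n + k        # row-major ordering
    shifts = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    for i in range(n):
        for j in range(n):
            for k in range(n):
                row = idx(i, j, k)
                L[row, row] = -6.0 / h**2
                for di, dj, dk in shifts:
                    ii, jj, kk = i + di, j + dj, k + dk
                    if 0 <= ii < n and 0 <= jj < n and 0 <= kk < n:
                        L[row, idx(ii, jj, kk)] = 1.0 / h**2
    return L

n = 4
h = 1.0 / (n + 1)
D1, I = laplace_1d(n, h), np.eye(n)

D3_kron = (np.kron(np.kron(D1, I), I)
           + np.kron(np.kron(I, D1), I)
           + np.kron(np.kron(I, I), D1))
D3_direct = laplace_3d_stencil(n, h)

print(np.allclose(D3_direct, D3_kron))
```

The Kronecker form needs only the $n \times n$ matrix $\Delta_1$, whereas the assembled operator has $n^3 \times n^3$ entries.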