- The human attributes that matter most for human-computer interaction are vision, hearing, touch, movement, and memory.

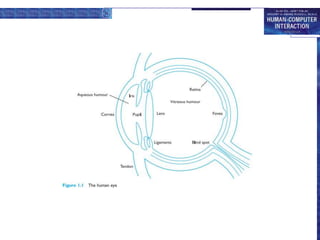

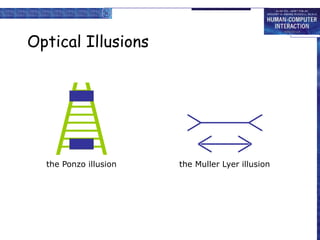

- Vision is the primary sense, but it is limited in visual acuity, color perception, and the ability to interpret signals. Perception happens in two stages: the eye physically receives the image, and the brain then interprets it.

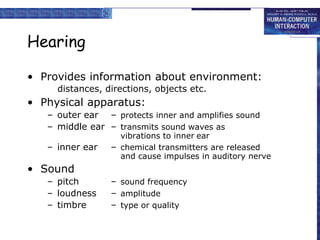

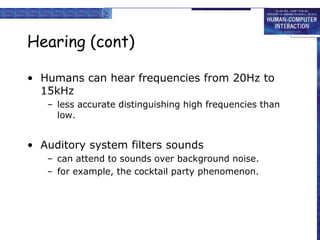

- Hearing provides information about the environment, but it is limited in its ability to distinguish high frequencies. The inner ear converts sound vibrations into nerve impulses that are passed to the brain.

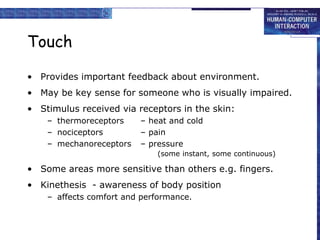

- Touch provides feedback through receptors in the skin. Some areas, such as the fingertips, are more sensitive than others.

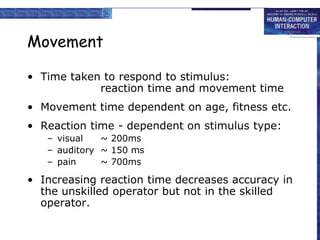

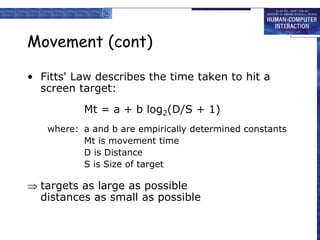

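- Movement abilities such as reaction time and accuracy are important considerations in interface design. Fitts' law predicts the time needed to hit a target from the distance to the target and the target's size: distant or small targets take longer to hit than near or large ones.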

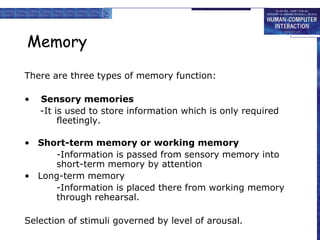

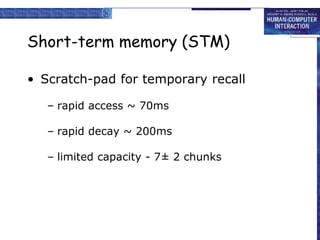

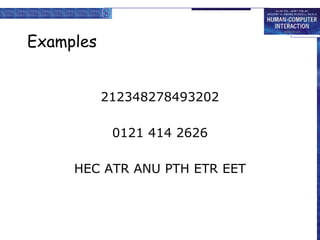
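The Fitts' law relationship mentioned above can be sketched in a few lines of Python. The notes do not give a formula, so this sketch assumes the commonly used Shannon formulation, `MT = a + b * log2(D / S + 1)`, where `D` is the distance to the target and `S` is its size. The function name `fitts_movement_time` and the default values of the constants `a` and `b` are hypothetical placeholders; in practice the constants are fitted from measurements for a particular device and user group.

```python
import math


def fitts_movement_time(distance, size, a=0.1, b=0.1):
    """Estimate the time (in seconds) to hit a target.

    Assumes the Shannon formulation of Fitts' law:
        MT = a + b * log2(distance / size + 1)

    distance and size must use the same unit (e.g. pixels).
    a and b are empirically fitted constants; the defaults here
    are placeholders for illustration, not measured values.
    """
    if distance < 0:
        raise ValueError("distance must be non-negative")
    if size <= 0:
        raise ValueError("size must be positive")

    # The log term is often called the index of difficulty (in bits).
    index_of_difficulty = math.log2(distance / size + 1)
    return a + b * index_of_difficulty


# Compare a small, far target with a large, near one.
small_far = fitts_movement_time(distance=800, size=16)
large_near = fitts_movement_time(distance=200, size=64)
print(f"small, far target:  {small_far:.3f} s")
print(f"large, near target: {large_near:.3f} s")
```

Under these assumptions the large, near target always yields the shorter predicted time, which is the usual argument for making frequently used controls big and placing them close to where the pointer already is.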

- Memory includes sensory, short-term, and long-