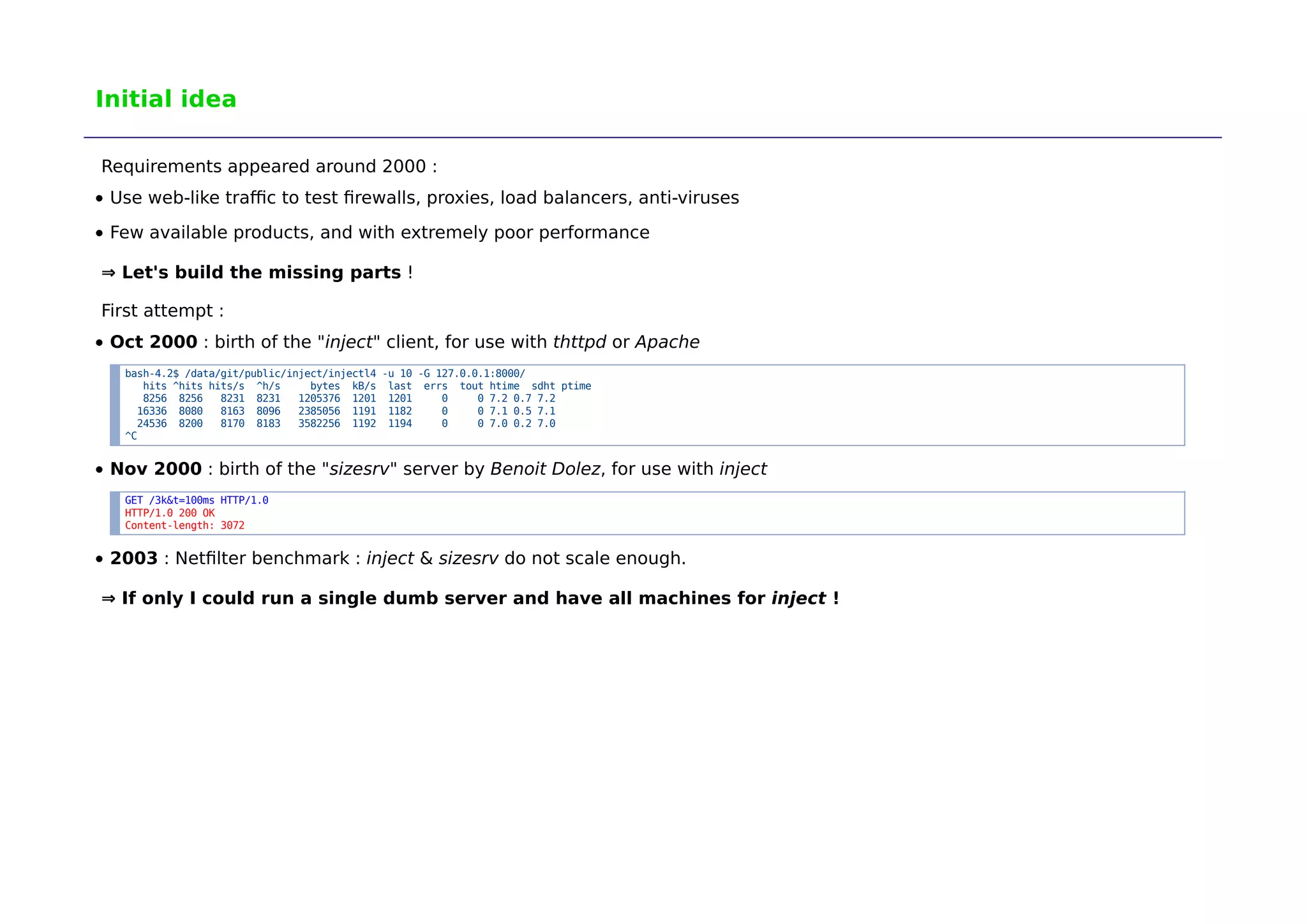

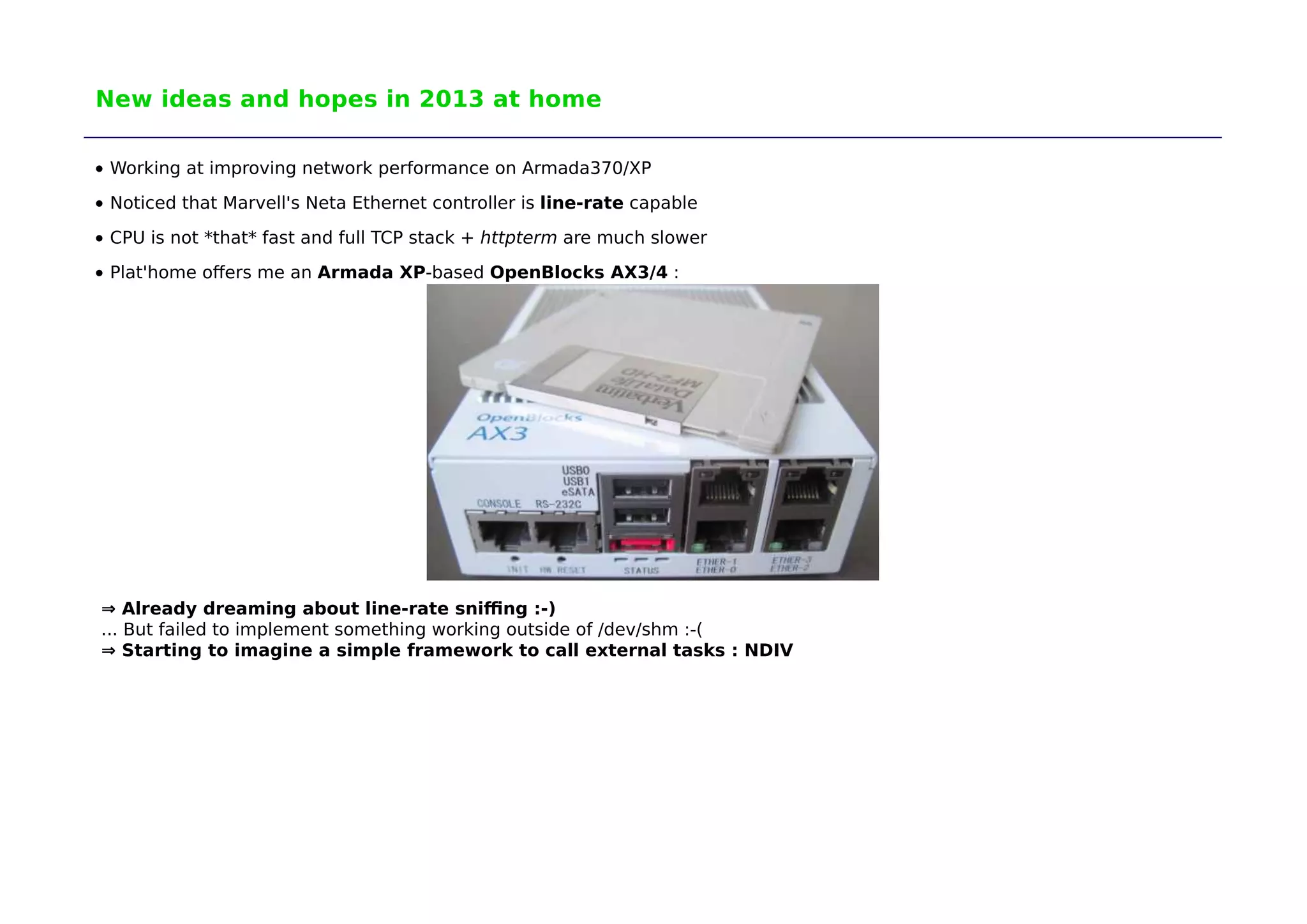

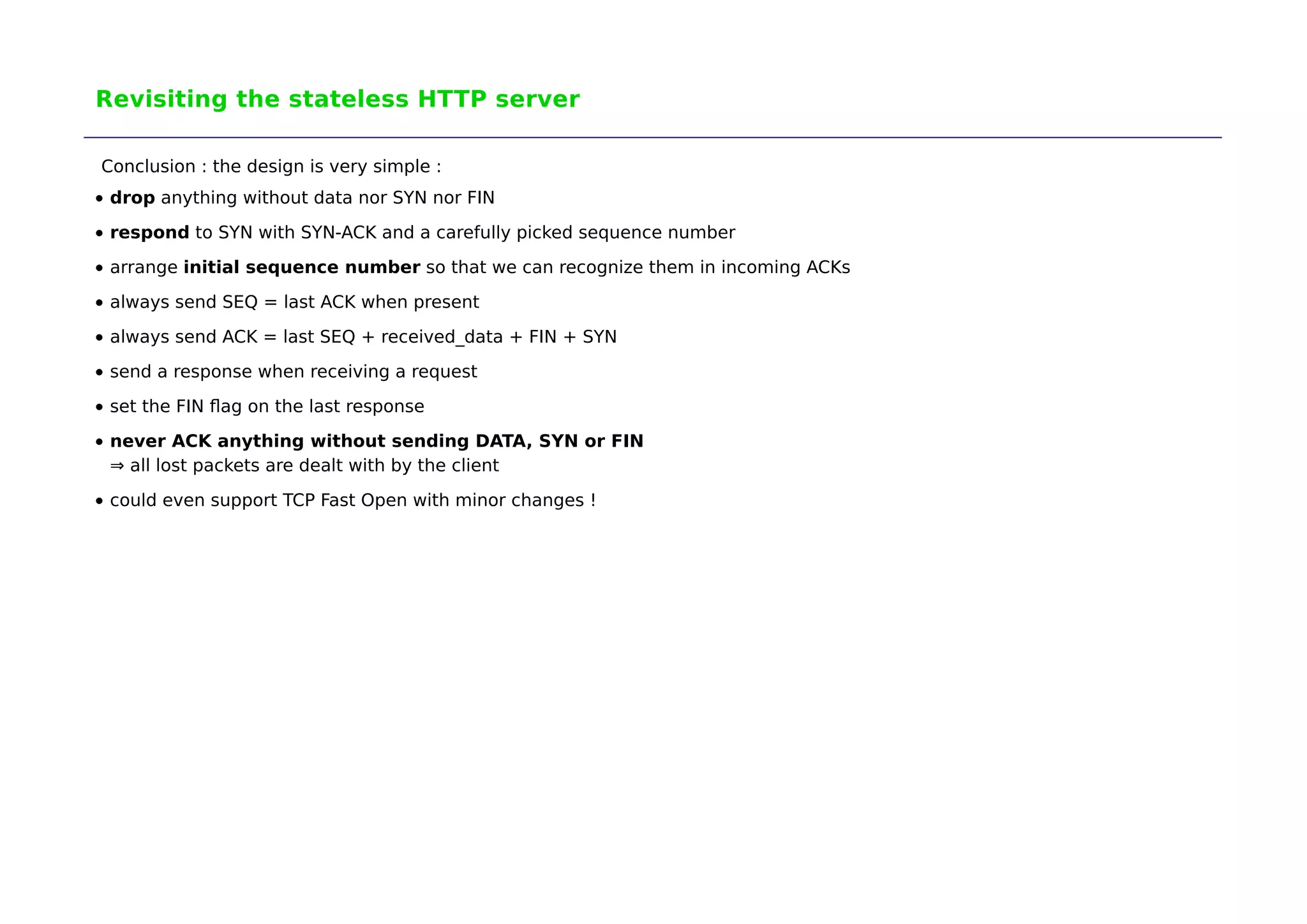

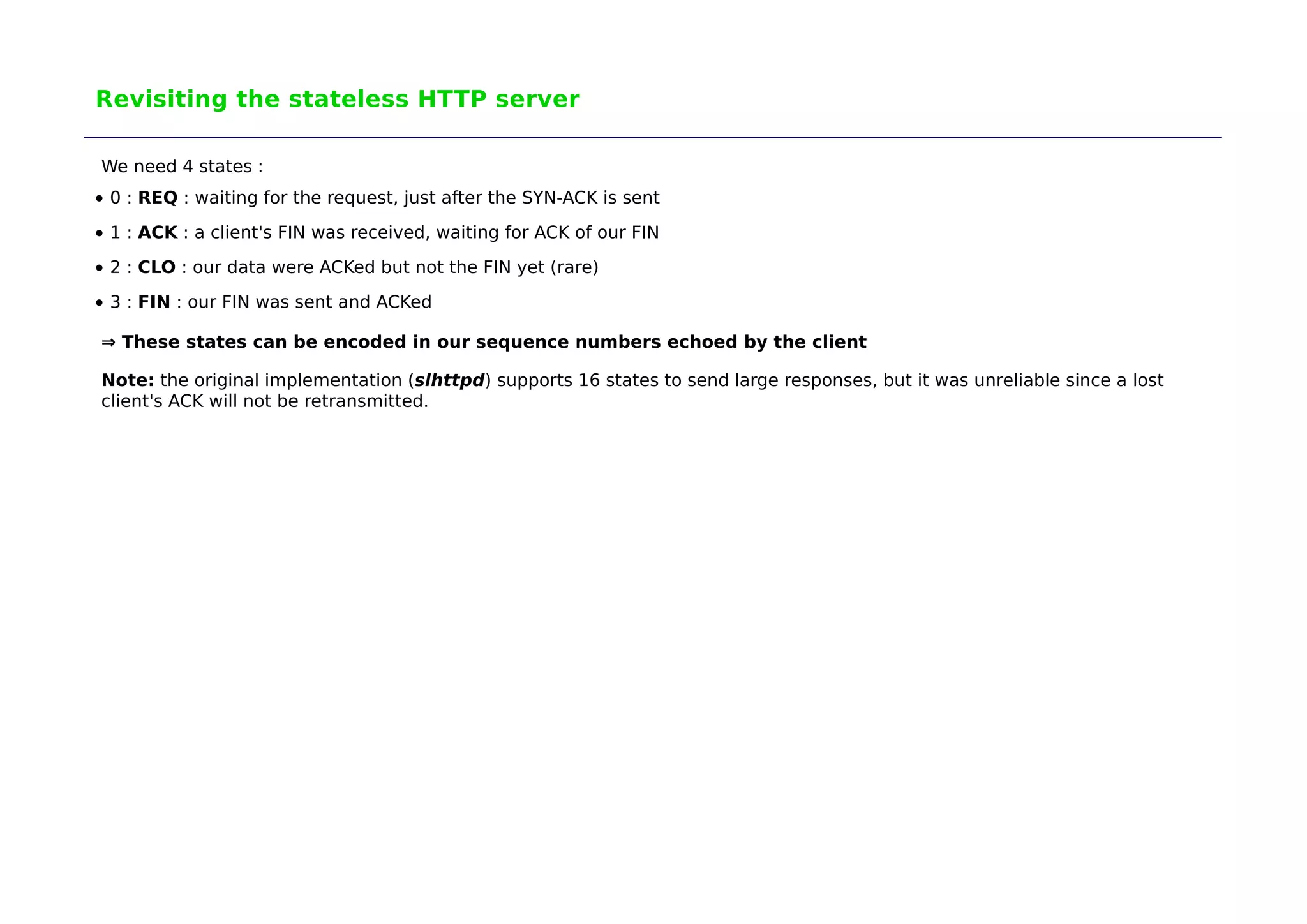

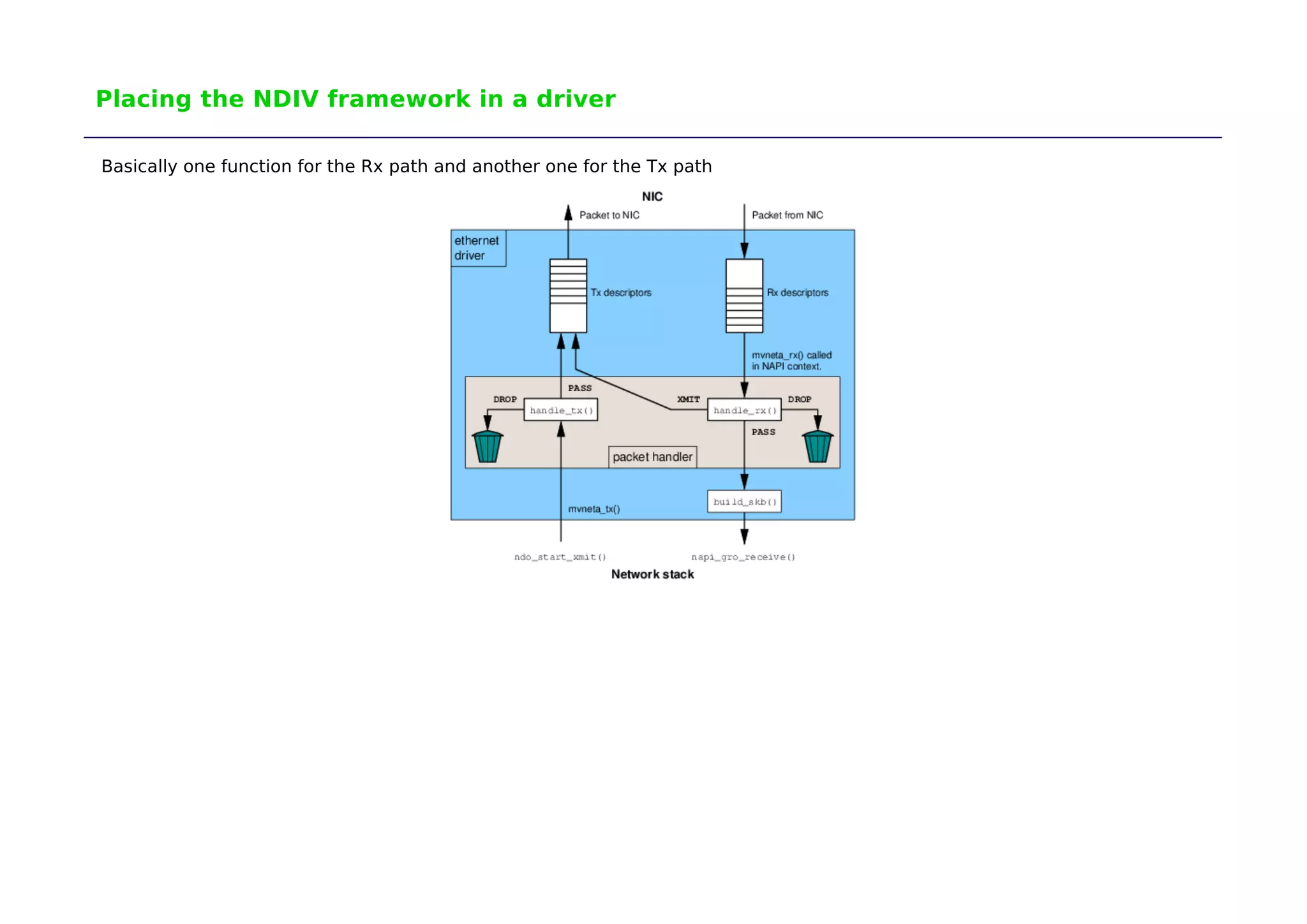

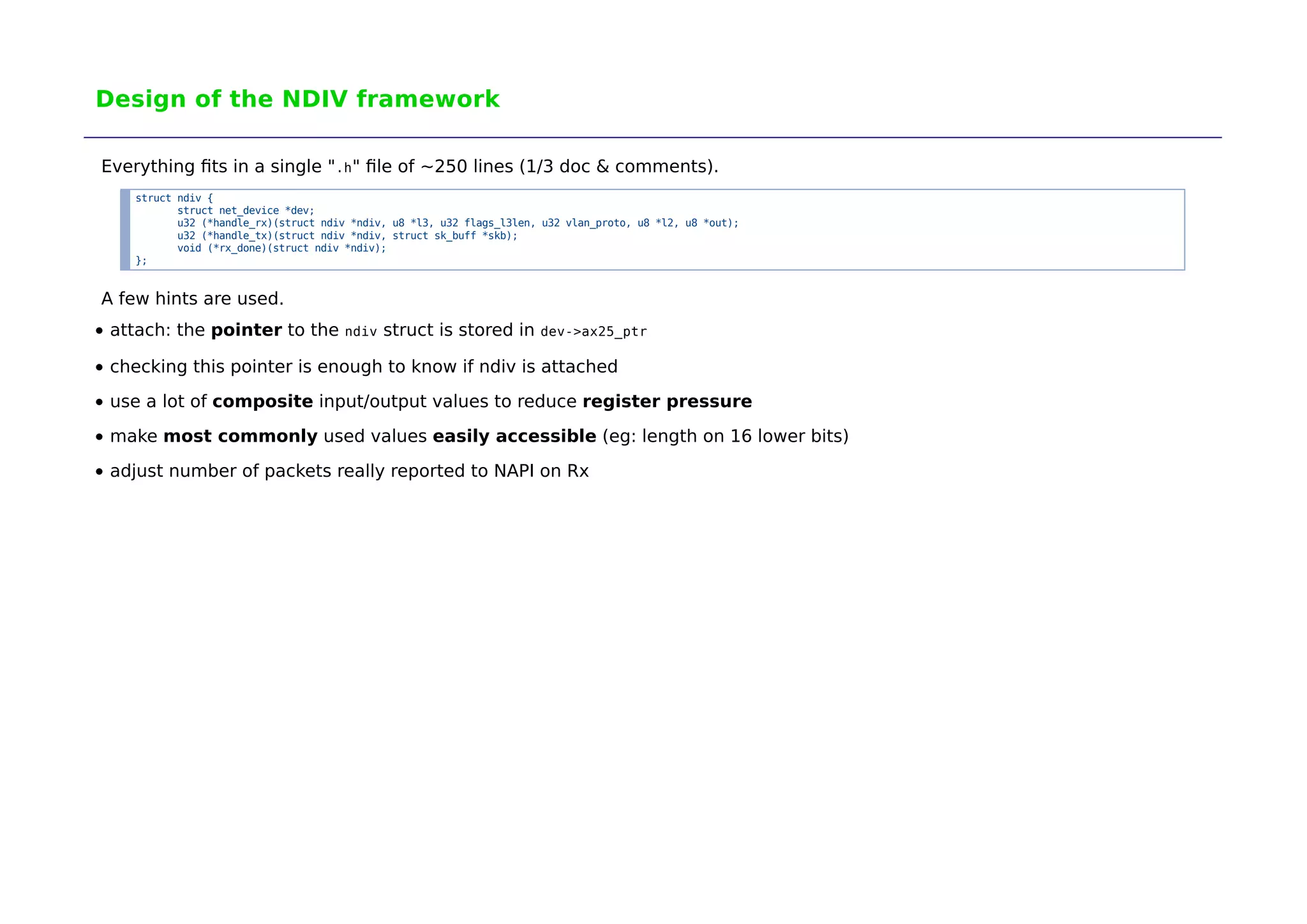

The document discusses the development of 'ndiv', a low-overhead network traffic diverter intended to improve performance when testing firewalls and servers. It outlines the history of related tools, the design challenges, and the implementation of the framework, which processes packets rapidly with minimal overhead. Potential applications include network testing, packet capture, and traffic management, and further development is expected.