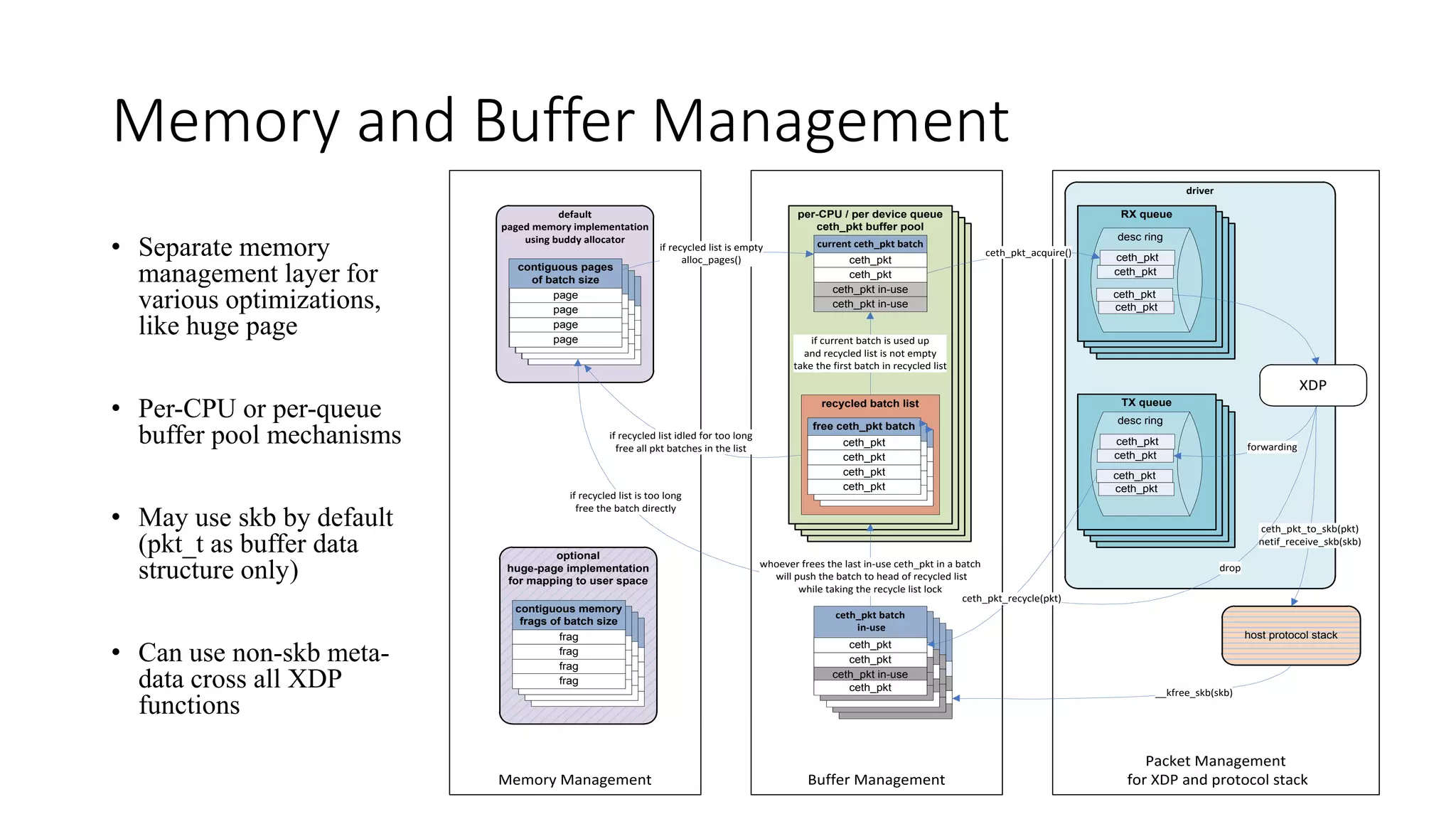

This document discusses CETH (Common Ethernet Driver Framework), which aims to improve kernel networking performance for virtualization. CETH simplifies NIC drivers by consolidating their common functions, and it supports a range of NICs and accelerators. Its features include efficient memory and buffer management, flexible TX/RX scheduling, and a customizable metadata structure. CETH is being simplified to work with XDP for higher-performance network I/O processing in the kernel. Next steps include further optimization and measuring the performance gains of CETH with XDP and in virtualized environments.

![CETH pkt_t Structure](https://image.slidesharecdn.com/ceth5overview1-160801192921/75/CETH-for-XDP-Linux-Meetup-Santa-Clara-July-2016-9-2048.jpg)

CETH pkt_t Structure

- Use one page for one packet
- Customizable metadata (for XDP)
- Headroom for overlay
- SKB data structure ready
- Easy conversion between pkt_t and sk_buff (with cost)
- Reuse skb_shared_info for fragments

Figure labels: one 4K page (64*64) holding ceth_pkt (list head, handle, data_offset, signature, metadata), head room, data, skb_shared_info (frags[17]), and sk_buff_fclones (sk_buff, sk_buff2, fclone_ref=2), with head, data, and end pointers into the ceth_pkt_buffer. Region sizes shown: 128 (64x2), 128 (64x2), 2880 (64x45), 320 (64x5), and 232x2+8 (64x8).
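The one-page-per-packet layout on this slide can be sketched as plain offset arithmetic. This is a minimal userspace sketch, not CETH code: the region sizes are the ones printed on the slide, but the order of the regions, the mapping of each size to a region, and every identifier (`CETH_*`, `struct ceth_layout`, `ceth_layout_compute`) are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>

#define CETH_CACHE_LINE 64u
#define CETH_PAGE_SIZE  (64u * CETH_CACHE_LINE)      /* "4K (64*64)" on the slide */

/* Region sizes in bytes, as printed on the slide. Which size belongs to
 * which region is an assumption. */
#define CETH_PKT_HDR_SIZE  (2u * CETH_CACHE_LINE)    /* 128: assumed ceth_pkt + metadata */
#define CETH_HEADROOM_SIZE (2u * CETH_CACHE_LINE)    /* 128: head room for overlay headers */
#define CETH_DATA_SIZE     (45u * CETH_CACHE_LINE)   /* 2880: packet data */
#define CETH_SHINFO_SIZE   (5u * CETH_CACHE_LINE)    /* 320: skb_shared_info, frags[17] */
#define CETH_FCLONES_SIZE  (8u * CETH_CACHE_LINE)    /* 512: "232x2+8" in 8 cache lines */

/* Hypothetical byte offsets of each region from the start of the page. */
struct ceth_layout {
    size_t head;     /* start of head room (like skb->head) */
    size_t data;     /* start of packet data (like skb->data) */
    size_t end;      /* end of data, where skb_shared_info begins (like skb->end) */
    size_t fclones;  /* start of the sk_buff_fclones area */
    size_t used;     /* total bytes covered by the regions above */
};

/* The listed regions must fit in a single page. */
static_assert(CETH_PKT_HDR_SIZE + CETH_HEADROOM_SIZE + CETH_DATA_SIZE +
              CETH_SHINFO_SIZE + CETH_FCLONES_SIZE <= CETH_PAGE_SIZE,
              "regions exceed one page");

/* Lay the regions out back to back in the assumed order. */
struct ceth_layout ceth_layout_compute(void)
{
    struct ceth_layout l;

    l.head    = CETH_PKT_HDR_SIZE;
    l.data    = l.head + CETH_HEADROOM_SIZE;
    l.end     = l.data + CETH_DATA_SIZE;
    l.fclones = l.end + CETH_SHINFO_SIZE;
    l.used    = l.fclones + CETH_FCLONES_SIZE;
    return l;
}
```

Under these assumptions the five regions cover 3968 bytes of the 4096-byte page. The remaining 128 bytes are not attributed to any region in the text recoverable from the slide, so the sketch leaves them unassigned. Placing `skb_shared_info` directly at `end` mirrors how the kernel lays out a regular `sk_buff` data buffer, which is presumably what makes the pkt_t to sk_buff conversion cheap.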