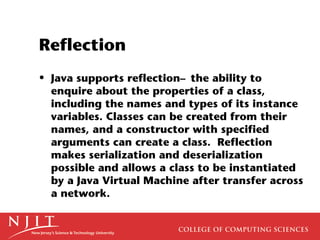

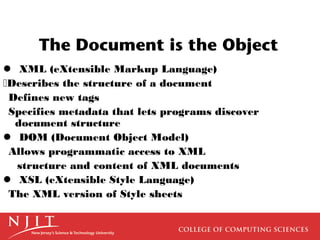

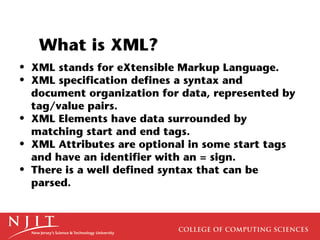

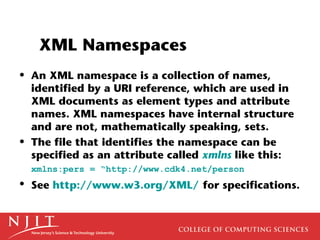

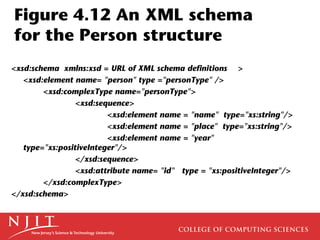

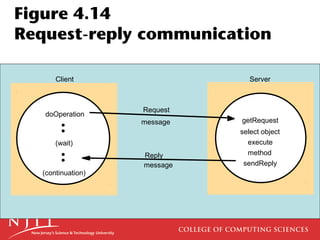

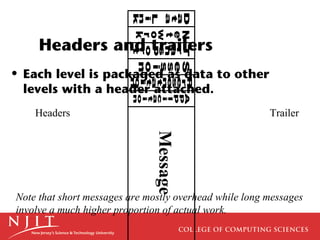

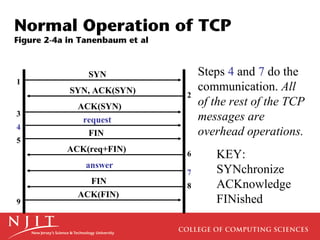

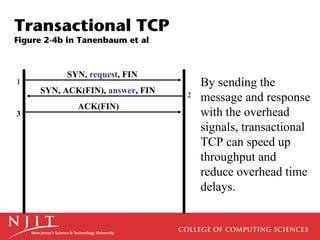

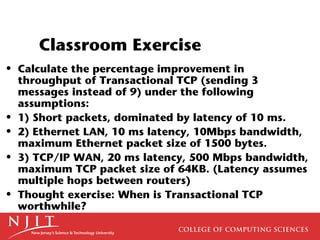

The document discusses interprocess communication (IPC) in distributed systems, which rely on well-defined communication protocols, typically message passing over a network. It also covers communication overhead, formats for storing and transferring data between systems (such as XML), and Java object serialization for transferring complex data. Effective communication protocols aim to minimize overhead while maximizing throughput.

![Ethernet Jumbo Frames](https://image.slidesharecdn.com/interprocesscommunication-120808050511-phpapp01/85/Interprocess-communication-10-320.jpg)

Ethernet Jumbo Frames

• Ethernet jumbo frames of 9 KB are possible if supported end to end. A 9 KB Ethernet frame can hold an 8 KB TCP/IP datagram (the NFS standard size) plus packet overhead. Ethernet cannot use 64 KB packets because it uses a CRC for error detection, and the CRC is effective only up to about 12 KB, which is hard to change. [P. Dykstra]
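The overhead argument behind jumbo frames can be checked with a little arithmetic. The sketch below is illustrative only and not taken from the slides: it assumes IPv4 and TCP headers of 20 bytes each with no options, no VLAN tag, the usual Ethernet framing costs (preamble, header, FCS, interframe gap), and MTU values of 1500 and 9000 bytes as typical standard and jumbo settings. The function names are hypothetical.

```python
import math

# Per-frame Ethernet costs in bytes (assumed: no VLAN tag).
PREAMBLE_SFD = 8      # preamble plus start-of-frame delimiter
ETH_HEADER = 14       # destination MAC, source MAC, EtherType
ETH_FCS = 4           # the CRC-32 frame check sequence
INTERFRAME_GAP = 12   # mandatory idle time, counted as bytes on the wire

# Per-packet protocol headers in bytes (assumed: IPv4, TCP without options).
IP_HEADER = 20
TCP_HEADER = 20


def tcp_payload(mtu: int) -> int:
    """Application bytes carried by one full-size frame."""
    return mtu - IP_HEADER - TCP_HEADER


def wire_bytes(mtu: int) -> int:
    """Bytes of link time consumed by one full-size frame."""
    return PREAMBLE_SFD + ETH_HEADER + mtu + ETH_FCS + INTERFRAME_GAP


def efficiency(mtu: int) -> float:
    """Fraction of link capacity that carries application data."""
    return tcp_payload(mtu) / wire_bytes(mtu)


def frames_needed(data_bytes: int, mtu: int) -> int:
    """Number of frames required to carry data_bytes of application data."""
    return math.ceil(data_bytes / tcp_payload(mtu))


if __name__ == "__main__":
    nfs_block = 8 * 1024  # the 8 KB block size mentioned on the slide
    for mtu in (1500, 9000):
        print(
            f"MTU {mtu}: efficiency {efficiency(mtu):.1%}, "
            f"frames for 8 KB: {frames_needed(nfs_block, mtu)}"
        )
```

Under these assumptions a standard frame is about 94.9% efficient and needs six frames for an 8 KB block, while a 9000-byte jumbo frame is about 99.1% efficient and carries the block in one frame. The gain in raw efficiency is modest; the larger practical benefit is fewer frames, and so fewer per-packet processing steps, for each block transferred.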