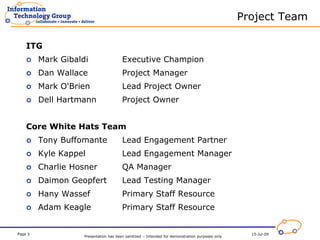

The kick-off meeting for the 2008 Security Vulnerability Assessment (SVA) project introduced the team members and outlined the project approach, which focuses on critical systems and risk-based target selection. The project has three phases: planning/scoping, assessment/execution, and data analysis/reporting, with key milestones scheduled in August and October 2008. The project relies on the White Hats team to perform the assessments and emphasizes collaboration, but the White Hats team is excluded from remediation tracking.