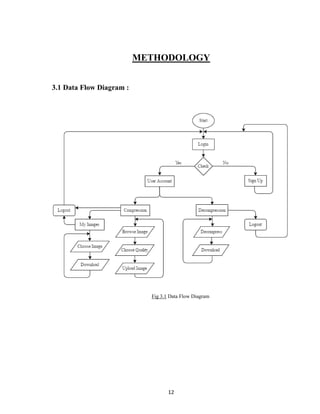

The document presents an integrated project report on an image compression and decompression system, aimed at fulfilling academic requirements for a computer science engineering course. It discusses the significance of image compression in reducing data redundancy for efficient storage and transmission, along with various techniques and their advantages and disadvantages. The project was conducted by students under the guidance of a faculty member and includes methodologies, literature surveys, and future scope for the system.

![29

Upload Image

We convert a BufferedImage to a byte array in order to send it to the server. We use the Java class ByteArrayOutputStream, which is found in the java.io package. Its syntax is given below:

ByteArrayOutputStream baos = new ByteArrayOutputStream();

ImageIO.write(image, "jpg", baos);

To obtain the encoded image as a byte array, we use the toByteArray() method of the ByteArrayOutputStream class. Its syntax is given below:

byte[] bytes = baos.toByteArray();

Fig 5.2.2 Image Upload Syntax

Download Image

To get the image from the database and download it to the system, the following functions are executed:

Fig 5.2.3 Image Download Syntax](https://image.slidesharecdn.com/finalreport-160607181120/85/IMAGE-COMPRESSION-AND-DECOMPRESSION-SYSTEM-38-320.jpg)
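The upload steps described above can be put together as a small runnable sketch. The class name `ImageBytes` and the helper methods `toBytes` and `fromBytes` are hypothetical names introduced for illustration; they are not taken from the report. The download functions shown in Fig 5.2.3 are not reproduced in the text, so `fromBytes` only illustrates one plausible decoding step (turning bytes fetched from the database back into a BufferedImage) and is an assumption, not the project's actual code.

```java
import java.awt.image.BufferedImage;
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import javax.imageio.ImageIO;

// Hypothetical helper class; the names are illustrative, not from the report.
class ImageBytes {

    // Encodes the image in the given format (e.g. "jpg" or "png") into a byte array.
    static byte[] toBytes(BufferedImage image, String format) throws IOException {
        ByteArrayOutputStream baos = new ByteArrayOutputStream();
        // ImageIO.write returns false when no writer supports this image/format pair.
        if (!ImageIO.write(image, format, baos)) {
            throw new IOException("No ImageIO writer available for format: " + format);
        }
        return baos.toByteArray();
    }

    // Assumed inverse step for the download path: decodes bytes back into an image.
    static BufferedImage fromBytes(byte[] bytes) throws IOException {
        BufferedImage image = ImageIO.read(new ByteArrayInputStream(bytes));
        // ImageIO.read returns null when no registered reader recognizes the data.
        if (image == null) {
            throw new IOException("Unrecognized image data");
        }
        return image;
    }

    public static void main(String[] args) throws IOException {
        // A blank RGB image stands in for the user's uploaded picture.
        BufferedImage original = new BufferedImage(64, 48, BufferedImage.TYPE_INT_RGB);
        byte[] bytes = toBytes(original, "jpg");
        BufferedImage restored = fromBytes(bytes);
        System.out.println(bytes.length + " bytes, "
                + restored.getWidth() + "x" + restored.getHeight());
    }
}
```

Checking the boolean returned by `ImageIO.write` matters in practice: writing an image that has an alpha channel as "jpg" may fail to find a suitable writer, in which case the byte array would otherwise be silently empty.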