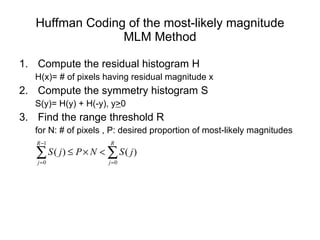

This document gives an overview of Huffman coding, a lossless data compression algorithm. It opens with a simple example that illustrates the basic idea: assign shorter codes to more frequent symbols. It then defines key terms such as entropy and describes the Huffman coding algorithm, which builds a prefix code from the symbol frequencies in the data by repeatedly merging the two least frequent nodes; the result is optimal among codes that encode one symbol at a time. The document also discusses how Huffman coding can be applied to image compression by first predicting pixel values and then encoding the residuals. It closes with some disadvantages of Huffman coding and variations such as adaptive Huffman coding.
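The construction summarized above can be sketched in a few lines of Python. This is a minimal illustration rather than the code from the slides: the function name `huffman_code`, the use of `heapq` as the priority queue, and the sample string are all assumptions made for the example.

```python
import heapq
from collections import Counter


def huffman_code(message):
    """Return a dict mapping each symbol of message to its bit string."""
    freq = Counter(message)
    if len(freq) == 1:
        # Degenerate case: a single distinct symbol still needs one bit.
        return {next(iter(freq)): "0"}
    # Heap entries are (weight, tie_breaker, {symbol: code_so_far}).
    # The unique tie_breaker keeps Python from ever comparing the dicts.
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent nodes into one.
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1
    return heap[0][2]


text = "abracadabra"  # hypothetical sample input
codes = huffman_code(text)
total_bits = sum(len(codes[ch]) for ch in text)
print(codes, total_bits)
```

The exact bit patterns depend on how ties are broken, but the total encoded length does not; for `abracadabra` it is 23 bits.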

![A simple example. Suppose we have a message built from 5 distinct symbols, e.g. [►♣♣♠☻►♣☼►☻]. How can we code this message using 0/1 so that the coded message has minimum length (for transmission or storage)? 5 distinct symbols need at least 3 bits each. For a simple fixed-length encoding, the length of the code is 10*3 = 30 bits.](https://image.slidesharecdn.com/huffmancoding-100208222005-phpapp01/85/Huffman-Coding-3-320.jpg)
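The arithmetic on this slide can be checked with a short script. The sketch below recomputes the fixed-length cost and, as a point of comparison, the entropy of the message from its empirical symbol frequencies; the variable names are illustrative and not taken from the slides.

```python
import math
from collections import Counter

# The ten-symbol message from the slide, drawn from five distinct symbols.
message = "►♣♣♠☻►♣☼►☻"
counts = Counter(message)
n = len(message)

# Fixed-length code: every symbol gets ceil(log2(alphabet size)) bits.
bits_per_symbol = math.ceil(math.log2(len(counts)))
fixed_bits = n * bits_per_symbol

# Shannon entropy in bits per symbol, from the empirical frequencies.
entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())

print(bits_per_symbol, fixed_bits)  # 3 30
print(round(entropy, 3))            # 2.171
```

At about 2.171 bits per symbol, the entropy bound for the whole message is roughly 21.7 bits, well under the 30 bits of the fixed-length code. A Huffman code built from these frequencies needs 22 bits.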

![Variations. n-ary Huffman coding: uses {0, 1, ..., n-1} (not just {0, 1}). Adaptive Huffman coding: calculates frequencies dynamically based on recent actual frequencies. Huffman template algorithm: generalizes probabilities to any weight and the combining method (addition) to any function; can solve other minimization problems, e.g. minimizing max [w_i + length(c_i)].](https://image.slidesharecdn.com/huffmancoding-100208222005-phpapp01/85/Huffman-Coding-18-320.jpg)
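As an illustration of the first variation, the following sketch builds an n-ary Huffman code by merging the n lightest nodes at each step. It is an assumption-laden example, not the slides' implementation: the function name `nary_huffman` is hypothetical, and zero-weight dummy nodes are added so that the number of nodes minus one is a multiple of n - 1, which lets every merge take exactly n nodes.

```python
import heapq


def nary_huffman(freq, n=3):
    """Map each symbol in freq (symbol -> weight) to a string of digits 0..n-1."""
    if not 2 <= n <= 10:
        raise ValueError("this sketch writes digits as single characters")
    if not freq:
        return {}
    if len(freq) == 1:
        # Degenerate case: give the only symbol a one-digit code.
        return {next(iter(freq)): "0"}
    # Heap entries are (weight, tie_breaker, {symbol: code_so_far}).
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(freq.items())]
    # Pad with zero-weight dummies (empty dicts) until the node count fits.
    while (len(heap) - 1) % (n - 1) != 0:
        heap.append((0, len(heap), {}))
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        merged, total = {}, 0
        # Pop the n lightest nodes and prefix each with a distinct digit.
        for digit in range(n):
            w, _, codes = heapq.heappop(heap)
            total += w
            merged.update({s: str(digit) + c for s, c in codes.items()})
        heapq.heappush(heap, (total, next_id, merged))
        next_id += 1
    return heap[0][2]


# Hypothetical weights: five symbols with counts 3, 3, 2, 1, 1.
freq = {"a": 3, "b": 3, "c": 2, "d": 1, "e": 1}
codes = nary_huffman(freq, n=3)
print(codes, sum(freq[s] * len(codes[s]) for s in freq))
```

With n=3 these weights encode in 14 ternary digits; with n=2 the same function reduces to ordinary binary Huffman coding and gives 22 bits.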