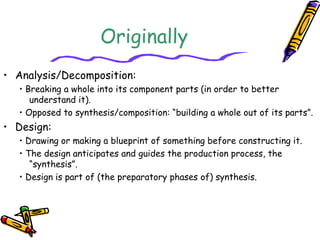

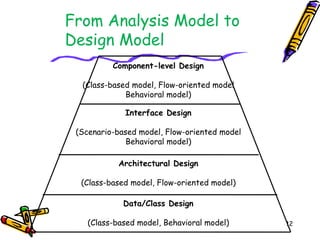

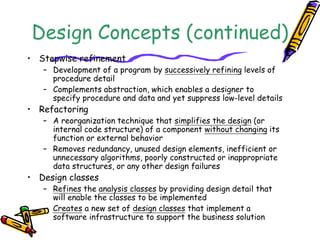

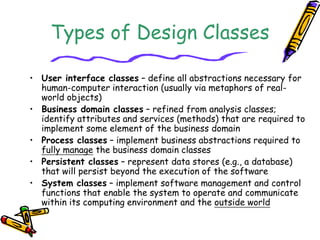

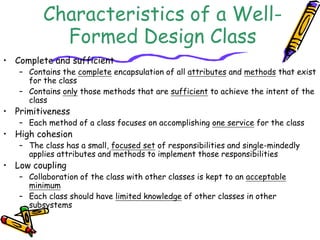

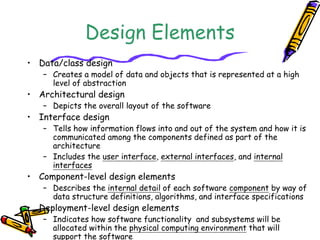

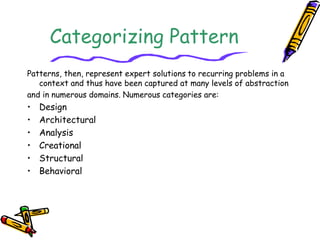

The document discusses the differences between analysis modeling and design engineering/modeling. Analysis involves understanding a problem, while design focuses on creating a solution. Models can be used to better understand problems in analysis or solutions in design. The key aspects of design engineering are then outlined, including translating requirements into a software blueprint, iterating on the design, and ensuring quality through guidelines such as modularity, information hiding, and functional independence. Common design concepts like abstraction, architecture, patterns, and classes are also explained.