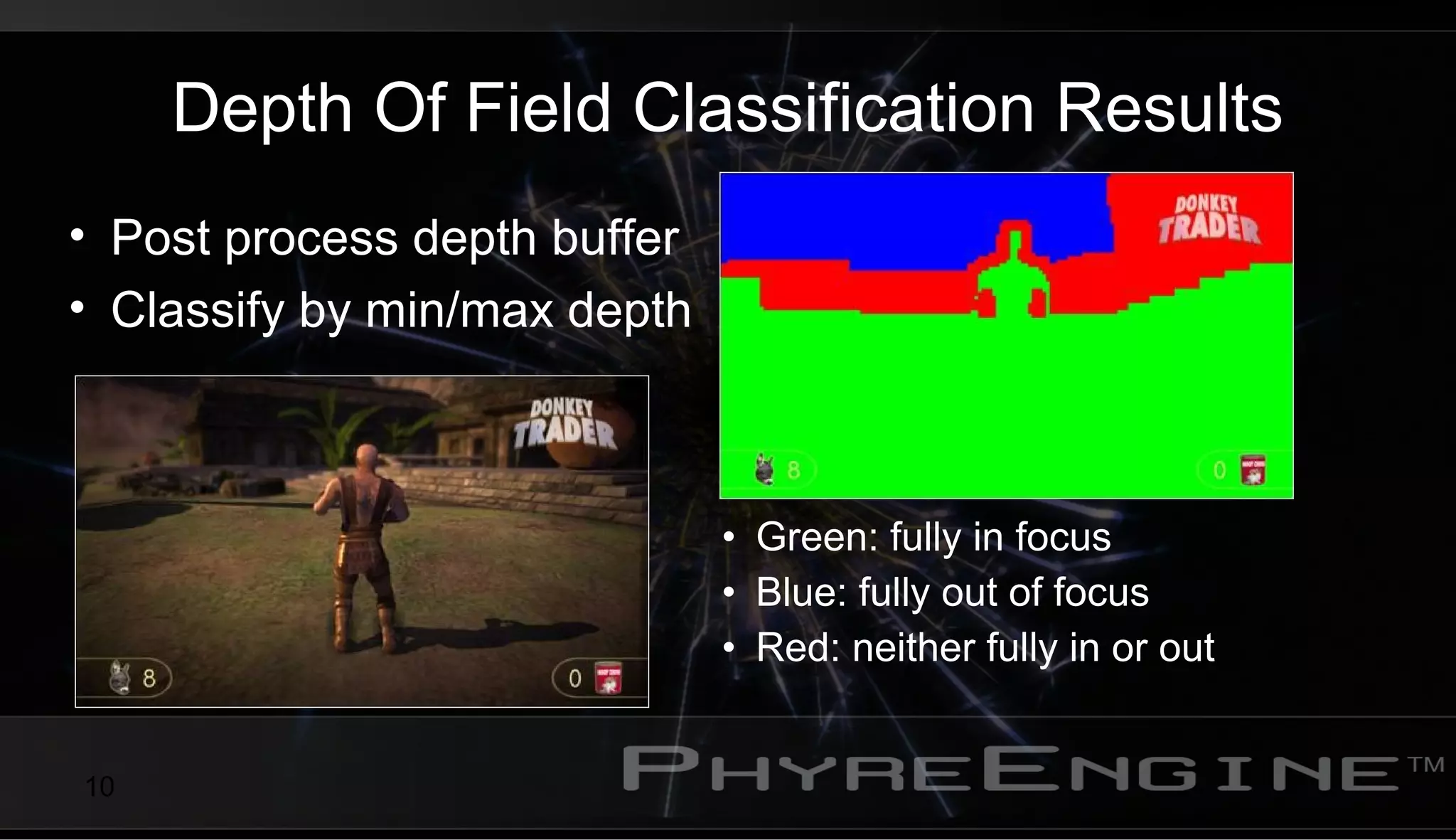

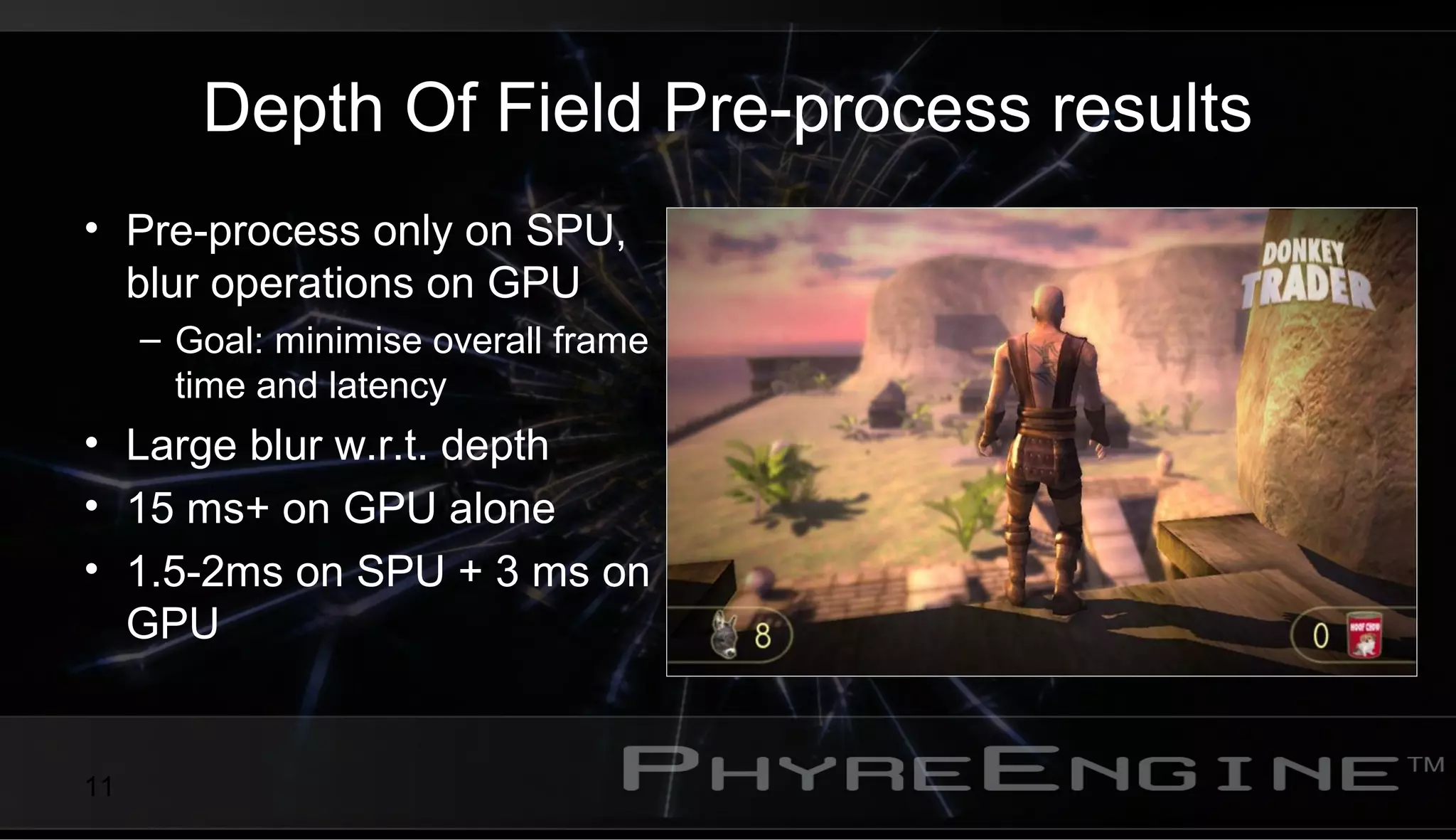

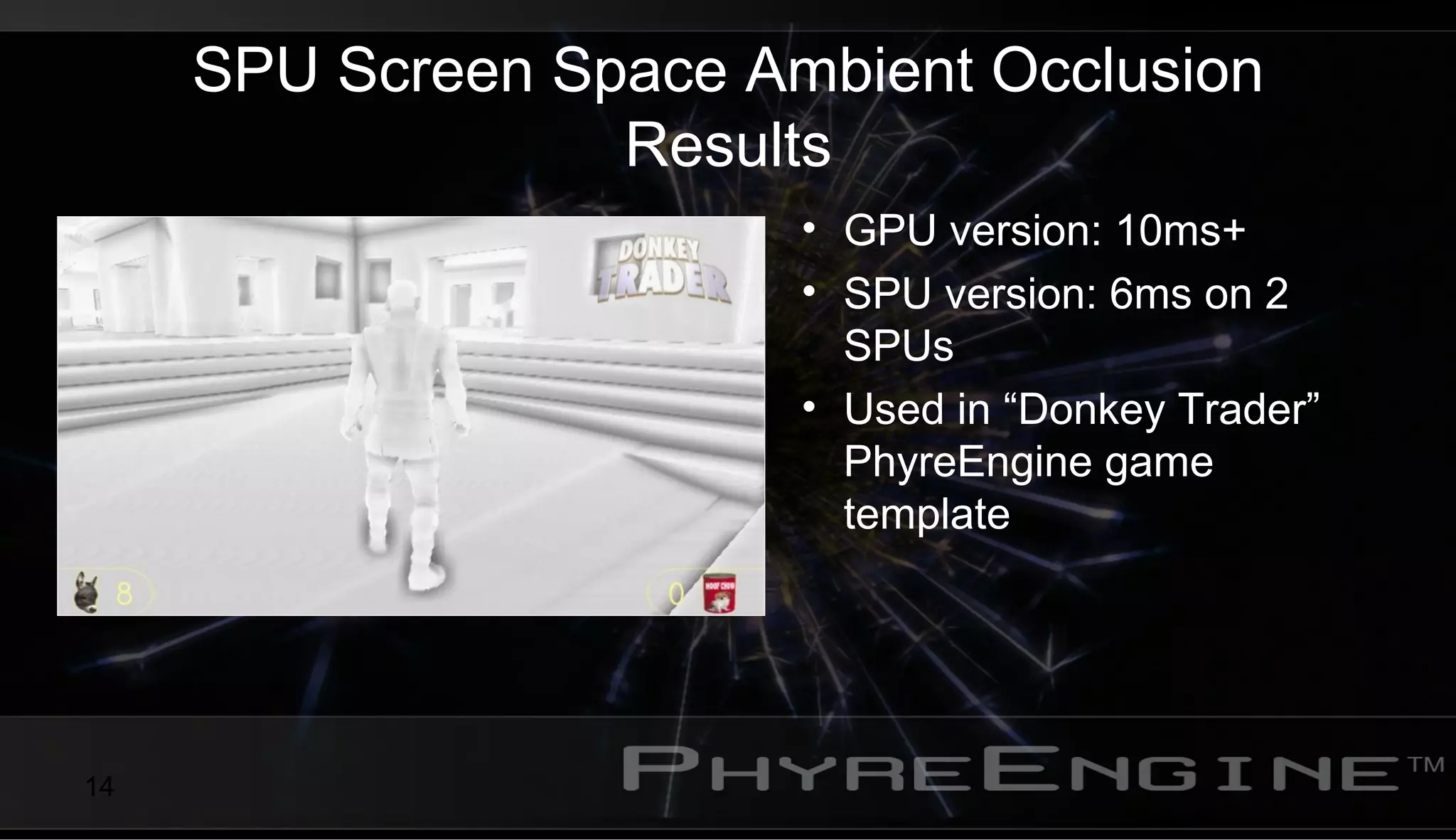

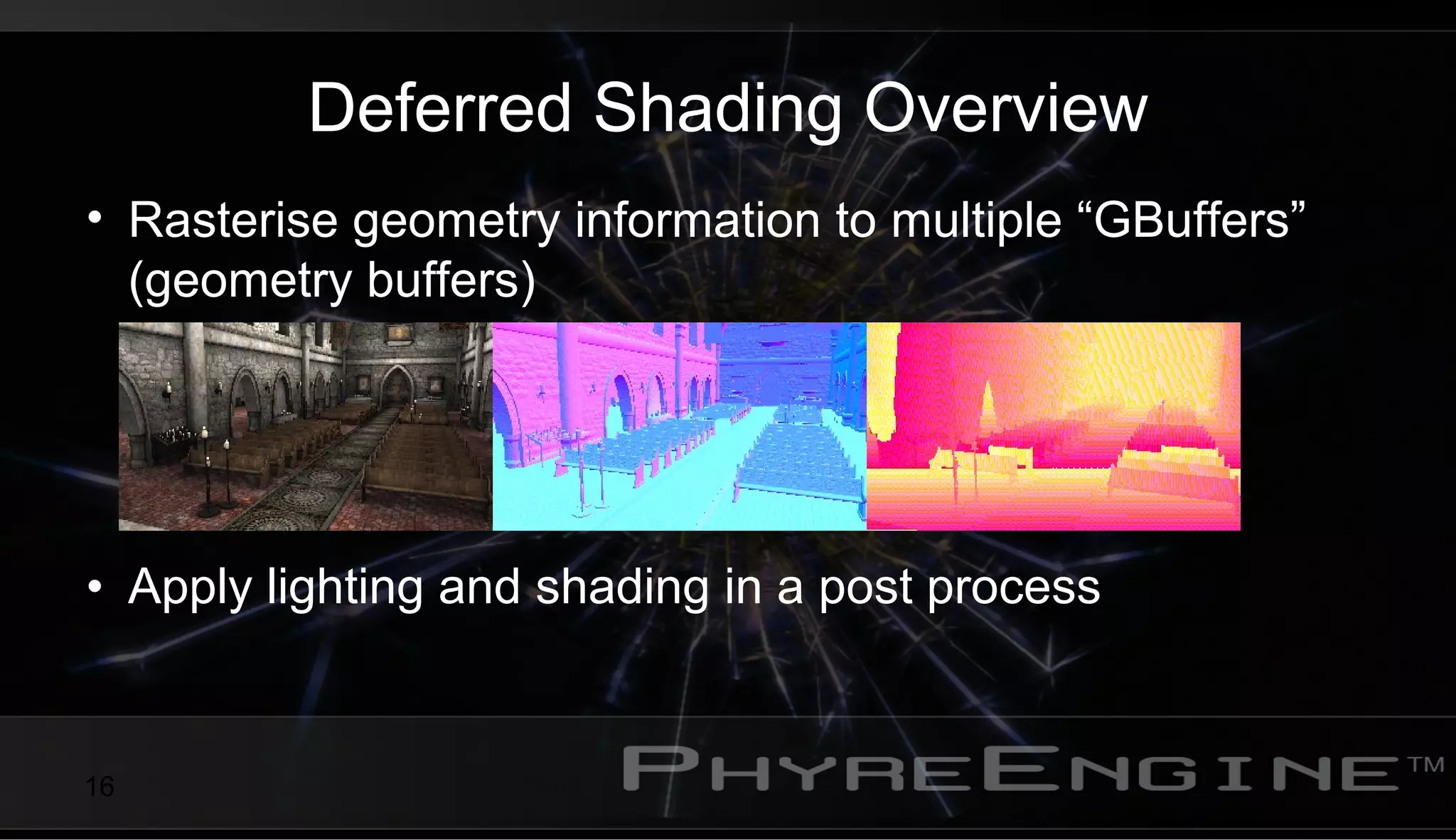

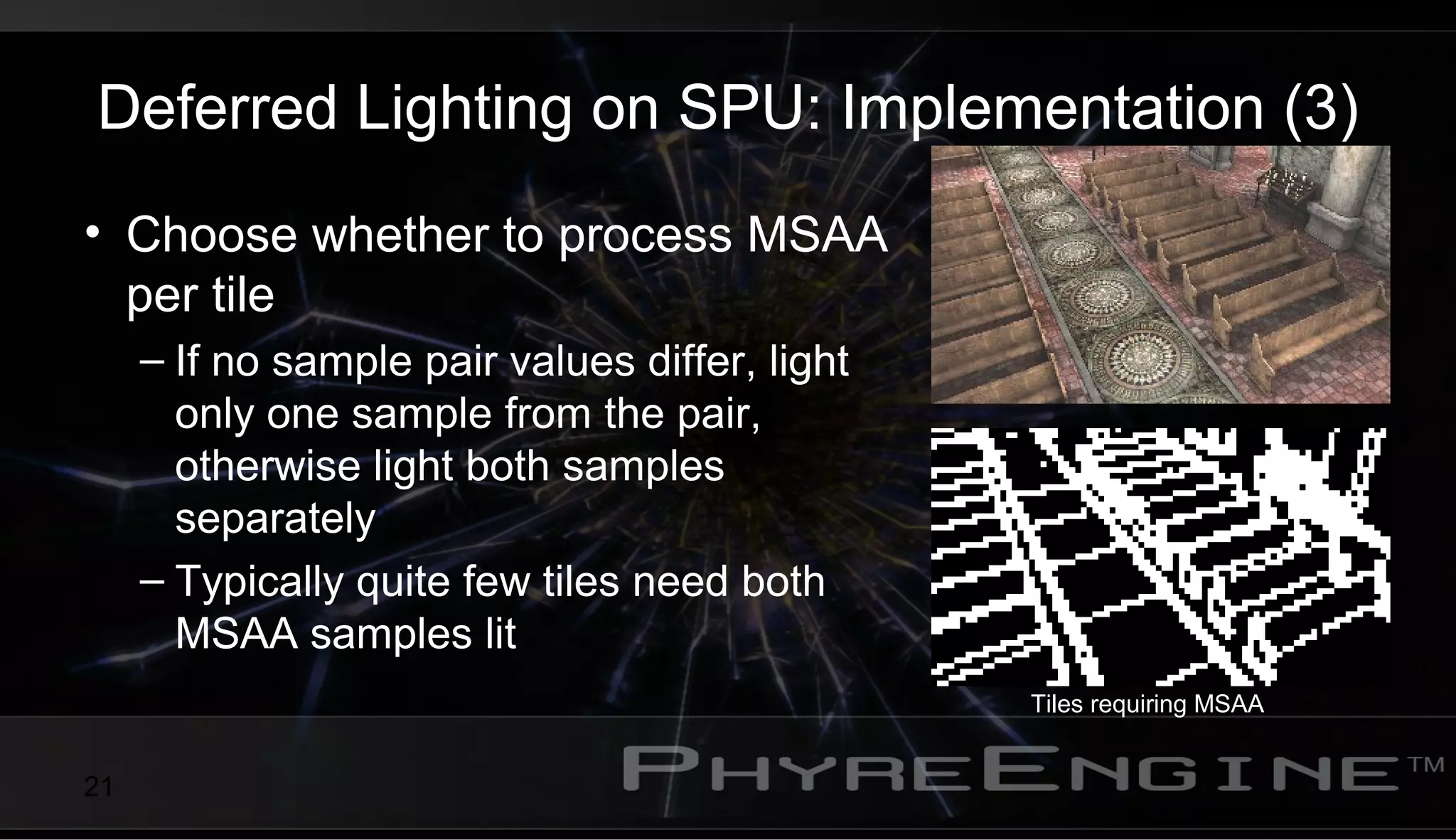

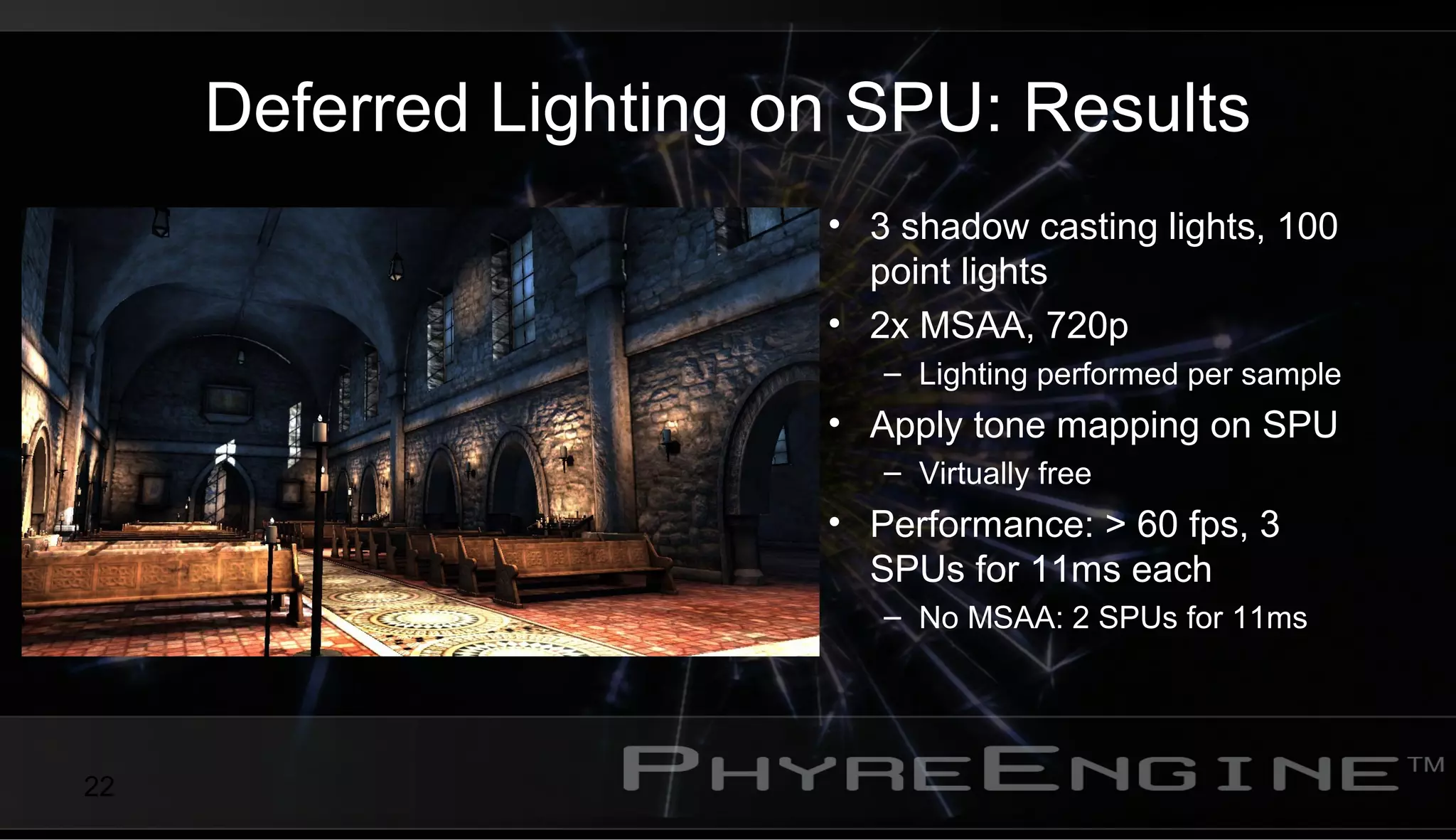

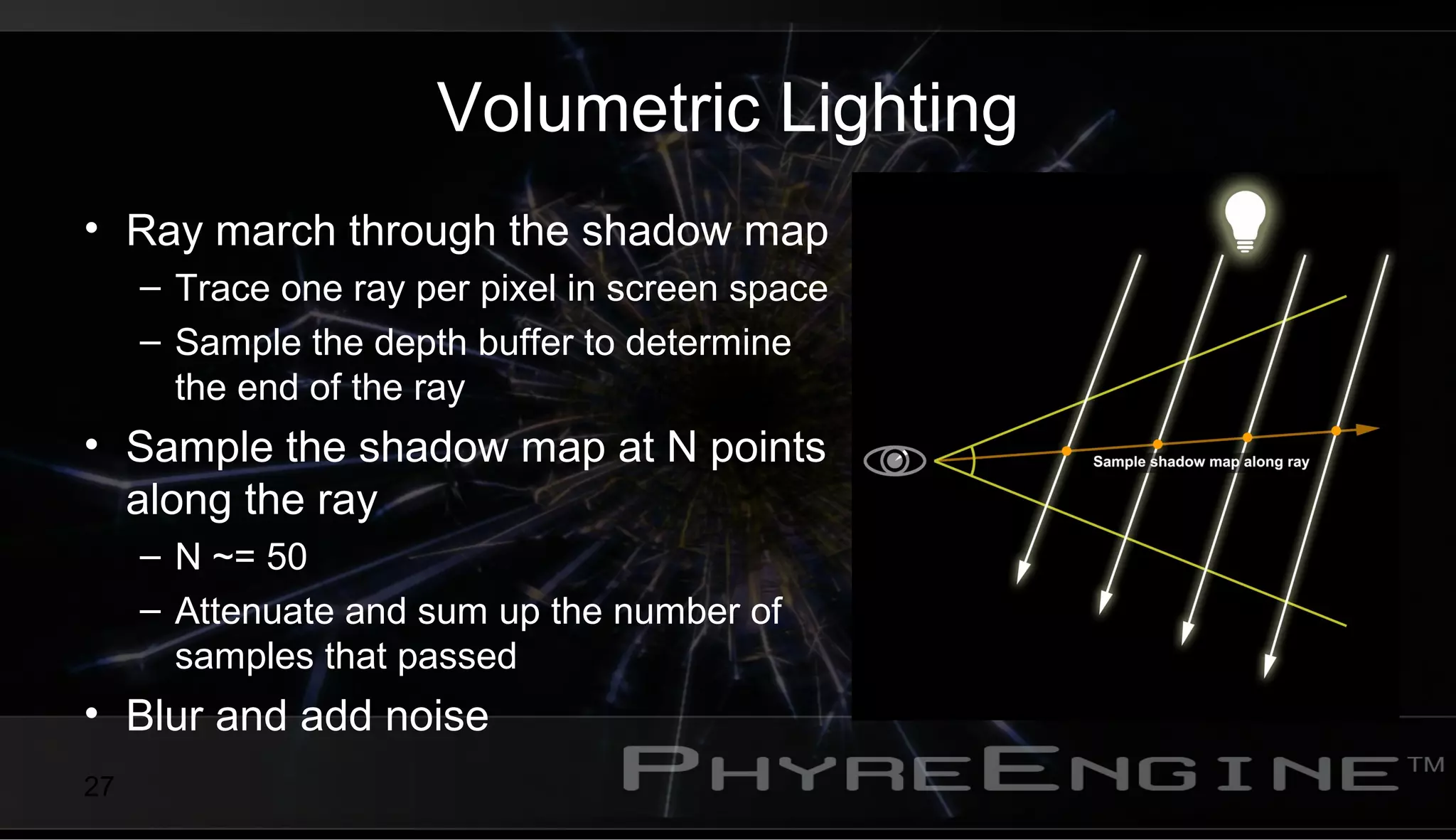

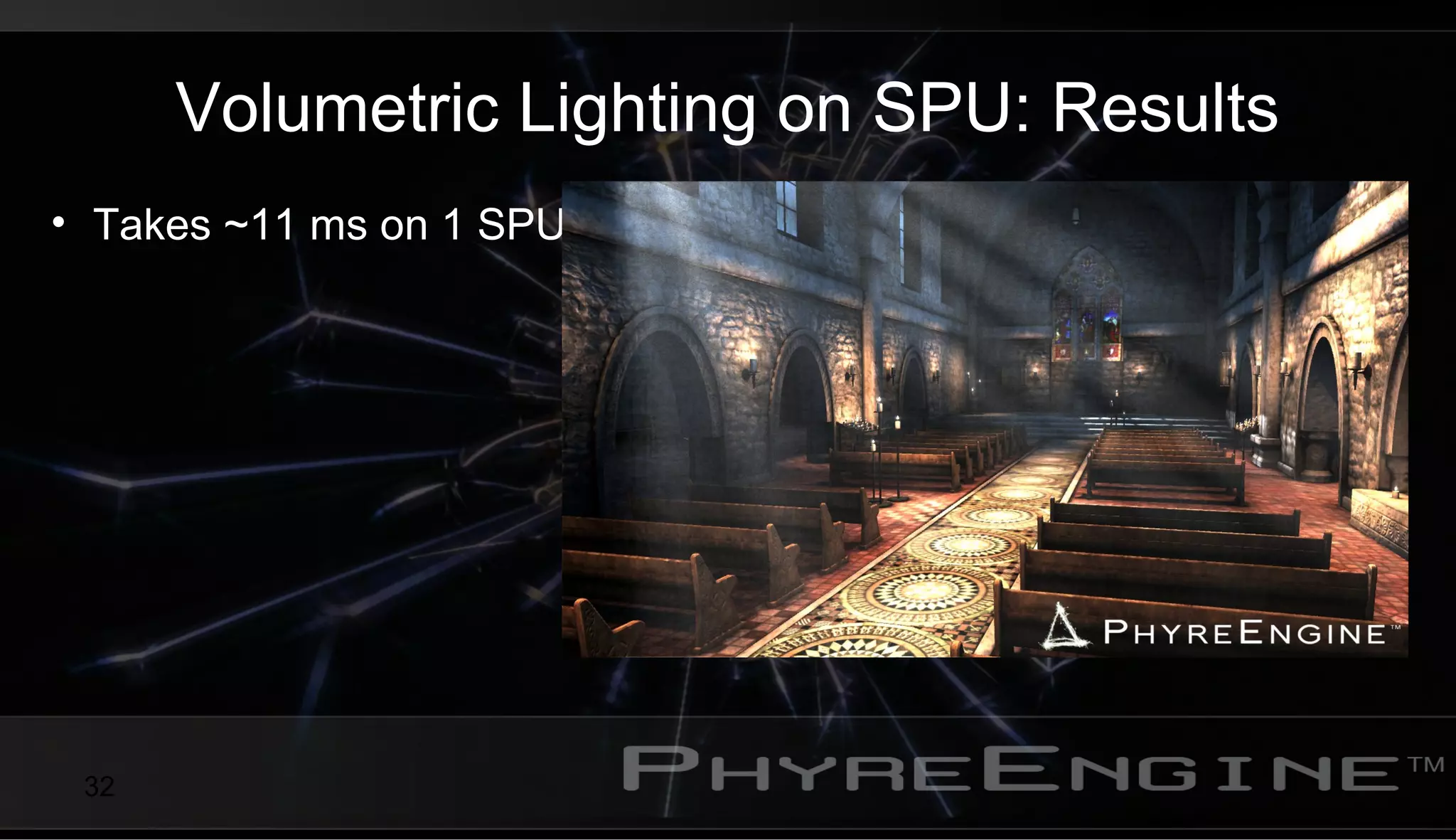

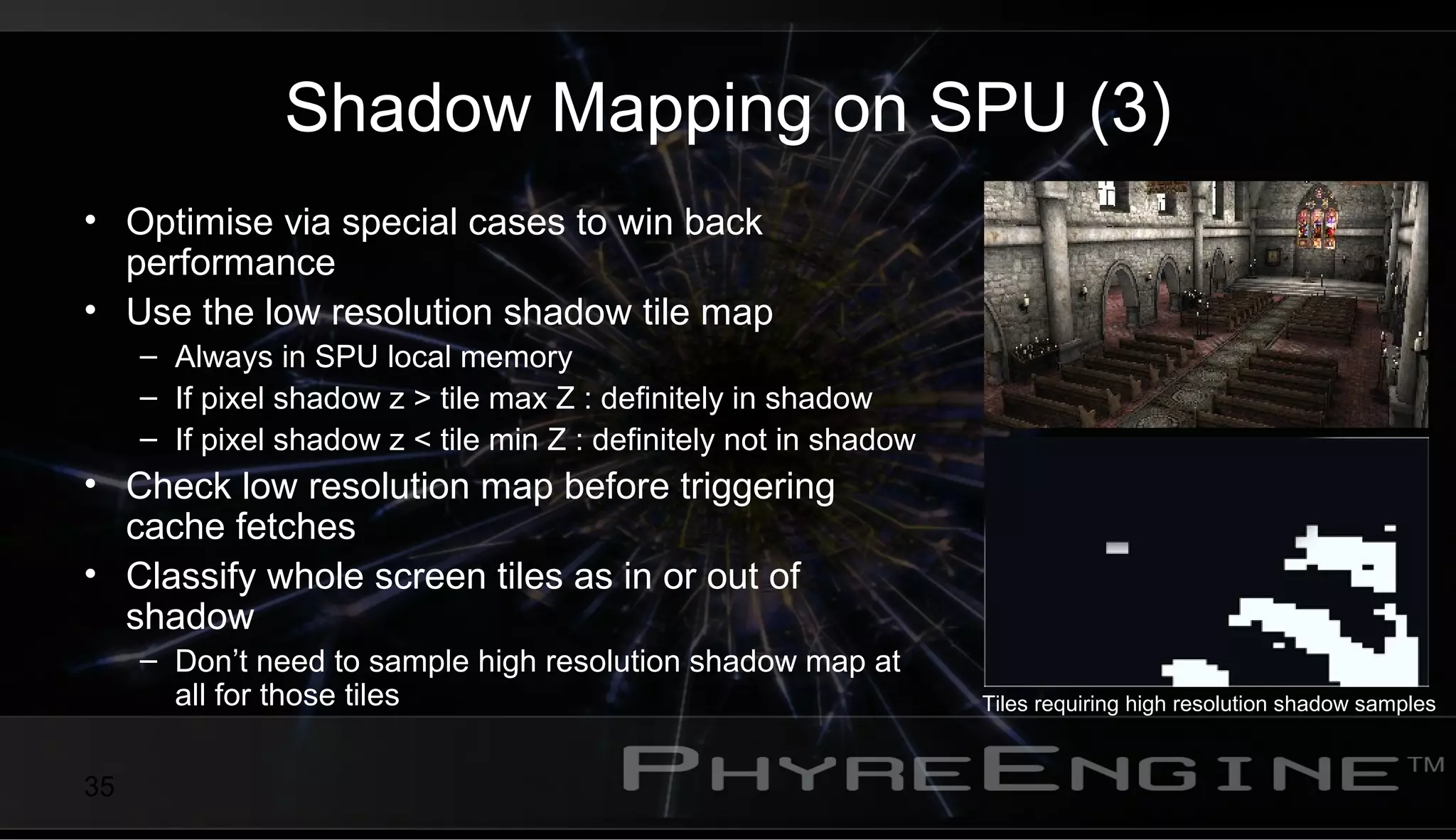

This document discusses optimizing post processing and deferred lighting on the PlayStation 3 by offloading work to the system processor units (SPUs). It provides examples of using the SPUs for depth of field preprocessing, screen space ambient occlusion, deferred lighting, volumetric lighting, and shadow mapping. The key advantages of using the SPUs include reducing GPU bottlenecking and improving performance by leveraging the SPUs' flexibility to optimize fragment processing through techniques like tile-based classification and caching shadow map tiles in local memory. Offloading post processing effects to the SPUs can significantly reduce frame times compared to performing the entire effect on the GPU.