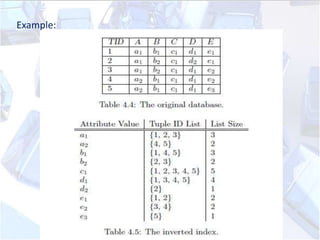

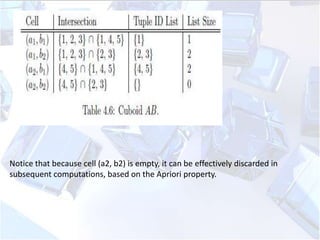

Data cube computation precomputes aggregations across combinations of dimensions so that queries can be answered quickly. Materialization strategies include full cubes, iceberg cubes, and shell cubes. A full cube precomputes every aggregation but requires significant storage, an iceberg cube stores only the cells whose aggregate value meets a minimum threshold, and a shell cube precomputes only the cuboids that involve a small number of dimensions. General computation strategies include sorting and grouping tuples so that similar values are aggregated together, caching intermediate results for reuse, and, when a cuboid can be derived from several child cuboids, aggregating from the smallest child. Apriori pruning makes iceberg cube computation efficient: if a cell does not meet the minimum support threshold, none of its descendants (more specialized cells) can meet it either, so they need not be computed.
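As a rough illustration of Apriori pruning, the sketch below computes an iceberg cube with a count measure by recursively partitioning the rows, in the spirit of bottom-up cube computation. It is only one possible approach, not a method taken from the source. The function name `iceberg_cube`, the sample rows, and the use of `None` to stand for an aggregated ("*") dimension are all assumptions made for this example.

```python
from collections import defaultdict


def iceberg_cube(rows, n_dims, min_support):
    """Return {cell: count} for every cell whose count >= min_support.

    A cell is a tuple of length n_dims; None marks an aggregated ("*") dimension.
    Hypothetical helper written for illustration only.
    """
    cells = {}

    def expand(partition, start_dim, cell):
        # Every partition that reaches this point already meets min_support.
        cells[cell] = len(partition)
        for d in range(start_dim, n_dims):
            # Group the current partition by the value of dimension d.
            groups = defaultdict(list)
            for row in partition:
                groups[row[d]].append(row)
            for value, group in groups.items():
                if len(group) >= min_support:
                    child = cell[:d] + (value,) + cell[d + 1:]
                    expand(group, d + 1, child)
                # Otherwise prune: count can only shrink as a cell gets
                # more specialized, so no descendant can meet the threshold.

    if len(rows) >= min_support:
        expand(rows, 0, (None,) * n_dims)
    return cells


# Made-up sample data: (city, item, quarter)
rows = [
    ("NY", "TV", "Q1"),
    ("NY", "TV", "Q2"),
    ("NY", "PC", "Q1"),
    ("LA", "TV", "Q1"),
]
cells = iceberg_cube(rows, n_dims=3, min_support=2)
for cell, count in sorted(cells.items(), key=str):
    print(cell, count)
```

With a threshold of 2, the cell `("LA", None, None)` covers a single row, so it is dropped and none of its more specialized cells are ever visited. The pruning is valid here because count is antimonotonic; a measure such as average would not allow the same shortcut without extra work.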