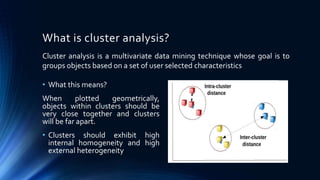

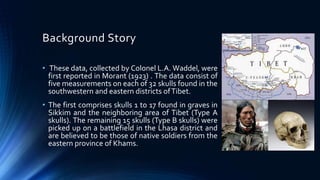

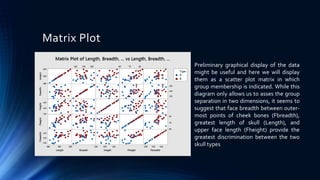

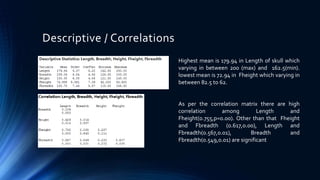

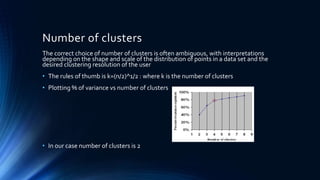

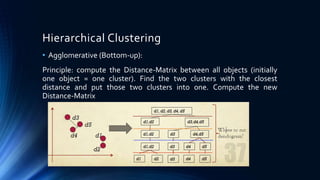

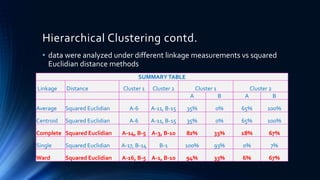

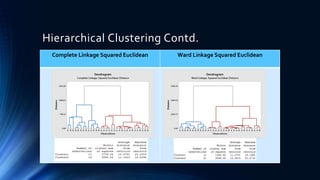

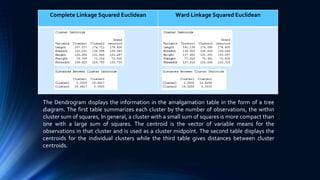

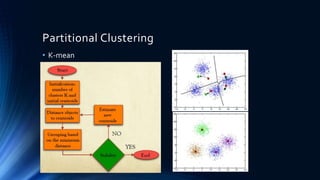

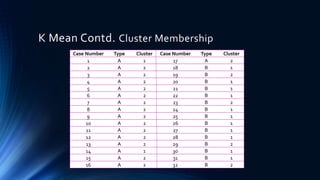

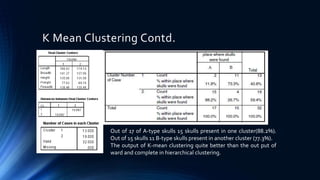

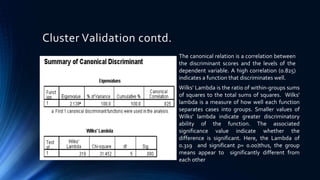

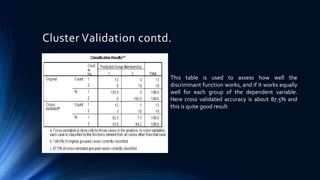

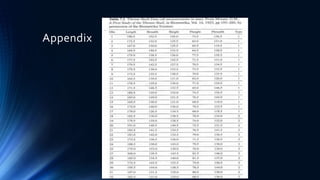

This case study applies cluster analysis to Tibetan skulls collected by Colonel L.A. Waddell. Measurements from two distinct types of skull are analyzed to test a hypothesis about their genetic origins. The study outlines several clustering methods, including hierarchical and k-means clustering, and concludes that skulls from the Khams district differ significantly from those of general Tibetans on the measured traits. Validation by discriminant analysis indicates high discriminatory ability and strong separation between the groups.
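The cluster-then-validate workflow summarized above can be illustrated with a minimal k-means sketch. Everything in it is an assumption made for illustration: the data are synthetic (two well-separated Gaussian groups with five features each, not the actual skull measurements), the helper names `sq_dist`, `farthest_first_init`, and `kmeans` are hypothetical, and the final check is a simple agreement rate against the known group labels rather than the discriminant analysis used in the case study.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def farthest_first_init(points, k):
    """Deterministic start: first point, then repeatedly the point farthest from all chosen centers."""
    centers = [list(points[0])]
    while len(centers) < k:
        nxt = max(points, key=lambda p: min(sq_dist(p, c) for c in centers))
        centers.append(list(nxt))
    return centers


def kmeans(points, k, max_iter=100):
    """Plain Lloyd's algorithm: assign each point to its nearest center, then recompute the centers."""
    centers = farthest_first_init(points, k)
    labels = []
    for _ in range(max_iter):
        labels = [min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points]
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centers.append([sum(col) / len(members) for col in zip(*members)])
            else:
                new_centers.append(centers[j])  # keep the old center if a cluster empties
        if new_centers == centers:  # converged: centers no longer move
            break
        centers = new_centers
    return labels, centers


# SYNTHETIC data (assumption): two groups, five features each, NOT the real measurements.
rng = random.Random(0)
N_PER_GROUP, N_FEATURES = 16, 5
group_a = [[rng.gauss(0.0, 1.0) for _ in range(N_FEATURES)] for _ in range(N_PER_GROUP)]
group_b = [[rng.gauss(6.0, 1.0) for _ in range(N_FEATURES)] for _ in range(N_PER_GROUP)]
points = group_a + group_b
true_labels = [0] * N_PER_GROUP + [1] * N_PER_GROUP

labels, centers = kmeans(points, k=2)

# Cluster numbering is arbitrary, so score the better of the two possible label matchings.
matches = sum(lab == t for lab, t in zip(labels, true_labels))
agreement = max(matches, len(points) - matches) / len(points)
print(f"agreement with known groups: {agreement:.2f}")
```

On real measurements the groups would overlap far more than in this toy data, so the agreement rate would be a rough indicator only. A discriminant analysis, as in the case study, gives a more principled measure of separation.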