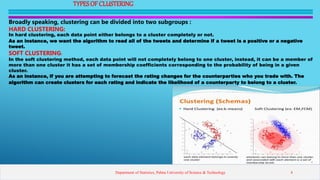

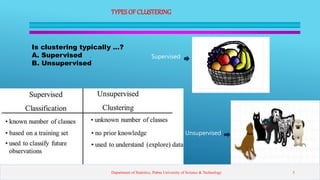

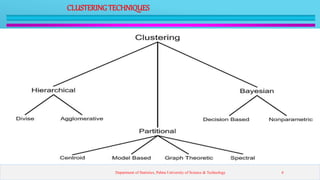

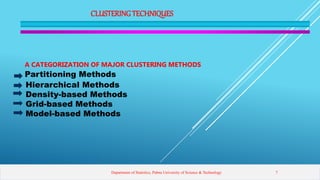

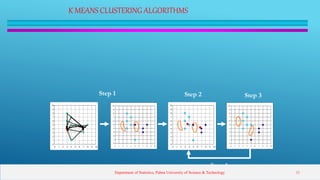

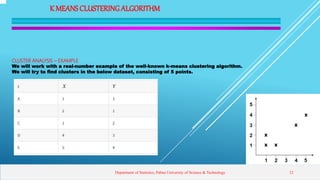

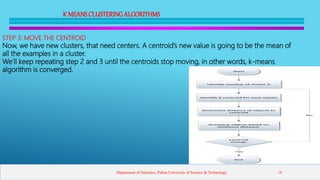

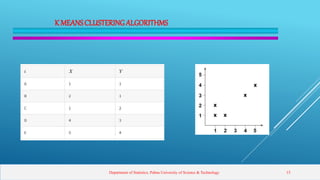

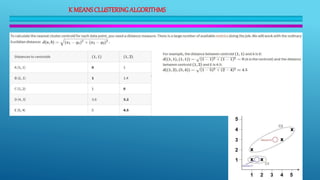

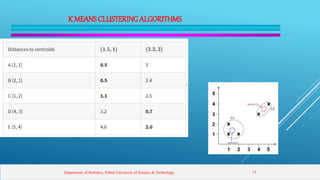

This presentation introduces cluster analysis and the k-means clustering technique. It defines clustering as an unsupervised learning method that segments data into groups with similar traits. It outlines clustering types (hard vs. soft) and techniques (partitioning, hierarchical, etc.), then walks through the k-means algorithm step by step. It also covers the requirements for clustering, gives example applications, and reviews the advantages and disadvantages of k-means clustering.
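
The summary does not reproduce the individual steps from the slides, so the following is only a minimal sketch of the standard k-means iteration (assign each point to its nearest centroid, recompute each centroid as the mean of its members, repeat until assignments stop changing). It may differ from the presentation's exact formulation. The function name `kmeans`, its parameters (`init`, `max_iter`, `seed`), and the sample points are all assumptions made for illustration.

```python
import random


def kmeans(points, k, init=None, max_iter=100, seed=0):
    """Hard-assignment k-means on a list of equal-length numeric tuples.

    `init` (hypothetical parameter) optionally fixes the starting centroids;
    otherwise k distinct points are sampled at random.
    """
    rng = random.Random(seed)
    centroids = [tuple(c) for c in init] if init is not None else rng.sample(points, k)
    labels = None

    for _ in range(max_iter):
        # Assignment step: each point joins the cluster of its nearest centroid
        # (squared Euclidean distance; ties go to the lower cluster index).
        new_labels = [
            min(range(k), key=lambda j: sum((a - b) ** 2 for a, b in zip(p, centroids[j])))
            for p in points
        ]
        if new_labels == labels:
            break  # assignments are stable, so the algorithm has converged
        labels = new_labels

        # Update step: move each centroid to the mean of its assigned points.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # an empty cluster keeps its previous centroid
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))

    return centroids, labels


# Illustrative data: two well-separated groups of three points each.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centroids, labels = kmeans(points, k=2, init=[(0, 0), (10, 10)])
print(labels)  # [0, 0, 0, 1, 1, 1]
```

Each point receives exactly one label, which is what makes this a hard clustering. The result depends on the starting centroids: a poor start can leave the iteration stuck in a worse grouping, which is one commonly cited disadvantage of k-means.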