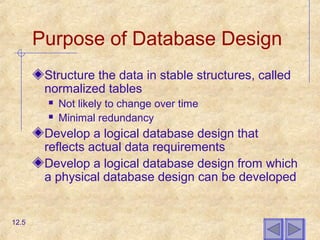

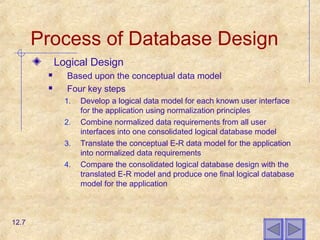

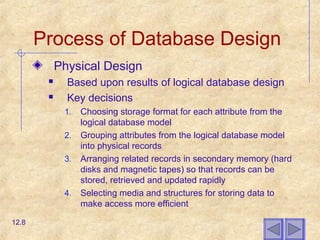

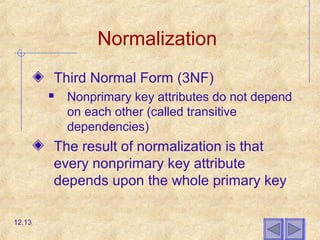

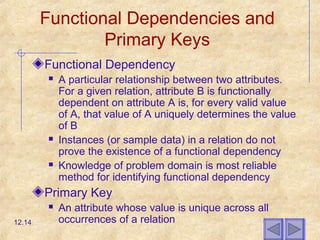

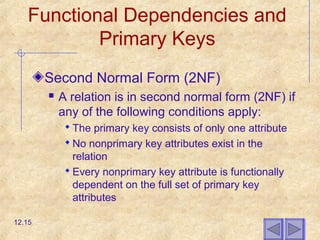

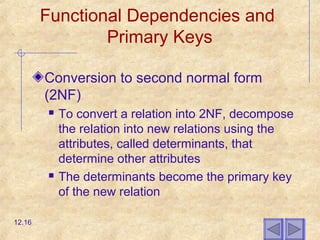

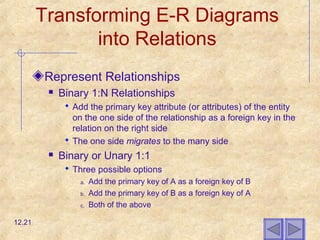

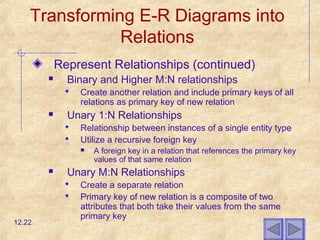

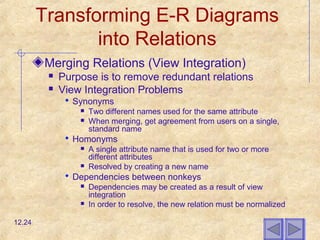

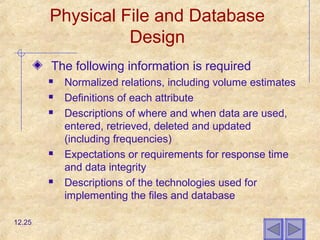

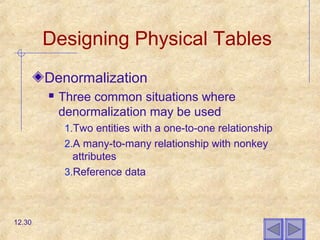

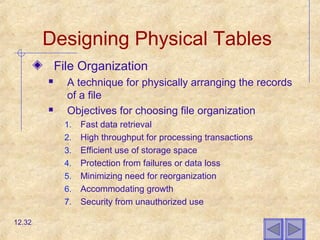

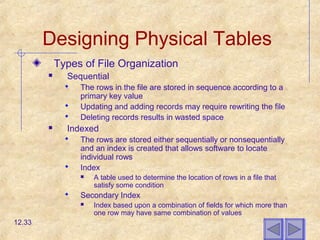

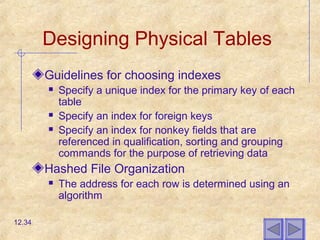

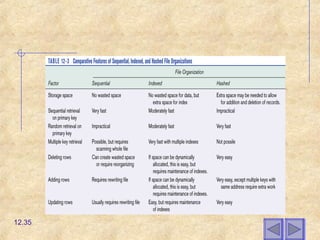

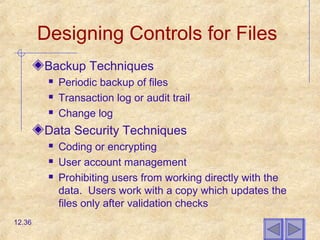

The document discusses database design: transforming entity-relationship diagrams into normalized relations, integrating different user views, choosing data storage formats, designing efficient database tables, and planning file organization and indexes. It covers key database concepts such as relations, primary keys, normalization, foreign keys, and data types. The goal of database design is to structure data in stable, normalized tables that are efficient to store and access.
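
As a rough illustration of how relations, primary keys, foreign keys, data types, and indexes fit together, here is a minimal sketch using Python's built-in `sqlite3` module. The `customer` and `customer_order` tables, their columns, and the helper names `open_database` and `orders_for` are hypothetical and chosen only for this example; they do not come from the document.

```python
import sqlite3

# Hypothetical schema: two relations derived from a one-to-many
# Customer -> Order relationship in an E-R diagram.
SCHEMA = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,      -- primary key identifies each row
    name        TEXT NOT NULL             -- data type chosen per attribute
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL
                REFERENCES customer(customer_id),  -- foreign key
    order_date  TEXT NOT NULL             -- ISO 8601 date stored as text
);
-- Secondary index to speed up lookups of orders by customer.
CREATE INDEX idx_order_customer ON customer_order(customer_id);
"""


def open_database(path=":memory:"):
    """Create a connection, enable foreign key checks, and build the schema."""
    conn = sqlite3.connect(path)
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn


def orders_for(conn, customer_id):
    """Return (order_id, order_date) rows for one customer."""
    cursor = conn.execute(
        "SELECT order_id, order_date FROM customer_order "
        "WHERE customer_id = ? ORDER BY order_id",
        (customer_id,),
    )
    return cursor.fetchall()
```

Customer details live in one table and are referenced by key from the other, so a customer's name is stored once rather than repeated on every order. With the pragma enabled, inserting an order whose `customer_id` has no matching customer raises `sqlite3.IntegrityError`.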