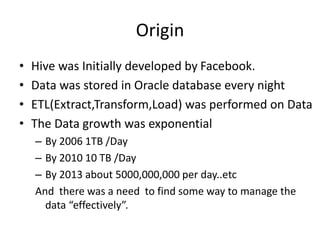

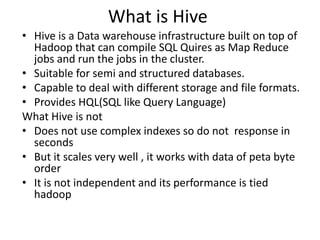

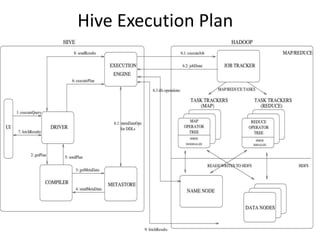

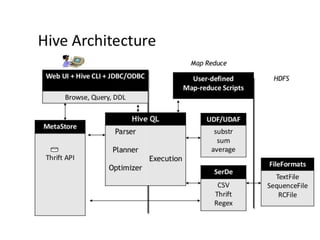

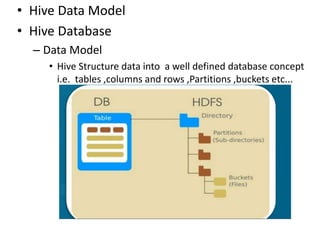

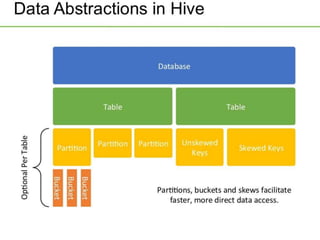

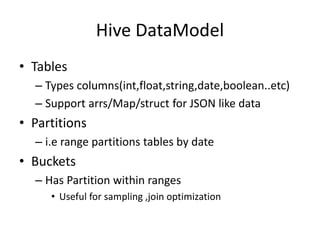

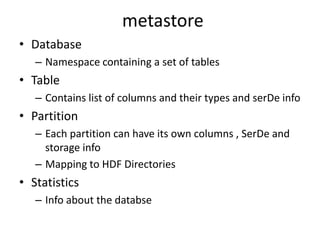

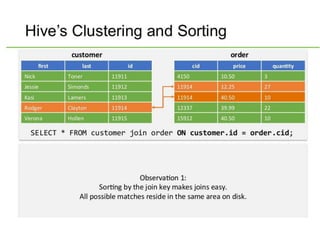

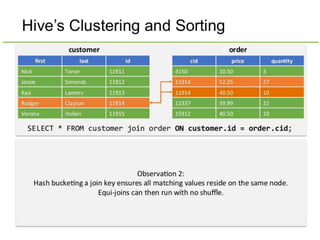

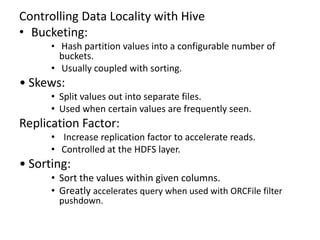

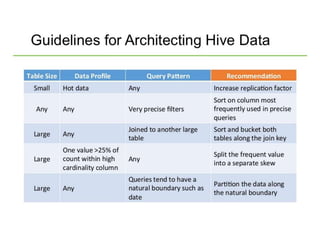

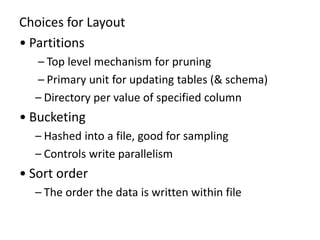

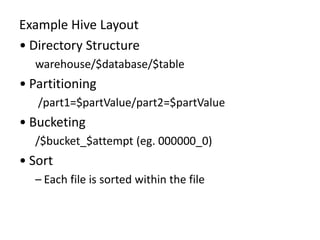

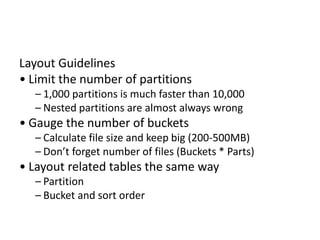

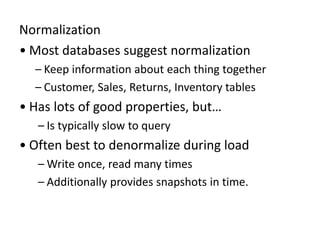

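Hive was initially developed at Facebook to manage and query large amounts of data stored in HDFS. It provides a SQL-like query language, HiveQL, for analyzing structured and semi-structured data. Hive originally compiled HiveQL queries into MapReduce jobs executed on a Hadoop cluster; later releases can also use Apache Tez or Apache Spark as the execution engine. To speed up queries, Hive lets tables be partitioned (split into separate directories by the values of one or more columns), bucketed (split into a fixed number of files by a hash of a column), and sorted within each bucket.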
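To make partitioning and bucketing concrete, here is a minimal Python sketch that *simulates* (it does not call Hive) how a table partitioned by a hypothetical `dt` column and bucketed by a hypothetical `user_id` column might be laid out, and why a filter on those columns lets a query read less data. The function names, the row shape, and the `user_id % num_buckets` bucket rule are assumptions for illustration only; real Hive computes the bucket from its own hash of the bucketing column, which this sketch does not reproduce.

```python
from typing import Dict, List, Tuple

# Hypothetical row shape: (dt, user_id, ts, event)
Row = Tuple[str, int, int, str]
# Partition path such as "dt=2024-01-01" -> list of buckets, each a list of rows
Layout = Dict[str, List[List[Row]]]


def bucket_for(user_id: int, num_buckets: int) -> int:
    # Simplified, hypothetical bucket rule; Hive applies its own hash
    # function to the bucketing column before taking the remainder.
    return user_id % num_buckets


def build_layout(rows: List[Row], num_buckets: int) -> Layout:
    """Partition rows by dt, bucket them by user_id, and sort each bucket by ts."""
    layout: Layout = {}
    for row in rows:
        dt, user_id, _ts, _event = row
        # Hive names partition directories "column=value"
        path = f"dt={dt}"
        buckets = layout.setdefault(path, [[] for _ in range(num_buckets)])
        buckets[bucket_for(user_id, num_buckets)].append(row)
    for buckets in layout.values():
        for bucket in buckets:
            bucket.sort(key=lambda r: r[2])  # keep rows ordered by ts within a bucket
    return layout


def scan_partition(layout: Layout, dt: str) -> List[Row]:
    """Partition pruning: a filter on dt reads a single partition directory."""
    return [row for bucket in layout.get(f"dt={dt}", []) for row in bucket]


def lookup_user(layout: Layout, dt: str, user_id: int, num_buckets: int) -> List[Row]:
    """Idea behind bucket pruning: within a partition, only one bucket can hold user_id."""
    buckets = layout.get(f"dt={dt}")
    if buckets is None:
        return []
    bucket = buckets[bucket_for(user_id, num_buckets)]
    return [row for row in bucket if row[1] == user_id]


if __name__ == "__main__":
    rows = [
        ("2024-01-01", 7, 30, "click"),
        ("2024-01-01", 2, 10, "view"),
        ("2024-01-01", 7, 20, "view"),
        ("2024-01-02", 5, 15, "click"),
    ]
    layout = build_layout(rows, num_buckets=4)
    print(sorted(layout))  # ['dt=2024-01-01', 'dt=2024-01-02']
    print(lookup_user(layout, "2024-01-01", 7, 4))
```

In this sketch a query filtered on `dt` touches one directory, and a lookup on `user_id` inside a partition reads one bucket instead of all of them. In Hive itself the equivalent layout is declared in the table's DDL rather than built by hand.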