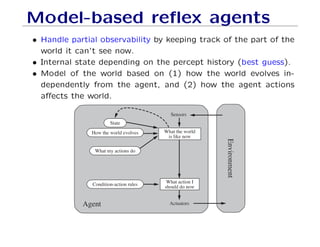

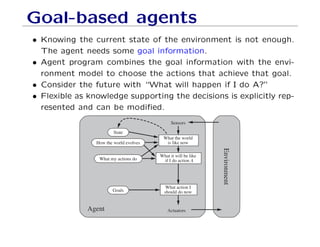

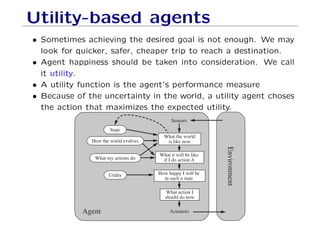

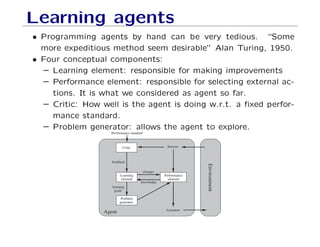

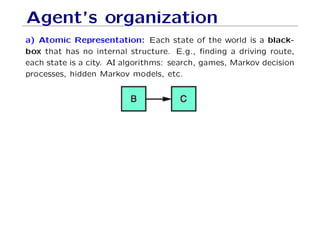

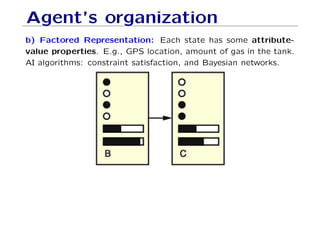

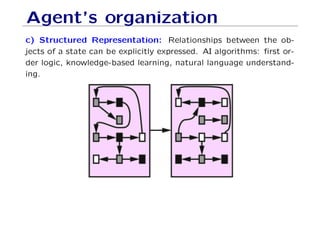

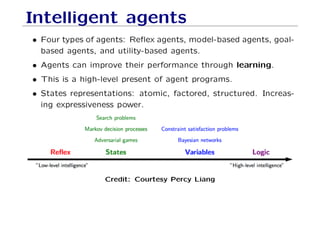

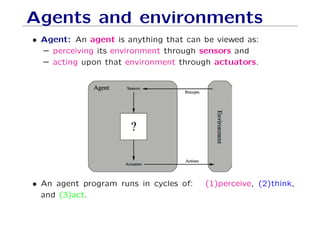

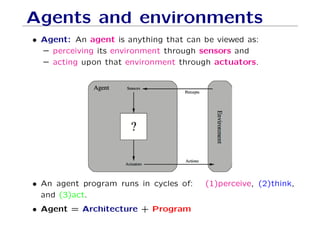

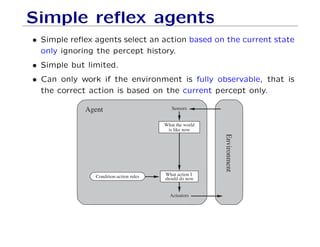

The document defines key concepts in artificial intelligence including intelligent agents, environments, and rational agents. An intelligent agent is anything that can perceive its environment and take actions to achieve its goals. Rational agents aim to maximize their performance measure given their percepts and knowledge. Different types of agents are described including reflex agents, model-based agents, goal-based agents, and utility-based agents. State representations and learning agents are also covered at a high level.

![Vacuum cleaner

A B

• Percepts: location and contents e.g., [A, Dirty]

• Actions: Left, Right, Suck, NoOp

• Agent function: mapping from percepts to actions.](https://image.slidesharecdn.com/agents1-171227052052/85/Agents1-7-320.jpg)

![Vacuum cleaner

A B

• Percepts: location and contents e.g., [A, Dirty]

• Actions: Left, Right, Suck, NoOp

• Agent function: mapping from percepts to actions.

Percept Action

[A, clean] Right

[A, dirty] Suck

[B, clean] Left

[B, dirty] Suck](https://image.slidesharecdn.com/agents1-171227052052/85/Agents1-8-320.jpg)

![Vacuum (reflex) agent

A B

• Let’s write the algorithm for the Vacuum cleaner...

• Percepts: location and content (location sensor, dirt sensor).

• Actions: Left, Right, Suck, NoOp

Percept Action

[A, clean] Right

[A, dirty] Suck

[B, clean] Left

[B, dirty] Suck](https://image.slidesharecdn.com/agents1-171227052052/85/Agents1-27-320.jpg)

![Vacuum (reflex) agent

A B

• Let’s write the algorithm for the Vacuum cleaner...

• Percepts: location and content (location sensor, dirt sensor).

• Actions: Left, Right, Suck, NoOp

Percept Action

[A, clean] Right

[A, dirty] Suck

[B, clean] Left

[B, dirty] Suck

What if the vacuum agent is deprived from its location sen-

sor?](https://image.slidesharecdn.com/agents1-171227052052/85/Agents1-28-320.jpg)