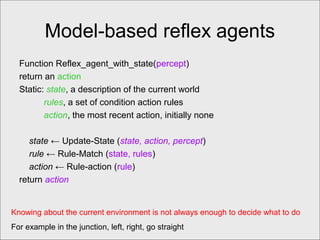

This document discusses intelligent agents and their environments. It defines an agent as anything that perceives its environment through sensors and acts upon it through actuators. A rational agent selects the action expected to maximize its performance measure, given what it has perceived so far. An agent's task environment consists of its performance measure, environment, actuators, and sensors. Environment types include fully/partially observable, deterministic/stochastic, and single/multi-agent. Four basic agent types are described: simple reflex agents, model-based reflex agents, goal-based agents, and utility-based agents. Learning agents use feedback to improve performance over time. The document provides examples of agents and discusses their design considerations based on task environment properties.

![Agents and environments

• The agent function maps from percept histories to actions:

f: P* → A

• The agent program runs on the physical architecture to produce f.

• Agent = architecture + program](https://image.slidesharecdn.com/lecture2-120919112122-phpapp01/85/Lecture-2-4-320.jpg)
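The distinction between the agent function and the agent program can be sketched in Python. This is a minimal illustration, not the lecture's own code: the names `make_agent_program` and `example_f` are hypothetical, and `example_f` assumes that dirt never reappears once a square has been seen clean.

```python
from typing import Callable, List, Tuple

Percept = Tuple[str, str]   # (location, status), e.g. ("A", "Dirty")
Action = str
AgentFunction = Callable[[Tuple[Percept, ...]], Action]  # f: P* -> A


def make_agent_program(f: AgentFunction) -> Callable[[Percept], Action]:
    """Wrap an agent function as a program that receives one percept at a time."""
    history: List[Percept] = []  # the program, not f, stores the percept history

    def program(percept: Percept) -> Action:
        history.append(percept)
        return f(tuple(history))

    return program


def example_f(history: Tuple[Percept, ...]) -> Action:
    """Hypothetical agent function that depends on the whole history."""
    location, status = history[-1]
    if status == "Dirty":
        return "Suck"
    # Assumption: a square seen clean stays clean, so stop once both were seen clean.
    seen_clean = {loc for loc, st in history if st == "Clean"}
    if seen_clean == {"A", "B"}:
        return "NoOp"
    return "Right" if location == "A" else "Left"


program = make_agent_program(example_f)
```

The function `example_f` is the abstract mapping, while `program` is what would run on the architecture: it sees only the current percept and keeps the history itself.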

![Vacuum-cleaner world

• Percepts: location and contents, e.g., [A, Dirty]

• Actions: Left, Right, Suck, NoOp](https://image.slidesharecdn.com/lecture2-120919112122-phpapp01/85/Lecture-2-5-320.jpg)
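The percepts and actions listed above are enough to sketch a tiny simulator. The version below is an illustration under stated assumptions: the world has exactly two squares, A and B; `Suck` always cleans the current square; and moving toward a wall leaves the agent where it is. The class name `VacuumWorld` is hypothetical.

```python
from typing import Dict, Tuple


class VacuumWorld:
    """Two-square vacuum world (assumed deterministic for this sketch)."""

    def __init__(self, dirt: Dict[str, bool], location: str = "A") -> None:
        self.dirt = dict(dirt)      # e.g. {"A": True, "B": False}
        self.location = location

    def percept(self) -> Tuple[str, str]:
        """Return (location, contents), matching the slide's [A, Dirty] form."""
        status = "Dirty" if self.dirt[self.location] else "Clean"
        return (self.location, status)

    def step(self, action: str) -> None:
        """Apply one of the four actions: Left, Right, Suck, NoOp."""
        if action == "Suck":
            self.dirt[self.location] = False
        elif action == "Right":
            self.location = "B"     # already in B: stays in B
        elif action == "Left":
            self.location = "A"     # already in A: stays in A
        elif action != "NoOp":
            raise ValueError(f"unknown action: {action}")


world = VacuumWorld({"A": True, "B": False})
```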

![A vacuum-cleaner agent

Percept sequence → Action

[A, Clean] → Right

[A, Dirty] → Suck

[B, Clean] → Left

[B, Dirty] → Suck

[A, Clean], [A, Clean] → Right

[A, Clean], [A, Dirty] → Suck

… → …

[A, Clean], [A, Clean], [A, Clean] → Right

[A, Clean], [A, Clean], [A, Dirty] → Suck

… → …](https://image.slidesharecdn.com/lecture2-120919112122-phpapp01/85/Lecture-2-6-320.jpg)
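The table above can be read as a lookup keyed by the entire percept sequence. The following sketch encodes only the rows the slide shows in full and leaves the elided rows out. The names `TABLE` and `TableDrivenAgent` are hypothetical, and returning `None` for a sequence with no row is an assumption made for this example.

```python
from typing import Dict, List, Optional, Tuple

Percept = Tuple[str, str]  # (location, status)

# Only rows that appear in the slide; the elided rows are not filled in.
TABLE: Dict[Tuple[Percept, ...], str] = {
    (("A", "Clean"),): "Right",
    (("A", "Dirty"),): "Suck",
    (("B", "Clean"),): "Left",
    (("B", "Dirty"),): "Suck",
    (("A", "Clean"), ("A", "Clean")): "Right",
    (("A", "Clean"), ("A", "Dirty")): "Suck",
    (("A", "Clean"), ("A", "Clean"), ("A", "Clean")): "Right",
    (("A", "Clean"), ("A", "Clean"), ("A", "Dirty")): "Suck",
}


class TableDrivenAgent:
    """Looks up the action for the full percept sequence seen so far."""

    def __init__(self, table: Dict[Tuple[Percept, ...], str]) -> None:
        self.table = table
        self.percepts: List[Percept] = []

    def __call__(self, percept: Percept) -> Optional[str]:
        self.percepts.append(percept)
        # Assumption: None signals that the table has no row for this sequence.
        return self.table.get(tuple(self.percepts))


agent = TableDrivenAgent(TABLE)
```

With four possible percepts, there are 4^t distinct sequences of length t, so a complete table grows exponentially with the length of the history.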

![Agent program for a vacuum-cleaner agent

function Reflex_Va_Agent([location, status]) returns an action

if status = Dirty then return Suck

else if location = A then return Right

else if location = B then return Left

This works only if the environment is fully observable; otherwise it can fail badly.

For example, if the location sensor is off, the only percepts are [Dirty] and [Clean], and on [Clean] the agent cannot tell whether to move Left or Right.](https://image.slidesharecdn.com/lecture2-120919112122-phpapp01/85/Lecture-2-22-320.jpg)
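A runnable Python version of the pseudocode above might look as follows. The function name `reflex_vacuum_agent` is chosen for this sketch. Passing `None` for the location stands in for a failed location sensor, and returning `NoOp` in that case is an assumption, since the slide's rules do not say what to do.

```python
from typing import Optional


def reflex_vacuum_agent(location: Optional[str], status: str) -> str:
    """Choose an action from the current percept only (no history, no model)."""
    if status == "Dirty":
        return "Suck"
    if location == "A":
        return "Right"
    if location == "B":
        return "Left"
    # Assumption: with no location reading, none of the slide's movement rules fire.
    return "NoOp"


# Fully observable case: every percept determines a sensible action.
print(reflex_vacuum_agent("A", "Dirty"))
print(reflex_vacuum_agent("B", "Clean"))

# Location sensor off: the percept is effectively just [Clean] or [Dirty].
print(reflex_vacuum_agent(None, "Clean"))
```

Without a location reading the agent can still clean the square it is on, but it has no basis for choosing between Left and Right, which is the problem the slide points to.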