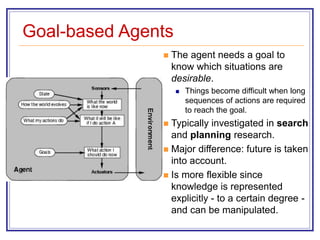

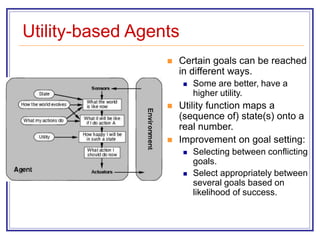

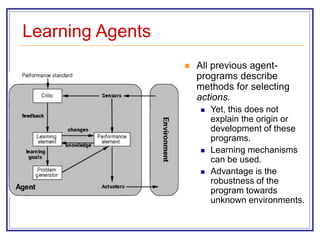

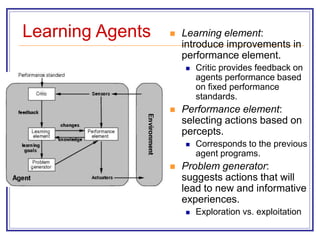

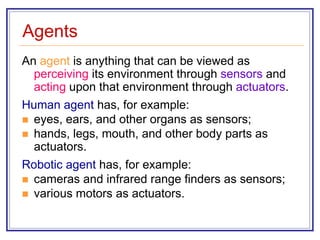

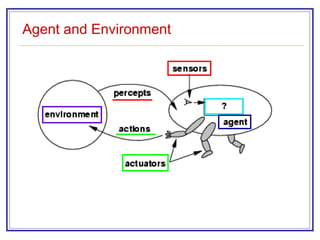

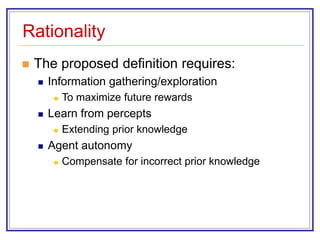

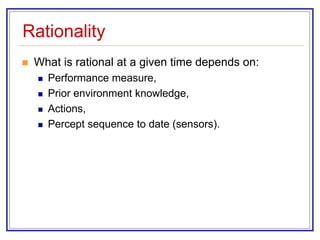

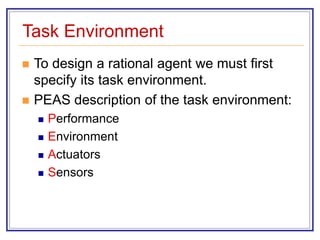

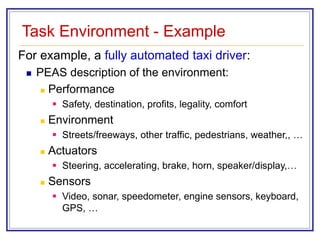

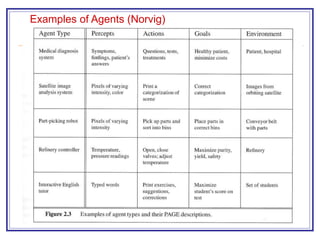

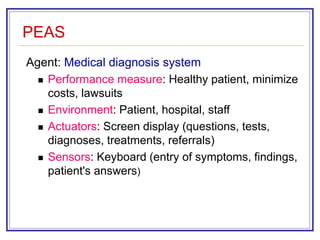

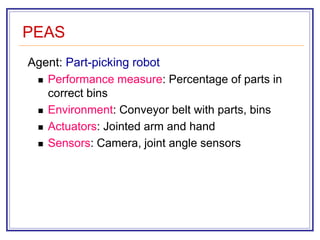

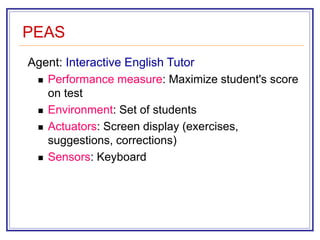

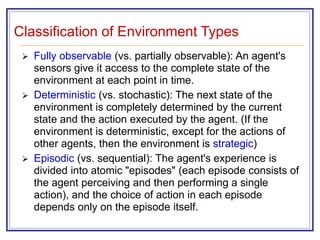

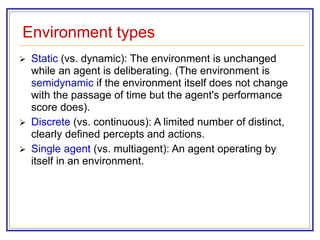

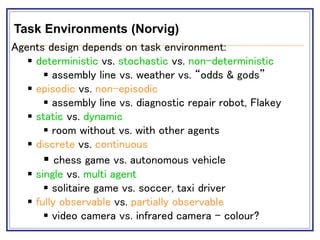

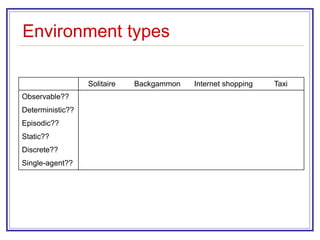

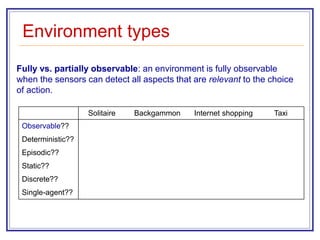

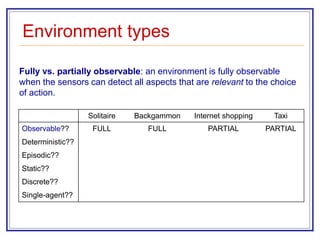

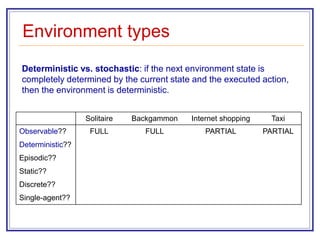

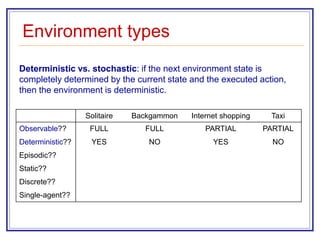

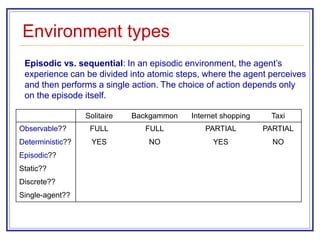

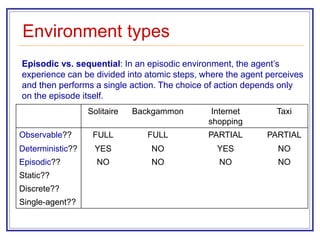

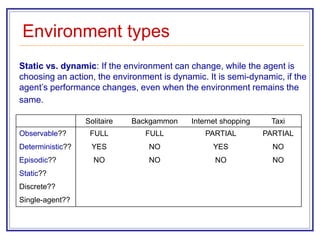

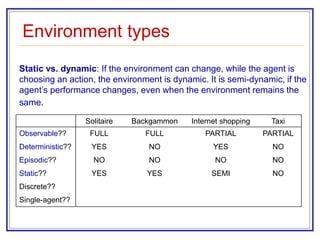

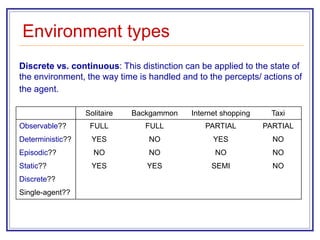

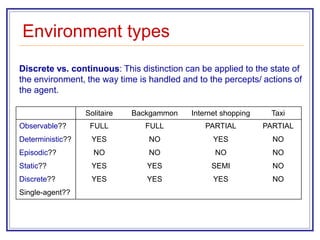

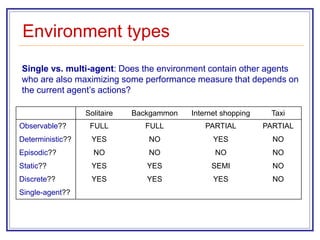

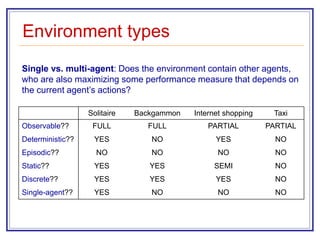

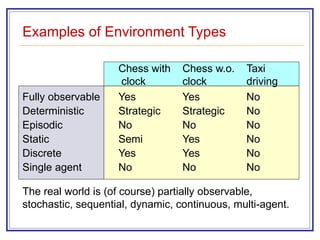

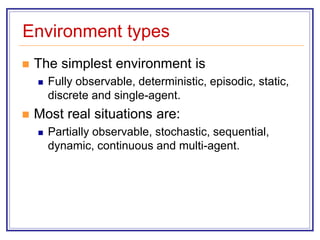

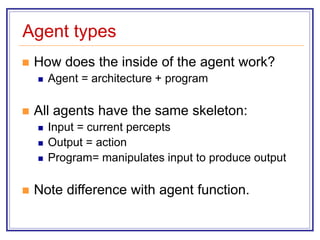

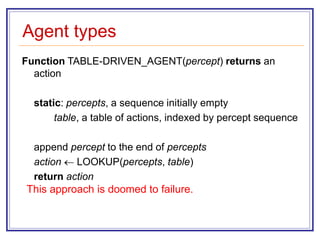

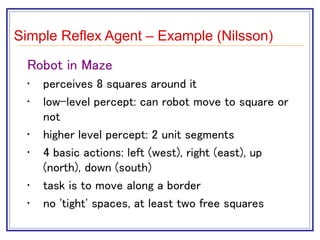

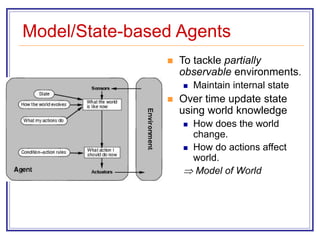

The document discusses different types of intelligent agents and their environments. An agent is defined as anything that can perceive its environment and act upon it. The key aspects of an agent's task environment are its performance measure, possible environment states, available actions, and sensors. Environments are classified by properties such as observability (fully vs partially observable), determinism, episodic vs sequential, static vs dynamic, discrete vs continuous, and single-agent vs multi-agent. The document also outlines several designs for the agent program, including simple reflex agents, model-based agents, goal-based agents, and utility-based agents.

![The Vacuum-Cleaner Mini-World

Environment: squares A and B

Percepts: location and status, e.g., [A, Dirty]

Actions: left, right, suck, and no-op](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-5-320.jpg)
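The mini-world on this slide is small enough to sketch directly. The following is a minimal illustration, not the deck's own code: the class name `VacuumWorld`, the `"NoOp"` spelling, the all-dirty starting state, and the assumption that square A lies to the left of square B (so moving left from A has no effect) are all choices made for this example.

```python
from dataclasses import dataclass, field

ACTIONS = ("Left", "Right", "Suck", "NoOp")


@dataclass
class VacuumWorld:
    """Two-square world from the slide; the class itself is hypothetical."""
    location: str = "A"
    status: dict = field(default_factory=lambda: {"A": "Dirty", "B": "Dirty"})

    def percept(self):
        # The agent senses only its own square: [location, status].
        return (self.location, self.status[self.location])

    def step(self, action):
        if action not in ACTIONS:
            raise ValueError(f"unknown action: {action}")
        if action == "Suck":
            self.status[self.location] = "Clean"
        elif action == "Left":
            self.location = "A"   # assumed layout: A is the left square
        elif action == "Right":
            self.location = "B"
        # "NoOp" leaves the world unchanged.
        return self.percept()


world = VacuumWorld()
print(world.percept())      # ('A', 'Dirty')
print(world.step("Suck"))   # ('A', 'Clean')
print(world.step("Right"))  # ('B', 'Dirty')
```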

![The Vacuum-Cleaner Mini-World

World State → Action

[A, Clean] → Right

[A, Dirty] → Suck

[B, Clean] → Left

[B, Dirty] → Suck

[A, Dirty], [A, Clean] → Right

[A, Clean], [B, Dirty] → Suck

[A, Clean], [B, Clean] → No-op

...](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-6-320.jpg)

![Agent Function

The agent function maps from percept histories to actions:

$f : P^{*} \to A$

An agent is completely specified by the agent function mapping percept sequences to actions.

The agent program runs on the physical architecture to produce $f$.

agent = architecture + program](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-7-320.jpg)
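The distinction between the agent function (defined over whole percept histories) and the agent program (called once per percept) can be sketched as below. This is an illustrative sketch only: `make_agent_program` and `example_f` are hypothetical names, and the wrapper simply stores the history so that a per-percept program reproduces an agent function over histories.

```python
from typing import Callable, List, Tuple

Percept = Tuple[str, str]                           # (location, status)
Action = str
AgentFunction = Callable[[List[Percept]], Action]   # f: P* -> A


def make_agent_program(f: AgentFunction) -> Callable[[Percept], Action]:
    """Wrap an agent function over histories as a per-percept program."""
    history: List[Percept] = []

    def program(percept: Percept) -> Action:
        history.append(percept)
        # Pass a copy so f cannot mutate the stored history.
        return f(list(history))

    return program


def example_f(history: List[Percept]) -> Action:
    # Hypothetical agent function: it happens to use only the latest percept.
    location, status = history[-1]
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


program = make_agent_program(example_f)
print(program(("A", "Dirty")))  # Suck
print(program(("A", "Clean")))  # Right
```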

![The Vacuum-Cleaner Mini-World

function REFLEX-VACUUM-AGENT([location, status]) returns an action

if status == Dirty then return Suck

else if location == A then return Right

else if location == B then return Left

Does not work this way. Need full state space (table) or memory.](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-8-320.jpg)
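For reference, the pseudocode on this slide translates almost line for line into Python. The function name and the tuple representation of the percept are choices made for this sketch; the final `raise` is an addition for inputs the slide does not cover.

```python
def reflex_vacuum_agent(percept):
    """Choose an action from the current percept only (no memory)."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    if location == "A":
        return "Right"
    if location == "B":
        return "Left"
    # Not on the slide: guard against locations other than A and B.
    raise ValueError(f"unknown location: {location}")


print(reflex_vacuum_agent(("A", "Dirty")))  # Suck
print(reflex_vacuum_agent(("A", "Clean")))  # Right
print(reflex_vacuum_agent(("B", "Clean")))  # Left
```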

![The Vacuum-Cleaner Mini-World

World State → Action

[A, Clean] → Right

[A, Dirty] → Suck

[B, Clean] → Left

[B, Dirty] → Suck

[A, Dirty], [A, Clean] → Right

[A, Clean], [B, Dirty] → Suck

[B, Dirty], [B, Clean] → Left

[B, Clean], [A, Dirty] → Suck

[A, Clean], [B, Clean] → No-op

[B, Clean], [A, Clean] → No-op](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-9-320.jpg)
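A table like the one on this slide can drive an agent directly by looking up the full percept sequence seen so far. The sketch below is one possible encoding, not the deck's code: `TABLE`, `make_table_driven_agent`, and the `"NoOp"` fallback for sequences missing from the table are assumptions made for illustration.

```python
# Percept sequences (tuples of percepts) mapped to actions, as on the slide.
TABLE = {
    (("A", "Clean"),): "Right",
    (("A", "Dirty"),): "Suck",
    (("B", "Clean"),): "Left",
    (("B", "Dirty"),): "Suck",
    (("A", "Dirty"), ("A", "Clean")): "Right",
    (("A", "Clean"), ("B", "Dirty")): "Suck",
    (("B", "Dirty"), ("B", "Clean")): "Left",
    (("B", "Clean"), ("A", "Dirty")): "Suck",
    (("A", "Clean"), ("B", "Clean")): "NoOp",
    (("B", "Clean"), ("A", "Clean")): "NoOp",
}


def make_table_driven_agent(table, default="NoOp"):
    """Return a program that looks up the whole percept history."""
    percepts = []

    def program(percept):
        percepts.append(percept)
        # Sequences absent from the table fall back to the assumed default.
        return table.get(tuple(percepts), default)

    return program


agent = make_table_driven_agent(TABLE)
print(agent(("A", "Dirty")))  # Suck
print(agent(("A", "Clean")))  # Right
print(agent(("B", "Dirty")))  # NoOp (three-percept sequence is not in the table)
```

The last call shows the practical limit of this design: the table must hold an entry for every percept sequence the agent can encounter, so it grows quickly with the length of the history.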

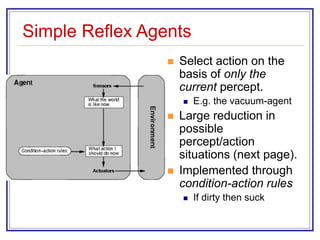

![Simple Reflex Agents

function SIMPLE-REFLEX-AGENT(percept) returns an action

static: rules, a set of condition-action rules

state ← INTERPRET-INPUT(percept)

rule ← RULE-MATCH(state, rules)

action ← RULE-ACTION[rule]

return action

Will only work if the environment is fully observable.

Otherwise infinite loops may occur.](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-47-320.jpg)
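The generic pseudocode above can be made concrete with a small rule list. This is a sketch under assumptions: the rule representation (condition function paired with an action), the helper names, the vacuum-world rules used as sample data, and the `"NoOp"` fallback when no rule matches are all illustrative choices rather than part of the slide.

```python
def interpret_input(percept):
    """Turn a raw percept into a state description (here, a dict)."""
    location, status = percept
    return {"location": location, "status": status}


# Condition-action rules, checked in order; the first match wins.
RULES = [
    (lambda s: s["status"] == "Dirty", "Suck"),
    (lambda s: s["location"] == "A", "Right"),
    (lambda s: s["location"] == "B", "Left"),
]


def rule_match(state, rules):
    """Return the first rule whose condition holds, or None."""
    for condition, action in rules:
        if condition(state):
            return (condition, action)
    return None


def simple_reflex_agent(percept, rules=RULES):
    state = interpret_input(percept)
    rule = rule_match(state, rules)
    # Assumed fallback: do nothing when no rule applies.
    return rule[1] if rule is not None else "NoOp"


print(simple_reflex_agent(("A", "Dirty")))  # Suck
print(simple_reflex_agent(("B", "Clean")))  # Left
```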

![The Vacuum-Cleaner Mini-World

function REFLEX-VACUUM-AGENT([location, status]) returns an action

if status == Dirty then return Suck

else if location == A then return Right

else if location == B then return Left

Does not work this way. Need full state space (table) or memory.](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-48-320.jpg)
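One plausible reading of the slide's closing note is that a memoryless agent cannot tell when both squares are already clean, so it keeps moving back and forth. The short simulation below illustrates that behaviour; the starting state (agent in A, both squares dirty), the layout (A left of B), and the helper name `run` are assumptions made for this sketch.

```python
def reflex_vacuum_agent(percept):
    location, status = percept
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


def run(steps):
    """Simulate the two-square world and record the agent's actions."""
    location = "A"
    status = {"A": "Dirty", "B": "Dirty"}
    actions = []
    for _ in range(steps):
        action = reflex_vacuum_agent((location, status[location]))
        actions.append(action)
        if action == "Suck":
            status[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
    return actions


print(run(6))  # ['Suck', 'Right', 'Suck', 'Left', 'Right', 'Left']
```

After the fourth step both squares are clean, yet the agent continues to alternate between `Right` and `Left` because its current percept alone never tells it that the other square is clean.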

![Model/State-based Agents

function REFLEX-AGENT-WITH-STATE(percept) returns an action

static: rules, a set of condition-action rules

state, a description of the current world state

(action, the most recent action)

state ← UPDATE-STATE(state, (action,) percept)

rule ← RULE-MATCH(state, rules)

action ← RULE-ACTION[rule]

return action](https://image.slidesharecdn.com/agents-1-230914165748-3becbcfe/85/agents-in-ai-ppt-50-320.jpg)
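Applied to the vacuum world, keeping internal state lets the agent stop once it believes both squares are clean. The following is a minimal sketch, not the deck's implementation: the names are hypothetical, the state is updated from percepts only (the optional most-recent-action argument is omitted), and the environment is assumed static, so a square observed clean is assumed to stay clean.

```python
def update_state(state, percept):
    """Record the latest observed status of the current square."""
    location, status = percept
    new_state = dict(state)
    new_state[location] = status
    return new_state


def make_reflex_agent_with_state():
    # None means the square's status has not been observed yet.
    state = {"A": None, "B": None}

    def program(percept):
        nonlocal state
        state = update_state(state, percept)
        location, status = percept
        if status == "Dirty":
            return "Suck"
        if state["A"] == "Clean" and state["B"] == "Clean":
            return "NoOp"   # the model says nothing is left to do
        return "Right" if location == "A" else "Left"

    return program


agent = make_reflex_agent_with_state()
for percept in [("A", "Dirty"), ("A", "Clean"), ("B", "Dirty"), ("B", "Clean")]:
    print(percept, "->", agent(percept))
# The last line printed is: ('B', 'Clean') -> NoOp
```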