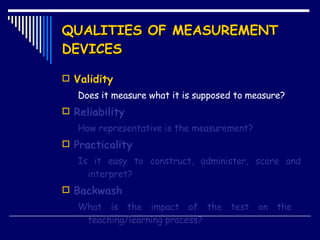

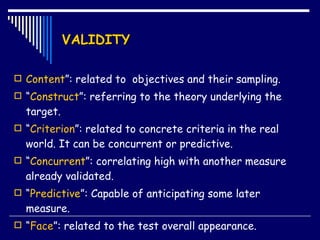

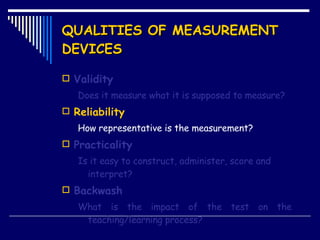

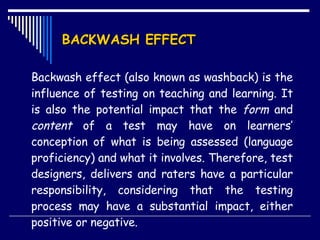

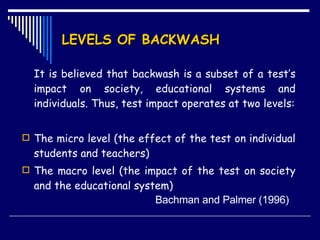

The document discusses four key qualities of measurement devices: validity, reliability, practicality, and backwash effect. It defines each quality and provides examples. Validity is the extent to which a test measures what it is intended to measure; the types covered are content, construct, and criterion-related validity, the last of which includes concurrent and predictive validity. Reliability is the consistency of measurements; the types covered are equivalency, stability, internal consistency, and inter-rater reliability. Practicality means a test is easy to construct, administer, score, and interpret. Backwash effect is the influence a test has on teaching and learning.
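As a rough illustration of how two of the reliability types above are often quantified, the sketch below computes a Pearson correlation between two administrations of the same test (a common index of stability) and Cronbach's alpha (a common index of internal consistency). The document itself does not name these statistics, so the choice of statistics, the function names `pearson_r` and `cronbach_alpha`, and the sample scores are all assumptions made for illustration.

```python
from statistics import mean, variance


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of rows (one row of item scores per test taker)."""
    k = len(item_scores[0])                      # number of items
    columns = list(zip(*item_scores))            # scores grouped by item
    item_var = sum(variance(col) for col in columns)
    total_var = variance([sum(row) for row in item_scores])
    return (k / (k - 1)) * (1 - item_var / total_var)


# Hypothetical data: five test takers, same test given twice (stability).
first_sitting = [12, 15, 9, 18, 14]
second_sitting = [13, 14, 10, 17, 15]
stability = pearson_r(first_sitting, second_sitting)

# Hypothetical data: five test takers, four items scored 0-5 (internal consistency).
items = [
    [3, 4, 3, 4],
    [5, 5, 4, 5],
    [2, 2, 3, 2],
    [4, 5, 5, 4],
    [1, 2, 1, 2],
]
alpha = cronbach_alpha(items)

print(f"test-retest r = {stability:.2f}, Cronbach's alpha = {alpha:.2f}")
```

Values of either statistic closer to 1 indicate more consistent measurement. Inter-rater and equivalency reliability can be approached the same way, by correlating two raters' scores or two parallel forms.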