The internship report discusses techniques for removing small stopping sets in low-density parity-check (LDPC) codes, which are crucial for error correction in communication systems. It presents methods such as adding redundant rows to the parity-check matrix and using a greedy heuristic approach to improve decoder performance. The techniques are compared in terms of their effectiveness in eliminating stopping sets, with results indicating that the greedy method requires fewer redundant rows than the linear combination method.

![3

LDPC codes and their importance:

Low-density parity-check (LDPC) codes are linear bock codes used for forward error correction.

The name comes from code’s characteristics of having small amount of 1’s as compare to 0’s in

the matrix. LDPC codes provide the performance which is near to capacity of most communication

channels.

LDPC codes were invented by Gallager in 1960’s during his PhD studies. But these codes didn’t

get much attention due to the unavailability of high performance encoder and decoder until about

15 years ago [1].

LDPC codes can be represented by two methods, one is by linear block codes via matrices of zeros

and ones and other is by graphs (Tanner/bipartite graph) which consist of check nodes and variable

nodes (or bit nodes). An edge joins the variable node to check node if that bit is present in

corresponding parity check equation. The number of bits in the Tanner graph is equal to number

of ones in parity check matrix [1]. An example of LDPC code is given in figure 1.1 below (taken

from the paper of [2]).

A LDPC code is called regular if number of 1’s in each column and rows is constant, if it’s not

constant then it is called irregular LDPC code.

To construct LDPC codes, there are many different algorithms available. There is one which

Gallager introduced, MacKay also suggested one algorithm which randomly generate sparse parity

check matrices. I have also used a software based on Unix/Linux designed by Canadian professor

Radford M. Neal [3], which randomly generates the LDPC codes given matrix size and number of

1’s in each row and column.

LDPC codes are used in a variety of applications in the communication and data storage systems,

the reason being they enable the effective decoding over noisy channel and provide better error

correction performance.

Figure 1.1: Example of LDPC code [2].](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-3-320.jpg)

![4

Stopping sets:

Stopping set is commonly defined by the help of the Tanner graph. Here are its few definitions:

“A stopping set S is a subset of V, the set of variable nodes, such that all neighbors of S are

connected to S at least twice” [4] or in other words, “a stopping set is the set of variable nodes

whose induced graph contains no singly connected check nodes” [5].

The iterative decoding will be unsuccessful if the bits corresponding to a stopping set are erased.

The stopping set of small size has even worse effect on the performance of the decoder since it is

more likely that all bits in the stopping set are erased. Therefore, it is important to remove small

stopping sets. The stopping sets of small size are more dangerous because there is higher

probability that all bits in the set are erased, this is because the message passing decoder used with

LDPC codes cannot recover the transmitted information in a unique way.

Addition of redundant rows:

There are some techniques by which these small stopping sets can be removed. One of them is by

taking the linear combination of rows of the parity-check matrix H and adding them together to

generate redundant rows. These redundant rows, then can be appended to the original matrix to

remove the stopping sets of small size. In terms of Tanner graph, redundant rows enhance the code

graph by increasing the number of check nodes. Therefore, the redundant rows can eliminate the

stopping set S if that check nodes is connected to S exactly once. Since these redundant rows are

from linear combination of the actual code so it doesn’t change the code and hence there is no rate

penalty [5].

The other approaches include finding low weight individual redundant rows to eliminate big

number of small-sized stopping sets. One of them is greedy heuristics technique. By this technique,

minimum number of redundant rows are found by continuous experiments that can remove large

amount of stopping sets, later on these rows can be combined with PCM to eliminate all stopping

sets of small size.

There are many other ways to generate redundant rows and each methods have its pros and cons,

usually low weight redundant rows are considered to be good because of their ability to remove

many potential stopping sets.

The new rows can be either redundant or linearly independent; addition of redundant rows is good

in the sense that it doesn’t modify the code but it may require many redundant rows, conversely

addition of linearly independent rows changes the code a bit having small loss in code rate but it

generates the code with better performance of the decoder with addition of just few rows.](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-4-320.jpg)

![5

Previous work:

There is lot of similar work that was done on this subject in past. One of the big efforts is made in

[6]. The authors explained that performance of linear code is effected by smallest stopping sets

and then they introduced many new terminologies like stopping distance, stopping redundancy.

They also suggested that adding redundant row to the parity check matrix can be very useful to

remove the smallest stopping sets.

In [5], the authors introduced a scheme of finding low weight redundant rows to be added to the

parity-check matrix in order to remove the smallest stopping sets. They first described the GARS

(Genie-aided random search) algorithms and its weakness of having high weight redundant rows

which increases the density of PCM and then they presented their method called LWRRS (Low

weight redundant rows search). The idea is to first generate the list of code words from parity-

check matrix H by enumeration procedure and then create generator matrix G from those code

words and then find the parity-checks. After that, these parity checks can be added to the H to

remove small stopping set of small size.

In [7], the authors have proposed new scheme which they claimed by their results that it provides

better efficiency and improved performance for Parity-check matrix extension algorithms. They

suggested that adding linearly independent rows to PCM can change the code a little but it forms

the better code by adding fewer new redundant rows. The basic idea was to identify the positions

of those columns numbers which are present in most of the stopping sets and then putting 1 in

those column indexes in the new row and the elements in other columns are set to zero. This

technique eliminates many potential stopping set but it has slight disadvantage that there are some

stopping set which are reactived by these new rows during elimination process.

Our techniques:

I used two techniques to remove smallest stopping sets in the parity-check matrix. In first

technique, the basic idea is to take linear combination of the rows of the original matrix to generate

the redundant rows and then appending those redundant rows to the PCM to eliminate small-sized

stopping sets. The redundant rows can be generated by multiplying either by 0 or 1 (based on

random decision of matlab code) with every row of the original matrix and then adding the

resultant row to previously generated rows and their sum produces new row. I generated 200

redundant rows for my experiment. The matlab code for generating redundant rows is given in

appendix. The matlab code for finding smallest stopping sets consists of nested for loops. The code

checks for every column of the matrix to find the smallest stopping set. The LDPC code used in

my experiment is (10, 20) regular code with matrix size of 30x60. This matrix was generated by

the help of Professor Radford M. Neal’s software [8]. This matrix is given in appendix. The

minimum stopping set in this code is of size 5. An example of matlab code for removal of stopping

set of size 5, 6 and 7 is also given in the appendix as well as code for generation of redundant rows

by linear combination. The matlab code for removal of stopping set follows a pattern and can be

extended to calculate stopping set of any size.

I ran many iteration of the matlab function to check that exactly how many redundant rows are

required to remove stopping set of 5, 6 and 7. The result is mentioned in section below.](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-5-320.jpg)

![7

Future work:

This work can also be extended by trying some other potential techniques of generating small

number of low weight redundant rows to eliminate all small-sized stopping sets in parity-check

matrix to optimize the overall efficiency of decoder.

The work can be extended to remove trapping set and pseudo codewords as the basic idea is the

same behind all these concepts.

References:

[1] Bernhard M.J. Leiner “LDPC Codes – a brief Tutorial”

[2] Tinoosh Mohsenin and Bevan M. Baas “A Split-Decoding Message Passing Algorithm for

Low Density Parity Check Decoders”

[3] Prof. Radford M. Neal http://www.cs.utoronto.ca/~radford/

[4]Changyan Di, David Proietti, I. Emre Telatar, Thomas J. Richardson, and Rüdiger L. Urbanke

“Finite-Length Analysis of Low-Density Parity-Check Codes on the Binary Erasure Channel”

[5] Omer Fainxilber, Eran Sharon and Simon Litsyn “Decreasing Error Floor in LDPC codes by

Parity-Check Matrix Extensions”

[6] Moshe Schwartz, Alexander vardy “On the stopping distance and the stopping redundancy of

codes”

[7] Saejoon Kim, Hyuncheol Park “Improved stopping set Elimination by Parity-Check matrix

extension of LDPC Codes”

[8] Prof. Radford M. Neal’s software for generating LDPC codes

ftp://ftp.cs.utoronto.ca/pub/radford/LDPC-2001-05-04/index.html

Appendix:

LDPC matrix of (10, 20) regular code of size 30x60 used in experiment.

0 1 0 0 1 0 1 1 0 1 1 0 1 1 0 0 0 1 0 0 1 0 0 0 0 0 0 0 1 1 0 0 0 0 1 0 0 0 0 0 0 1 0 1 0 0 0 0 0 0 1 1 0 1 1 0 1 0 0 0

1 0 0 0 1 0 1 0 0 1 0 0 0 0 1 0 0 0 1 0 1 0 0 0 0 0 0 1 0 0 1 1 1 0 0 0 0 1 1 0 1 1 1 1 0 0 1 1 0 0 0 0 0 0 1 0 0 0 0 0

0 1 1 1 1 1 0 1 1 0 0 0 0 0 0 1 0 1 1 0 0 0 1 0 0 0 1 0 0 0 0 0 0 0 0 0 0 1 1 0 1 1 1 0 0 0 1 0 0 0 0 0 1 0 1 0 0 0 0 0

1 0 1 0 0 0 1 0 0 0 0 0 1 0 0 0 0 1 1 1 1 0 0 0 1 0 0 0 0 0 1 0 1 0 0 1 1 0 1 0 0 1 1 1 0 0 1 1 0 0 0 0 0 0 1 0 0 0 0 0

0 0 0 1 0 0 0 0 0 0 0 0 0 0 1 1 0 0 0 0 0 0 0 1 1 1 1 0 0 0 0 1 1 0 1 1 0 0 0 0 1 0 1 1 0 0 0 1 0 0 0 1 1 1 0 1 0 0 1 0

1 0 0 0 0 1 1 0 0 1 0 1 0 0 1 0 1 1 0 0 0 0 0 1 0 1 0 0 0 0 0 0 1 0 1 0 0 0 0 0 0 1 0 0 1 1 0 0 0 0 1 1 0 0 0 1 1 0 1 0

0 0 0 1 1 1 1 1 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 1 0 1 0 1 1 0 0 0 1 0 0 1 1 0 1 0 0 1 0 0 1 0 0 1 0 1 1 0 0 0 0 0 1 0 0

0 0 0 1 0 0 0 0 1 1 0 0 1 0 1 0 0 1 1 1 0 1 0 1 0 0 0 0 0 0 1 1 0 1 0 1 0 1 1 0 1 0 1 0 0 0 1 0 1 0 0 0 0 0 0 0 0 0 0 0

1 0 0 0 1 0 1 1 0 0 0 1 1 1 0 0 0 0 0 0 1 0 1 0 0 1 0 0 0 1 0 0 0 0 0 0 1 1 0 1 0 1 1 0 0 0 0 1 0 0 0 1 0 0 0 0 0 0 0 1](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-7-320.jpg)

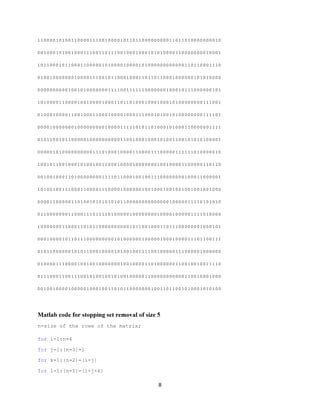

![9

for m=1:n-(i+j+k+l)

if (Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)>=2)

|(Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)==0)

W=['stopping set of size five is in the column ' num2str(i), ' ' num2str(i+j)

' ' num2str(i+j+k) ' ' num2str(i+j+k+l) ' and ' num2str(i+j+k+l+m) ];

disp(W)

end

end

end

end

end

end

Matlab code for stopping set removal of size 6

n=size of the rows of the matrix;

for i=1:n-5

for j=1:(n-4)-i

for k=1:(n-3)-(i+j)

for l=1:(n-2)-(i+j+k)

for m=1:(n-1)-(i+j+k+l)

for o=1:n-(i+j+k+l+m)

if Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)+Z(:,i+j+k+l+m+o)>=2

| Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)+Z(:,i+j+k+l+m+o)==0

W=['stopping set of size six is in the column ' num2str(i), ' ' num2str(i+j)

' ' num2str(i+j+k) ' ' num2str(i+j+k+l) ' ' num2str(i+j+k+l+m) ' and '

num2str(i+j+k+l+m+o)];

disp(W)

end

end

end

end

end

end](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-9-320.jpg)

![10

end

Matlab code for stopping set removal of size 7

n=size of the rows of the matrix;

for i=1:n-6

for j=1:(n-5)-i

for k=1:(n-4)-(i+j)

for l=1:(n-3)-(i+j+k)

for m=1:(n-2)-(i+j+k+l)

for o=1:(n-1)-(i+j+k+l+m)

for p=1:(n-1)-(i+j+k+l+m+o)

if Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)+Z(:,i+j+k+l+m+o)

+Z(:,i+j+k+l+m+o+p)>=2|Z(:,i)+Z(:,i+j)+Z(:,i+j+k)+Z(:,i+j+k+l)+Z(:,i+j+k+l+m)

+Z(:,i+j+k+l+m+o) +Z(:,i+j+k+l+m+o+p)==0

W=['stopping set of size seven is in the column ' num2str(i), ' '

num2str(i+j) ' ' num2str(i+j+k) ' ' num2str(i+j+k+l) ' ' num2str(i+j+k+l+m) '

' num2str(i+j+k+l+m+o) ' and ' num2str(i+j+k+l+m+o+p)];

disp(W)

end

end

end

end

end

end

end

end

Code for generating 200 redundant rows by linear combination

s=[0];

for i=1:200

for j=1:30;

s=mod(s+randint(1,1)*H(j,:),2);](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-10-320.jpg)

![11

end

Y(i,:)=s;

s=[0];

end

Code for greedy heuristic technique

Z=[H;Y(80,:)]; /* Adding every individual row from matrix Y to original matrix

and check how many stopping set it removes. Note: Y is the matrix of already

generated redundant rows */

X=[(Y(2:2,:)); (Y(5:5,:)); (Y(7:7,:)); (Y(11:11,:)); (Y(15:15,:));

(Y(17:17,:))]; /* After knowing good rows from Y, they can be combined to form a

matrix X */

Z=[H;X]; /* Matrix X is then combined to original parity-check matrix H to from

matrix Z which can remove all stopping sets */](https://image.slidesharecdn.com/internshipreport-160329144112/85/techniques-for-removing-smallest-Stopping-sets-in-LDPC-codes-11-320.jpg)